Artificial intelligence has moved from experimental laboratories into the core of everyday digital life. Today AI helps write reports, analyse financial markets, assist medical research, and automate countless operational tasks across industries. Its ability to process enormous volumes of data and generate insights within seconds has transformed how organisations operate. Yet as impressive as these capabilities are, they also reveal a growing challenge: the need for dependable and verifiable AI outputs.

One of the most widely discussed limitations of modern AI systems is that they often sound confident even when they are wrong. AI models can generate convincing responses that contain factual inaccuracies, incomplete reasoning, or subtle biases. The fabricated kind of error is often referred to as a hallucination, where the system produces information that appears logical but lacks real grounding. While such mistakes may be harmless in casual use, they become far more serious when AI is involved in critical environments such as financial analysis, policy recommendations, scientific research, or healthcare support.

This growing reliance on AI has created an important shift in how people evaluate artificial intelligence systems. The conversation is no longer focused only on how powerful AI models are, but also on how much they can be trusted. If organisations are going to rely on AI for important decisions, there must be mechanisms that confirm whether the information generated by these systems is reliable.

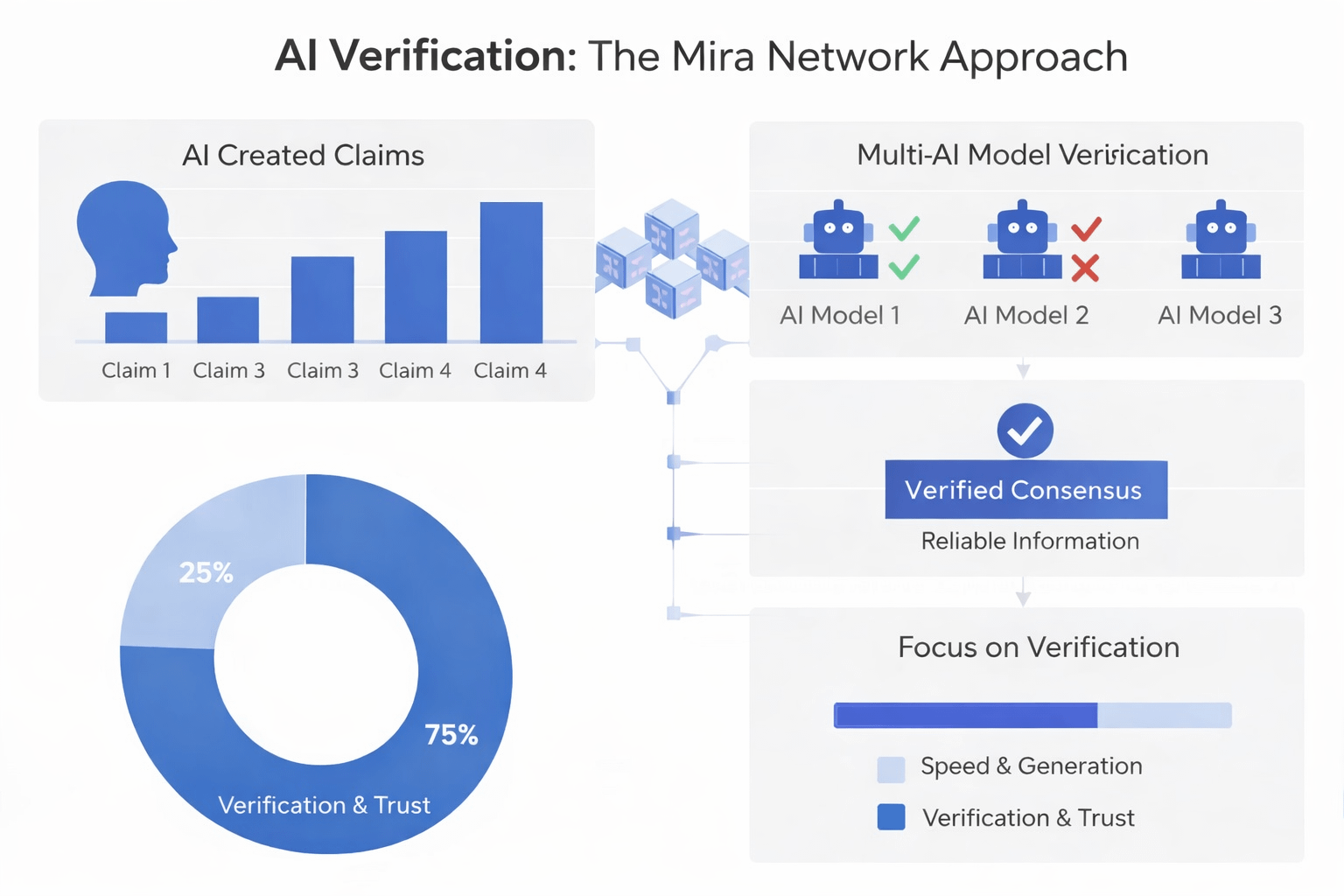

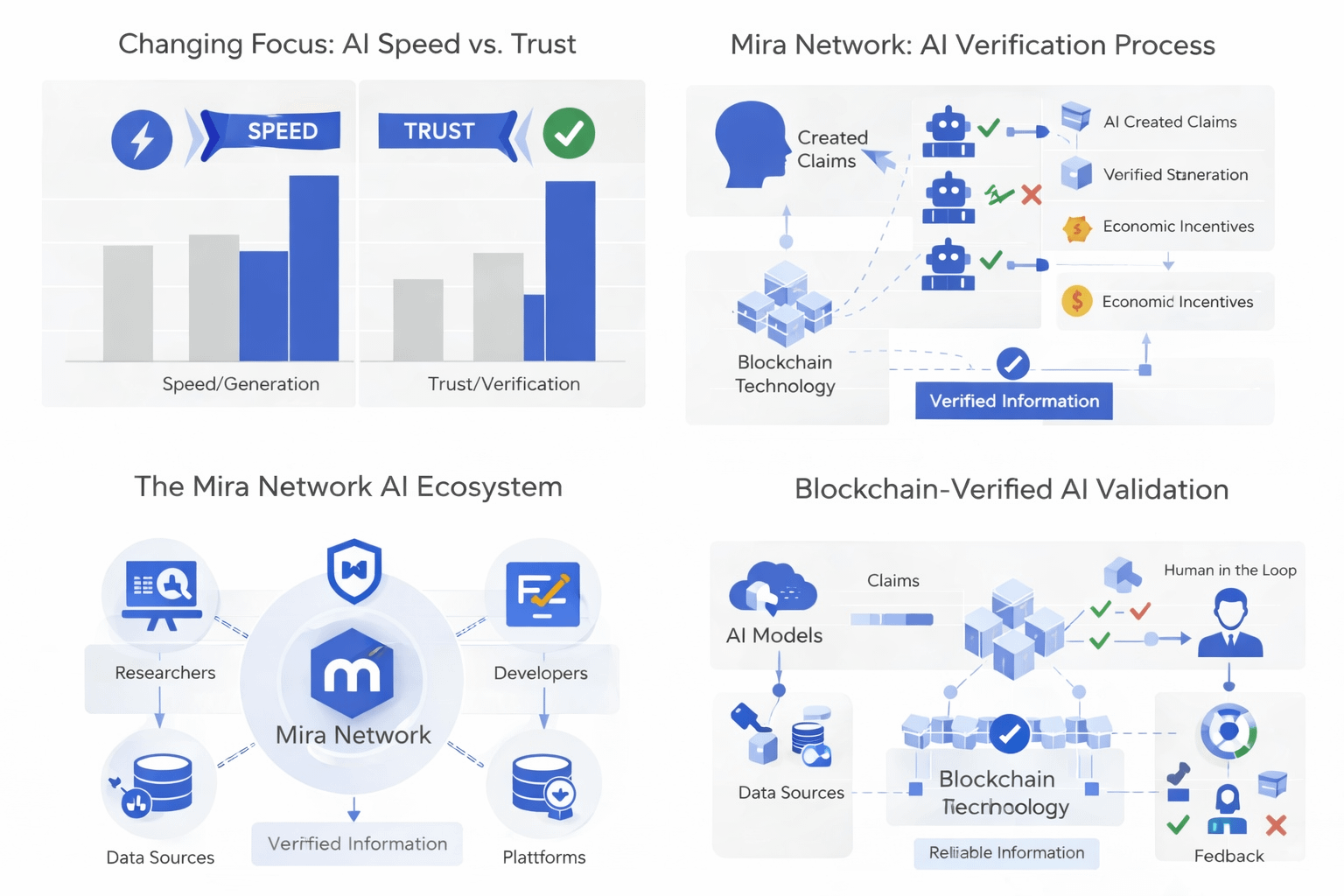

The idea behind Mira Network emerges from this exact challenge. Rather than treating AI outputs as final answers, the network treats them as claims that require verification. In this approach, information generated by an AI model is not immediately accepted as truth. Instead, it becomes a statement that must be evaluated by other independent systems.

To achieve this, the network introduces a structure where multiple AI models participate in the verification process. When a piece of information is generated, it can be divided into smaller claims or statements. These statements are then analysed by different AI models within the network. Each model examines the claim and provides its evaluation. Through this process, the network builds a form of consensus that determines whether the information is trustworthy.
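The flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira Network's actual implementation: the sentence-based splitter, the `Verifier` type, the two-thirds threshold, and the toy verifiers are all hypothetical stand-ins for real, independent AI models.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim and returns "valid" or "invalid".
Verifier = Callable[[str], str]


def split_into_claims(text: str) -> List[str]:
    """Naive splitter (an assumption): treat each sentence as one claim."""
    return [part.strip() for part in text.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers vote "valid"."""
    votes = Counter(verifier(claim) for verifier in verifiers)
    return votes["valid"] / len(verifiers) >= threshold


def verify_output(text: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Split an AI output into claims and collect a verdict for each one."""
    return {claim: verify_claim(claim, verifiers, threshold) for claim in split_into_claims(text)}


# Toy verifiers standing in for independent models (illustrative only).
KNOWN_FACTS = {"Paris is the capital of France"}


def careful_model_a(claim: str) -> str:
    return "valid" if claim in KNOWN_FACTS else "invalid"


def careful_model_b(claim: str) -> str:
    return "valid" if claim in KNOWN_FACTS else "invalid"


def faulty_model(claim: str) -> str:
    return "valid"  # approves everything, simulating an unreliable evaluator


verdicts = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful_model_a, careful_model_b, faulty_model],
)
print(verdicts)
# {'Paris is the capital of France': True, 'The Moon is made of cheese': False}
```

In this toy run the faulty evaluator approves the false claim, but it is outvoted by the other two, so the claim is rejected.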

This multi-model evaluation makes the system more resilient than one that relies on a single AI model. When one model generates an incorrect or biased output, other models can challenge or reject it. The final outcome is not determined by a single source but by collective agreement across several independent evaluators. Provided those evaluators do not share the same blind spots, this reduces the risk that errors will pass through the system unnoticed.
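A rough calculation shows why agreement across evaluators can help. The sketch below assumes that each evaluator makes mistakes independently and with the same error rate, which real models that share training data may not satisfy. The function name and the numbers are illustrative, not measurements of any actual network.

```python
from math import comb


def majority_error_probability(n: int, p: float) -> float:
    """Probability that more than half of n independent evaluators are wrong,
    assuming each one errs with probability p (a simplifying assumption)."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


# One evaluator with a 10% error rate is wrong 10% of the time.
single = majority_error_probability(1, 0.1)

# With three such evaluators, a wrong majority needs at least two simultaneous errors.
triple = majority_error_probability(3, 0.1)

print(f"{single:.3f} {triple:.3f}")  # 0.100 0.028
```

Under these assumptions, moving from one evaluator to a majority of three cuts the chance of an undetected error from 10% to roughly 2.8%. If the evaluators' mistakes are correlated, the benefit shrinks.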

Another key element that strengthens this model is the use of blockchain infrastructure. The results of verification are recorded on a decentralised ledger, creating a transparent and traceable record of how decisions were reached. Instead of relying on hidden internal processes, the verification steps become part of a publicly auditable system. Anyone examining the results can understand how a particular claim was validated and which models participated in the evaluation process.
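To make the idea of a traceable record concrete, here is a minimal single-process sketch of a hash-chained log. It is a stand-in for a real decentralised ledger, with no networking and no consensus protocol, and the record fields (`claim`, `votes`, `prev_hash`) are hypothetical rather than taken from Mira Network.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: Dict) -> str:
    """Hash a record body deterministically (keys sorted before serialising)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(ledger: List[Dict], claim: str, votes: Dict[str, str]) -> Dict:
    """Append a verification result that is linked to the previous record's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    body = {"claim": claim, "votes": votes, "prev_hash": prev_hash}
    entry = {**body, "hash": record_hash(body)}
    ledger.append(entry)
    return entry


def is_intact(ledger: List[Dict]) -> bool:
    """Recompute every hash and link; any edit to an earlier record is detected."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        body = {key: entry[key] for key in ("claim", "votes", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != record_hash(body):
            return False
        prev_hash = entry["hash"]
    return True


ledger: List[Dict] = []
append_record(ledger, "Paris is the capital of France", {"model_a": "valid", "model_b": "valid"})
append_record(ledger, "The Moon is made of cheese", {"model_a": "invalid", "model_b": "invalid"})
print(is_intact(ledger))  # True

ledger[0]["votes"]["model_a"] = "invalid"  # tamper with an earlier record
print(is_intact(ledger))  # False
```

Each record stores which evaluators voted and how, and because every record includes the hash of the one before it, changing an old result breaks the chain in a way any reader can check.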

This transparency introduces an additional layer of accountability. Because verification results are permanently recorded, the system discourages dishonest behaviour and encourages accurate validation. Economic incentives can also be integrated into the process, rewarding participants who contribute reliable evaluations and penalising dishonest or careless verification.
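One simple way such incentives could work is a stake that grows when a verifier agrees with the final consensus and shrinks when it does not. The sketch below is an assumption for illustration only: the function name, the flat reward, and the 10% penalty are hypothetical and do not describe the network's real token mechanics.

```python
from typing import Dict


def settle_round(
    stakes: Dict[str, float],
    votes: Dict[str, str],
    consensus: str,
    reward: float = 1.0,       # hypothetical flat reward for matching consensus
    slash_rate: float = 0.1,   # hypothetical fraction of stake lost otherwise
) -> Dict[str, float]:
    """Return updated stakes after one verification round."""
    updated = dict(stakes)  # leave the caller's dictionary untouched
    for verifier, vote in votes.items():
        if vote == consensus:
            updated[verifier] += reward
        else:
            updated[verifier] -= updated[verifier] * slash_rate
    return updated


stakes = {"model_a": 100.0, "model_b": 100.0, "model_c": 100.0}
votes = {"model_a": "valid", "model_b": "valid", "model_c": "invalid"}

print(settle_round(stakes, votes, consensus="valid"))
# {'model_a': 101.0, 'model_b': 101.0, 'model_c': 90.0}
```

A scheme like this makes careless or dishonest voting costly over time, although a real design would also need to handle cases where the consensus itself is wrong.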

Beyond verification itself, the network also aims to support interoperability between different platforms. Once a piece of information has been verified, it can be reused across multiple applications and systems. Developers can build tools that rely on trusted data without needing to perform the same validation repeatedly. Over time, this could create a shared infrastructure where reliable AI outputs become a foundational resource for digital applications.
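Reuse of verified information can be pictured as a shared lookup keyed by a fingerprint of the claim. The class below is a minimal in-memory sketch under that assumption. `VerifiedClaimStore`, its normalisation rule, and the stand-in verification function are hypothetical and not part of any published Mira Network API.

```python
import hashlib
from typing import Callable, Dict


class VerifiedClaimStore:
    """Caches verification verdicts so that each distinct claim is checked once."""

    def __init__(self, verify: Callable[[str], bool]) -> None:
        self._verify = verify
        self._results: Dict[str, bool] = {}
        self.verification_runs = 0  # counts how often the expensive check ran

    @staticmethod
    def _key(claim: str) -> str:
        # Normalise case and whitespace so trivially different spellings share a key.
        normalised = " ".join(claim.lower().split())
        return hashlib.sha256(normalised.encode("utf-8")).hexdigest()

    def check(self, claim: str) -> bool:
        key = self._key(claim)
        if key not in self._results:
            self.verification_runs += 1
            self._results[key] = self._verify(claim)
        return self._results[key]


# Stand-in for a full multi-model verification round (illustrative only).
def expensive_verification(claim: str) -> bool:
    return "paris" in claim.lower()


store = VerifiedClaimStore(expensive_verification)
print(store.check("Paris is the capital of France"))    # True
print(store.check("  paris is the capital of FRANCE"))  # True, served from the cache
print(store.verification_runs)                          # 1
```

Two applications asking about the same claim trigger only one verification run, which is the kind of saving the shared infrastructure is meant to offer.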

Such an approach could significantly influence the future architecture of artificial intelligence. Instead of isolated AI models operating independently, the ecosystem could evolve toward networks of models that validate and refine each other’s outputs. In this environment, intelligence would not only come from individual systems but from the collaborative verification process that connects them.

This shift represents a broader change in how artificial intelligence may evolve over the coming years. Early stages of AI development focused on improving performance, accuracy, and computational power. The next stage may place equal importance on trust, verification, and transparency. As AI systems become more deeply embedded in economic and social structures, mechanisms that confirm the reliability of their outputs will become increasingly essential.

In this context, projects like Mira Network illustrate an emerging direction for the AI ecosystem. By combining distributed verification, blockchain-based transparency, and collaborative AI evaluation, the network explores how trust can be built into the architecture of artificial intelligence itself. If such systems continue to mature, they could help bridge the gap between powerful AI capabilities and the level of reliability required for real-world decision-making.

Ultimately, the long-term success of artificial intelligence will not depend solely on how intelligent these systems become, but on how much confidence people can place in the information they produce. Verification layers that ensure accuracy and accountability may therefore become one of the most important building blocks of the future AI infrastructure.
