One problem keeps coming up as I spend more time researching AI: AI sounds confident, but confidence does not equate to truth. Even though most of today's large language models are extremely powerful, they still rely on probability. They use patterns in training data to predict the next word. That usually works well, but it also explains why hallucinations occur.

I initially believed that better models would be the only way to solve the problem: larger datasets, improved training, and increased processing power. However, the more I studied initiatives like @Mira - Trust Layer of AI, the more I saw that intelligence might not be the true obstacle. It could be verification.

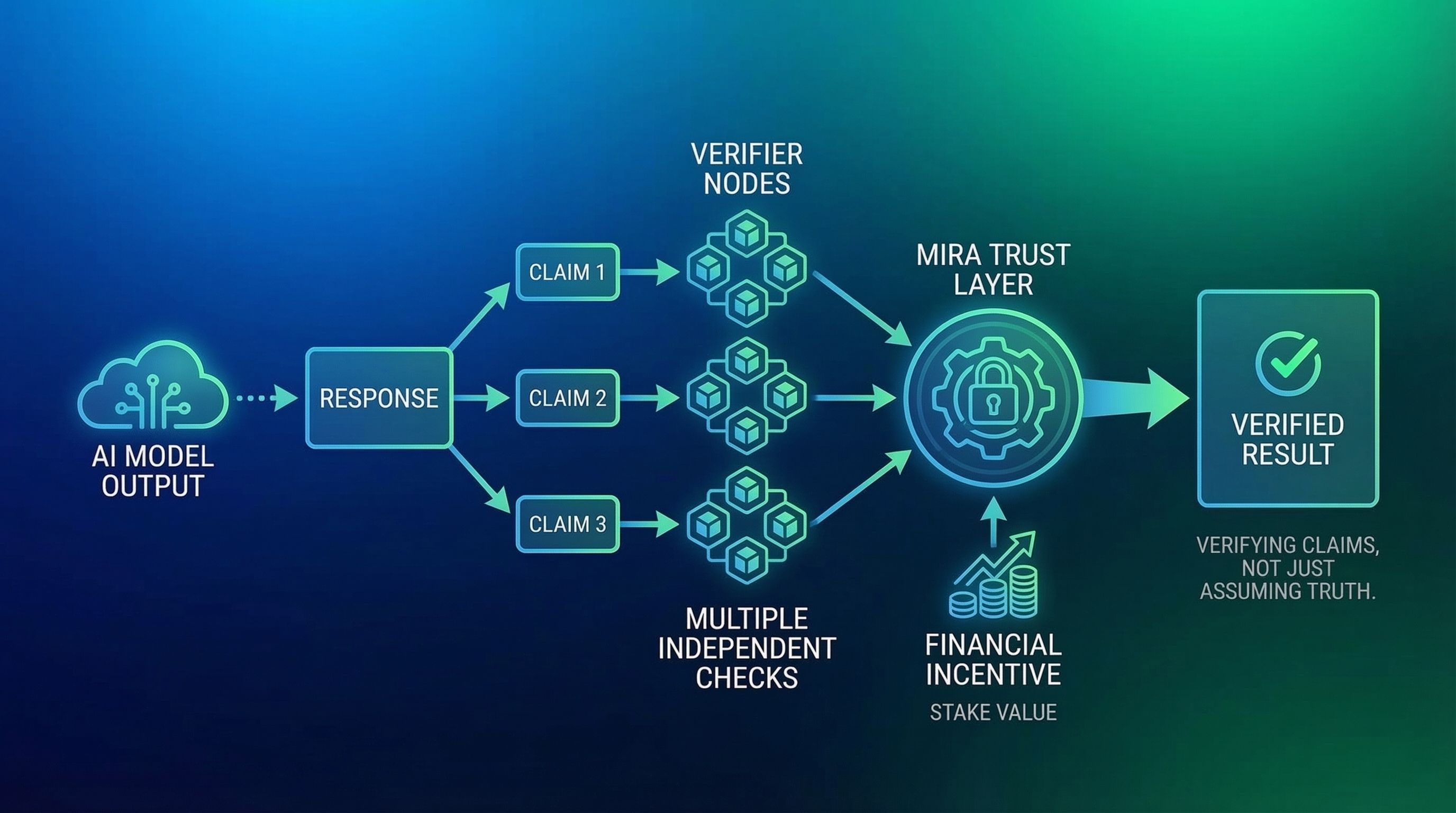

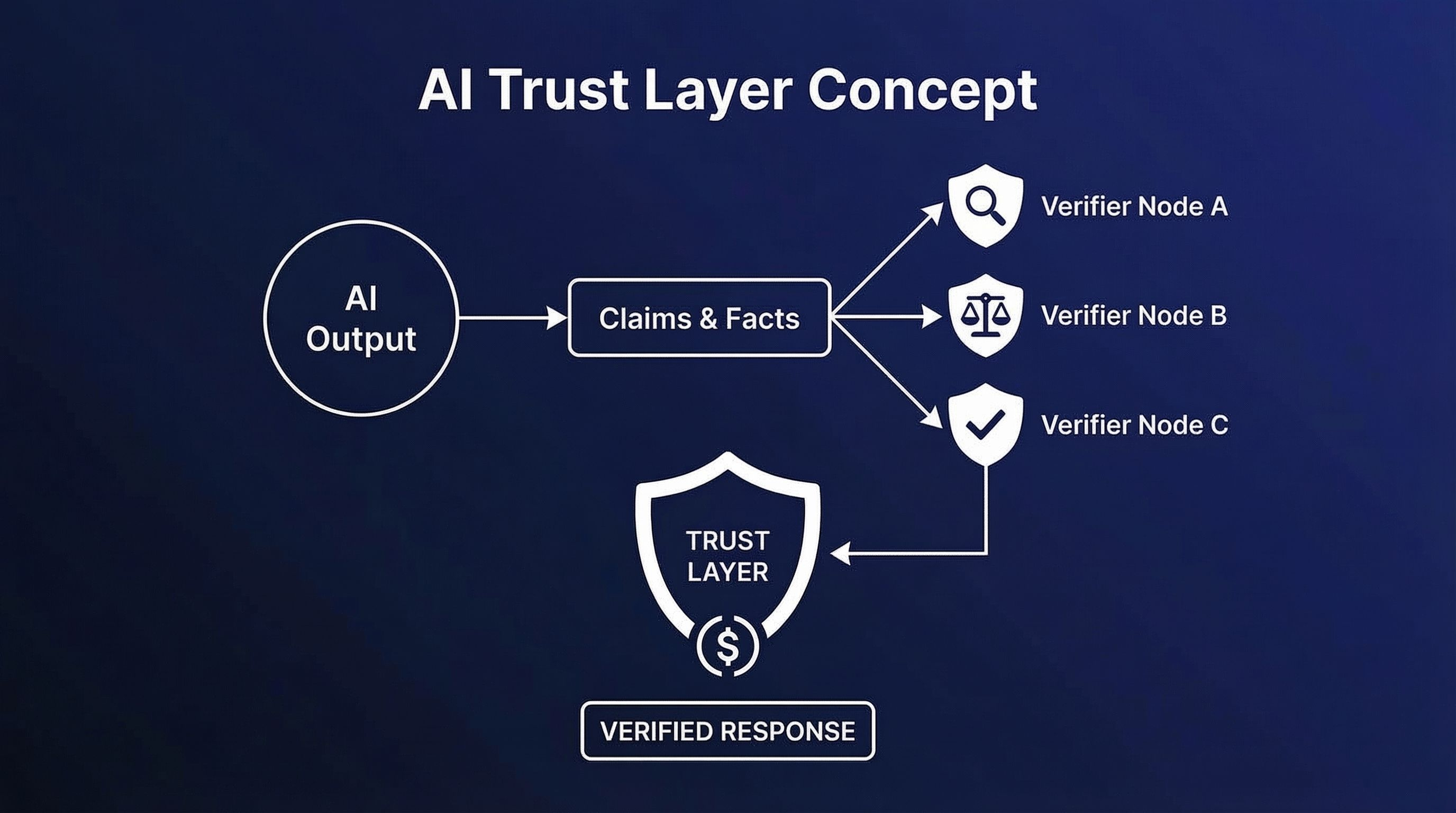

Instead of another model vying for prompts, what $MIRA is attempting to develop seems more like a trust layer for AI. The system treats each response as something that needs to be verified rather than assuming that AI outputs are accurate.

An AI response is first divided into smaller claims. Every claim is a distinct piece of information that can be independently confirmed. Several verifier nodes using various models then assess these claims. Instead of relying solely on one model, the network of nodes looks for agreement across several independent checks.
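
To make the flow concrete for myself, here is a minimal Python sketch of the idea, not Mira's actual implementation. Everything in it is an assumption for illustration: the function names, the naive one-sentence-per-claim split, the 2/3 agreement threshold, and the verifiers, which are plain functions standing in for independent models.

```python
import re
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim, returns True if it judges the claim valid.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive claim extraction (assumption): treat each sentence as one claim."""
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    return [p for p in parts if p]


def claim_is_accepted(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim when the share of approving verifiers reaches the threshold."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [bool(v(claim)) for v in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_response(response: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map every claim in the response to its accept/reject outcome."""
    return {
        claim: claim_is_accepted(claim, verifiers, threshold)
        for claim in split_into_claims(response)
    }


if __name__ == "__main__":
    # Toy verifiers standing in for independent models (illustrative only).
    toy_verifiers: List[Verifier] = [
        lambda c: "Paris" in c,
        lambda c: "Paris" in c,
        lambda c: True,  # a careless verifier that approves everything
    ]
    answer = "The capital of France is Paris. The capital of France is Lyon."
    for claim, accepted in verify_response(answer, toy_verifiers).items():
        print(accepted, "|", claim)
```

The point of the sketch is that a single careless verifier cannot push a bad claim through on its own, because acceptance depends on agreement across the whole set.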

The fact that this system also incorporates financial incentives is what I find intriguing. While inaccurate or malicious behaviour may result in penalties, validators who stake value in the network are rewarded for truthful verification. To put it another way, accuracy is economically enforced rather than merely encouraged.
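
Here is a rough Python sketch of how such an incentive round could work. It is my own toy model, not Mira's actual rules: the `Validator` class, the flat reward, the 10% slash, and the choice to treat the majority vote as the "truthful" answer are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    """Hypothetical validator with some value staked in the network."""
    name: str
    stake: float


def settle_round(
    validators: List[Validator],
    votes: Dict[str, bool],
    reward: float = 1.0,          # assumed flat reward for matching consensus
    slash_fraction: float = 0.1,  # assumed share of stake lost for deviating
) -> bool:
    """Settle one verification round and return the consensus verdict.

    Assumption: the consensus is a strict majority of votes, so a tie
    counts as a rejection of the claim.
    """
    approvals = sum(1 for v in validators if votes[v.name])
    consensus = approvals * 2 > len(validators)
    for v in validators:
        if votes[v.name] == consensus:
            v.stake += reward
        else:
            v.stake -= v.stake * slash_fraction
    return consensus


if __name__ == "__main__":
    pool = [Validator("a", 100.0), Validator("b", 100.0), Validator("c", 100.0)]
    verdict = settle_round(pool, {"a": True, "b": True, "c": False})
    print(verdict, [(v.name, v.stake) for v in pool])
```

Even in this toy version, voting against the consensus costs a validator far more than an honest vote earns, which is what "economically enforced" means in practice.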

This method changes the way we think about AI outputs. "Can this result be proven?" becomes the question instead of "Does this model seem trustworthy?"

That distinction may become crucial as AI is incorporated more deeply into automation, research, and finance. Adoption of AI may be hampered more by a lack of confidence in the results than by a lack of capability.

Because of this, $MIRA's emphasis on verifiable AI outputs seems like a crucial move. Making AI trustworthy enough to make decisions in the real world is more important than making it sound smarter.

#mira $MIRA