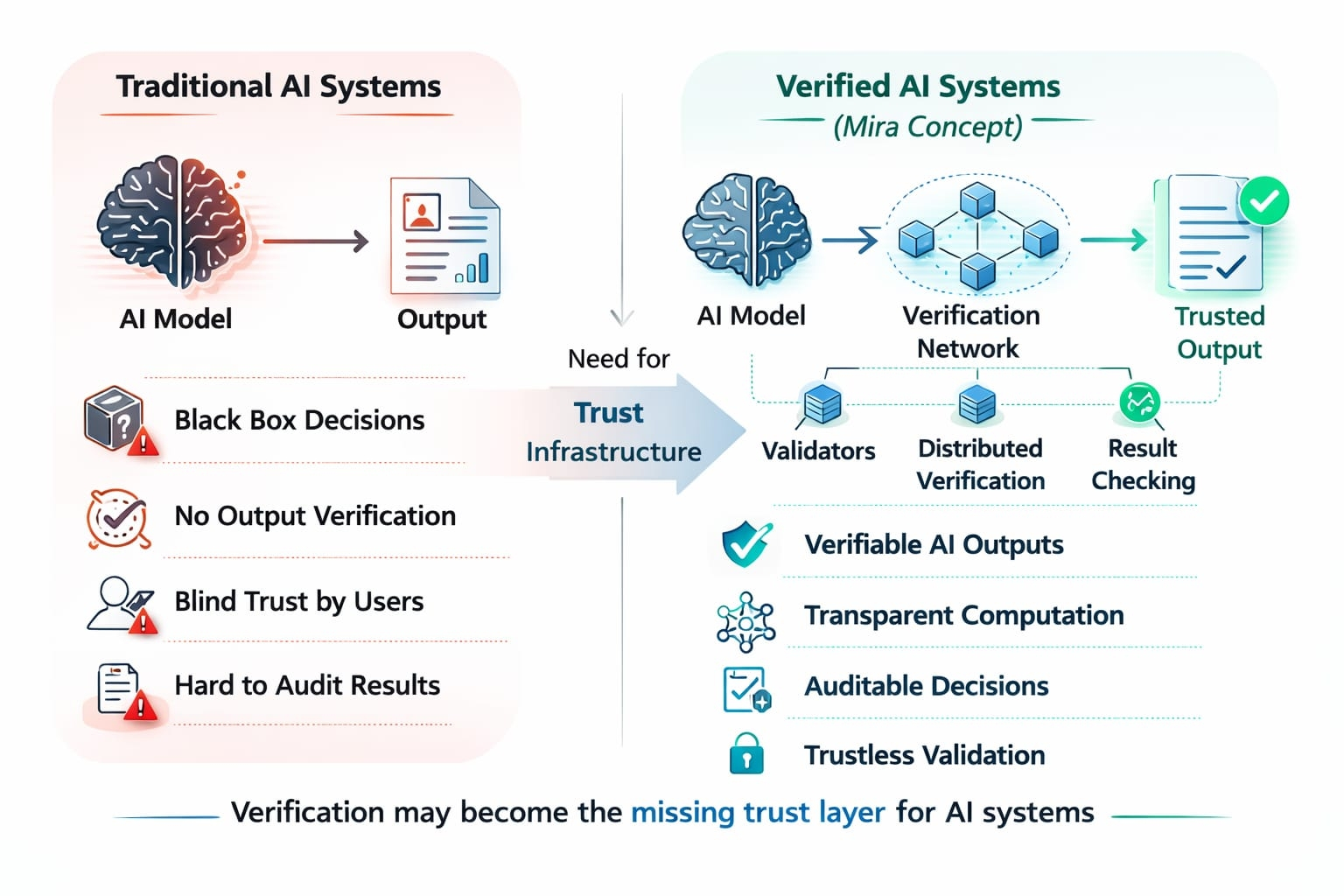

A few months ago, I noticed something interesting while following different AI and blockchain projects. Many teams were racing to build bigger models, faster inference systems, and smarter AI agents. But very few were asking a basic question: How do we verify what AI produces?

That question is where Mira starts to stand out.

Instead of focusing only on building AI, Mira is focused on something that might become even more important in the long run: verification of AI outputs. In simple terms, Mira is building infrastructure that helps prove whether an AI result is reliable, reproducible, and trustworthy.

At first glance, this might sound like a small technical layer. But when you think about how AI is being used today in finance, research, automation, and digital decision-making, verification quickly becomes a serious challenge.

The Growing Trust Problem in AI

Today, AI models generate answers, predictions, and decisions at an incredible scale. But the systems that verify those results are often weak or missing entirely.

For example, if an AI model generates market analysis, medical insights, or code, users often have to trust that output blindly. Even developers sometimes cannot fully explain how a model arrived at its result.

This creates a trust gap.

Mira approaches this problem by introducing a verification layer for AI outputs, supported by decentralized infrastructure. Instead of relying on a single system to confirm results, the network can verify computations and outputs through distributed participants.

The result is a framework where AI outputs can be checked, validated, and trusted more transparently.

Mira’s Core Idea: Verifiable Intelligence

The central idea behind Mira is what many people describe as verifiable intelligence.

Rather than treating AI as a black box, Mira aims to make outputs provable and auditable. This concept has important implications for industries where trust and accuracy matter.

For example:

• AI-generated research or reports could be verified through Mira’s network.

• Automated trading models could have their logic validated.

• AI agents interacting with blockchains could prove their execution steps.
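To make the third example more concrete, here is a minimal sketch of one generic way an agent could make its execution steps auditable: a hash-chained log, where each entry's SHA-256 hash covers the previous hash plus the step's data, so editing any past step breaks the chain from that point on. This is an illustrative assumption, not Mira's documented mechanism, and the step fields (`action`, `asset`, `amount`) and helper names are hypothetical. A hash chain only shows that the log was not altered after the fact; it does not by itself prove that each step was correct.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # fixed starting hash for an empty log

def step_hash(prev_hash: str, step: Dict) -> str:
    """Hash the previous entry's hash together with this step's data.
    sort_keys makes the JSON encoding deterministic."""
    payload = json.dumps(step, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + payload).hexdigest()

def build_log(steps: List[Dict]) -> List[Dict]:
    """Record each execution step along with a hash linking it to the one before."""
    log, prev = [], GENESIS
    for step in steps:
        h = step_hash(prev, step)
        log.append({"step": step, "hash": h})
        prev = h
    return log

def verify_log(log: List[Dict]) -> bool:
    """Recompute every hash; any edited step produces a mismatch."""
    prev = GENESIS
    for entry in log:
        if step_hash(prev, entry["step"]) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True

# Usage with hypothetical agent steps.
log = build_log([
    {"action": "read_price", "asset": "ETH"},
    {"action": "swap", "amount": 1},
])
intact = verify_log(log)           # untouched log checks out
log[0]["step"]["asset"] = "BTC"    # tamper with a recorded step
tampered = verify_log(log)         # the recomputed hash no longer matches
print(intact, tampered)
```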

This approach is particularly relevant in Web3 environments, where transparency and trustless verification are core principles.

In many ways, Mira is trying to extend those principles into the AI world.

Infrastructure Designed for AI Verification

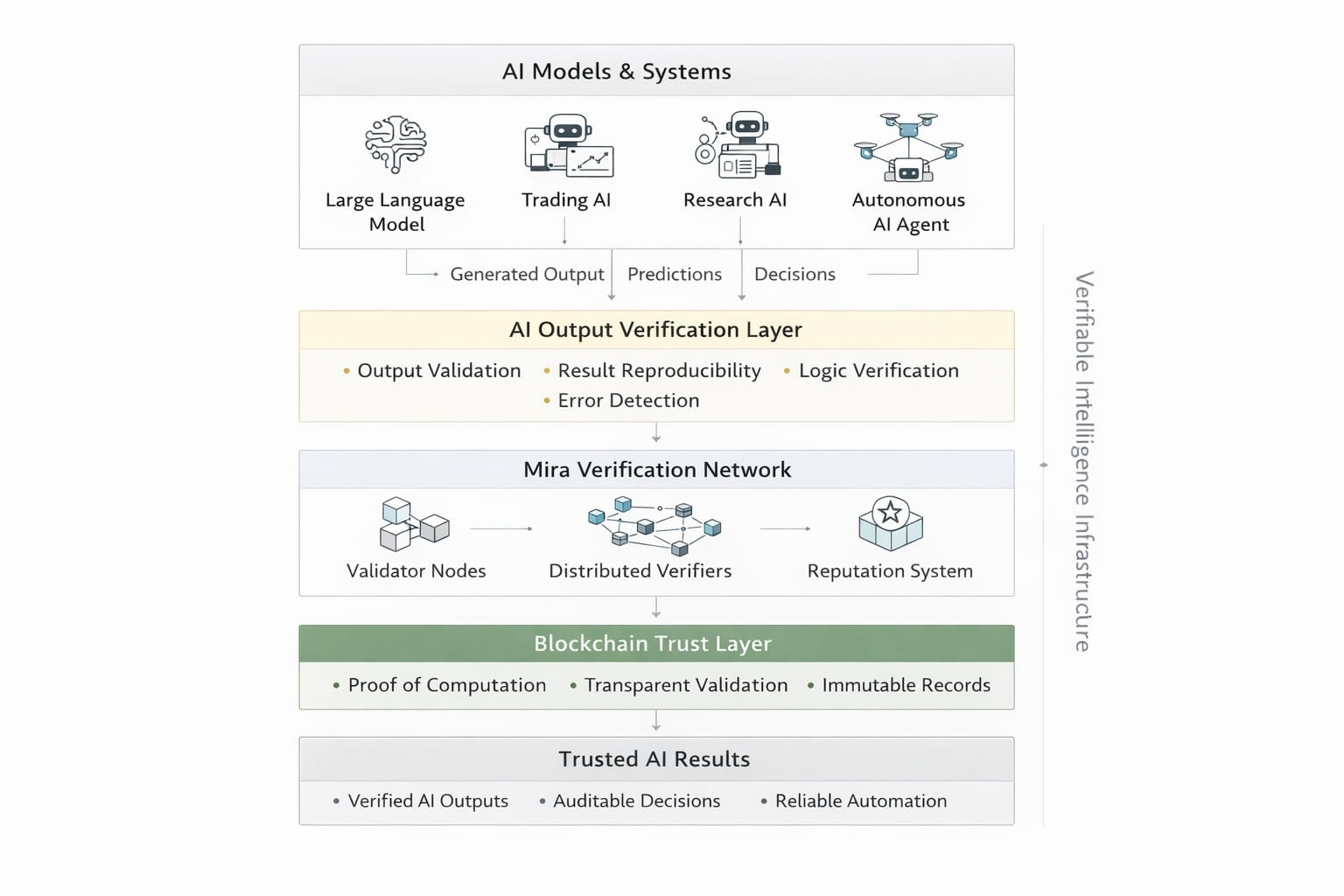

One of the interesting aspects of Mira is that it is not simply a tool or application. Instead, it is designed as infrastructure that other projects and developers can build on.

From what I’ve observed, Mira’s architecture focuses on several key components:

1. Verification Network

Mira introduces a network where participants help verify AI outputs. Instead of a centralized authority validating results, distributed nodes contribute to the verification process.

This makes the system more transparent and resistant to manipulation.
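The idea of distributed nodes voting on an output can be illustrated with a small threshold-consensus sketch. It assumes each node returns an independent yes/no verdict on a claim, and that a claim is accepted only if at least two thirds of the nodes approve it. The threshold, the `verify_claim` function, and the toy nodes are all hypothetical; the article does not describe how Mira actually splits outputs into claims, selects verifiers, or sets its thresholds.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Sequence

# A verifier node is modeled as a function that returns True if it judges
# the claim to be valid. Real nodes would run their own models; these are toys.
Verifier = Callable[[str], bool]

@dataclass
class VerificationResult:
    claim: str
    approvals: int
    total: int
    accepted: bool

def verify_claim(
    claim: str,
    verifiers: Sequence[Verifier],
    threshold: Fraction = Fraction(2, 3),  # hypothetical supermajority
) -> VerificationResult:
    """Collect a verdict from every node and accept the claim only if the
    share of approvals reaches the threshold (exact fractions, no rounding)."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for verify in verifiers if verify(claim))
    accepted = Fraction(approvals, len(verifiers)) >= threshold
    return VerificationResult(claim, approvals, len(verifiers), accepted)

# Usage with toy nodes: two check a small fact table, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}

def honest_node(claim: str) -> bool:
    return claim in KNOWN_FACTS

def faulty_node(claim: str) -> bool:
    return True  # a broken or dishonest node

nodes = [honest_node, honest_node, faulty_node]
r1 = verify_claim("Paris is the capital of France", nodes)  # 3 of 3 approve
r2 = verify_claim("The Moon is made of cheese", nodes)      # 1 of 3 approves
print(r1.accepted, r2.accepted)  # True False
```

The faulty node shows the intent of the design: a single dishonest participant cannot push a false claim through on its own, because it would need to control enough nodes to reach the threshold.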

2. Integration with AI Models

The platform is designed to connect with different AI models and services. This means developers building AI applications can integrate verification mechanisms directly into their workflows.

Over time, this could create a broader ecosystem of AI systems that prove their outputs rather than just producing them.

3. Tokenized Incentives

The ecosystem also includes the $MIRA token, which helps coordinate participation within the network. Incentives can be aligned so that validators, developers, and participants contribute to maintaining reliable verification processes.

Token-based systems are common in Web3, but in this case they serve a very specific purpose: encouraging accurate validation of AI results.
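One common way token incentives encourage accurate validation is stake-and-slash: validators lock up tokens, earn a reward when their vote matches the network's consensus, and lose part of their stake when it does not. The sketch below assumes that pattern with a simple majority vote. The `settle_round` function, the reward of 5 units, and the 10% slash rate are hypothetical and are not taken from the actual $MIRA token design.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Validator:
    name: str
    stake: int  # hypothetical stake, in whole token units

def settle_round(
    validators: List[Validator],
    votes: Dict[str, bool],
    reward: int = 5,           # hypothetical reward per correct vote
    slash_bps: int = 1_000,    # 1,000 basis points = 10% of stake
) -> bool:
    """Settle one verification round and return the consensus verdict.

    Consensus is a simple majority of votes (a tie counts as "not verified").
    Validators who voted with the consensus earn a fixed reward; those who
    voted against it lose slash_bps basis points of their stake.
    """
    approvals = sum(1 for vote in votes.values() if vote)
    consensus = approvals * 2 > len(votes)
    for validator in validators:
        if votes[validator.name] == consensus:
            validator.stake += reward
        else:
            validator.stake -= validator.stake * slash_bps // 10_000
    return consensus

# Usage: two validators approve an output, one rejects it.
validators = [Validator("node-a", 100), Validator("node-b", 100), Validator("node-c", 100)]
votes = {"node-a": True, "node-b": True, "node-c": False}
verdict = settle_round(validators, votes)
print(verdict, [v.stake for v in validators])  # True [105, 105, 90]
```

Integer balances and basis points keep the settlement arithmetic exact and avoid floating-point rounding.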

Why This Approach Matters

In my opinion, the most interesting part of Mira is not just the technology itself but the timing of the problem it addresses.

AI is growing quickly, and many industries are starting to rely on it for important decisions. However, the systems that ensure those decisions are correct are still developing.

If AI becomes a foundational technology for the digital economy, verification could become just as important as computation.

Think about how blockchain works. Blockchains did not just introduce digital assets; they introduced verifiable transactions.

Mira is exploring whether a similar idea can exist for AI outputs.

Potential Use Cases

Several use cases could benefit from Mira’s verification layer.

AI Research and Data Analysis

Researchers increasingly use AI tools to analyze data or generate insights. Mira could help verify that those outputs follow reproducible logic rather than random generation.

Autonomous AI Agents

As AI agents begin interacting with decentralized systems, verification becomes essential. Mira’s network could ensure that agents execute tasks correctly and transparently.

Financial and Trading Systems

In financial environments, AI models often make predictions or trading decisions. Verification mechanisms could provide additional confidence that those outputs are valid.

Decentralized AI Applications

Developers building Web3 AI applications may use Mira to introduce trust layers into their systems, making their products more reliable.

These examples highlight why verification might become an important component of future AI ecosystems.

My Personal Perspective on Mira

When I first looked into Mira, I thought of it as just another AI-related blockchain project. But after studying the concept more carefully, I started seeing it differently.

Many projects focus on making AI stronger.

Mira focuses on making AI accountable.

That distinction is subtle but important.

If AI systems continue to expand into critical areas like governance, finance, and automation, users will demand stronger guarantees about the outputs they receive. Verification infrastructure could play a major role in meeting that demand.

Of course, the success of a project like Mira will depend on adoption, developer participation, and the strength of its ecosystem. Infrastructure projects often take time to mature.

But the idea itself, building a verification layer for AI, feels both practical and forward-looking.

Final Thoughts

The future of AI may not be defined only by how powerful models become, but also by how trustworthy their outputs are.

Mira is exploring this challenge by building a system where AI results can be validated through decentralized networks rather than blind trust.

In my view, that direction deserves attention. As AI continues to integrate into everyday systems, the ability to verify its decisions could become one of the most important pieces of the technology stack.

Projects like Mira are attempting to build that missing layer, and if they succeed, they could quietly reshape how we trust intelligent machines.

@Mira - Trust Layer of AI #Mira $MIRA