@Mira - Trust Layer of AI

Just after 6 a.m. on an ordinary Tuesday, I was at my kitchen table, a cooling mug beside my laptop, when an AI summary confidently got a number wrong. I knew that number by heart. It was a small error, but it stayed on my mind because it made me wonder about something bigger. If I cannot trust the easy stuff, then what happens next?

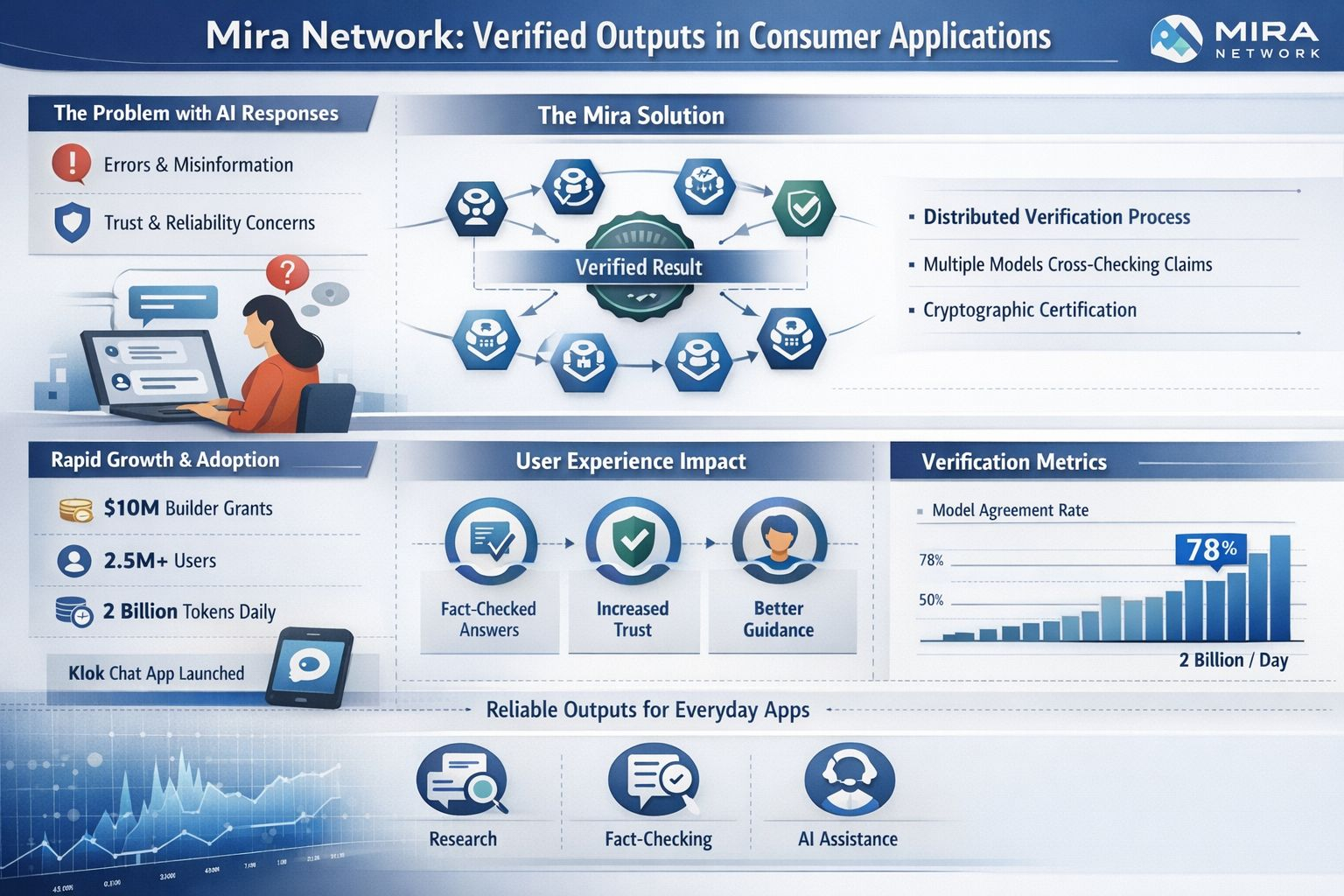

I care about Mira Network for a simple reason: consumer AI no longer feels like a toy to me. I now see chat tools used for research, fact-checking, guidance, and even emotional support in everyday life. In that setting, a wrong answer is not just irritating. It can waste time, distort judgment, and quietly push someone toward a poor decision. Mira's premise sits directly in that gap, because it does not ask people to trust a single model on faith. Instead, it tries to verify outputs before they become something a user relies on.

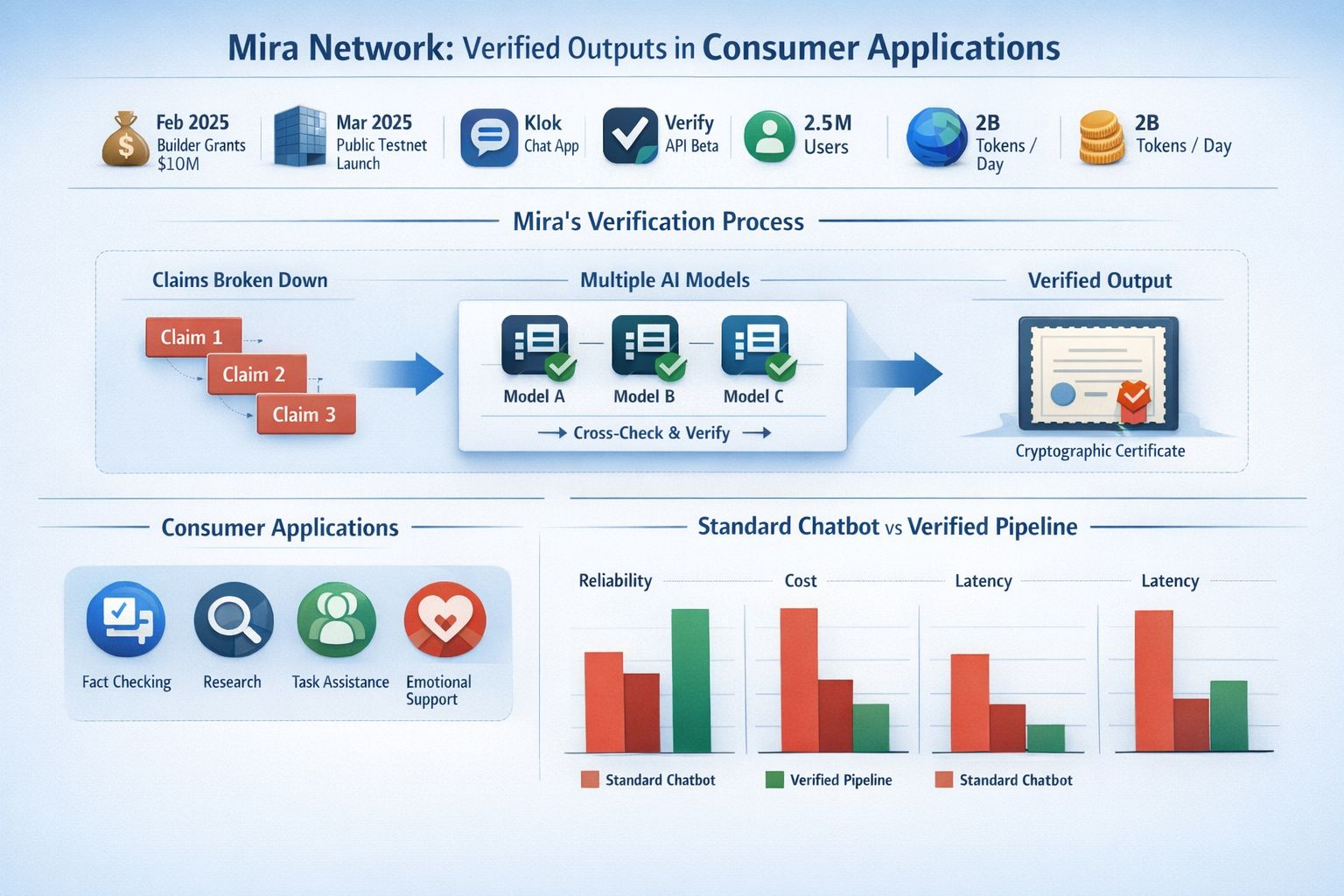

What holds my attention is that Mira is not chasing the usual story about one larger, smarter model finally solving everything. Its own materials describe a different path. The network breaks an output into smaller claims, sends those claims through distributed verification across multiple models, and records the outcome as a cryptographic certificate. In its whitepaper, Mira argues that reliability improves when models check each other rather than one model being treated as the final authority. That may sound technical at first glance, yet the consumer implication is plain to me: the answer I read inside an app could arrive with more scrutiny behind it than a standard chatbot response.
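To make that pipeline a little more concrete, here is a minimal Python sketch of the general idea: split an output into claims, let several independent verifiers vote on each claim, accept a claim only when a supermajority agrees, and hash the results into a digest. This is not Mira's implementation or API. The sentence-based claim splitting, the two-thirds threshold, and the SHA-256 digest standing in for a real cryptographic certificate are all my own simplifying assumptions, and names like `certify` are hypothetical.

```python
import hashlib
import json
from typing import Callable

# Hypothetical type: a verifier judges one claim and returns True if it supports it.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: list[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers support it."""
    if not verifiers:
        raise ValueError("need at least one verifier")
    supporting = sum(1 for verify in verifiers if verify(claim))
    return supporting / len(verifiers) >= threshold


def certify(output: str, verifiers: list[Verifier]) -> dict:
    """Check every claim and bundle the per-claim results with a SHA-256 digest.

    Assumption: the digest only stands in for a real cryptographic certificate.
    """
    results = {claim: verify_claim(claim, verifiers) for claim in split_into_claims(output)}
    record = json.dumps(results, sort_keys=True)  # deterministic serialization
    return {
        "claims": results,
        "all_verified": all(results.values()),
        "digest": hashlib.sha256(record.encode("utf-8")).hexdigest(),
    }


if __name__ == "__main__":
    known_facts = {"Paris is the capital of France"}

    def strict(claim: str) -> bool:
        # Supports only claims it already knows to be true.
        return claim in known_facts

    def lenient(claim: str) -> bool:
        # A weak verifier that supports everything.
        return True

    report = certify(
        "Paris is the capital of France. The Moon is made of cheese.",
        [strict, strict, lenient],
    )
    for claim, ok in report["claims"].items():
        print(f"{'PASS' if ok else 'FAIL'}: {claim}")
    print("all verified:", report["all_verified"])
```

Running the demo marks the Paris claim as passing (three of three verifiers agree) and the Moon claim as failing (only one of three). It also shows a limit worth keeping in mind: if two of the three verifiers shared the same blind spot, a false claim would clear the threshold just as easily. Agreement is a signal, not a guarantee.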

That also helps explain why the topic is getting more attention now. Over the past year, Mira has moved from concept language into product and ecosystem language in a way that makes it easier to notice. It introduced Klok, a chat app built on its own infrastructure. It announced a $10 million builder grant program in February 2025 and launched a public testnet in March 2025. It also began promoting a beta Verify API for developers who want a fact-checking layer inside their products. Around the same period, the company said it had reached 2.5 million users and was processing two billion tokens each day across its ecosystem. Even after allowing for the usual optimism of startup messaging, that is still enough visible movement to put Mira in more conversations.

I also think Mira feels timely because the consumer side of AI has changed. A year ago, many people were still testing chatbots for novelty and speed. Now I see more products trying to become dependable companions for repeated tasks that users actually care about. Mira's own examples reflect that shift, with tools built for chat, fact-checking, guidance, and conversational support. I do not endorse every category equally, and some of them make me more cautious rather than less. Even so, they reveal the direction clearly: Mira wants verification to live inside ordinary applications instead of staying parked in a research demo that never reaches real users.

The progress that seems most real to me is not the branding. It is the way verification appears inside the product stack. Mira's SDK describes itself as a unified interface for multiple language models, with routing, load balancing, flow management, and usage tracking built in. That matters because verified output is much harder to use when developers have to stitch together a pile of separate systems before they can even test it. The stronger angle here is practical. If a team can call one interface, decide when extra checking is worth the added delay, and return a more dependable answer in higher-risk moments, then verification starts to feel like infrastructure rather than theory.
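The idea of deciding when extra checking is worth the delay can also be sketched. The Python below is a hypothetical routing pattern, not Mira's SDK: `respond`, `generate`, and `verify` are names I made up, and the simple high/low risk flag, the retry limit, and counting model calls as a rough proxy for cost and latency are all assumptions. Low-risk requests take a fast path; high-risk ones are verified and regenerated a bounded number of times before the app admits it could not verify an answer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Answer:
    text: str
    verified: bool    # True only if a verification pass succeeded
    model_calls: int  # rough proxy for the added cost and latency


def respond(
    prompt: str,
    high_risk: bool,
    generate: Callable[[str], str],   # hypothetical: produces a draft answer
    verify: Callable[[str], bool],    # hypothetical: checks a draft
    max_retries: int = 2,
) -> Answer:
    """Skip verification on low-risk requests; verify and retry on high-risk ones."""
    text = generate(prompt)
    calls = 1
    if not high_risk:
        # Fast path: return the unverified draft with no extra calls.
        return Answer(text, verified=False, model_calls=calls)

    for attempt in range(max_retries + 1):
        calls += 1  # each verification pass is extra work
        if verify(text):
            return Answer(text, verified=True, model_calls=calls)
        if attempt < max_retries:
            text = generate(prompt)  # try a fresh draft
            calls += 1

    # Every draft failed: say so instead of returning something polished but wrong.
    return Answer("I could not verify an answer to this.", verified=False, model_calls=calls)


if __name__ == "__main__":
    drafts = iter(["a wrong draft", "a correct draft"])
    result = respond(
        "example question",
        high_risk=True,
        generate=lambda _prompt: next(drafts),
        verify=lambda text: text == "a correct draft",
    )
    print(result)
```

The design choice mirrors the trade-off above: the fast path costs a single call, while the verified path can take several, so an app would reserve it for moments where a wrong answer matters most.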

I still do not see this as a solved problem. Verification can reduce errors, but it can also add cost, latency, and hidden complexity that ordinary users never notice directly. Agreement across several models is not the same thing as truth, especially in areas shaped by judgment, culture, or incomplete information. Mira's own paper makes room for that limitation by acknowledging that context matters and that no single system can fully eliminate hallucination or bias. I find that restraint more convincing than a louder claim would be, because it keeps me from treating the word "verified" as if it meant "perfect."

What I keep coming back to is the consumer experience. Most people will never read a whitepaper, inspect a blockchain record, or compare verifier nodes. They will simply notice whether an app feels steadier, less slippery, and less likely to say something polished that turns out to be wrong. That is where Mira Network could matter most. If it succeeds, I think its contribution will look modest on the surface, and that may be the point. Fewer quiet mistakes in ordinary apps would be a meaningful improvement, and right now that sounds more useful to me than another dazzling demo.