Something unusual is happening in crypto that many people don't fully see.

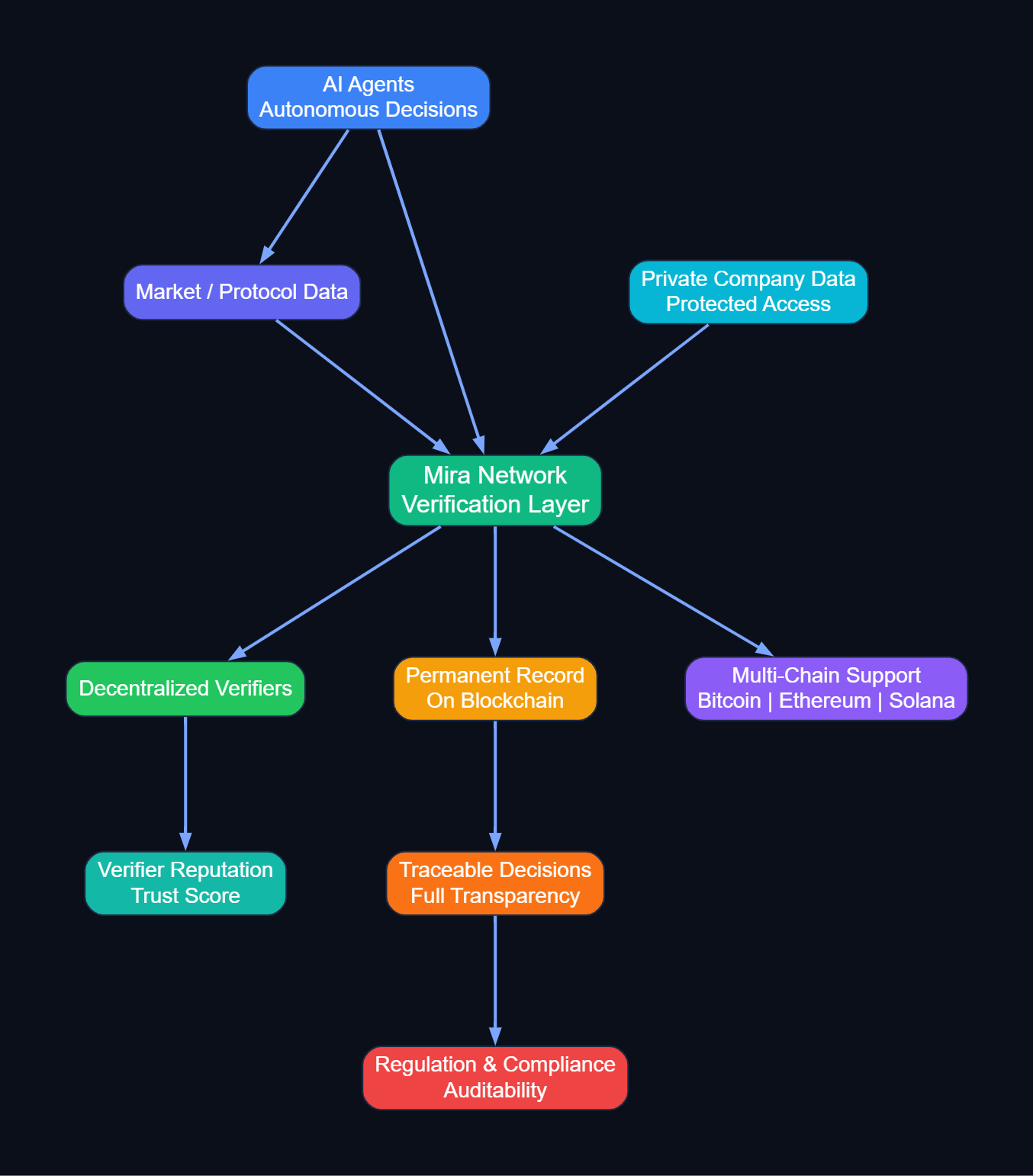

AI systems are no longer just experimental. They are making real decisions.

They are moving assets, managing liquidity, and interacting with protocols.

And this has created a problem most people weren't prepared for.

When a person makes a trade, we can see who decided.

When a smart contract executes a transaction, we can see the logic.

When an AI agent acts on information from a language model, the reasoning behind the decision is invisible.

There is no system to hold it accountable.

Mira Network fills this gap.

The old systems weren't designed for AI agents.

They weren’t built for a world where AI makes financial decisions autonomously.

Mira Network was built for this world.

When an AI agent requests information about the market, Mira verifies it.

Each piece of information carries a record of who checked it and how.

That record is stored permanently on the blockchain.

The difference between unverified AI outputs and Mira‑verified data is accountability.

The system shows exactly what happened. And if something goes wrong, we can see why.

This matters because regulators are drafting rules for AI-based decisions.

Beyond outcomes, they want to see the logic that led to those results.

Mira provides that visibility.

Every decision becomes a traceable record.

Compliance officers can follow the entire path without needing cryptography expertise.

Companies are joining Mira because they want trustworthy and accountable systems.

Mira also tracks the quality of verifiers.

Participants who consistently verify correctly build a reputation.

The network learns who can be trusted over time.

This creates reliability without central control.

Mira can integrate with multiple blockchains, including Bitcoin, Ethereum, and Solana.

AI agents working on any chain can still be held accountable.

It even works with private company data without exposing sensitive information.

AI agents can make informed decisions without seeing the underlying data.

The real problem isn’t that AI is untrustworthy.

The problem is that there’s no system to hold it accountable.

Mira Network solves that problem.

Verified information becomes auditable and transparent.

The AI economy needs this to function responsibly.

Every participant, every decision, every verification is recorded.

The network ensures transparency and trust.

Mira Network is building the infrastructure that makes AI accountable.

And as AI becomes more active, this layer will be essential.