If the $MIRA security model fails, it does not fail like a bug on a dashboard. It fails like a referee taking cash before a title match. That is the frame I keep coming back to. A pro athlete who throws a game for the other team is not just fined a little and told to do better.

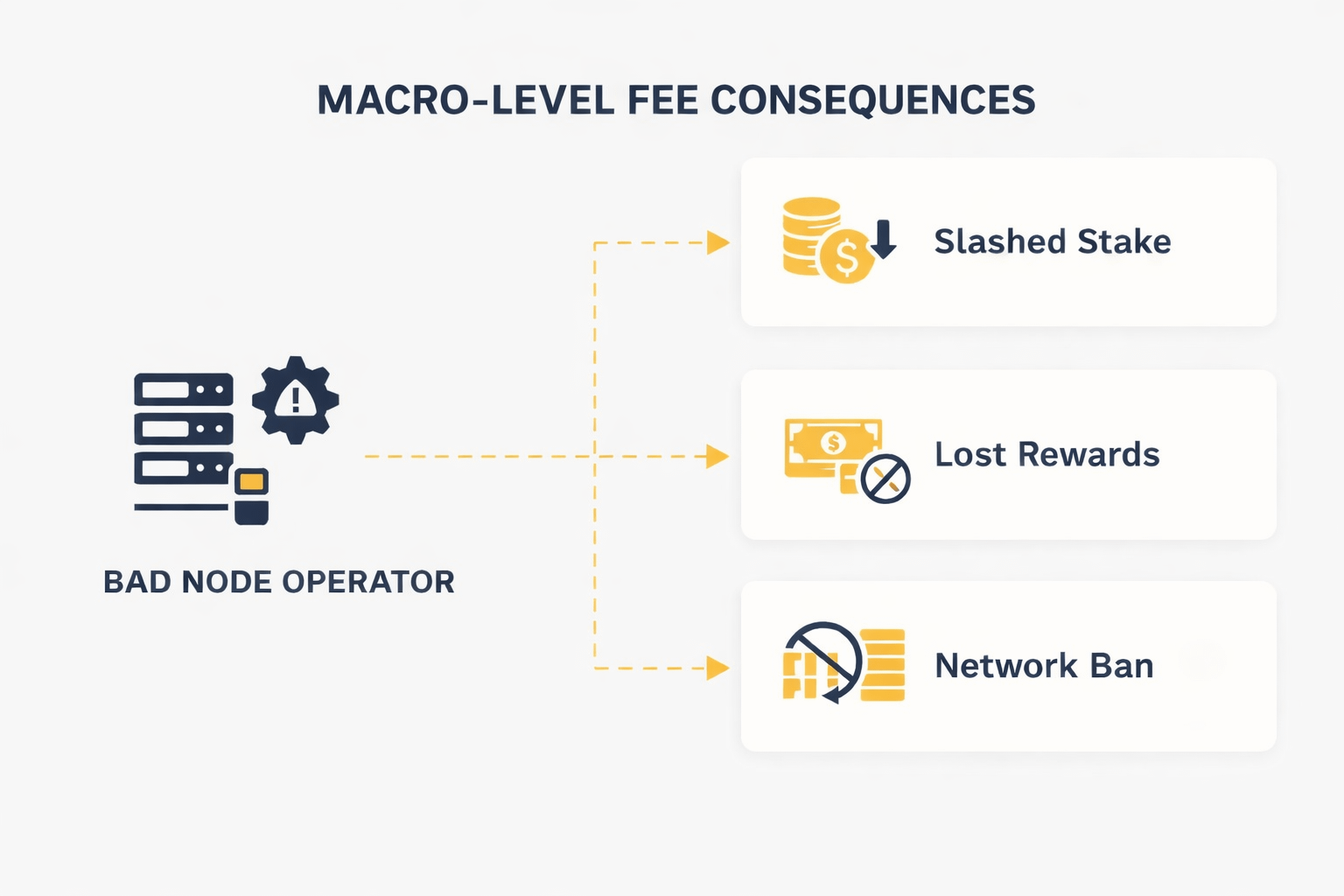

In a serious league, they can lose the whole contract, the career path, the room. That is what slashing is trying to do inside Mira. It turns bad behavior from a small mistake into a career-ending economic penalty for the node operator who thought deviation was worth it.

What pulled me in is that Mira is not securing a simple yes-no ledger entry. It is trying to verify AI output through a network of operators running verifier models and reaching consensus on claims, with stake on the line.

In the whitepaper, Mira says outputs are broken into claims, checked by diverse models, and settled through distributed consensus rather than one trusted party. It also says node operators are economically incentivized to do honest verification, and that the model mixes staking with slashing because random guessing can look attractive when choices are limited. A binary task can hand a lazy node a 50% shot by luck. Four options still give random responses a 25% shot. That is not a side issue. That is the attack surface.
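To put numbers on that attack surface, here is a rough sketch of the guessing arithmetic. It is my own illustration, not code from Mira: the function names are made up, and it assumes every task is independent with equally likely options.

```python
from math import comb


def p_all_lucky(options: int, tasks: int) -> float:
    """Chance a pure guesser matches the accepted answer on every task.

    Assumes independent tasks and `options` equally likely choices each.
    """
    return (1 / options) ** tasks


def p_at_least(options: int, tasks: int, hits: int) -> float:
    """Chance a pure guesser matches on at least `hits` of `tasks` tasks.

    This is the upper tail of a binomial distribution with p = 1/options.
    """
    p = 1 / options
    return sum(
        comb(tasks, k) * p**k * (1 - p) ** (tasks - k)
        for k in range(hits, tasks + 1)
    )


if __name__ == "__main__":
    print(p_all_lucky(2, 1))   # one binary task
    print(p_all_lucky(4, 1))   # one four-option task
    print(p_all_lucky(2, 10))  # ten binary tasks in a row
```

One binary task is a coin flip, but ten in a row by pure luck is 1 in 1,024. A single lucky guess is cheap; a long record of lucky guesses is not, which is why the pattern across many tasks matters more than any one answer.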

I think this part gets missed because people love clean stories. They want the network to be smart, fair, almost moral. But a node operator does not face a moral quiz. He faces a balance sheet. Electricity, model cost, latency, staff time, and missed rewards all hit at once.

Then the bad idea sneaks in. What if I cut corners? What if I answer fast instead of right? What if I let the crowd carry me? In weak systems, that drift is common. In Mira, the design aim is to make that drift expensive enough that the shortcut stops looking clever.

This is where many people get the logic wrong. They hear slashing and think punishment. I hear insurance pricing. Staked value is not there to look tough. It is posted collateral. It tells the network, "If I act like a clown, you may seize my bond."

In Mira’s own wording, if a node consistently deviates from consensus or shows patterns that suggest random responses instead of real inference, that stake can be slashed, making the shortcut economically irrational.

I like that framing because it is cold and honest. The network is not asking operators to be noble. It is asking them to do math. If the financial penalty is heavier than the possible gain from manipulation attempts, honesty becomes the rational response. That is game theory in work boots.
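The math an operator would do can be sketched in a few lines. This is a toy model under my own assumptions, not Mira's actual slashing rule: `gain`, `slash_fraction`, and `p_detect` are hypothetical parameters, and I assume a caught operator keeps none of the gain.

```python
def expected_cheat_payoff(
    gain: float, stake: float, slash_fraction: float, p_detect: float
) -> float:
    """Expected extra payoff from cutting a corner, relative to honest work.

    gain:           extra profit if the shortcut goes unnoticed (hypothetical)
    stake:          collateral the operator has posted
    slash_fraction: share of the stake seized on detection (hypothetical)
    p_detect:       probability the shortcut is detected (hypothetical)
    """
    return (1 - p_detect) * gain - p_detect * slash_fraction * stake


def break_even_stake(gain: float, slash_fraction: float, p_detect: float) -> float:
    """Stake at which the expected payoff from cheating is exactly zero."""
    if p_detect <= 0 or slash_fraction <= 0:
        raise ValueError("with no detection or no slashing, no stake deters cheating")
    return (1 - p_detect) * gain / (p_detect * slash_fraction)


if __name__ == "__main__":
    # Shortcut worth 10, stake of 1000, half slashed, caught 10% of the time.
    print(expected_cheat_payoff(10, 1000, 0.5, 0.1))
    print(break_even_stake(10, 0.5, 0.1))
```

In this toy setup the shortcut has a negative expected payoff, and any stake above the break-even level keeps it that way. The same formula also shows the weak spot: if detection is rare or the slashed share is small, the required stake climbs fast.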

Still, this only holds under one hard condition: honest actors must control the majority of staked value. Mira says that point directly too, and it matters more than the slogan layer around any token. If bad node operators gain majority control, the social story breaks and the math starts to bend.

Consensus then stops being a truth filter and starts to look like a cartel vote. I have seen people act confused here, as if more participation alone solves it. It does not. More participation helps only when it adds real model diversity, real capital at risk, and real independence. Fake decentralization is just many logos around one hand on the switch.

Mira’s whitepaper argues that manipulation should become prohibitively expensive when honest operators control most of the stake and when model diversity grows with the network. In theory, that is the right equilibrium. The market has to keep checking whether the cost to corrupt the vote stays prohibitive or quietly gets cheap.
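A small sketch makes the majority condition concrete. I am assuming a plain stake-weighted plurality vote here, which is my simplification and not necessarily how Mira settles claims; both function names are hypothetical.

```python
from collections import defaultdict


def stake_weighted_outcome(votes: list[tuple[float, str]]) -> str:
    """Return the answer backed by the most stake.

    votes: (stake, answer) pairs, one per operator. Plurality by stake is an
    assumed rule for illustration only.
    """
    totals: dict[str, float] = defaultdict(float)
    for stake, answer in votes:
        totals[answer] += stake
    return max(totals, key=totals.get)


def stake_to_match_honest(honest_stake: float, attacker_stake: float = 0.0) -> float:
    """Extra stake an attacker must add to equal the honest stake.

    Anything above this amount tips a two-sided stake-weighted vote.
    """
    return max(0.0, honest_stake - attacker_stake)


if __name__ == "__main__":
    honest = [(60.0, "claim holds")]
    cartel = [(40.0, "claim fails")]
    print(stake_weighted_outcome(honest + cartel))
    # The cartel adds 30 more stake and now outweighs the honest side.
    print(stake_weighted_outcome(honest + cartel + [(30.0, "claim fails")]))
    print(stake_to_match_honest(60.0, 40.0))
```

The gap between honest stake and attacker stake is the real price of the vote. If that gap shrinks, because honest operators leave or because stake concentrates in a few hands, the cost to corrupt the outcome shrinks with it.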

The bad node operator, then, is not just a cheater. Sometimes he is lazier than that. He is the guy who stops doing inference and starts spraying answers. He is the firm that cuts cost by running weak models while still collecting rewards. He is the operator who thinks a little deviation will hide inside the crowd. And, yes, he is the actor who may collude if the upside beats the risk. Mira is built around making that trade feel awful. Lose stake. Lose future fees. Lose trust. Maybe lose the right to keep playing.

That is why I think slashing has to be severe enough to hurt, but also clear enough that honest operators can price the risk before they join. A vague penalty is weak security. A known financial penalty can anchor behavior because people fear what they can count.

My own view is simple. A network like Mira should not depend on good vibes, sharp branding, or the hope that node operators wake up ethical each morning. It should depend on a game-theoretic equilibrium where cheating feels irrational, honest work pays enough to keep skilled operators in, and majority control by bad actors stays prohibitively expensive. That does not make Mira safe by magic.

It means the system at least respects the real problem: people respond to incentives, especially under stress. So when I think about a bad node operator, I do not picture a comic villain. I picture a salary, a spreadsheet, a corner cut, a bad bet. The point of slashing is to make that bet so ugly that the clean path wins more often. That, to me, is adult network design at scale. Not Financial Advice.

@Mira - Trust Layer of AI #Mira $MIRA