Whenever I come across a new AI system, I end up asking the same thing: what am I really being asked to trust? My perspective on that has moved around a lot over the past year. I used to think reliability would mostly come from bigger models, better training, and a lot of post-release patching. Mira Network pushes a different idea, and I find it interesting because it changes the unit of trust. Instead of asking one model to be right often enough, it tries to make each output checkable by breaking it into smaller claims and sending those claims through a network of independent verifiers. In Mira’s whitepaper, that is the core move: transform generated content into verifiable claims, have multiple models judge them, and return the result with a record of how consensus was reached.
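A minimal sketch of that flow may make the idea more concrete. It rests on several assumptions:

- Claims are split naively, one per sentence.
- Each "verifier" is a toy function standing in for an independently operated model.
- A claim passes when at least `quorum` verifiers answer "true".

The names `split_into_claims`, `verify`, `make_toy_verifier`, and `quorum` are hypothetical. They do not come from Mira's whitepaper or any Mira API. The sketch also does not model the real protocol's claim extraction, verdict format, or incentive layer.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical verdict vocabulary; Mira's actual format may differ.
Verdict = str  # "true" or "uncertain" in this toy
Verifier = Callable[[str], Verdict]


@dataclass
class ClaimResult:
    claim: str
    votes: dict[str, Verdict]  # verifier name -> verdict: the audit record
    accepted: bool


def split_into_claims(text: str) -> list[str]:
    # Naive stand-in for claim extraction: one claim per sentence.
    return [s.strip() for s in text.split(".") if s.strip()]


def verify(text: str, verifiers: dict[str, Verifier], quorum: int) -> list[ClaimResult]:
    results = []
    for claim in split_into_claims(text):
        # Every verifier judges every claim independently.
        votes = {name: judge(claim) for name, judge in verifiers.items()}
        agree = sum(1 for v in votes.values() if v == "true")
        # A claim passes only if enough verifiers agree it is true.
        results.append(ClaimResult(claim, votes, agree >= quorum))
    return results


def make_toy_verifier(known_true: set[str]) -> Verifier:
    # Hypothetical verifier: says "true" only for claims it already knows.
    return lambda claim: "true" if claim in known_true else "uncertain"


verifiers = {
    "a": make_toy_verifier({"Paris is the capital of France", "The Moon orbits Earth"}),
    "b": make_toy_verifier({"Paris is the capital of France", "The Moon orbits Earth"}),
    "c": make_toy_verifier({"Paris is the capital of France"}),
}
text = "Paris is the capital of France. The Moon orbits Earth. The Moon is made of cheese."

for result in verify(text, verifiers, quorum=2):
    print(result.accepted, result.claim, result.votes)
```

Raising `quorum` to 3 (unanimity) would also reject "The Moon orbits Earth", because verifier `c` abstains. That is a small preview of the strictness tradeoff discussed later.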

What feels different here is that Mira is not mainly presenting itself as a better chatbot. It is trying to act more like a verification layer that sits behind other AI tools. That distinction matters to me. Most people are not bothered by AI because it sounds awkward. They are bothered because it can sound smooth, confident, and totally wrong at the same time. Mira's answer is to treat truth a little less like a vibe and a little more like an auditable process. If several independently operated models have to agree on a claim before that claim passes through, the system no longer relies on one model's confidence, which is often a poor stand-in for correctness. That does not make truth automatic, but it does change the rule from "trust the model" to "trust the verification procedure."

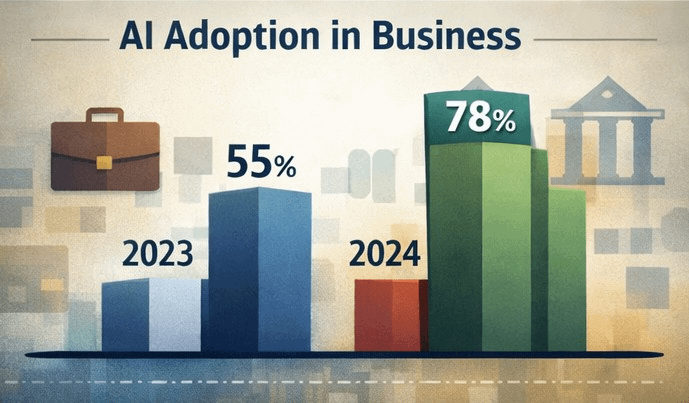

I think that shift is getting attention now because AI no longer lives in the safe corner of the internet where a bad answer is merely annoying. Stanford's 2025 AI Index reports that 78% of organizations used AI in 2024, up from 55% the year before. It also describes AI becoming more woven into everyday life, even as new ways of measuring factuality and safety begin to emerge. Separately, NIST's generative AI risk profile treats validity and reliability, alongside transparency and safety, as characteristics of trustworthy AI. It also warns that these systems can amplify misinformation, impersonation, and prompt-injection risks. Five years ago, plenty of people still treated hallucinations as an odd side effect of a fun new tool. Now the same flaw lands inside work, medicine, finance, security, and public information. That changes the emotional weight of the problem.

Mira's deeper argument is that reliability may not come from any single model at all. Its whitepaper says one model will always carry some minimum error rate, because reducing one kind of mistake can worsen another. So the network leans on diversity instead: different models, different operators, and economic incentives meant to discourage dishonest verification. In simple terms, Mira borrows one lesson from AI ensembles and another from blockchains. From the first, it takes the idea that several systems together can outperform one. From the second, it takes the idea that no single party should get to declare the answer unilaterally. That is why the project keeps using the word "trustless." It does not mean trust disappears. It means trust should rest on a process that stays open to outside scrutiny rather than on one central authority.

What surprises me is that this is both more modest and more ambitious than it sounds. It is modest because Mira is not claiming to solve intelligence itself. It is trying to solve verification, which is narrower and, frankly, more practical. But it is ambitious because verification gets hard very quickly once outputs become long, nuanced, or time-sensitive. Mira’s own research acknowledges that. In one early study, its three-model consensus setup reached 95.6 percent precision versus 73.1 percent for the generator alone, but the test set was only 78 cases, and the authors explicitly say larger datasets are needed. They also note tradeoffs: strict agreement improves precision but can reject good answers, and formatting claims into standardized questions helps reliability while limiting scope. I actually find those caveats reassuring. They make the project sound more like engineering than mythology.
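A toy calculation can make the precision tradeoff concrete. The six labeled cases and their votes below are invented purely for illustration. They have nothing to do with Mira's 78-case study, and the function name `precision_recall` and the `quorum` parameter are hypothetical.

The sketch compares a 2-of-3 majority rule with unanimity, using these definitions:

- **Precision:** the share of accepted claims that are actually true.
- **Recall:** the share of true claims that get accepted.

```python
from fractions import Fraction

# Invented toy data: (claim is actually true, votes from three verifiers).
cases = [
    (True, (True, True, True)),
    (True, (True, True, False)),
    (True, (True, False, True)),
    (False, (True, True, False)),
    (False, (True, False, False)),
    (False, (False, False, False)),
]


def precision_recall(cases, quorum):
    tp = fp = fn = 0
    for is_true, votes in cases:
        accepted = sum(votes) >= quorum  # count of "true" votes meets the quorum
        if accepted and is_true:
            tp += 1
        elif accepted:
            fp += 1
        elif is_true:
            fn += 1
    # Convention for this sketch: report 0 when a ratio is undefined.
    precision = Fraction(tp, tp + fp) if tp + fp else Fraction(0)
    recall = Fraction(tp, tp + fn) if tp + fn else Fraction(0)
    return precision, recall


for quorum in (2, 3):
    p, r = precision_recall(cases, quorum)
    print(f"quorum={quorum}: precision={float(p):.2f}, recall={float(r):.2f}")
```

On this made-up data, majority voting accepts one false claim (precision 0.75, recall 1.00). Unanimity accepts no false claims but also rejects two true ones (precision 1.00, recall 0.33). That is the same shape of tradeoff the authors describe.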

So when people say Mira is rewriting the rules of AI reliability, I do not hear that as magic. I hear something simpler. The old rule was that a model’s answer should be trusted if the model seemed advanced enough. The rule Mira is proposing is that important answers should pass through a system designed to challenge them before anyone acts on them. Whether that becomes a widely used standard is still an open question. But as AI moves from drafting text to making decisions, I think that question starts to matter more than model personality, benchmark theater, or product demos ever did.
