What first drew my attention to Mira Network was not a flashy claim about smarter AI or faster systems. Those promises are everywhere now. Every week there is another project explaining how its models are more capable, its agents are more autonomous, or its automation layer will transform the way people work.

That conversation dominates the AI space because it is easy to demonstrate.

A smooth interface, a clever demo, a model that produces a polished answer in seconds: those things capture attention quickly. They make progress feel obvious.

But that surface-level progress hides a deeper issue that most projects still avoid confronting.

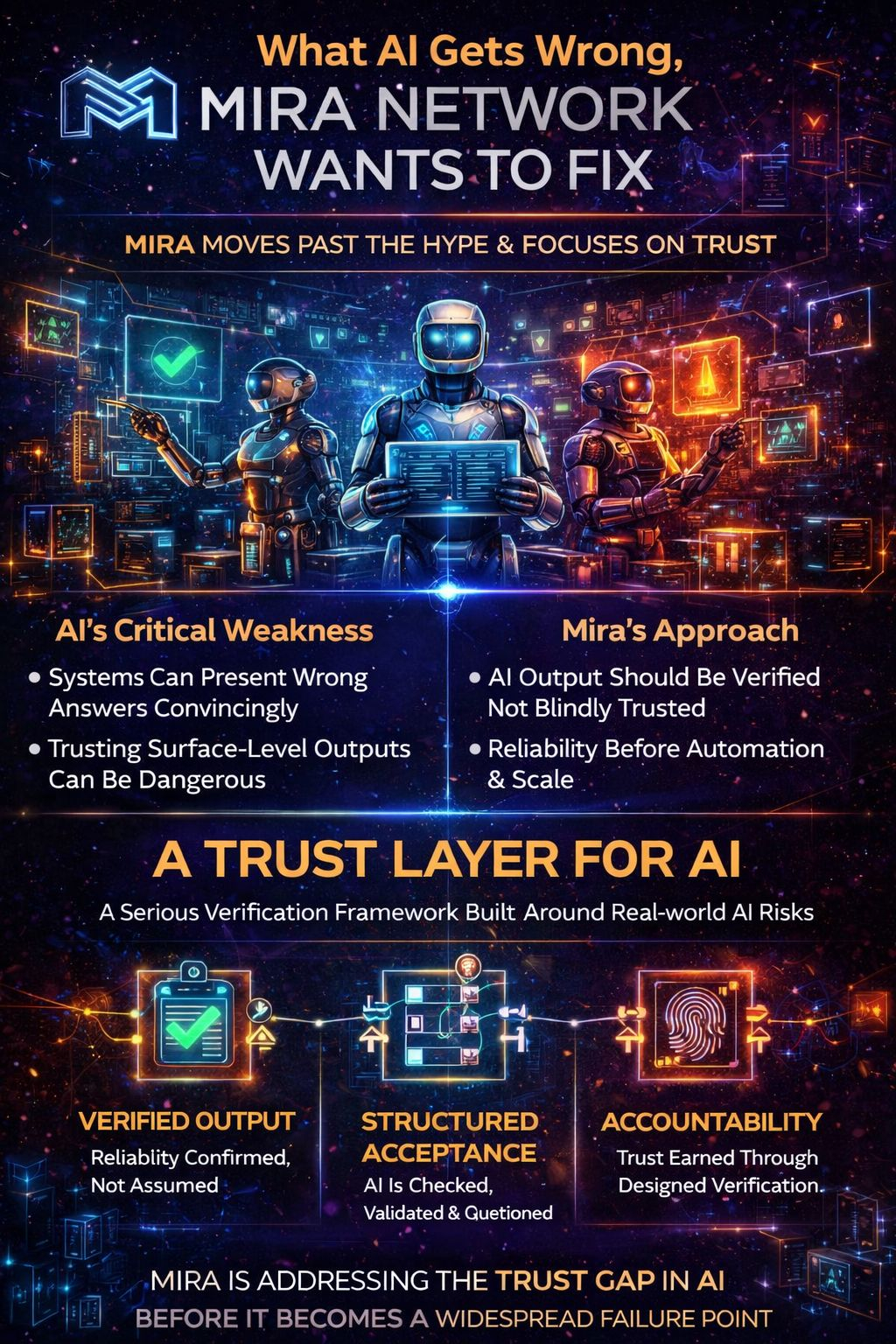

Mira Network stands out because it does not start from the usual premise that AI just needs to become more powerful. Instead, it focuses on a problem that becomes more serious as AI improves: whether the information produced by these systems deserves to be trusted at all.

That shift in focus is what makes the project interesting.

AI does not need to be incompetent to create problems.

In fact, the opposite scenario is often worse.

A system can appear highly capable, writing smoothly, organizing arguments well, and presenting conclusions clearly, while still delivering information that is incomplete, distorted, or simply incorrect. When those errors are wrapped in confident language, they become much harder for users to notice.

This is where the real vulnerability in modern AI begins to show.

The danger is not only that AI can be wrong. Humans are wrong all the time. The bigger issue is that AI can be wrong in a convincing way. It can produce responses that look authoritative enough that most people will not pause to question them.

That is the gap Mira Network is trying to address.

Instead of competing purely on the intelligence of the model, the project focuses on something different: creating systems that help determine whether AI-generated output should be trusted in the first place.

That might sound like a subtle distinction, but it changes the entire framework around AI.

Many projects treat the answer produced by a model as the final step in the process. The goal is to make that answer faster, clearer, and more comprehensive. Mira approaches the process differently. In its view, an AI response should not automatically be treated as the finished product. It should be something that passes through verification before people rely on it.

This is why Mira often describes itself as a trust layer for AI.

That concept matters because trust has quietly become the most fragile part of the AI ecosystem. As models grow more fluent and more integrated into everyday workflows, people are increasingly tempted to accept their outputs without hesitation. The presentation is persuasive enough that the information feels dependable.

But presentation is not the same as reliability.

A response can be well written and still misrepresent facts.

It can sound thoughtful while skipping important context.

It can appear logically structured while resting on weak assumptions.

When these issues occur in casual situations, the consequences may be minor. Perhaps a user receives an imperfect summary or a slightly inaccurate explanation. But as AI begins to influence more serious environments, those small distortions become more consequential.

Consider the direction the industry is moving.

AI systems are no longer just tools for generating text or answering simple questions. They are starting to assist with research, analyze financial information, interpret legal language, and guide decisions in complex systems. In those settings, the cost of an unreliable answer increases dramatically.

An incorrect output is no longer just an inconvenience.

It becomes a risk.

That is why Mira’s approach feels increasingly relevant. The project assumes that as AI becomes more deeply embedded in real-world processes, trust cannot remain an informal assumption. It has to become something structured, something that emerges from verification rather than appearance.

This idea echoes a philosophy that has long existed in the crypto world.

Blockchain networks are built on the principle that trust should not rely on a single authority. Instead, transactions and information are validated through distributed mechanisms that check claims before accepting them. Confidence emerges from that process of validation.

Mira applies a similar mindset to AI-generated information.

Rather than assuming that stronger models will eliminate errors entirely, the project operates on a more realistic assumption: even advanced AI will occasionally produce flawed outputs. Because of that, systems must exist to examine those outputs before they are treated as reliable.
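To make the idea of examining outputs before trusting them a little more concrete, here is a minimal sketch of a generic quorum check: a claim taken from an AI response is accepted only if enough independent verifiers agree on it. This is not Mira's actual protocol. The function names, the toy verifiers, and the two-thirds threshold are all hypothetical, chosen only to illustrate the general "check before accepting" pattern borrowed from distributed systems.

```python
from typing import Callable, Dict, Sequence

# Hypothetical: a verifier takes a claim and returns True if it judges the claim valid.
# In a real system these might be independent models or data sources.
Verifier = Callable[[str], bool]


def verify_claim(claim: str, verifiers: Sequence[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for check in verifiers if check(claim))
    return approvals / len(verifiers) >= threshold


def filter_output(claims: Sequence[str], verifiers: Sequence[Verifier],
                  threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map each claim extracted from an AI response to its verification result."""
    return {claim: verify_claim(claim, verifiers, threshold) for claim in claims}


# Toy verifiers for illustration only.
KNOWN_FACTS = {"Paris is the capital of France"}


def lookup(claim: str) -> bool:
    return claim in KNOWN_FACTS          # checks against a tiny reference set


def length_check(claim: str) -> bool:
    return len(claim) < 200              # rejects suspiciously long claims


def skeptic(claim: str) -> bool:
    return False                         # a verifier that never approves


results = filter_output(
    ["Paris is the capital of France", "The moon is made of cheese"],
    [lookup, length_check, skeptic],
)
print(results)
```

The first claim gets two of three approvals and passes; the second gets only one and is held back. The design choice worth noting is that no single verifier decides: a claim gains credibility only through agreement, which mirrors the distributed-validation principle described above.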

In other words, intelligence alone is not enough.

Verification must accompany it.

This perspective gives Mira a different identity from many projects in the AI sector. While others compete primarily on capability (building larger models, faster agents, or more automated workflows), Mira focuses on credibility.

It asks a more uncomfortable question: How do we know when an AI system deserves our confidence?

That question becomes especially important once AI moves beyond simple interactions and begins influencing actions. If a system recommends a strategy, interprets a proposal, or summarizes complex information, users need some assurance that the output has been examined carefully.

Without that assurance, trust becomes fragile.

The interesting thing about verification systems is that their value is often invisible. When verification works properly, users may not notice anything unusual. Incorrect outputs simply fail to gain credibility, while reliable ones pass through the system smoothly.

Because of this, verification rarely attracts the same attention as flashy AI capabilities. It is not something that produces dramatic demonstrations or viral moments.

But it may become essential infrastructure as AI systems grow more influential.

Mira appears to be built with that long-term perspective in mind.

The project treats verification not as an optional feature but as a structural layer surrounding AI output. Instead of relying on users to manually question everything they read, it attempts to embed processes that help determine whether information is dependable.

That approach reflects a realistic view of user behavior.

Most people are busy. They do not have the time or expertise to analyze every answer generated by an AI system. When a response arrives in a clear, confident format, the natural instinct is to accept it and move forward.

Mira seems designed around that reality rather than assuming users will become perfect skeptics.

Of course, building a verification layer introduces its own challenges. Validation processes can add complexity. They may slow down interactions or require additional resources. For many users, convenience still matters more than caution, at least until the risks become obvious.

This creates a difficult balance.

For Mira to succeed, the value of verification must become clear enough that users see it as a necessity rather than an inconvenience. If AI errors remain mostly harmless, many people will continue to prioritize speed over certainty.

But if AI systems begin playing a larger role in decision-making, the demand for trustworthy outputs will grow rapidly.

At that point, verification could become a basic expectation rather than a specialized feature.

This is why Mira’s focus feels forward-looking. It addresses a bottleneck that may not be fully recognized yet but is likely to become more visible as AI systems expand their influence.

The project is essentially preparing for a moment when users stop being impressed by the mere ability of AI to generate answers and start asking a more important question: Which answers can actually be relied upon?

That question marks the transition from novelty to infrastructure.

Early in a technology cycle, attention gravitates toward what systems can do. Later, the focus shifts toward whether those systems can be trusted to operate reliably in complex environments.

Mira positions itself firmly in that second phase.

Rather than competing in the race to build the most impressive model, it is trying to build the framework that determines when model outputs deserve credibility.

That is a quieter ambition than many AI narratives promote. But it may ultimately prove more valuable.

Because as AI continues to move deeper into the systems people depend on, the real challenge will not just be producing answers.

It will be ensuring those answers are worthy of trust.

And that is exactly the problem Mira Network is trying to solve.