When I first dove into the @Mira - Trust Layer of AI whitepaper, what struck me most wasn’t the buzzwords or the tokenomics chart; it was the clarity of the problem Mira is trying to solve. The challenge isn’t simply “make AI better.” It’s much subtler: how do we trust AI systems when they’re trained, evaluated, and deployed across distributed infrastructures with opaque incentives? My take is that trust isn’t a feature you add later; it’s a structural property that must be engineered into the protocol from the ground up.

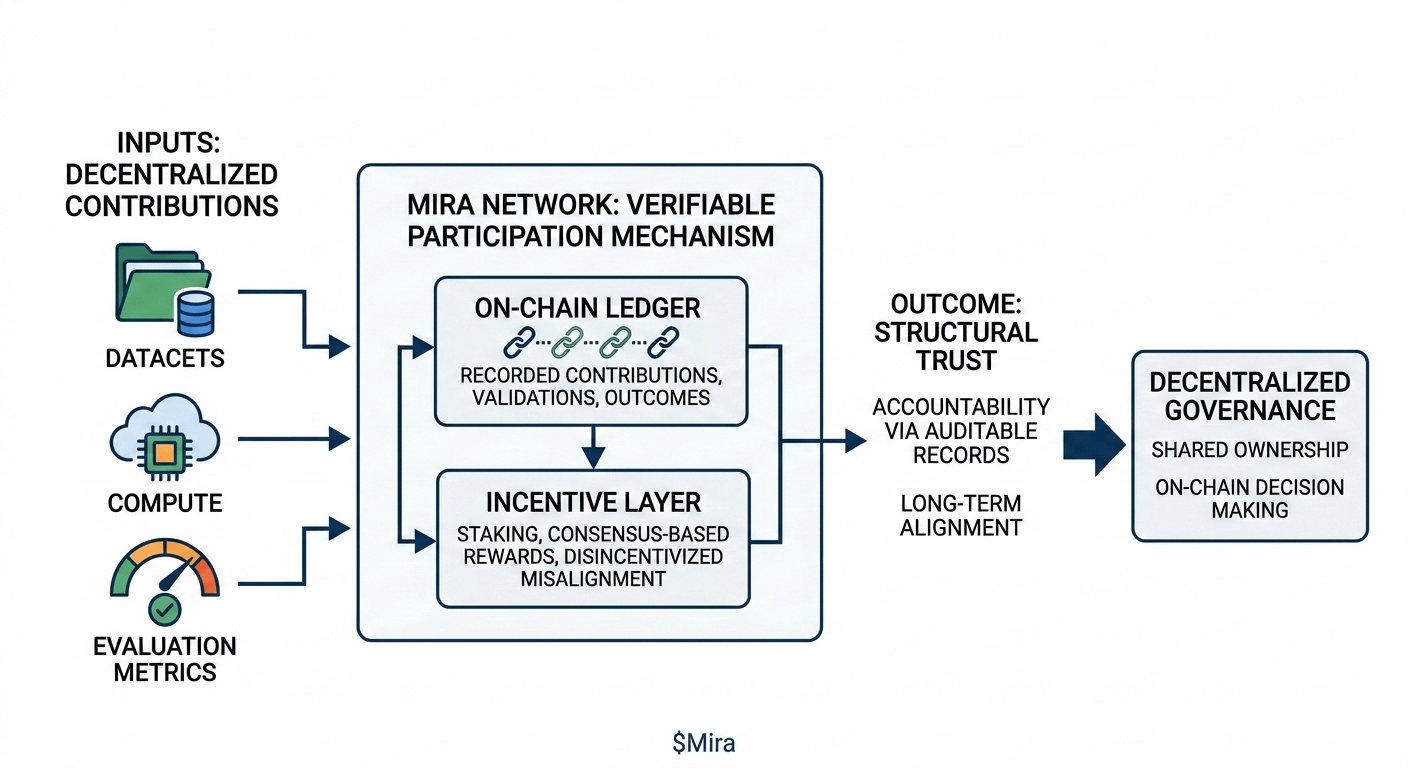

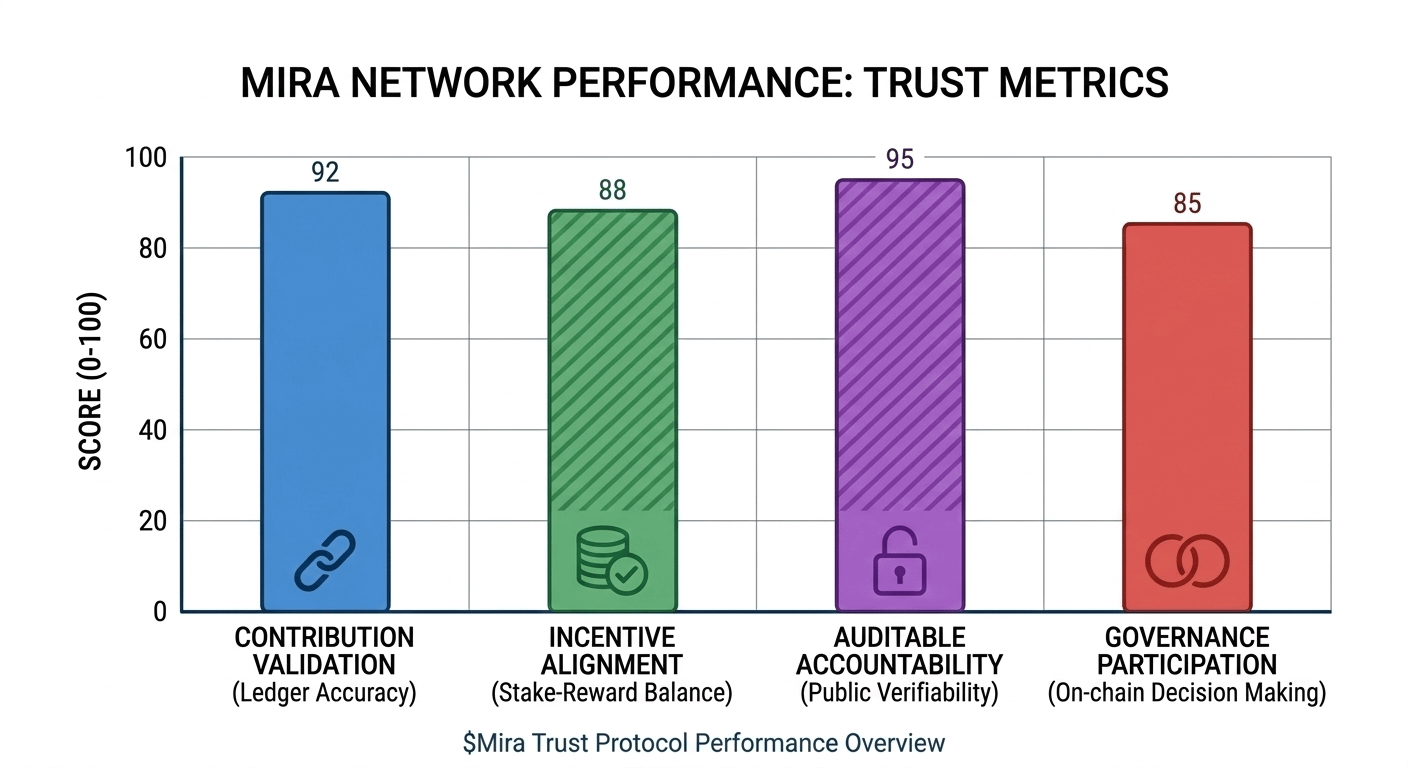

In my view, Mira’s approach to verifiable participation and aligned incentives is where it starts to feel different. Instead of centralized evaluations or proprietary quality signals, Mira’s mechanism uses on-chain ledgers to record contributions, validations, and outcomes with cryptographic finality. This isn’t just about transparency for its own sake; it fundamentally reframes accountability. When every actor, whether contributing datasets, training compute, or evaluation metrics, has their work logged and auditable, you begin to reduce the informational asymmetry that plagues many current AI ecosystems.
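To make the idea of a logged, auditable record concrete for myself, I sketched a toy hash-chained ledger. This is only my own illustration of the general technique, not Mira’s actual data model: the `Ledger` class, its `append` and `verify` methods, and the field names are all hypothetical, and a real chain would add signatures and consensus on top.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(payload: dict) -> str:
    """Deterministic SHA-256 over a JSON-serialised entry body."""
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


@dataclass
class Ledger:
    """Toy append-only log (hypothetical, not Mira's on-chain format)."""
    entries: list = field(default_factory=list)

    def append(self, actor: str, kind: str, content: str) -> dict:
        # Each entry commits to the hash of the one before it.
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "actor": actor,
            "kind": kind,  # e.g. "dataset", "compute", "validation"
            "content_hash": hashlib.sha256(content.encode()).hexdigest(),
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check each link back to the genesis value.
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

The point of the sketch is that anyone holding the log can rerun `verify()` themselves; editing an earlier entry breaks the hashes that follow, which is the sense in which the record is auditable rather than merely published.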

What resonates with me about Mira is how the incentive layer is structured. The system doesn’t reward short‑term wins or one-off achievements; it rewards sustained, verifiable contributions that pass consensus. Contributors stake value, validators verify truth, and misalignment isn’t just frowned upon; it’s economically disincentivized. This isn’t token reward design for attention; it’s reward design for trustworthy participation. From a governance perspective, that’s a profound shift. Shared ownership isn’t a slogan; it’s baked into how decisions are recorded, challenged, and ratified on‑chain.
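Here is how I picture “stake, verify, and get penalized for misalignment” in the simplest possible terms. Everything in this sketch is my own assumption for illustration: the `settle_round` function, the stake-weighted majority rule, the `reward_pool`, and the 10% `slash_rate` are hypothetical and are not taken from Mira’s specification.

```python
def settle_round(
    stakes: dict[str, float],
    votes: dict[str, bool],
    reward_pool: float,
    slash_rate: float = 0.1,  # assumed penalty fraction, purely illustrative
) -> dict[str, float]:
    """Return updated stakes after one verification round (toy model)."""
    # Stake-weighted tally of validators who judged the claim valid vs. invalid.
    weight_true = sum(stakes[v] for v, vote in votes.items() if vote)
    weight_false = sum(stakes[v] for v, vote in votes.items() if not vote)
    outcome = weight_true >= weight_false  # ties resolve to "valid" in this sketch
    winning_weight = weight_true if outcome else weight_false

    updated = dict(stakes)  # validators who did not vote are left unchanged
    for validator, vote in votes.items():
        if vote == outcome:
            # Agreeing validators split the reward pool pro rata by stake.
            if winning_weight > 0:
                updated[validator] += reward_pool * stakes[validator] / winning_weight
        else:
            # Dissenting validators lose a fraction of their stake.
            updated[validator] -= stakes[validator] * slash_rate
    return updated
```

With stakes of 100, 100, and 50 and a reward pool of 20, the two validators who agree with the majority each gain 10, while the dissenter loses 5. A real protocol would need far more than this (dispute windows, collusion resistance, and so on), but it shows why deviating from consensus carries a cost rather than just a reputational hit.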

I’ve noticed that some frameworks claim to decentralize, but in practice they still rely on centralized oracles or subjective scorecards. Mira’s push toward objective, publicly verifiable records creates a baseline where claims about model quality, data provenance, or benchmarking results can be independently confirmed. That doesn’t solve every ethical or safety question in AI, but it does create a substrate where those questions can be meaningfully interrogated rather than obscured behind black boxes.

My cautious opinion is this: trust isn’t solved by tech alone, but without structural accountability mechanisms like those in $MIRA, trust remains fragile and localized. Mira doesn’t promise perfect answers, but it does offer a protocol where accountability, shared ownership, and long‑term alignment aren’t afterthoughts; they’re part of the incentive fabric.

I’m curious how others interpret this mechanism focus. Do you see verifiable on‑chain records as a meaningful step toward trustworthy AI governance?