Most people look at new crypto projects through the same lens: another token, another network, another incentive campaign. After spending some time observing the emergence of **Fabric Foundation** and its ecosystem, I don’t think it fits neatly into that familiar pattern. What caught my attention is not the token campaign or the reward pool tied to $ROBO, but the timing of the idea itself. The market is entering a phase where artificial intelligence systems are becoming agents rather than tools, yet the infrastructure that coordinates those agents is still fragmented. Fabric Protocol seems to exist precisely in that gap.

For years the crypto industry has experimented with decentralized storage, compute markets, and data networks. At the same time, AI has been evolving toward autonomous systems capable of making decisions and performing tasks without constant human oversight. What has been missing is a coordination layer where machines, data providers, and computational resources can interact with each other in a verifiable way. Fabric Protocol appears to approach this problem by combining a public ledger with modular infrastructure designed for agent-native systems. The core idea is simple in principle but complicated in execution: autonomous machines should be able to request computation, verify results, and coordinate actions through a shared protocol rather than through centralized APIs.

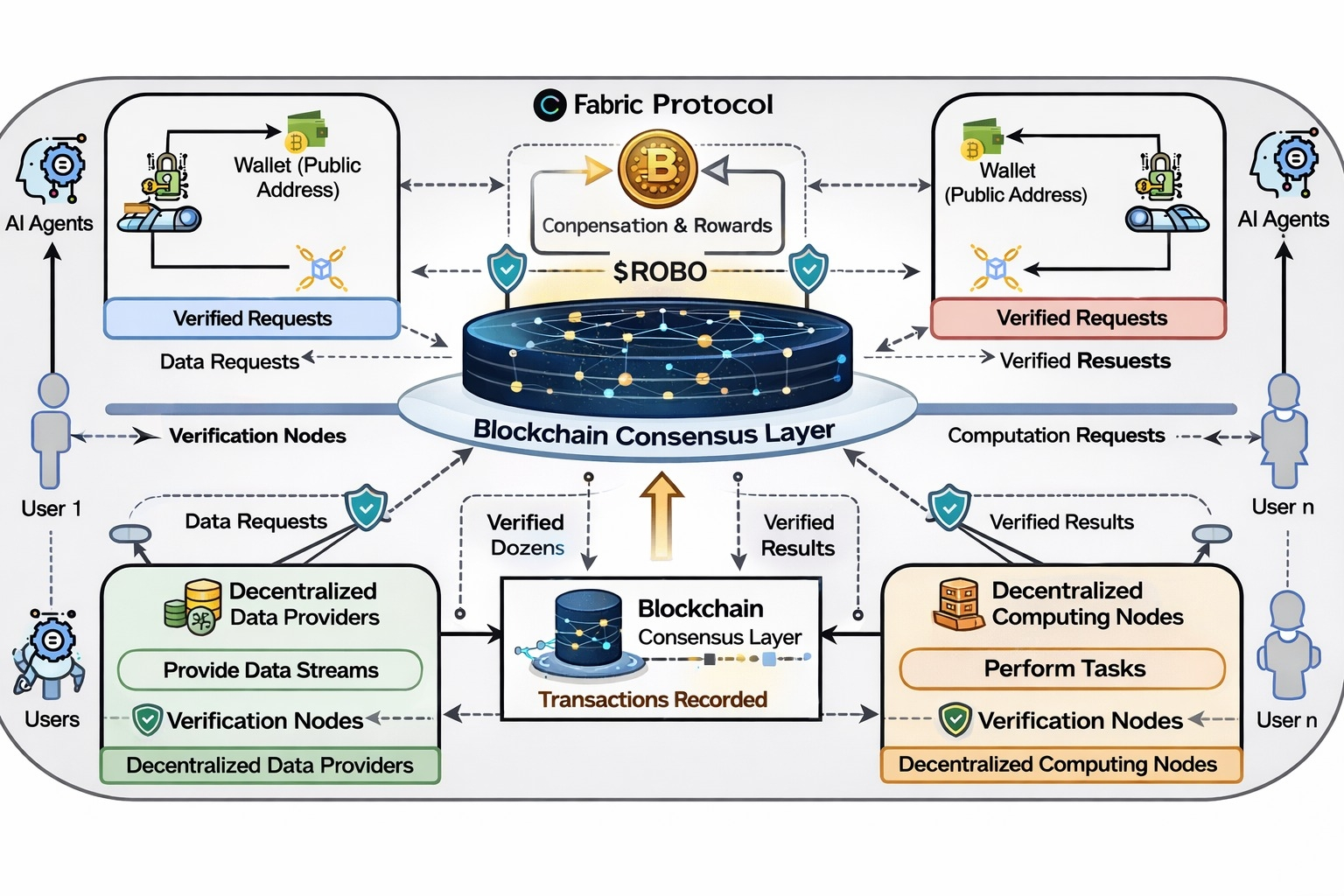

When I look at the design philosophy behind Fabric, I see an attempt to treat machines as economic actors inside a network. Instead of assuming that robots or AI agents are passive tools controlled by a single company, the protocol treats them as participants that can request services, exchange data, and verify outcomes across multiple nodes. The public ledger becomes the coordination layer where these interactions are recorded and validated. This matters because once machines begin interacting with other machines at scale, trust becomes a real problem. A robot requesting navigation data or compute resources needs a way to verify that the response it receives is reliable. Fabric tries to address that by embedding verification mechanisms directly into the protocol’s architecture.

In practical terms, the system coordinates three things that usually exist in isolation: data, computation, and governance. Data providers can contribute information streams, computational nodes can process tasks, and agents can consume those services. The ledger ensures that these interactions are traceable and auditable. This structure matters because autonomous systems require a level of reliability that traditional web infrastructure does not always guarantee. If an AI-driven robot relies on external computation or external datasets, it must be able to confirm that the information it receives has not been manipulated. A blockchain-based coordination layer provides a way to establish that trust without relying on a central authority.
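To make the "traceable and auditable" idea concrete, here is a minimal sketch of a hash-chained log, the general technique that blockchain ledgers build on. It is not Fabric's actual design, which I have not seen specified at this level. The names `ToyLedger`, `digest`, `payload_matches`, and the provider ID are all hypothetical, chosen only for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def digest(payload: dict) -> str:
    """Deterministic SHA-256 digest of a JSON-serializable payload."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class ToyLedger:
    """Hypothetical append-only log: each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, provider: str, payload: dict) -> dict:
        """Store a commitment to the payload (its hash), not the payload itself."""
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else GENESIS_HASH
        body = {
            "provider": provider,
            "payload_hash": digest(payload),
            "prev_hash": prev_hash,
        }
        entry = dict(body, entry_hash=digest(body))
        self.entries.append(entry)
        return entry

    def chain_is_intact(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: entry[k] for k in ("provider", "payload_hash", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["entry_hash"] != digest(body):
                return False
            prev_hash = entry["entry_hash"]
        return True


def payload_matches(entry: dict, received: dict) -> bool:
    """Check that the data an agent received is the data that was recorded."""
    return entry["payload_hash"] == digest(received)


if __name__ == "__main__":
    ledger = ToyLedger()
    nav_data = {"route": ["A", "B", "C"], "eta_s": 42}
    entry = ledger.record("nav-provider-1", nav_data)
    print(payload_matches(entry, nav_data))               # True
    print(payload_matches(entry, dict(nav_data, eta_s=5)))  # False
    print(ledger.chain_is_intact())                       # True
```

A real network would add signatures, consensus among nodes, and some way to check that a computation was performed correctly, none of which this sketch attempts. It only shows why a manipulated response becomes detectable once a commitment to the original is on a shared record.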

From a user perspective, interaction with the ecosystem will likely feel indirect. Most people will not interact with Fabric the same way they interact with a decentralized exchange or a lending protocol. Instead, the network operates more like infrastructure in the background. Developers building robotics platforms or autonomous agents could integrate the protocol so their systems can request computation, verify model outputs, or coordinate with other agents across a decentralized network. Traders and token holders, on the other hand, engage with the ecosystem through the economic layer represented by **ROBO**.

The role of **ROBO** in the network is tied to the economic incentives that keep the infrastructure running. Tokens function as a coordination mechanism between participants who provide resources and those who consume them. Computational nodes that perform tasks or validate outputs need to be compensated, and the token becomes the medium through which that compensation flows. In theory, the more activity the network supports—data exchange, computational tasks, agent coordination—the more the token becomes embedded in the system’s economic activity.
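One way to picture that compensation flow is an escrow: the requesting agent locks a fee when it opens a task, and the fee is released to the node only if the result is verified. The sketch below is my own illustration under that assumption, not a description of how ROBO payments actually work. `ToySettlement`, its methods, and the account names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ToySettlement:
    """Hypothetical escrow for task fees, tracked as plain integer balances."""

    balances: dict = field(default_factory=dict)
    escrow: dict = field(default_factory=dict)

    def open_task(self, task_id: str, requester: str, fee: int) -> None:
        """Lock the requester's fee until the task is settled."""
        if fee <= 0:
            raise ValueError("fee must be positive")
        if task_id in self.escrow:
            raise ValueError("task already open")
        if self.balances.get(requester, 0) < fee:
            raise ValueError("insufficient balance")
        self.balances[requester] -= fee
        self.escrow[task_id] = (requester, fee)

    def settle(self, task_id: str, node: str, verified: bool) -> None:
        """Pay the node if its output was verified; otherwise refund the requester."""
        requester, fee = self.escrow.pop(task_id)
        recipient = node if verified else requester
        self.balances[recipient] = self.balances.get(recipient, 0) + fee


if __name__ == "__main__":
    book = ToySettlement(balances={"agent-1": 100})
    book.open_task("task-1", "agent-1", fee=30)
    book.settle("task-1", node="node-a", verified=True)
    print(book.balances)
```

The design choice worth noticing is that payment depends on verification rather than on delivery alone, which is what would tie token flows to useful work instead of raw activity.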

However, there are trade-offs that deserve attention. The first challenge is adoption. Infrastructure protocols often struggle because their success depends heavily on developer ecosystems rather than retail speculation. A decentralized robotics coordination layer only becomes meaningful if builders actually integrate it into their systems. Without that integration, the protocol risks remaining a theoretical framework rather than a widely used network.

The second challenge is complexity. Coordinating autonomous agents across a decentralized infrastructure introduces technical problems that go far beyond standard blockchain applications. Latency, reliability, and security become critical issues when machines rely on network responses to perform real-world tasks. A delay in a financial transaction is inconvenient; a delay in an autonomous machine’s decision-making process could be much more problematic.

From a market perspective, price behavior around tokens like ROBO will likely reflect expectations rather than current usage, at least in the early stages. When investors look at infrastructure tokens tied to emerging technologies, they tend to price in the potential scale of the problem being solved rather than the current state of adoption. If the narrative around AI agents and robotics continues expanding, tokens connected to that narrative can attract speculative interest long before the underlying infrastructure reaches maturity.

What I find more interesting is the longer-term signal that on-chain activity could reveal. If the network begins to show consistent computational demand, agent interactions, or data verification transactions, those metrics would suggest real usage rather than speculative interest. In that sense, the most meaningful indicators for Fabric will probably appear in network activity rather than short-term price charts.

Another factor shaping the project’s trajectory is the broader market cycle. Crypto markets often move through phases where certain narratives dominate attention. We have already seen cycles focused on decentralized finance, NFTs, and modular infrastructure. The next narrative wave increasingly revolves around artificial intelligence. Fabric sits at the intersection of these themes, attempting to merge decentralized coordination with autonomous machine systems.

Whether that positioning becomes an advantage depends on execution. Many projects attempt to attach themselves to emerging technological narratives, but only a few manage to build infrastructure that actually becomes indispensable. Fabric’s success will depend less on marketing and more on whether developers see genuine value in using a decentralized coordination layer for machines.

After watching this space for years, I have learned to treat ambitious infrastructure projects with cautious curiosity. The idea of a protocol designed for machine-to-machine coordination is compelling, especially as AI systems move toward greater autonomy. At the same time, the distance between an interesting concept and a functioning global network is enormous.

Fabric Protocol represents an attempt to prepare for a future where machines are not just tools but participants in digital economies. The question is not whether that future will exist—autonomous systems are already emerging—but whether decentralized infrastructure will become the foundation that supports them.

I suspect the answer will reveal itself slowly, not through announcements or token campaigns, but through quiet signals: developers building on top of the network, machines requesting computation, and autonomous agents beginning to rely on protocols like Fabric to coordinate their decisions. If that begins to happen, the project may turn out to be less about robotics and more about redefining how intelligent systems interact with the digital world.