When I first started using AI tools every day, I was amazed at how confidently they answered almost any question I threw at them.

I assumed that confidence meant accuracy. But the more I tested them, the more I realized something strange.

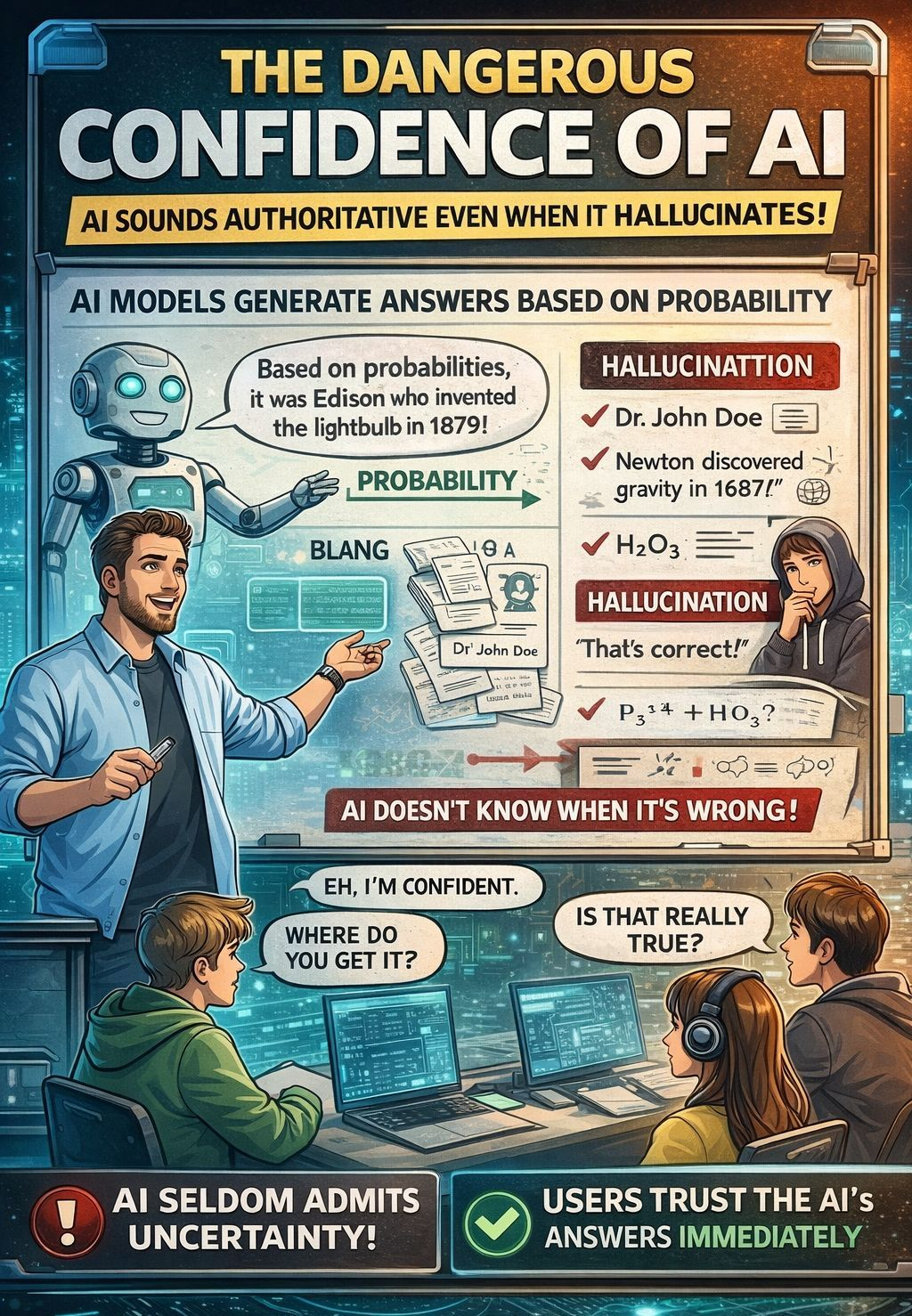

AI can sound extremely convincing even when the information is wrong.

Unlike humans who might say “I’m not sure,” AI often fills the gap with something that sounds correct.

I began noticing this especially when asking about specific facts: names of researchers, dates of discoveries, or technical explanations. Sometimes the answers were accurate, but sometimes the AI simply invented details that looked believable.

It wasn’t lying intentionally. It was just predicting the most likely answer based on patterns in its training data. But the result is the same.

A confident statement that might not actually be true.

That’s when it hit me. The real danger of AI isn’t that it lacks intelligence, but that it speaks with certainty even when the information is uncertain.

For users who trust the answer immediately, the line between fact and hallucination becomes incredibly thin.

Before AI tools, when I wanted to verify something, I would open a search engine and compare several sources.

That process forced me to think critically.

I had to read multiple articles, check the credibility of the sources, and decide what information made the most sense.

But AI has quietly changed that habit.

Now, instead of scanning ten search results, I simply ask a question and receive one clean answer instantly.

It’s fast and convenient, but it also removes the verification process that used to happen naturally when browsing different sources.

Sometimes I catch myself accepting the answer without questioning it at all. And that’s a little unsettling.

If millions of people begin relying on AI responses instead of searching and comparing sources, the internet could slowly shift from an ecosystem of information into a single stream of AI-generated answers, answers that may or may not be correct.

After seeing these problems repeatedly, I started thinking about what AI actually lacks. The issue isn’t intelligence or speed. What’s missing is a reliable way to verify whether an AI answer is actually true.

This is why the concept behind Mira Network caught my attention.

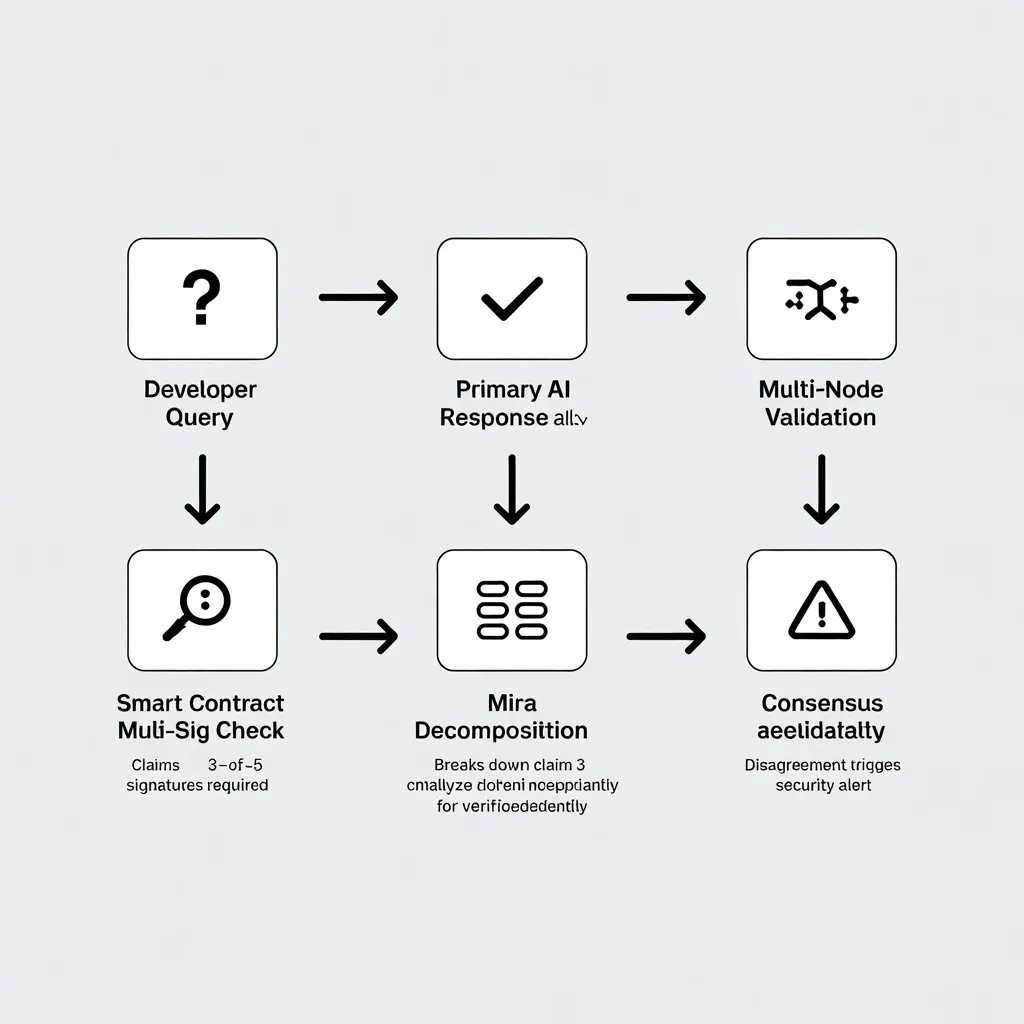

Instead of relying on a single AI model, the system uses a process called multi-model consensus, where multiple models evaluate a claim before confirming whether it’s accurate.

In other words, the AI answer isn’t just generated—it’s verified.

When I imagine the future of AI, I don’t think the winning systems will be the ones that simply produce the fastest answers.

The real winners will be the ones that can prove their answers are correct. In an internet increasingly filled with AI-generated information, verification might become the most valuable layer of all.

Let me break it down for you:

Mira does not rely on a single AI model. Multiple AI models evaluate the same claim. If several models agree, the answer becomes more reliable and less biased.

The Scenario: I asked an AI agent to check if a smart contract had a "multi-signature" requirement for emergency withdrawals.

The Hallucination: The primary AI agent confidently replied, "Yes, the contract requires 3-of-5 signatures for withdrawals."

The Mira Check: Mira decomposed this into a single claim: "Function X requires 3-of-5 signatures." Three independent validator nodes analyzed the raw code. Two nodes correctly identified that the function was actually controlled by a single private key.

The Result: Because the models disagreed, Mira failed to reach consensus and alerted the developer. The "consensus of truth" overrode the "eloquence of the hallucination," preventing a potential multimillion-dollar exploit.
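
To make the voting step concrete, here is a rough sketch of the idea, not Mira's actual implementation. The function name `consensus`, the validator names, and the rule that every validator must agree (`threshold=1.0`) are all assumptions made for illustration. The third validator's vote is also assumed, since the scenario only says what two of the nodes found.

```python
from collections import Counter
from typing import Optional


def consensus(votes: dict[str, bool], threshold: float = 1.0) -> Optional[bool]:
    """Return the agreed verdict, or None when agreement is below the threshold.

    `threshold` is the fraction of validators that must share the same verdict.
    The default of 1.0 (unanimity) is an assumption, not a documented Mira setting.
    """
    if not votes:
        return None
    tally = Counter(votes.values())
    verdict, count = tally.most_common(1)[0]
    return verdict if count / len(votes) >= threshold else None


# Hypothetical votes on the claim from the scenario above.
claim = "Function X requires 3-of-5 signatures"
votes = {
    "validator_a": False,  # found a single private key controls the function
    "validator_b": False,  # same finding
    "validator_c": True,   # assumed: agrees with the original AI answer
}

result = consensus(votes)
if result is None:
    print(f"No consensus on {claim!r}: alert the developer")
else:
    print(f"Claim {claim!r} verified as {result}")
```

With a looser threshold, for example `consensus(votes, threshold=0.66)`, the same votes would resolve to "claim is false" instead of "no consensus." Either way, the confident original answer does not pass unchecked.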

Mira can also help determine which crypto projects have mistakes in their white papers.

For instance, I saw that a DeFi token on Binance Alpha had surged more than 128% in a day. So I ask an AI assistant: "Is this new DeFi token a good investment?"

The AI reads promotional blogs and says the project has strong adoption and high TVL. But these claims have not been validated. The AI just gives you an answer that satisfies you without checking the facts.

But Mira checks on-chain data and finds that most of the liquidity comes from a few internal wallets. So I know the adoption is artificial, and I avoid investing in a risky project.
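
A check like this can be approximated with a simple concentration measure. The sketch below is only an illustration: the wallet addresses and balances are made up, the function name `top_holder_share` is hypothetical, and the 50% warning line is an arbitrary assumption rather than anything Mira publishes. It also measures concentration only; deciding whether a wallet is "internal" would need extra data.

```python
def top_holder_share(balances: dict[str, float], top_n: int = 3) -> float:
    """Fraction of total liquidity supplied by the `top_n` largest wallets."""
    total = sum(balances.values())
    if total <= 0:
        return 0.0
    largest = sorted(balances.values(), reverse=True)[:top_n]
    return sum(largest) / total


# Made-up liquidity figures (in USD) for illustration only.
liquidity = {
    "0xaaa": 450_000,
    "0xbbb": 300_000,
    "0xccc": 150_000,
    "0xddd": 60_000,
    "0xeee": 40_000,
}

share = top_holder_share(liquidity)
CONCENTRATION_LIMIT = 0.5  # assumed warning threshold

if share > CONCENTRATION_LIMIT:
    print(f"Warning: top 3 wallets supply {share:.0%} of liquidity")
else:
    print(f"Liquidity looks distributed ({share:.0%} in top 3 wallets)")
```

A high TVL number can look like adoption until you ask who is actually supplying it.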

Another example: my friend is reviewing a new protocol. She asks an AI tool whether the protocol’s smart contract is secure.

Without Mira, the AI claims the project is safe because its documentation mentions an audit.

With Mira, validator nodes verify the audit report and detect that it is outdated and does not cover the latest contract version.

My friend avoids integrating a potentially vulnerable protocol.
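
The audit check in this example comes down to two questions: does the audit cover the version that is actually deployed, and how old is it? Here is a minimal sketch under stated assumptions. The `AuditReport` type, the `audit_issues` function, the version strings, the dates, and the one-year freshness limit are all hypothetical and do not come from Mira.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AuditReport:
    audited_version: str  # contract version the auditors reviewed
    audit_date: date      # when the audit was completed


def audit_issues(
    report: AuditReport,
    deployed_version: str,
    today: date,
    max_age_days: int = 365,  # assumed freshness limit
) -> list[str]:
    """Return the reasons this audit should not be trusted as-is."""
    issues = []
    if report.audited_version != deployed_version:
        issues.append(
            f"audit covers {report.audited_version}, "
            f"but {deployed_version} is deployed"
        )
    age_days = (today - report.audit_date).days
    if age_days > max_age_days:
        issues.append(f"audit is {age_days} days old")
    return issues


# Illustrative values only.
report = AuditReport(audited_version="v1.0.0", audit_date=date(2023, 1, 15))
issues = audit_issues(report, deployed_version="v2.1.0", today=date(2024, 6, 1))

for issue in issues:
    print("Flag:", issue)
```

An answer like "the project mentions an audit" passes neither check, which is exactly the gap between sounding safe and being verified.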