Mira Network is one of the rare AI-crypto projects that start in the right place.

Not chasing scale. Not chasing speed. Not promising that more intelligence automatically means better results.

It starts with a tougher, deeper question:

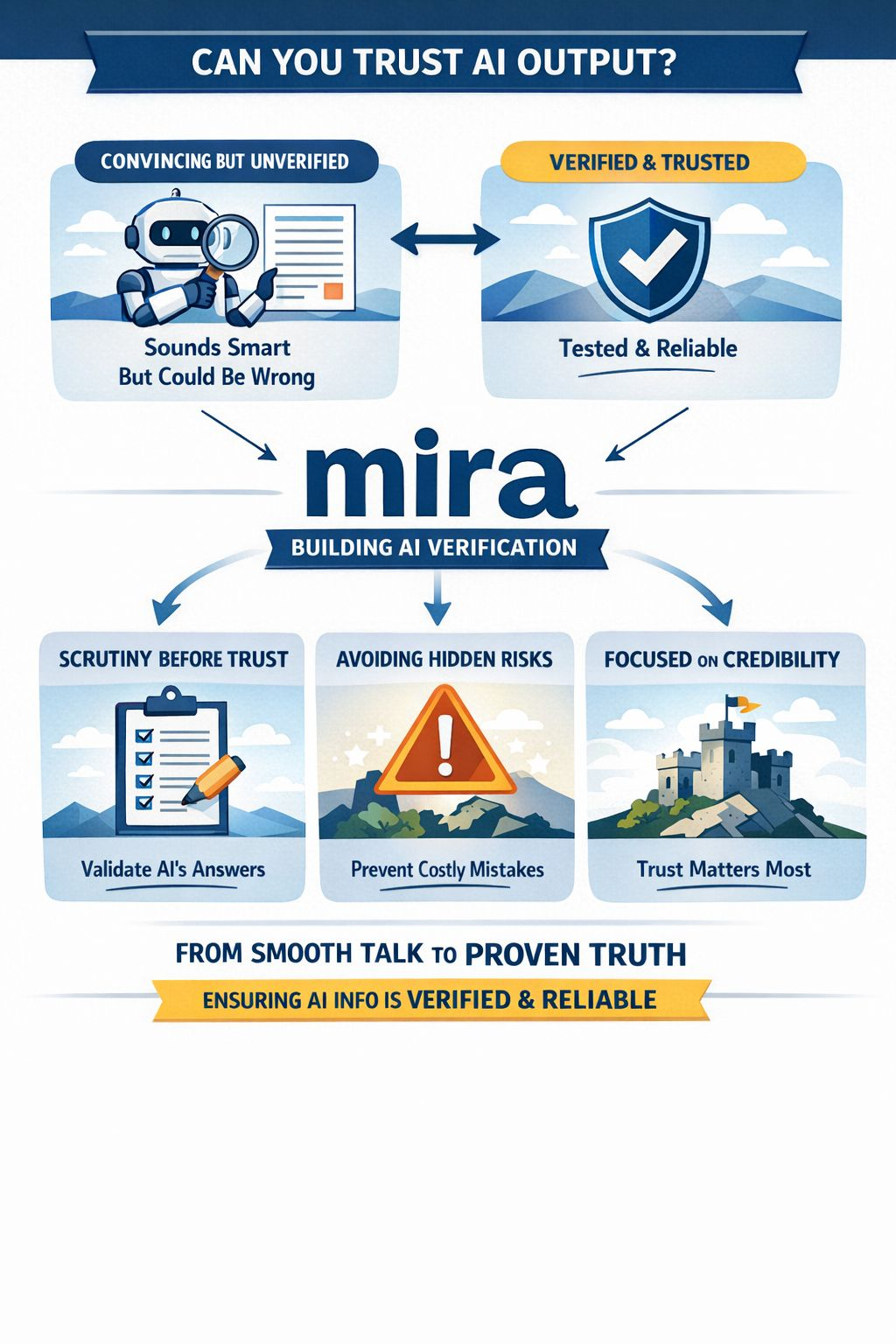

What happens when people can no longer tell the difference between an answer that merely sounds convincing and an answer that has actually earned trust?

That’s the real ground Mira is working on. And it’s more important than most of the market wants to admit. Many AI projects are still focused on output—more generation, more automation, more responsiveness, more tools stacked on top of models that are already treated as if fluency alone proves reliability.

Mira takes a different path.

It begins with the understanding that AI doesn’t become valuable simply because it can churn out language quickly. That same ability is exactly what makes it risky.

And that’s the part most projects overlook.

A polished answer isn’t automatically a reliable one. A model can sound confident, knowledgeable, and precise while quietly slipping in errors most people will never notice. Once that answer is delivered, most users don’t pause to verify it. They read it, internalize it, and act on it. In that sense, the real danger in today’s AI isn’t just that it can be wrong—it’s that it can be wrong convincingly.

That’s a serious concern.

Mira seems to grasp that better than almost anyone else.

The project isn’t focused on making AI look smarter. It’s focused on making trust in AI something that isn’t handed out too easily. That gives it a completely different tone compared to the wider AI-token scene. It cares less about showcasing machine ability and more about defining the circumstances under which machine output should actually be trusted.

It’s a narrower focus, but also a far deeper one.

It shifts the conversation away from sheer performance and toward judgment.

And that’s where Mira becomes compelling.

At its heart, the project is built around verification. Not as a cosmetic feature. Not as a final layer added for show. Verification is the very core of the model.

The concept is simple to state, but far harder to pull off: AI output shouldn’t be accepted just because a system produced it. It needs to be verified. Its claims need to be scrutinized. True confidence should come after that process, not before.

It sounds obvious.

It isn’t.

Most of today’s AI economy still acts as if stronger models will eventually fix the trust problem on their own. Better training. Better retrieval. Better tuning. Better context. Better interfaces. Sure, all of that can improve quality—but none of it addresses the core issue. Even the most advanced model can still generate a highly convincing error. It can misread information, exaggerate, compress nuance, or deliver a weak conclusion in a polished, authoritative form.

Mira seems to start from a more disciplined place: reliability isn’t just a question of how good the model is. It’s a question of validation.

That’s actually a very crypto-native idea, even if it doesn’t read that way at first. Crypto, in principle, is built on skepticism toward unearned trust. It replaces single points of authority with distributed validation. Mira takes a similar approach to AI. It’s not claiming intelligence alone is enough. It’s saying intelligence without structured verification is unstable.

In that sense, the project is less about producing AI and more about holding AI accountable.

That distinction gives it real weight.

It also makes Mira feel more connected to how people actually behave. The project doesn’t rely on the fantasy that users will automatically slow down just because AI can be flawed. They won’t. Most people are busy. Most are impatient. Most will trust whatever feels complete. That’s the pattern you see in practice: a polished answer lowers resistance. A confident tone lowers scrutiny.

Mira starts to make sense once you realize it’s built around real user habits, not around an idealized user who double-checks everything.

That realism matters.

The next stage of AI in crypto isn’t just about producing summaries or answering questions. It’s about shaping judgment. And that’s the shift most people fail to appreciate. Once AI begins guiding how users interpret proposals, evaluate markets, weigh risk, or make decisions, its mistakes stop being minor errors. They have consequences.

A flawed output is no longer merely a small embarrassment.

It becomes a liability.

And that’s exactly the point where Mira’s approach begins to feel far more robust.

The project is really asking a fundamental question: can trust in machine-generated output be treated as infrastructure instead of just an assumption? That’s a big question. It goes beyond the usual focus on AI simply producing more content and asks whether the system around that output can make trust something that isn’t easily faked. Very few projects in this space are even thinking at that level. Most are still competing on sheer capability.

Mira, by contrast, is competing on credibility.

That’s a tougher market to compete in.

It’s also a far more defensible one—if it works.

Because once verification becomes essential, it stops being a nice-to-have. It becomes plumbing. People might overlook it at first, undervalue it, or treat it as invisible, since its success often looks like nothing at all. But the layers you barely notice are usually the ones that matter most when systems grow complex.

Verification works like that. When it’s effective, flawed outputs simply fail to earn trust. An absence of failures is hard to sell, but it can be extremely valuable.

Still, none of this means Mira has a free pass.

The system introduces real friction. Verification isn’t free. It adds effort. It can slow things down. It brings in complexity that users and builders will only accept if the payoff is obvious. That’s the core challenge for the project.

It’s not about whether verification sounds important in theory. Of course it does.

The real test is whether Mira can make the value of verification tangible enough to justify the added cost.

That’s where the project will face its true trial.

If verification stays something people admire in theory but ignore in practice, Mira could end up as a strong concept with limited real-world demand. But if unverified AI output starts to feel too risky in situations where decisions carry actual consequences, the project’s reasoning becomes far more persuasive. At that point, verification stops being optional and becomes part of the baseline standard.

That’s the threshold that really matters.

I think Mira is focused on the right problem because the market is quietly moving in that direction, whether anyone admits it or not. The more AI is used to interpret rather than just generate, the faster users confront an uncomfortable truth: polished language doesn’t prove sound reasoning. A smooth answer isn’t evidence. A response that sounds complete isn’t automatically trustworthy.

It’s in that gap between appearance and reliability that most of the real risk exists today.

Mira is built right inside that gap.

That’s why I wouldn’t describe it as just another AI project riding on crypto rails. That view is too superficial. A more accurate way to see it is as an effort to formalize doubt before confidence turns into action. It’s trying to build a system where machine output isn’t trusted simply because it’s presented smoothly, but because it has gone through a process meant to test it.

That’s a far more mature goal.

It also gives the project a clearer identity than most of its peers. It’s not chasing the widest narrative. It’s carving out a narrower space: trust infrastructure for AI-generated information. A smaller lane, yes—but the lanes that endure are often the ones that start small.

Big, broad narratives grab attention. Narrow, concrete problems create endurance.

Mira is focused on a specific problem.

And it’s a real one.

If the project keeps moving in that direction, its strongest impact will likely emerge wherever AI stops being a passive tool and starts shaping how people make decisions, interpret information, and take action. That’s where verification can’t be ignored. That’s where trust has to be built into the system.

$MIRA @Mira - Trust Layer of AI #Mira