When I started spending more time reading about open robotics systems, I kept feeling that something was missing from the conversation. A lot of writing in this area is strong on ambition and weak on structure. It is easy to imagine capable machines moving across warehouses, homes, farms, labs, and industrial settings, but it is much harder to explain who verifies the work, who carries the downside when a machine underperforms, and who gets to shape the rules as those systems improve. While reading the Fabric Foundation material, I found myself less interested in the futuristic language and more interested in the underlying premise that robot development is not only a hardware problem or a model problem. It is also a coordination problem.

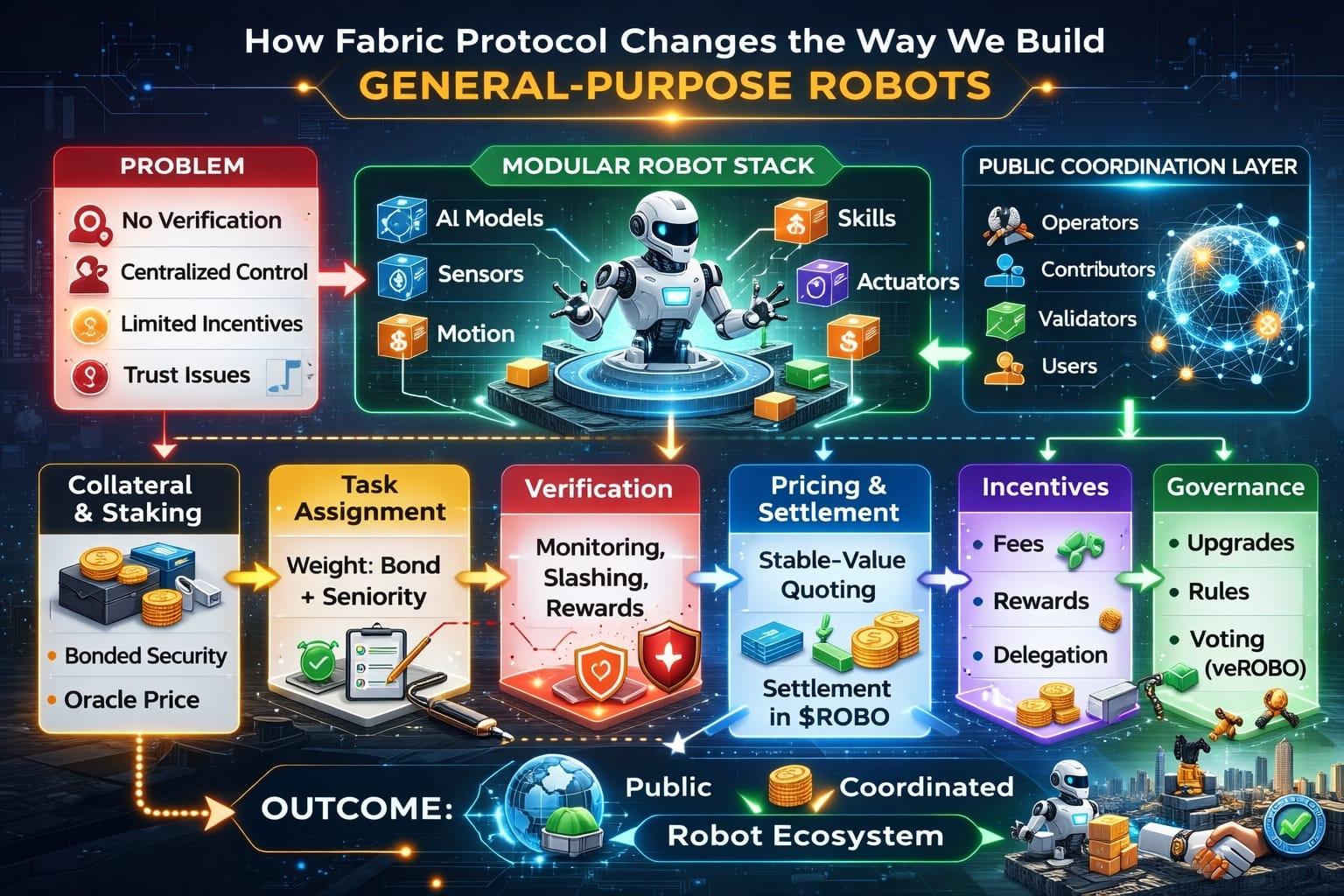

That distinction matters because general-purpose robots do not fail only when intelligence is weak. They also fail when the surrounding system is incomplete. A machine that is expected to operate across changing tasks and environments needs more than perception, planning, and control. It needs identity, task assignment, payment logic, quality tracking, upgrade pathways, accountability rules, and a credible way to measure whether participants are behaving honestly. In closed architectures, those pieces usually sit inside one company or one vertically managed platform. That can speed up iteration in the early stage, but it also concentrates oversight and narrows who gets rewarded for meaningful contributions. If the long-term objective is to build machines that can evolve across many contexts, that model starts to feel too narrow for the scale of the problem.

To me, it is similar to designing a large public transport network with excellent vehicles but no shared signaling system, no accepted inspection standard, and no reliable way to coordinate routes, pricing, and responsibility.

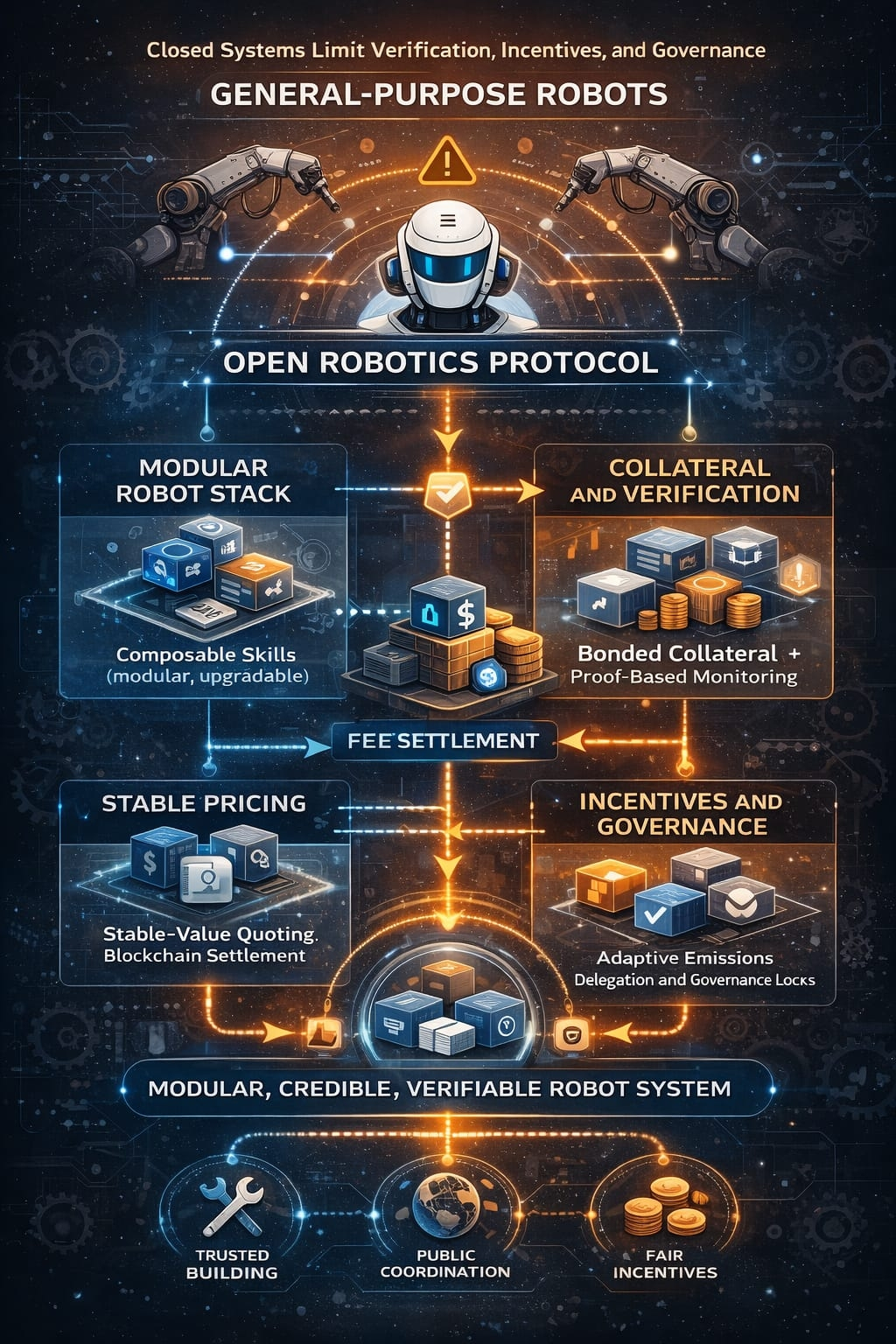

What Fabric Protocol changes is the frame through which the robot stack is organized. Instead of assuming that a general-purpose machine must come from one tightly controlled organization, the network treats the system as a public coordination layer where operators, model contributors, validators, and users interact through a ledger that records obligations and outcomes. The most important part of that approach, in my view, is modularity. The paper leans toward composable skills and layered infrastructure rather than one sealed end-to-end machine intelligence. That matters because modular systems are easier to inspect, constrain, replace, and improve. A robot that is intended for broad use should not have to be reinvented from zero every time a capability improves. It should be able to evolve in parts, with components that can be measured, rewarded, challenged, and replaced without collapsing the full stack.

At the mechanism level, the chain does not seem designed around the unrealistic idea that every physical action can be perfectly proven in a strict mathematical sense. Instead, it is built around making honest behavior economically rational and dishonest behavior expensive. Operators post a refundable base bond in the native asset, but that bond is referenced against a stable-value benchmark through oracle input so its security meaning does not drift too far with token volatility. For active work, a portion of that collateral is committed as job-specific backing, which means the system can assign tasks without forcing a new staking cycle every single time. That is a practical design choice. The whitepaper also describes selection logic shaped by bonded capacity and seniority, with weighting that can be validated on-chain through proof structures such as Merkle-based verification. That suggests the state model is doing more than tracking balances. It is maintaining live records of capacity, eligibility, active collateral, assignment history, and quality performance.
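To make the Merkle idea concrete for myself, I wrote a minimal sketch of how a published root could let anyone check one operator's selection weight without seeing the whole table. Everything here is my own assumption for illustration: the leaf encoding, the use of SHA-256, the padding rule, and the names `leaf_hash`, `build_levels`, `make_proof`, and `verify` are hypothetical and not taken from the whitepaper, which does not specify how weights are derived from bonded capacity and seniority at this level of detail.

```python
import hashlib
from typing import List, Tuple


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(operator_id: str, weight: int) -> bytes:
    # Prefix leaves with 0x00 and interior nodes with 0x01 so the two
    # kinds of hash cannot be confused with each other.
    return _h(b"\x00" + f"{operator_id}:{weight}".encode())


def _node(left: bytes, right: bytes) -> bytes:
    return _h(b"\x01" + left + right)


def _padded(level: List[bytes]) -> List[bytes]:
    # Assumed padding rule: duplicate the last hash on odd-sized levels.
    return level + [level[-1]] if len(level) % 2 else level


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """Return every tree level, from the leaves up to the single root."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        cur = _padded(levels[-1])
        levels.append([_node(cur[i], cur[i + 1]) for i in range(0, len(cur), 2)])
    return levels


def make_proof(levels: List[List[bytes]], index: int) -> List[Tuple[bytes, bool]]:
    """Collect (sibling_hash, sibling_is_left) pairs from leaf to root."""
    proof = []
    for level in levels[:-1]:
        level = _padded(level)
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one leaf and its sibling path."""
    acc = leaf
    for sibling, sibling_is_left in proof:
        acc = _node(sibling, acc) if sibling_is_left else _node(acc, sibling)
    return acc == root


# Hypothetical weight table; the numbers are made up.
weights = {"op-a": 120, "op-b": 300, "op-c": 75}
ids = sorted(weights)
levels = build_levels([leaf_hash(i, weights[i]) for i in ids])
root = levels[-1][0]
proof = make_proof(levels, ids.index("op-c"))
print(verify(leaf_hash("op-c", 75), proof, root))   # True
print(verify(leaf_hash("op-c", 750), proof, root))  # False
```

The point of the sketch is only that a claimed weight can be checked against a single committed root, which is what I take "validated on-chain" to mean here.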

I also think the pricing flow is more grounded than it might look at first glance. Service value can be negotiated in a stable reference unit so buyers and operators are not forced to reason in a volatile denominator during normal activity. But settlement still clears in the native asset through oracle-based conversion. That separation is useful because it keeps negotiation human-readable while preserving a single settlement and security layer at the protocol level. In simple terms, the person buying a service can think in stable purchasing terms, while the network still uses one asset for accounting, fees, and collateral logic. That does not eliminate market risk, but it reduces confusion and keeps pricing more legible.
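A small sketch helped me see the split between quoting and settling. It assumes the oracle reports a price as stable units per one native token, that amounts are tracked to six decimal places, and that rounding favors the operator. All three are my assumptions, and `settle_in_native` is a hypothetical name rather than anything defined in the paper.

```python
from decimal import Decimal, ROUND_UP


def settle_in_native(quote_stable: Decimal, oracle_price: Decimal) -> Decimal:
    """Convert a stable-unit quote into native tokens at the oracle price.

    oracle_price is stable units per one native token (assumed convention).
    """
    if oracle_price <= 0:
        raise ValueError("oracle price must be positive")
    # Round up so the payee is never short-changed by rounding (assumed policy).
    return (quote_stable / oracle_price).quantize(
        Decimal("0.000001"), rounding=ROUND_UP
    )


quote = Decimal("25.00")  # the buyer negotiates in stable terms
print(settle_in_native(quote, Decimal("0.50")))  # 50.000000
print(settle_in_native(quote, Decimal("0.40")))  # 62.500000
```

The quote stays at 25.00 in both cases; only the number of native tokens that clears changes with the oracle price. That is also where the residual market risk sits, since the result is only as good as the price feed at settlement time.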

The utility of the token is therefore tied to actual network function rather than abstract symbolism. Fees create transactional demand, staking secures operator behavior, work bonds back real service commitments, delegation expands usable capacity, and governance locks give holders influence over procedural decisions. The economic design described in the paper is also more adaptive than the usual fixed-emission model. Instead of relying on one rigid issuance schedule, it introduces an emission engine that responds to utilization and quality conditions, while a circuit breaker limits abrupt changes from one epoch to the next. My reading is that this is meant to avoid a familiar problem in crypto systems: if emissions are too low, useful supply never arrives when the network needs it; if emissions remain too aggressive once activity matures, dilution starts to overpower the purpose of participation. Here, the model tries to negotiate that balance dynamically instead of pretending one schedule will suit every stage.
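Here is how I picture an emission update with a circuit breaker, as a rough sketch only. The direction of the response (raise emissions below a utilization target, lower them above it), the rule that poor quality blocks increases, and every parameter value are my assumptions. The whitepaper's actual formula may differ, and `next_emission` is a hypothetical name.

```python
def next_emission(
    prev: float,
    utilization: float,
    quality: float,
    target_utilization: float = 0.7,  # assumed target
    quality_floor: float = 0.9,       # assumed quality threshold
    gain: float = 0.5,                # assumed responsiveness
    max_step: float = 0.05,           # circuit breaker: at most 5% per epoch
) -> float:
    """Return the next epoch's emission given the previous one."""
    # Assumed rule: under-utilized networks emit more, saturated ones less.
    adjust = gain * (target_utilization - utilization)
    # Assumed rule: never increase emissions while quality is below the floor.
    if quality < quality_floor:
        adjust = min(adjust, 0.0)
    # Circuit breaker: clamp the per-epoch change to +/- max_step.
    step = max(-max_step, min(max_step, adjust))
    return prev * (1.0 + step)


emission = 1000.0
for epoch, (u, q) in enumerate([(0.2, 0.95), (0.5, 0.95), (0.9, 0.95)]):
    emission = next_emission(emission, u, q)
    print(epoch, round(emission, 2))
```

Whatever the real formula is, the clamp is the part I care about: a single noisy epoch cannot swing issuance by more than the configured step.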

Delegation and governance are handled in a way that feels more operational than theatrical. Delegation is not framed as passive yield for its own sake. It functions more like directed capacity support for devices or service pools, with slash exposure attached if operators fail, cheat, or degrade below acceptable thresholds. Governance, through longer-duration locking such as veROBO, appears focused on matters that directly affect system discipline: parameters, slashing conditions, quality thresholds, upgrades, and procedural rules. I prefer that narrower scope because robotics coordination does not benefit from vague governance promises. It benefits from clear authority over concrete risk controls.
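To check my understanding of slash exposure under delegation, I sketched the simplest version I could think of: delegated stake adds to an operator's usable capacity, and a slash hits the operator and every delegator by the same fraction. The pro-rata rule, the basis-point units, and the names `usable_capacity` and `apply_slash` are assumptions of mine, not details confirmed by the paper.

```python
from typing import Dict, Tuple

BPS = 10_000  # basis points in 100%


def usable_capacity(operator_stake: int, delegations: Dict[str, int]) -> int:
    """Total stake backing the operator, in smallest token units."""
    return operator_stake + sum(delegations.values())


def apply_slash(
    operator_stake: int, delegations: Dict[str, int], slash_bps: int
) -> Tuple[int, Dict[str, int], int]:
    """Slash operator and delegators by the same fraction (assumed rule).

    Returns (new operator stake, new delegations, total amount slashed).
    """
    if not 0 <= slash_bps <= BPS:
        raise ValueError("slash_bps must be between 0 and 10000")

    def cut(amount: int) -> int:
        return amount * slash_bps // BPS

    total = cut(operator_stake) + sum(cut(v) for v in delegations.values())
    remaining = {who: v - cut(v) for who, v in delegations.items()}
    return operator_stake - cut(operator_stake), remaining, total


stake, delegated = 1_000_000, {"alice": 500_000, "bob": 250_000}
print(usable_capacity(stake, delegated))      # 1750000
print(apply_slash(stake, delegated, 1_000))   # a 10% slash
```

A real design could just as well slash the operator first and delegators only for the remainder; the sketch is there to show that delegated capacity carries risk, not to settle which ordering the protocol uses.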

The verification layer is where the design feels most realistic to me. The document does not claim that every robot action can be transformed into a neat cryptographic certainty. Instead, it relies on challenge mechanisms, validator observation, heartbeat checks, quality thresholds, and differentiated penalties. Fraud, downtime, and degraded service are treated as different categories of failure, which is exactly how they should be treated. In physical systems, full proof is often too expensive or simply impossible, so the more honest target is to make manipulation detectable, punishable, and economically unattractive. That is where bonded exposure, validator bounties, suspension rules, and targeted slashing come in. It is not a perfect answer, but it is a more mature answer than pretending real-world machine work can always be reduced to clean on-chain certainty.
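The idea of differentiated penalties is easy to sketch. In the example below, the thresholds, slash percentages, bounty share, and suspension rules are all placeholder values I made up, and `classify` and `penalize` are hypothetical names. The only thing carried over from the document is the three-way split between fraud, downtime, and degraded service.

```python
from enum import Enum
from typing import NamedTuple, Optional


class Failure(Enum):
    FRAUD = "fraud"
    DOWNTIME = "downtime"
    DEGRADED = "degraded"


class Penalty(NamedTuple):
    slashed: int           # amount removed from the job bond
    validator_bounty: int  # share of the slash paid to the challenger
    suspended: bool


# Placeholder parameters, in basis points of the job bond.
SLASH_BPS = {Failure.FRAUD: 10_000, Failure.DOWNTIME: 500, Failure.DEGRADED: 100}
BOUNTY_BPS = 2_000
SUSPENDS = {Failure.FRAUD, Failure.DOWNTIME}


def classify(
    fraud_proven: bool,
    missed_heartbeats: int,
    quality: float,
    max_missed: int = 3,
    quality_floor: float = 0.9,
) -> Optional[Failure]:
    """Map observations to the most severe applicable failure, if any."""
    if fraud_proven:
        return Failure.FRAUD
    if missed_heartbeats > max_missed:
        return Failure.DOWNTIME
    if quality < quality_floor:
        return Failure.DEGRADED
    return None


def penalize(job_bond: int, failure: Failure) -> Penalty:
    slashed = job_bond * SLASH_BPS[failure] // 10_000
    # Assumed: only a successful fraud challenge pays a bounty.
    bounty = slashed * BOUNTY_BPS // 10_000 if failure is Failure.FRAUD else 0
    return Penalty(slashed, bounty, failure in SUSPENDS)


print(penalize(10_000, Failure.FRAUD))
print(penalize(10_000, Failure.DEGRADED))
```

Nothing here proves that a robot did its job. It only sets different prices on different kinds of failure, which is the economic-deterrence framing the design relies on.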

Another part I found thoughtful is the reward model. The paper outlines a graph-based reward layer that begins by weighting activity more heavily during the bootstrap phase and then gradually shifts toward revenue weighting as utilization increases. That transition matters because young ecosystems often need to recognize early contribution before demand is fully formed, while mature ecosystems should stop rewarding activity that does not create real value. By blending verified activity, network growth, and revenue over time, the chain tries to move from early coordination into durable economic discipline without confusing those two stages.
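The shift from activity weighting to revenue weighting can be sketched as a simple blend. This is my own simplification: it uses a linear ramp between two utilization levels, ignores the graph structure and the network-growth term entirely, and the names `activity_weight` and `contribution_score` plus the 0.2 and 0.8 breakpoints are hypothetical.

```python
def activity_weight(utilization: float, start: float = 0.2, end: float = 0.8) -> float:
    """Weight on verified activity: 1.0 at or below start, 0.0 at or above end."""
    if utilization <= start:
        return 1.0
    if utilization >= end:
        return 0.0
    return (end - utilization) / (end - start)


def contribution_score(
    activity_share: float, revenue_share: float, utilization: float
) -> float:
    """Blend a participant's activity and revenue shares by network maturity."""
    w = activity_weight(utilization)
    return w * activity_share + (1.0 - w) * revenue_share


# A participant with lots of activity but little revenue.
for u in (0.1, 0.5, 0.9):
    print(u, round(contribution_score(0.6, 0.2, u), 3))
```

The same participant scores well while the network is young and progressively worse as utilization rises, unless the activity starts turning into revenue.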

What I am unsure about is whether the design will remain coherent once real hardware diversity, adversarial behavior, and regulatory pressure intensify. The coordination logic is thoughtful, but real deployment will test whether oracle assumptions, validator measurement, modular skill composition, and enforcement rules can hold up outside a document. One limitation worth stating plainly is that the whitepaper explains the economic and verification architecture in more detail than the low-level implementation of the underlying chain, so some consensus and execution specifics still seem intentionally open. Even with that limit, I think the central idea remains important. This network does not treat general-purpose robots as products that can be built first and governed later. It treats them as systems that need accountability, verifiable contribution, and shared coordination from the beginning.
