Most conversations around AI are obsessed with improvement.

Smarter models.

Faster responses.

More data, more parameters, better training.

It’s the obvious direction.

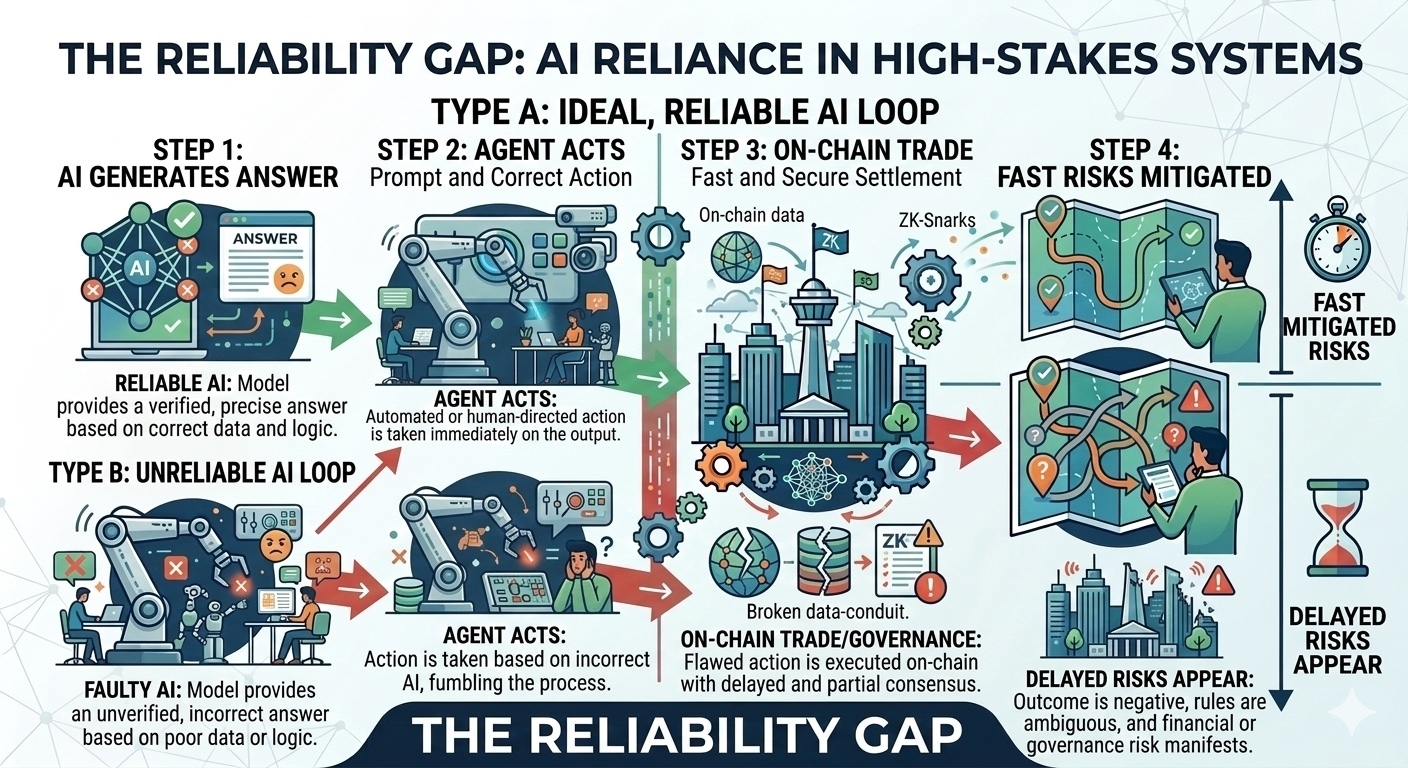

But once AI starts operating inside financial systems, the question changes. The challenge is no longer just intelligence. It becomes reliability.

Because when AI begins executing trades, interpreting DAO governance proposals, or guiding autonomous agents managing DeFi strategies, its outputs stop being suggestions.

They become actions.

And actions based on unverified information create a type of risk the ecosystem is only beginning to understand.

This is the problem Mira Network is trying to address.

Right now, most AI systems operate like black boxes. You ask a question, the model produces an answer, and you decide whether you trust it. That works in research environments or casual use cases.

It becomes dangerous when those outputs are connected directly to capital or governance.

A single incorrect interpretation can influence a vote.

A flawed analysis can trigger a trade.

A hallucinated data point can move real funds.

Smarter models reduce mistakes, but they do not eliminate them. Hallucinations and bias remain structural limitations of probabilistic systems.

What’s missing is not intelligence.

It’s verification.

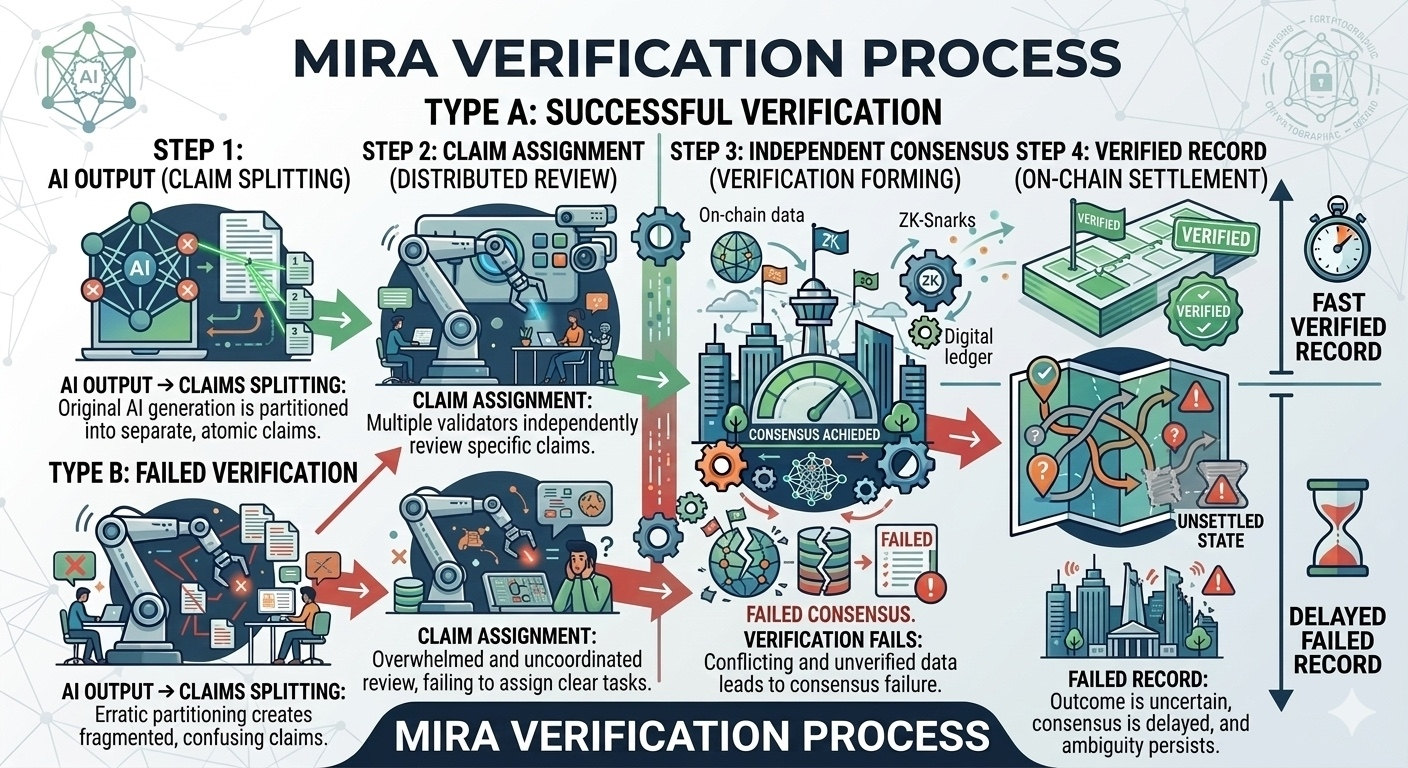

Mira approaches the problem from a different direction.

Instead of relying on a single model to produce the correct answer, the protocol separates the process into two parts: generation and verification.

An AI model generates an output. That output is then broken into smaller claims. Each claim is distributed across a network of independent validators, and each validator evaluates it individually.

These validators can include different AI models or hybrid participants.

The important detail is that they operate independently. Each validator evaluates claims without seeing how the others respond, which makes it harder for coordination or one participant's bias to sway the result.

Once enough validators have examined the claims, consensus forms around which ones are valid.

The verified results are then recorded on-chain, creating a transparent and auditable record of how the final output was validated.
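The flow described above (split an output into claims, collect independent verdicts, accept the claims that reach consensus) can be sketched in a few lines of Python. This is an illustration only, not Mira's actual protocol: the sentence-based claim splitter, the `Validator` type, the `verify_output` function, and the two-thirds `quorum` are all assumptions made for the example, and the on-chain recording step is left out.

```python
from typing import Callable, Dict, List

# Hypothetical type: a validator takes one claim and returns True if it judges it valid.
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive splitter: treat each sentence as one claim (an assumption for illustration)."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str,
    validators: List[Validator],
    quorum: float = 2 / 3,  # assumed threshold, not taken from the article
) -> Dict[str, bool]:
    """Return, for each claim, whether the share of 'valid' votes reaches the quorum."""
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        # Each validator sees only the claim, never the other validators' votes.
        votes = [validator(claim) for validator in validators]
        results[claim] = sum(votes) / len(votes) >= quorum
    return results


# Usage: three toy validators, two of which reject any claim that mentions "pool".
validators = [
    lambda claim: True,
    lambda claim: "pool" not in claim,
    lambda claim: "pool" not in claim,
]
report = verify_output("ETH is an asset. The pool holds 5 tokens", validators)
```

In this toy run the first claim passes with three of three votes, while the second gets only one of three and is rejected. A real network would replace the lambdas with independent models and would need a far more careful way of extracting claims.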

The economic layer strengthens this system.

Validators must stake $MIRA to participate in the verification process. Accurate validation earns rewards, while incorrect or dishonest behavior results in penalties. This creates an incentive structure where reliability becomes economically enforced rather than assumed.
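To make the incentive logic concrete, here is a minimal sketch of how stake could be adjusted after a claim is settled. The article does not give reward sizes or penalty rates, so the `reward` amount, the `slash_bps` rate, and the `ValidatorAccount` and `settle` names are all hypothetical, and the real protocol may work differently.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # staked tokens in whole units (illustrative)


def settle(
    accounts: List[ValidatorAccount],
    votes: Dict[str, bool],
    consensus: bool,
    reward: int = 1,        # assumed flat reward for agreeing with consensus
    slash_bps: int = 1000,  # assumed penalty: 1000 basis points = 10% of stake
) -> None:
    """Reward validators whose vote matched consensus and slash the rest, in place."""
    for account in accounts:
        if votes[account.name] == consensus:
            account.stake += reward
        else:
            account.stake -= account.stake * slash_bps // 10_000


# Usage: two validators with equal stake disagree on a claim that settles as valid.
alice = ValidatorAccount("alice", 100)
bob = ValidatorAccount("bob", 100)
settle([alice, bob], votes={"alice": True, "bob": False}, consensus=True)
```

After settlement, `alice` holds 101 and `bob` holds 90. Measuring accuracy as agreement with consensus is itself a simplification chosen for this sketch.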

Instead of trusting a single model or centralized authority, the network relies on distributed verification supported by incentives.

This approach becomes increasingly relevant as AI agents gain more autonomy within Web3.

Agents managing liquidity pools.

Agents executing arbitrage strategies.

Agents interpreting governance proposals in real time.

As these systems begin interacting directly with capital, the cost of incorrect outputs increases dramatically.

Mira’s approach acknowledges a simple reality: intelligence alone is not enough to build trustworthy autonomous systems.

Verification must exist alongside it.

If AI is going to operate inside financial infrastructure, its outputs need more than confidence.

They need proof.