I started thinking about robotics networks from the wrong angle.

Most conversations focus on the robots themselves. The machines. The automation. The idea that agents could one day execute tasks, coordinate work, and transact without human supervision.

But the more I read about what Fabric Foundation is building around ROBO, the more something else stood out.

Not robots.

Verification.

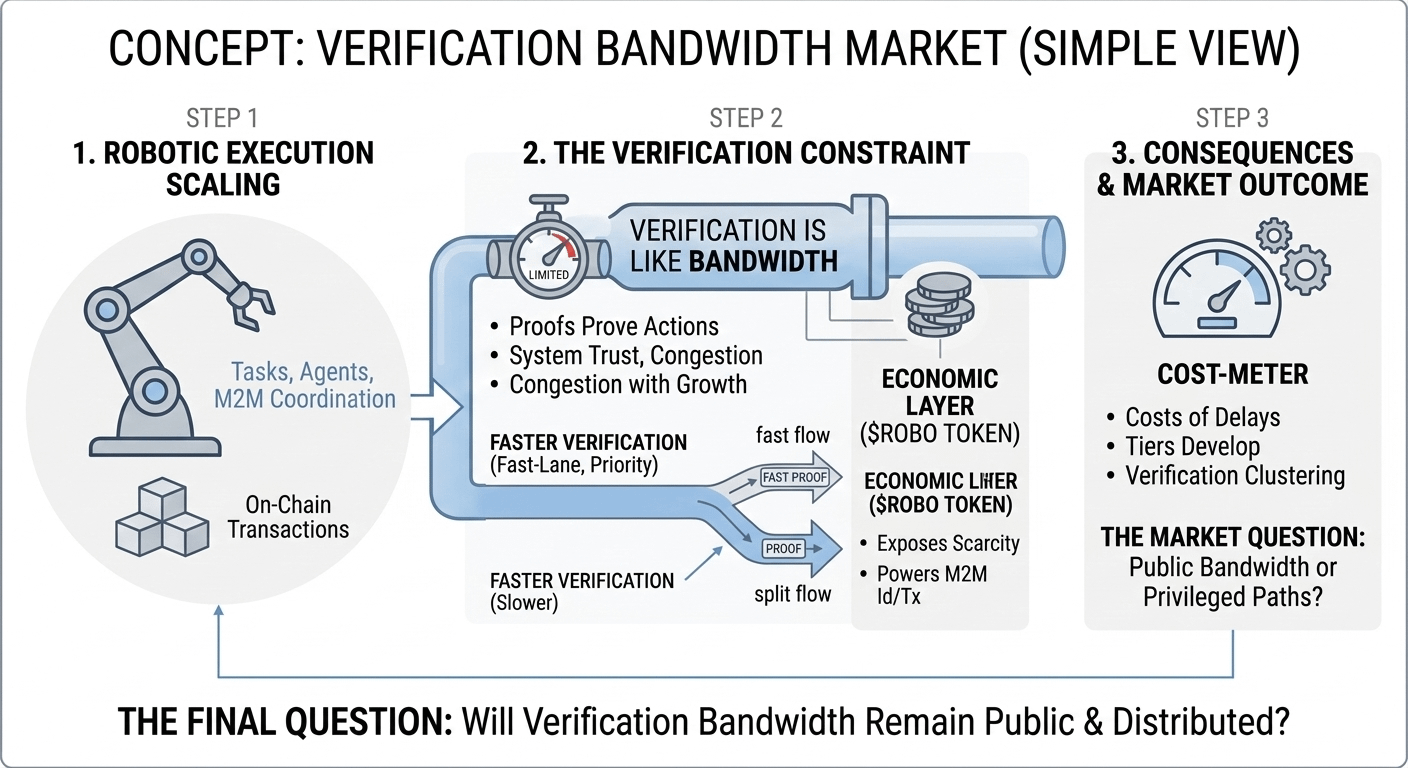

Any system that coordinates machines at scale eventually runs into the same constraint: proving that actions actually happened. Execution is easy to imagine. Verification is what determines whether the system can trust itself.

Fabric frames ROBO as the economic layer for a network where machines, services, and autonomous agents can interact through wallets, identities, and on-chain transactions.

In that world, every robotic action leaves a trace. A payment. A task completion. A state update.

And every trace needs verification.

Verification sounds infinite when we talk about blockchains in theory. In practice it behaves more like bandwidth: only so many proofs can be confirmed in a given window of time. When usage grows, the network starts deciding which proofs close quickly and which ones wait.

That is where a market quietly appears.
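To make that concrete for myself, I sketched a toy model. It is not Fabric's mechanism and nothing here comes from ROBO documentation. The function name `settle_rounds`, the fee field, and the fixed per-round capacity are all assumptions I made up for illustration. The only idea it encodes is that when capacity per round is finite and proofs are ordered by what they pay, some proofs wait.

```python
import heapq

def settle_rounds(proofs, capacity_per_round):
    """Toy model (hypothetical, not Fabric's design).

    proofs: list of (task_id, fee, submitted_round) tuples.
    Returns a dict mapping task_id -> round in which the proof closed.
    """
    if capacity_per_round < 1:
        raise ValueError("capacity_per_round must be at least 1")

    arrivals = sorted(proofs, key=lambda p: p[2])  # order by arrival round
    waiting = []   # min-heap keyed on (-fee, submitted_round, task_id)
    closed = {}
    round_no = 0
    i = 0

    while i < len(arrivals) or waiting:
        # Admit every proof that has been submitted by this round.
        while i < len(arrivals) and arrivals[i][2] <= round_no:
            task_id, fee, submitted = arrivals[i]
            heapq.heappush(waiting, (-fee, submitted, task_id))
            i += 1

        # Close at most `capacity_per_round` proofs, highest fee first.
        for _ in range(min(capacity_per_round, len(waiting))):
            _, _, task_id = heapq.heappop(waiting)
            closed[task_id] = round_no

        round_no += 1

    return closed


proofs = [
    ("a", 1, 0),
    ("b", 5, 0),
    ("c", 3, 0),
    ("d", 1, 0),
    ("e", 9, 1),  # arrives one round later but pays the most
]
result = settle_rounds(proofs, capacity_per_round=2)
print(result)
```

With two slots per round, the late but high-paying proof `e` closes ahead of `d`, which was submitted earlier and ends up waiting until the third round. Nothing failed. The queue simply has an order, and the order has a price.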

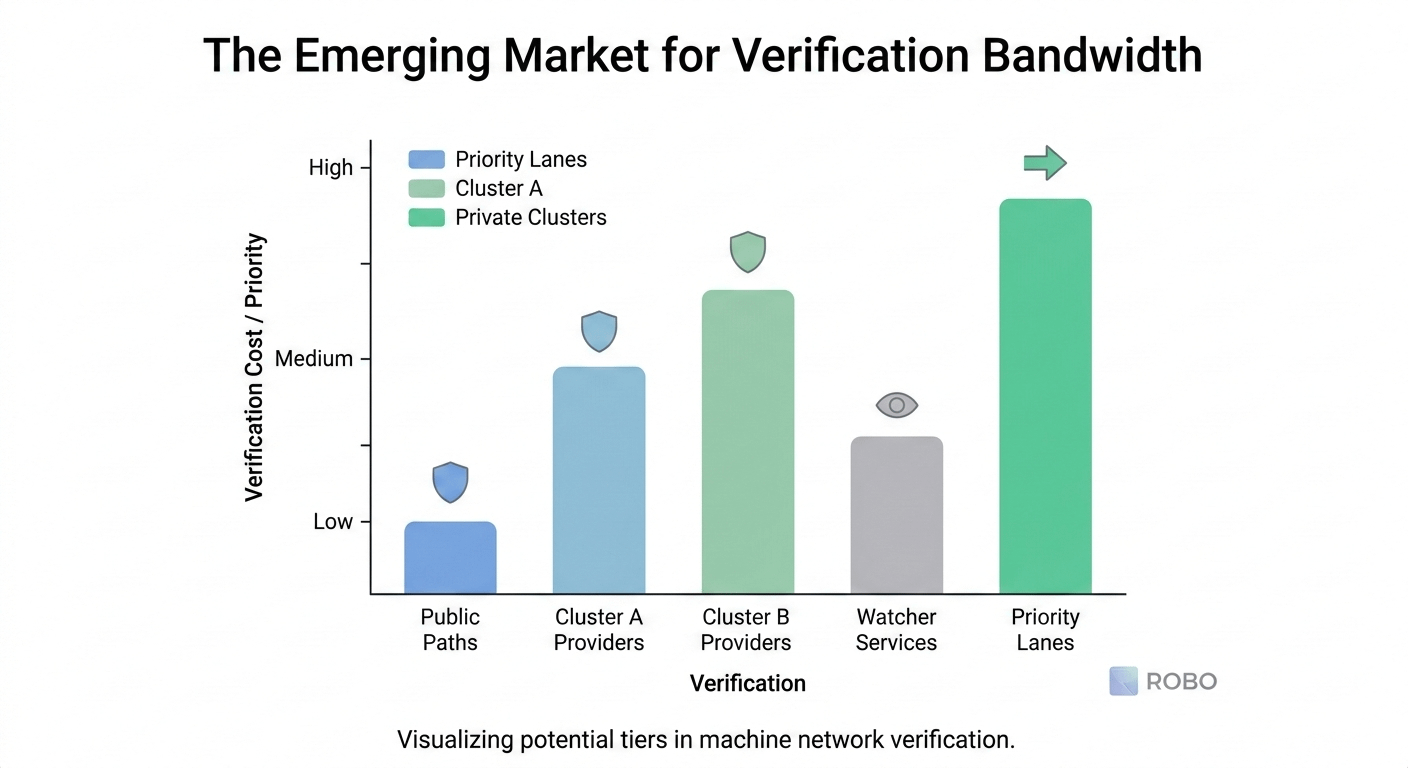

Once verification has limits, the system begins to form lanes.

Some infrastructure becomes faster to confirm actions. Some verification paths become more reliable. Developers start routing through the same providers because they close tasks consistently during busy periods.

None of this looks dramatic from the outside.

It looks like reliability engineering.

But it slowly turns verification into a service level rather than a guarantee.

On a network built for machine coordination, that matters more than most people realize.

Robots and agents do not just execute tasks. They depend on confirmation before the next step begins. A delayed verification step can stall downstream actions, force systems to wait, or push operators to build extra guard layers.

This is where the real cost appears.

Watcher services. Retry logic. Confirmation windows before acting. Infrastructure designed not for execution, but for waiting until the system agrees something happened.
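Here is roughly what that waiting layer tends to look like, as a minimal sketch. Everything in it is hypothetical: `wait_for_confirmation`, `ConfirmationTimeout`, and the `is_confirmed` callback are names I invented, and the callback stands in for whatever query an operator would actually run against the network. It polls with backoff inside a fixed confirmation window and gives up when the window closes.

```python
import time

class ConfirmationTimeout(Exception):
    """Raised when a task is not confirmed inside the window."""

def wait_for_confirmation(
    is_confirmed,          # hypothetical callback: task_id -> bool
    task_id,
    *,
    window_s=30.0,         # total time we are willing to wait
    poll_s=1.0,            # first delay between checks
    backoff=2.0,           # multiplier applied to the delay after each miss
    max_poll_s=8.0,        # cap on the delay between checks
    sleep=time.sleep,      # injectable so the loop can be tested
    clock=time.monotonic,  # injectable for the same reason
):
    """Poll until the task is confirmed; return the number of checks made."""
    deadline = clock() + window_s
    delay = poll_s
    attempts = 0
    while True:
        attempts += 1
        if is_confirmed(task_id):
            return attempts
        remaining = deadline - clock()
        if remaining <= 0:
            raise ConfirmationTimeout(
                f"{task_id} not confirmed within {window_s}s "
                f"after {attempts} checks"
            )
        # Never sleep past the end of the window.
        sleep(min(delay, remaining))
        delay = min(delay * backoff, max_poll_s)
```

The downstream action only runs after this returns, which is the point: the robot's next step is gated on the verification layer, and every second spent in this loop is a cost the operator pays for slow confirmation.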

The interesting thing about ROBO is that it places an economic layer around these processes. The token is designed to power identity operations, machine transactions, and coordination activities across the Fabric network.

But tokens don’t eliminate scarcity.

They expose it.

If the verification layer remains open and distributed, ROBO could support a wide ecosystem of machines and services interacting without relying on a privileged infrastructure path.

If verification begins clustering around a few providers, the system still works. It just quietly develops tiers.

The network keeps running.

But the clean path for proving actions starts to belong to whoever can supply the fastest verification.

So the question I keep coming back to is not whether machines will transact on-chain.

It is whether verification bandwidth stays public when they do.

Because once machines begin operating at scale, the most valuable resource in the system may not be computation or automation.

It may simply be the capacity to prove that something actually happened.