I’m waiting through another slow night in the #market. I’m watching charts move, but the real signals are in the infrastructure dashboards. I’m looking at the same pattern that shows up every cycle when systems get stressed. I’ve seen enough outages to know the problem usually starts far away from consensus. I focus on the pipes, because when the pipes clog, the screen starts lying.

Fabric Protocol sits in the part of the stack most people only notice when something breaks. It’s an open network backed by the Fabric Foundation that tries to coordinate robots, data, and computation through a public ledger. The key idea is simple but heavy: robots and agents shouldn’t just run tasks somewhere in the dark. Their work should be provable through verifiable computing, and the coordination around that work should live on a shared system that anyone can audit.

That sounds abstract until the operational reality shows up.

Most systems start clean. Roles are separated at the beginning. There are nodes that execute workloads, systems that ingest data, services that answer queries, and infrastructure that records the canonical history. Over time convenience starts winning small arguments. Operators combine layers. Query endpoints sit on top of the same machines doing heavy processing. Indexers and storage share disks with execution workloads. The system becomes faster to deploy but harder to isolate.

Hidden coupling forms quietly.

When pressure hits, that coupling becomes visible. The first domino almost always falls at the edge. Public endpoints take a traffic spike and suddenly every client is hammering the same gateway. Caches start disagreeing about state because responses arrive out of order. Wallets, bots, and services assume something failed and begin retrying. Those retries turn into a storm.
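One common way clients avoid feeding that storm is capped exponential backoff with random jitter, so retries spread out instead of landing on the gateway in lockstep. The sketch below is a generic illustration, not anything Fabric Protocol specifies; the function name `backoff_delays` and its `base` and `cap` parameters are my own assumptions.

```python
import random


def backoff_delays(attempts, base=0.5, cap=30.0, rng=None):
    """Return sleep durations (seconds) for successive retries.

    Uses capped exponential backoff with "full jitter": each delay is drawn
    uniformly from [0, min(cap, base * 2**attempt)].
    Hypothetical helper for illustration only.
    """
    if rng is None:
        rng = random.Random()
    delays = []
    for attempt in range(attempts):
        # The ceiling doubles on every attempt until it hits the cap.
        ceiling = min(cap, base * (2 ** attempt))
        # Randomizing inside the window keeps clients from retrying in sync.
        delays.append(rng.uniform(0.0, ceiling))
    return delays
```

A client would sleep for each delay in turn between attempts and give up once the list is exhausted. The jitter matters more than the exact constants: without it, every client that failed at the same moment retries at the same moment.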

Now disk pressure builds. Queues grow faster than they drain. Indexers fall behind because they cannot write state quickly enough. The chain itself might still be producing blocks normally, but the surface layer can’t keep up. Confirmations start to feel stuck. Balances look wrong. Transactions appear missing even though they are sitting in the ledger just fine.
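The gap between "missing" and "not indexed yet" can be made explicit at the surface layer. Here is a minimal sketch, assuming an indexer that can report both the chain height it has seen and the height it has finished indexing; `IndexerStatus`, `classify_lookup`, and the status strings are hypothetical names, not part of any real API.

```python
from dataclasses import dataclass


@dataclass
class IndexerStatus:
    chain_height: int    # latest block the node knows about (assumed available)
    indexed_height: int  # latest block the indexer has fully processed

    @property
    def lag(self):
        """Number of blocks the indexer is behind the chain head."""
        return max(0, self.chain_height - self.indexed_height)


def classify_lookup(found_in_index, status, max_lag=0):
    """Classify a transaction lookup without overstating what the index knows."""
    if found_in_index:
        return "confirmed"
    if status.lag > max_lag:
        # The index is behind, so a miss proves nothing about the ledger.
        return "unknown_indexer_behind"
    return "not_found"
```

Returning a distinct "indexer behind" status lets a wallet show "still catching up" instead of implying the transaction vanished, which also gives clients less reason to retry blindly.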

For the people using the system, trust cracks in seconds. Traders see stuck confirmations. Developers think their transaction disappeared. The underlying consensus might be healthy, but the infrastructure exposing that truth is struggling to breathe.

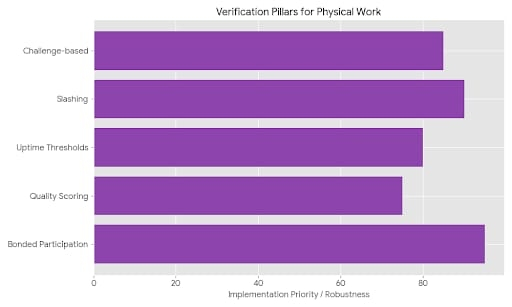

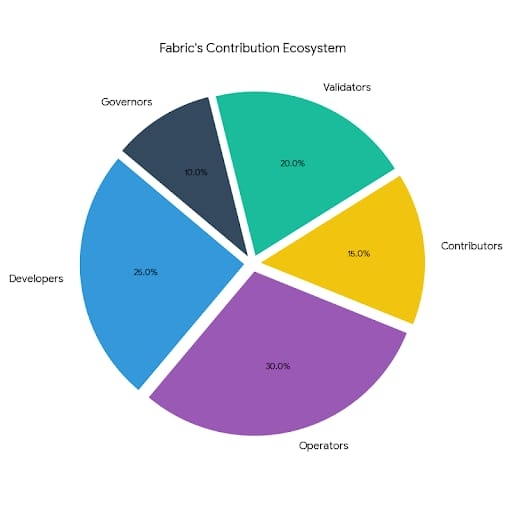

Fabric Protocol tries to reduce that blast radius by drawing harder boundaries between roles. The ledger coordinates activity and records outcomes, but it doesn’t try to swallow every operational responsibility. Execution nodes run robotic tasks. Verifiable computing proves the results of those tasks. Data pipelines bring information into the system. Governance decides how those resources are allocated.

Each part has a narrower job.

That separation matters because stress can stay contained. If compute nodes get overloaded by robotic workloads, verification still confirms whether outputs are valid. If data ingestion slows down, the coordination layer continues to record what the network is doing. The system bends instead of collapsing across every layer at once.

Scaling then becomes less chaotic. Data ingestion can grow through dedicated pipelines. Query layers can add caching and load balancing to absorb spikes in demand. Rate limits and abuse filters can slow automated floods before they punch holes in the system. Ingest and query paths can split so analytics traffic doesn’t suffocate execution workloads. Storage and indexing strategies can let lagging nodes catch up without freezing everything.
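Rate limiting at the query layer is often done with a token bucket. The sketch below is a generic version under my own assumptions (the `TokenBucket` class, its parameters, and the caller-supplied clock are illustrative, not something the protocol defines).

```python
class TokenBucket:
    """Token-bucket limiter: allows bursts up to `capacity`, then `rate` requests/second."""

    def __init__(self, rate, capacity, now=0.0):
        self.rate = rate          # tokens added per second
        self.capacity = capacity  # maximum burst size
        self.tokens = capacity    # start with a full bucket
        self.last = now           # timestamp of the last refill

    def allow(self, now, cost=1.0):
        """Return True if a request costing `cost` tokens may proceed at time `now`."""
        if now > self.last:
            # Refill in proportion to elapsed time, never above capacity.
            self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
            self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

In practice each client key or endpoint would get its own bucket, and `now` would come from a monotonic clock such as `time.monotonic()`. Passing the time in explicitly keeps the logic easy to test.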

But none of this makes the hard problems disappear.

Access layers become a place where trust has to be managed carefully. Whichever endpoint developers rely on most ends up quietly shaping how fast truth reaches the screen. Data providers need to stay consistent across multiple operators, or small differences start creeping into results. Latency becomes its own argument when different endpoints reveal the same information at different speeds.
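One way a client can notice operators drifting apart is to ask several of them the same question and accept an answer only when enough agree. This is a rough sketch under my own assumptions: `quorum_value` is a hypothetical helper, and the responses are assumed to be plain hashable values (for example, a balance or block height) already collected from each operator.

```python
from collections import Counter


def quorum_value(responses, min_agree):
    """Return the value reported by at least `min_agree` operators, else None.

    `responses` maps an operator name to the value it returned.
    """
    if not responses:
        return None
    # most_common(1) yields the single most frequent value and its count.
    value, count = Counter(responses.values()).most_common(1)[0]
    return value if count >= min_agree else None
```

For example, `quorum_value({"op_a": 100, "op_b": 100, "op_c": 99}, min_agree=2)` returns `100`, while two operators that disagree with `min_agree=2` yield `None`. The cost is extra latency and load, which is exactly the tension with defaulting to the single fastest endpoint.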

There is also a gravitational pull toward convenience. Developers often default to the fastest endpoint available. Over time that can centralize traffic around a few operators even if the protocol itself stays open. Balancing operator diversity with simple developer experience becomes a constant tension.

Fabric Protocol doesn’t pretend those tradeoffs vanish. What it does is introduce discipline in how the system is structured. Execution proves its work. Data pipelines feed the network without owning it. The ledger coordinates without absorbing every operational task.

The point isn’t perfection. The point is containment.

Because real infrastructure eventually gets stressed. And when that moment arrives, the systems that survive are usually the ones that drew their boundaries early and refused to let one part choke the rest.