Been staring at the same proof record for twenty minutes.

Not because something broke. Because of what's sitting inside it.

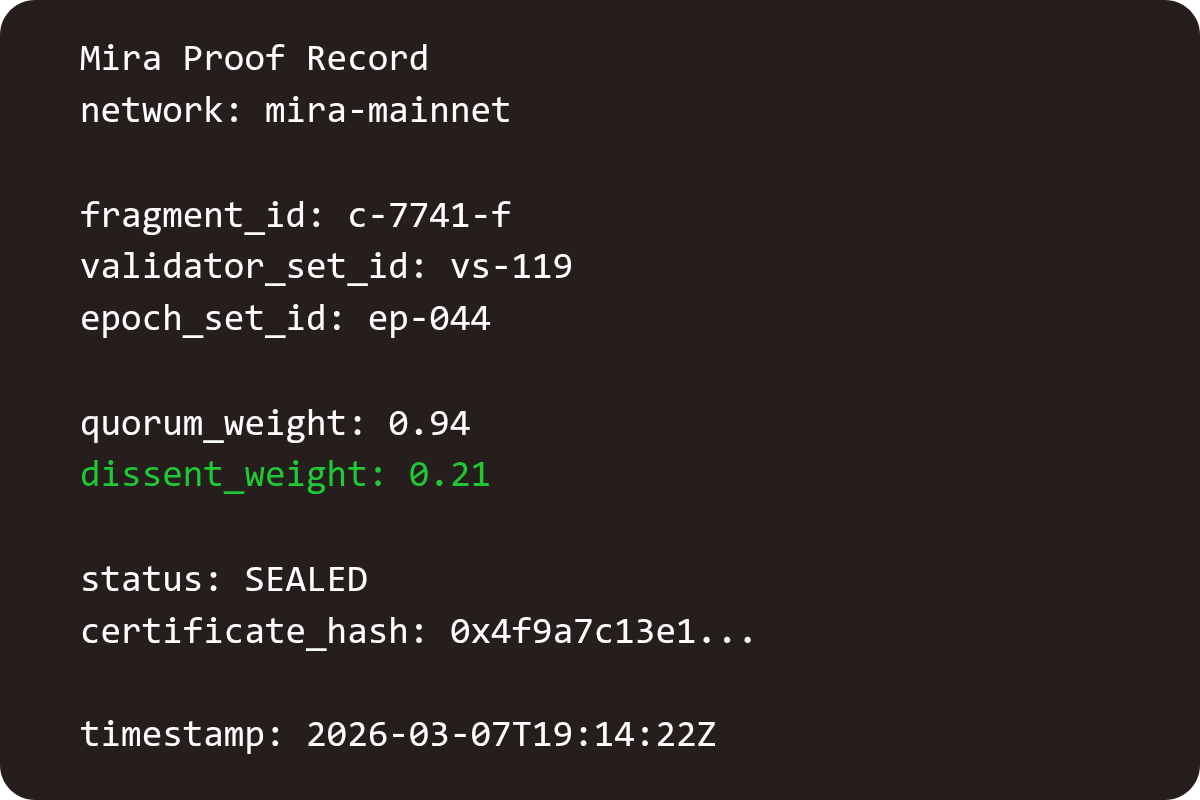

dissent_weight: 0.21

fragment_id: c-7741-f

validator_set_id: vs-119

epoch_set_id: ep-044

This fragment cleared three days ago. The certificate sealed. Downstream systems consumed it. Everyone moved on.

But the dissent weight stayed elevated. 0.21.

Higher than most clean claims. Lower than a real dispute. Still sitting permanently in the proof record.

That record isn't going anywhere.

I noticed it after dinner and started pulling every fragment with a dissent weight above 0.15. I just wanted to see if this was a one-off. Wrong tab first. Back again. Query again.

There were more than I expected.

Different claim types. Different validator sets. Different epochs. But the same pattern underneath.

Claims passed, just not cleanly. Validators disagreed. Not enough to block. Enough that the disagreement stayed visible after consensus closed.
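The filtering step described above can be sketched in a few lines: keep cleared fragments whose dissent weight is above 0.15, then count how they spread across claim types, validator sets and epochs. This is a minimal illustration under stated assumptions, not Mira's API. The `ProofRecord` shape, the `claim_type` field and both helper functions are hypothetical. The input is assumed to contain only fragments that already cleared verification. The post does not describe how dissent weight is computed, so the sketch treats it as a given number.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable


# Hypothetical record shape. The first four fields mirror the proof record
# quoted in the post; claim_type is an assumption added for grouping.
@dataclass(frozen=True)
class ProofRecord:
    fragment_id: str
    validator_set_id: str
    epoch_set_id: str
    dissent_weight: float
    claim_type: str = "unknown"


def contested_but_cleared(
    records: Iterable[ProofRecord], threshold: float = 0.15
) -> list[ProofRecord]:
    """Return cleared records whose dissent weight is strictly above threshold."""
    return [r for r in records if r.dissent_weight > threshold]


def spread(records: list[ProofRecord]) -> dict[str, Counter]:
    """Count flagged records per claim type, validator set and epoch."""
    return {
        "claim_type": Counter(r.claim_type for r in records),
        "validator_set_id": Counter(r.validator_set_id for r in records),
        "epoch_set_id": Counter(r.epoch_set_id for r in records),
    }


# Usage with the one record quoted in the post.
record = ProofRecord("c-7741-f", "vs-119", "ep-044", 0.21)
print(len(contested_but_cleared([record])))  # 1
```

Counting across all three dimensions at once is what makes the cross-cutting pattern visible. If flagged records cluster in a single validator set, the likely cause is that set. If they spread across sets and epochs but concentrate in certain claim types, the claims themselves are likely the contested part.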

At some point I stopped reading them one by one. I just started scrolling the dissent column.

That’s when the pattern showed up.

It's a map.

Not of what AI gets wrong. Of where AI confidence and AI accuracy start to diverge.

These are the claim types where independent validators trained on different data reach different conclusions. The truth there isn't unstable enough to fail verification, but it is unstable enough that reaching consensus still costs something.

No single model produces this map.

A single model gives one answer in a confident tone. It leaves no trace of the uncertainty it smoothed over to get there.

Mira’s proof records produce something different.

Every elevated dissent weight becomes a coordinate. Every contested fragment that clears becomes a data point. Every validator set that disagreed before converging marks a boundary where the claim was less clean than it looked.

And the network records all of it automatically.

Nobody designed that map. It emerges from the process. Every $MIRA stake committed behind a contested verification. Every confidence vector drifting before settling. Every certificate sealing with disagreement still visible in the margin.

All of it permanent. All of it queryable. All of it growing.

The longer Mira runs, the more detailed the map becomes.

And the strange part is what that map might become later.

Because a consensus record of where independent validators disagree is basically a dataset. Not normal training data. A map of where models struggle to agree with each other.

That's the signal future models will need. Not just what is true. Where truth is expensive to establish.

Right now that dataset does not really exist anywhere else. No single lab assembles it. No single model produces it.

It only appears in a verification network where independent validators, with $MIRA staked behind their assessments, disagree and the disagreement stays on record.

The disagreement archive might quietly become the most honest map of AI uncertainty ever assembled.

I'm not sure anyone designed it for that.

But every fragment that passes, just not quietly…

adds another coordinate.