Artificial intelligence is advancing at an astonishing speed. Every few months, new models appear claiming higher intelligence, better reasoning, and stronger capabilities. But beneath this rapid progress lies a problem that gets far too little attention outside technical circles: trust. How do we actually know that an AI system is producing reliable outputs? How can developers, companies, and users verify that a model behaves as claimed? This is exactly where the narrative around $MIRA becomes interesting.

Right now, most AI systems operate inside opaque environments. Models are trained on massive datasets, deployed through APIs, and then used by millions of people without any transparent way to verify how they work or whether their outputs can truly be trusted. Even the companies building these models sometimes struggle to fully explain the reasoning behind certain outputs. As AI becomes more deeply integrated into decision-making systems, this lack of verification becomes a serious problem.

Imagine a future where AI systems are responsible for financial analysis, healthcare insights, supply chain optimization, legal recommendations, or automated governance. In such environments, a simple hallucination or incorrect output could have massive consequences. Yet today, there is still no universal infrastructure for verifying the reliability of AI models and their outputs. That missing layer is what projects like MIRA are trying to address.

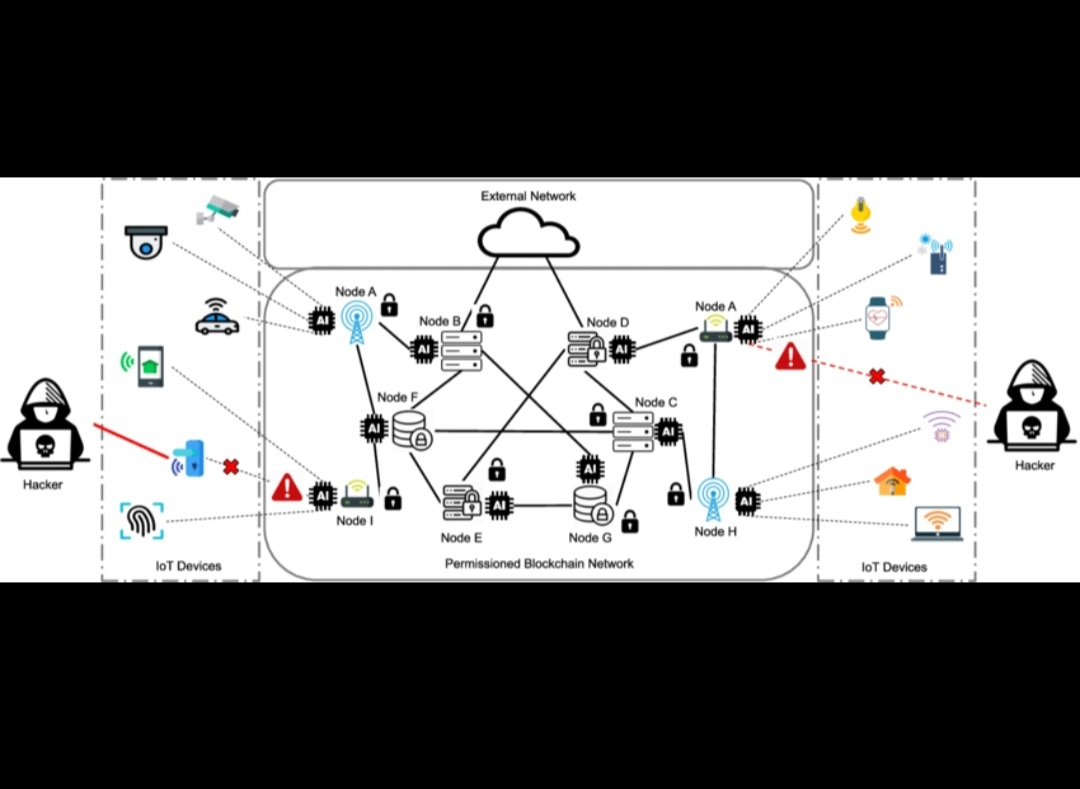

The core idea behind MIRA is deceptively simple but incredibly powerful: create a network where AI outputs and models can be verified in a transparent and decentralized way. Instead of blindly trusting a single model or provider, systems could rely on a verification layer that evaluates whether outputs meet certain standards of reliability and correctness. In other words, MIRA is exploring how trust in AI can become a shared infrastructure rather than a closed corporate promise.

What makes this concept compelling is that it tackles one of the most urgent issues in the AI industry. The conversation around artificial intelligence is often dominated by performance metrics: larger models, faster inference, better benchmarks. But performance alone does not solve the trust problem. A model that is extremely powerful but occasionally unreliable can still be dangerous when deployed at scale.

This is why verification networks could become an essential layer in the AI stack. If AI systems are going to interact with financial networks, automate economic decisions, or operate critical infrastructure, their outputs must be verifiable. Without verification, the entire system depends on blind trust, which is a fragile foundation for technologies that could eventually influence billions of people.

Another reason the MIRA narrative stands out is its timing. We are entering an era where AI agents are becoming increasingly autonomous. These agents will interact with digital systems, execute tasks, and potentially even make economic transactions on their own. But autonomous systems require credibility frameworks to function properly. If an AI agent produces data that other systems cannot verify, coordination becomes difficult and trust collapses quickly.

This is where decentralized verification begins to make sense. Instead of relying on a single authority to validate AI outputs, a network of validators or verification mechanisms can evaluate results collectively. This approach mirrors the way decentralized networks verify transactions, but applied to information produced by AI systems.
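
To make the idea of collective evaluation a bit more concrete, here is a minimal sketch in Python. It is purely illustrative and is not MIRA's actual protocol: the `verify_claim` function, the `Verdict` type, the "valid"/"invalid" labels, and the two-thirds quorum are all assumptions made up for this example. Each validator is just a function that looks at a claim and returns a label, and the network only accepts a label when a large enough share of validators agree on it.

```python
from collections import Counter
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Dict, Optional, Sequence

# Hypothetical validator type: takes a claim, returns a verdict label.
# In a real network each validator would be an independent model or node.
Validator = Callable[[str], str]


@dataclass
class Verdict:
    label: Optional[str]    # agreed label, or None if no quorum was reached
    support: Fraction       # share of validators backing the most common label
    votes: Dict[str, int]   # raw vote counts per label


def verify_claim(
    claim: str,
    validators: Sequence[Validator],
    quorum: Fraction = Fraction(2, 3),  # assumed threshold, not from any spec
) -> Verdict:
    """Accept a label only if at least `quorum` of the validators agree on it."""
    if not validators:
        raise ValueError("at least one validator is required")

    # Collect one vote per validator and count them.
    votes = Counter(validator(claim) for validator in validators)
    top_label, top_count = votes.most_common(1)[0]

    # Exact fractions avoid floating-point surprises at the threshold.
    support = Fraction(top_count, len(validators))
    label = top_label if support >= quorum else None
    return Verdict(label=label, support=support, votes=dict(votes))


# Stand-in validators for demonstration only.
validators = [
    lambda claim: "valid",
    lambda claim: "valid",
    lambda claim: "invalid",
]
result = verify_claim("2 + 2 = 4", validators)
```

With two of three validators agreeing, the claim meets the assumed two-thirds quorum and is accepted. If the validators split evenly, the function returns no label at all, which reflects the basic design choice here: when the network cannot agree, it reports "unverified" rather than picking a side.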

From my perspective, this is one of the most underrated narratives emerging at the intersection of AI and Web3. Most blockchain projects still focus on finance, trading, or speculative tokens. But infrastructure that improves AI reliability could become far more valuable in the long term. If AI becomes a foundational technology across industries, the systems that ensure its integrity will become equally critical.

Of course, building such infrastructure is not easy. Verifying AI outputs requires sophisticated mechanisms capable of evaluating complex computations, probabilistic reasoning, and evolving models. It demands a combination of cryptography, distributed systems, and machine learning expertise. Many attempts will likely fail before a reliable standard emerges.

However, the importance of the problem cannot be ignored. Trust is the invisible foundation of every technological system. Financial markets require trusted settlement. The internet requires trusted communication protocols. And in the near future, artificial intelligence will require trusted verification layers.

That is why the idea behind MIRA is so compelling to me. It is not just another AI token trying to ride a narrative wave. It represents a deeper attempt to solve one of the fundamental structural challenges in the AI ecosystem.

If artificial intelligence is going to shape the next era of technology, the world will eventually need a way to verify what AI says and does.

And when that conversation becomes mainstream, infrastructure focused on AI trust might suddenly become one of the most important pieces of the entire ecosystem.