When discussions about robotics come up, the focus often centers on the machines themselves. Faster processors, better sensors, smarter AI models: these advancements are impressive. However, the deeper I dig into the sector, the clearer a more significant limitation becomes. The real issue is not intelligence; it is coordination.

Managing a single robot in a warehouse is relatively straightforward. The company owns the hardware, the software, and the control environment, so trust comes implicitly from that centralized authority. Once robotics steps outside those controlled spaces and into open infrastructure such as cities, logistics corridors, and agricultural networks, the situation changes: machines start interacting with data sources and decision systems that their operators do not control.

This is where the architecture developed by @FabricFoundation becomes important.

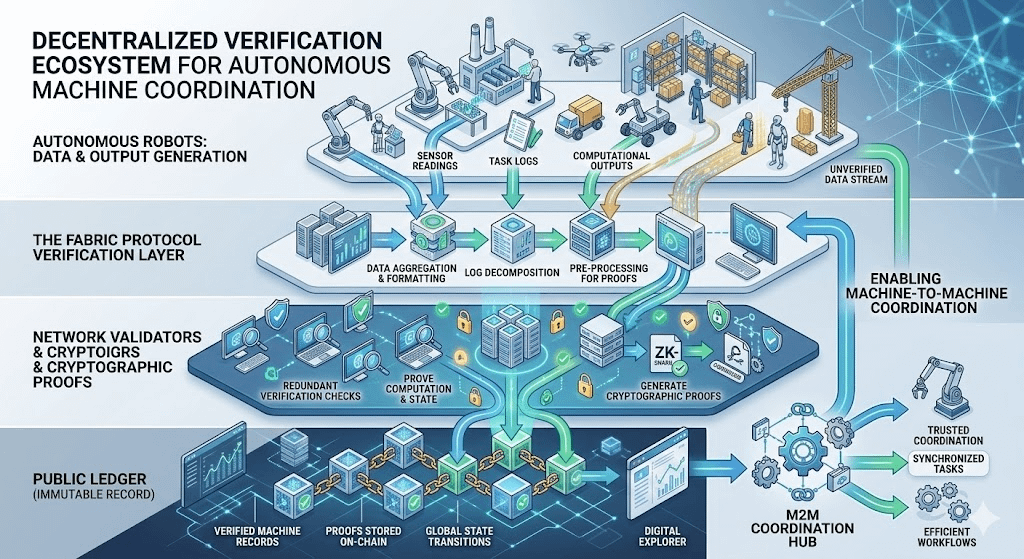

Fabric Protocol looks at robotics from a viewpoint that most automation platforms overlook: machines are not just tools; they are part of distributed systems. For robots to work together across organizations and environments, their actions must be verifiable. Without this verification, autonomous systems can turn into black boxes, and their outputs may not be trusted.

Fabric aims to address this with verifiable computing linked to a public coordination layer. When a robot performs a task, whether it is mapping an environment, interpreting sensor data, or executing an operation, the output can be paired with cryptographic proof. Instead of relying on blind trust in a machine’s internal software, the network can confirm that the task was completed correctly.
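The post doesn't describe Fabric's actual proof system, so here is a minimal sketch of the general idea under a deliberately simple assumption: a SHA-256 commitment binds a task's input to its output, and a validator checks the claim by re-executing a deterministic task. Production verifiable-computing schemes (for example, succinct proofs) exist precisely so the verifier does not have to redo the work; this only illustrates "output plus proof, checked by someone else". Every name here (`TaskResult`, `commit`, `count_obstacles`, `validator_check`) is hypothetical and not part of any Fabric API.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    """A robot's claimed output plus a commitment binding it to the task input (hypothetical)."""
    task_id: str
    task_input: dict
    output: dict
    commitment: str  # SHA-256 over (task_id, input, output)


def commit(task_id: str, task_input: dict, output: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so every party hashes identical bytes.
    payload = json.dumps(
        {"task_id": task_id, "input": task_input, "output": output},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def count_obstacles(task_input: dict) -> dict:
    # Hypothetical deterministic task: count range readings closer than a threshold.
    threshold = task_input["threshold_m"]
    return {"obstacles": sum(1 for r in task_input["ranges_m"] if r < threshold)}


def robot_execute(task_id: str, task_input: dict) -> TaskResult:
    # The robot runs the task and publishes the output together with its commitment.
    output = count_obstacles(task_input)
    return TaskResult(task_id, task_input, output, commit(task_id, task_input, output))


def validator_check(result: TaskResult) -> bool:
    # 1) The commitment must match the claimed data (catches tampering after publication).
    if commit(result.task_id, result.task_input, result.output) != result.commitment:
        return False
    # 2) Re-execute the deterministic task and compare (catches a wrong computation).
    return count_obstacles(result.task_input) == result.output


honest = robot_execute("scan-001", {"ranges_m": [0.4, 2.5, 0.9, 3.1], "threshold_m": 1.0})
print(validator_check(honest))  # True

# A self-consistent commitment is not enough: the claimed output must also be correct.
forged_output = {"obstacles": 0}
forged = TaskResult(honest.task_id, honest.task_input, forged_output,
                    commit(honest.task_id, honest.task_input, forged_output))
print(validator_check(forged))  # False
```

Re-execution only works for deterministic tasks; raw sensor capture itself cannot be "re-run", which is one reason real systems reach for other proof techniques.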

The results of this verification are recorded on a public ledger, creating an auditable history of machine behavior. I find it particularly intriguing how this shifts robotics from isolated deployments toward interconnected infrastructure.
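What makes a ledger "auditable" is that past entries cannot be silently rewritten. A minimal sketch of that property, assuming a simple hash-chained log: each entry stores the hash of the previous one, so editing any old record breaks every later link. This is a single-process stand-in; replication, consensus, and Fabric's real ledger format are out of scope, and `Ledger`, `append`, and `audit` are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class Ledger:
    """Append-only log where each entry commits to the hash of the previous entry."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _entry_hash(prev_hash: str, record: dict) -> str:
        payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        # Link the new record to the hash of the current last entry.
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        h = self._entry_hash(prev, record)
        self.entries.append({"prev": prev, "record": record, "hash": h})
        return h

    def audit(self) -> bool:
        """Recompute every link from the start; any edited record breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._entry_hash(prev, entry["record"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = Ledger()
ledger.append({"robot": "drone-7", "task": "map-sector-3", "verified": True})
ledger.append({"robot": "bot-12", "task": "inspect-valve-9", "verified": True})
print(ledger.audit())  # True

ledger.entries[0]["record"]["verified"] = False  # attempt to rewrite history
print(ledger.audit())  # False
```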

Consider what happens when multiple autonomous systems share the same space. Delivery robots, mapping drones, inspection bots, and logistics machines can all gather useful information about their surroundings. Without a shared verification layer, each participant must independently check the reliability of external inputs, which quickly becomes inefficient and fragile.

Fabric Protocol establishes a framework where outputs can be validated once and then referenced across the network. This effectively turns robotics data into a verifiable public resource.
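"Validated once, referenced across the network" maps naturally onto content addressing. A minimal sketch, assuming outputs are identified by the SHA-256 of their canonical JSON and that a shared registry remembers which IDs already passed verification: the first submission pays for the check, and later participants referencing the same output get a cache hit instead of re-verifying. `VerifiedRegistry`, `content_id`, and the `plausible_map` check are hypothetical; a real verifier would check proofs rather than a field value.

```python
import hashlib
import json
from typing import Callable, Optional, Set


def content_id(output: dict) -> str:
    # Content addressing: the ID is the SHA-256 of the canonical (sorted-key) JSON bytes.
    return hashlib.sha256(json.dumps(output, sort_keys=True).encode("utf-8")).hexdigest()


class VerifiedRegistry:
    """Hypothetical shared registry: verify an output once, then reference it by ID."""

    def __init__(self, verifier: Callable[[dict], bool]):
        self._verifier = verifier
        self._verified: Set[str] = set()
        self.verifications_run = 0  # how many times the (expensive) check actually ran

    def submit(self, output: dict) -> Optional[str]:
        cid = content_id(output)
        if cid not in self._verified:
            self.verifications_run += 1
            if not self._verifier(output):
                return None  # rejected outputs are not registered
            self._verified.add(cid)
        return cid

    def is_verified(self, cid: str) -> bool:
        return cid in self._verified


def plausible_map(output: dict) -> bool:
    # Hypothetical stand-in for real proof verification.
    return output.get("cells", 0) > 0


registry = VerifiedRegistry(plausible_map)
tile = {"sector": 3, "cells": 1024, "source": "drone-7"}

cid = registry.submit(tile)  # the mapping drone's submission triggers the one check
consumers = ["delivery-1", "inspector-4", "forklift-2"]
ids = [registry.submit(tile) for _ in consumers]  # later references hit the cache
print(registry.verifications_run)  # 1
```

With N consumers each checking M shared outputs independently, the network runs N × M checks; with a shared registry it runs M.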

The economic layer that supports this coordination model is the $ROBO token. In decentralized infrastructure, verification cannot depend on technical design alone; participants need incentives to validate computations honestly and uphold the network's integrity.

Within Fabric’s framework, $ROBO helps align those incentives. Validators who confirm computational proofs secure the system by verifying machine outputs. Developers creating robotic applications depend on this verification layer to ensure that incoming data is reliable. Governance participants influence how the network evolves over time.
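The post doesn't specify how $ROBO rewards or penalizes validators. Stake-weighted rewards with slashing are a common pattern in proof-of-stake networks, so the sketch below uses that pattern purely as an assumption to show how incentives can make honest validation the profitable strategy. `Validator`, `settle_round`, `reward_pool`, and `slash_rate` are hypothetical, and how the "true" outcome gets established (for instance, by re-execution) is left outside the sketch.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: float  # hypothetical amount of $ROBO locked as collateral


def settle_round(validators: List[Validator], votes: Dict[str, bool], truth: bool,
                 reward_pool: float, slash_rate: float) -> List[Validator]:
    """Hypothetical settlement for one verification round.

    votes maps validator name -> whether it attested that the proof is valid.
    Validators who voted with the established outcome split reward_pool pro rata
    to stake; validators who voted against it lose slash_rate of their stake.
    """
    honest_stake = sum(v.stake for v in validators if votes[v.name] == truth)
    for v in validators:
        if votes[v.name] == truth:
            # Reward pro rata to stake; skipped if no stake voted with the outcome.
            if honest_stake > 0:
                v.stake += reward_pool * v.stake / honest_stake
        else:
            # Slash a fixed fraction of the dissenting validator's collateral.
            v.stake -= v.stake * slash_rate
    return validators


validators = [Validator("a", 100.0), Validator("b", 300.0), Validator("c", 100.0)]
settle_round(validators, {"a": True, "b": True, "c": False},
             truth=True, reward_pool=40.0, slash_rate=0.5)
print({v.name: v.stake for v in validators})  # {'a': 110.0, 'b': 330.0, 'c': 50.0}
```

Under these assumed parameters, attesting to a false result costs far more than the honest reward earns, which is the alignment the paragraph above describes.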

The result is an incentive structure that economically reinforces the reliability of machine coordination. $ROBO's role in the ecosystem is more than token branding; it is the economic base that sustains the verification process.

What makes this architecture particularly relevant now is the rapid convergence between autonomous AI agents and physical robotics systems. AI agents can already manage resources, perform digital tasks, and interact with decentralized services. As these abilities extend to machines in the physical world, coordination becomes much more complex.

Autonomous agents working with infrastructure cannot rely on opaque logic. Their actions need to be auditable. Systems must be able to verify that a robot interpreting environmental data or carrying out a task follows the correct processes. Fabric Protocol seeks to build that verification environment before autonomous machine networks grow beyond manageable limits.

Of course, transforming this architecture into real-world robotics will not be easy. Physical machines face constraints that digital networks often do not. Issues like latency, energy use, sensor reliability, and regulatory oversight add layers of complexity. Verification systems must operate efficiently to support real-time decision-making.

There is also a governance challenge that is seldom discussed. If decentralized protocols manage autonomous machines, the rules in those protocols start to affect real-world infrastructure behavior. Governance linked to ROBO carries responsibilities that extend beyond updating software. It influences how machine coordination systems develop.

Despite these challenges, the path Fabric is exploring feels increasingly pertinent as robotics shifts toward distributed autonomy. The next generation of machines will not function in isolation. They will interact in shared environments, share data, and contribute to collective decision-making.

The infrastructure that verifies these interactions may ultimately determine whether large-scale robotic networks remain trustworthy.

Fabric suggests, and I increasingly agree, that the future of robotics may depend less on building smarter machines and more on developing systems that enable those machines to trust one another.

#ROBO $ROBO @Fabric Foundation