For years the world has watched artificial intelligence grow from a curious experiment into one of the most powerful technologies ever created. Machines that once struggled to recognize simple patterns can now write essays, analyze markets, help doctors study diseases, and assist millions of people in their daily lives. It feels almost magical. You ask a question, and within seconds a machine produces an answer that sounds thoughtful, intelligent, and confident.

But behind this remarkable progress lies a quiet and uncomfortable truth. Artificial intelligence, for all its brilliance, does not always know when it is wrong.

Many AI systems occasionally produce information that sounds convincing but is completely false. These errors are known as hallucinations. The machine is not lying in the human sense. It is simply predicting what seems most likely to sound correct based on patterns in the data it was trained on. The words flow smoothly, the explanation feels logical, and the tone carries authority. Yet the facts may not exist at all.

For casual conversations this might be harmless. If an AI invents a small detail in a story or misremembers a historical statistic, the consequences are minor. But when these systems are used in fields like healthcare, finance, education, and law, even a small mistake can become dangerous. Imagine a doctor relying on incorrect medical information generated by AI, or a financial analyst basing decisions on fabricated data. Suddenly the illusion of intelligence becomes a risk.

This growing tension has created one of the biggest challenges in the modern AI era. Humanity has built machines capable of generating knowledge at incredible speed, yet we still struggle with a simple question: can we trust what they say?

This is the problem that Mira Network is trying to solve.

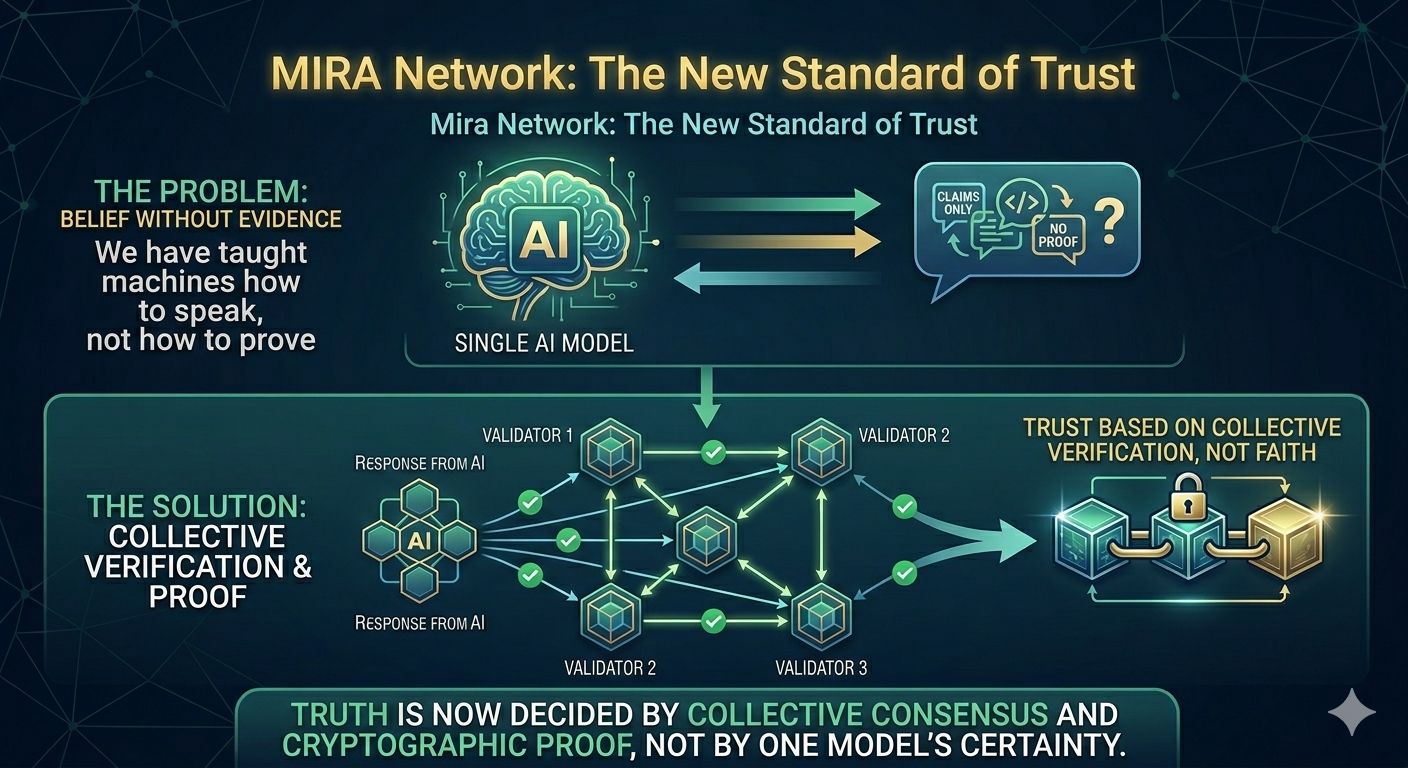

Mira Network was created with the vision of building a verification layer for artificial intelligence. Instead of treating AI outputs as unquestionable answers, the system treats them as claims that must be checked, validated, and confirmed. It introduces a decentralized process where multiple independent AI systems evaluate information before it can be trusted. The goal is simple but deeply ambitious: to transform AI from a machine that produces answers into a system that produces verifiable truth.

To understand why this idea matters so much, it helps to look at how modern AI works. Most advanced models today are trained using enormous collections of data that include books, articles, research papers, and conversations from across the internet. During training, the system learns patterns within language and knowledge. When a user asks a question, the model generates a response by predicting the most likely sequence of words that should follow.

This approach is incredibly powerful because it allows the AI to respond naturally to almost any topic. However, the system is not actually verifying whether the information is correct. It is predicting what sounds correct. The difference is subtle but important. A response can appear intelligent even when the underlying facts are uncertain.

This is why hallucinations occur. The model fills gaps in knowledge with plausible-sounding guesses. Sometimes those guesses happen to be right. Other times they drift away from reality. The more complex the topic becomes, the harder it is for a single model to maintain perfect accuracy.

For years researchers tried to reduce these errors by improving training data or refining model architectures. These efforts helped, but they never fully solved the core issue. The challenge was not only about training better AI. It was about creating a system that could verify AI outputs independently.

Mira Network approaches this problem in a very different way. Instead of relying on one model to produce perfect answers, it allows many models to examine and validate each other’s work.

Whenever an AI generates a response within the Mira ecosystem, the system analyzes the content and breaks it into smaller factual claims. A single paragraph might contain several independent statements about dates, numbers, events, or relationships between ideas. Mira isolates these claims so they can be evaluated individually.
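To make the idea of isolating claims concrete, here is a minimal sketch. It assumes, purely for illustration, that each sentence counts as one claim; the function name `split_into_claims` and the sample paragraph are hypothetical, and how Mira actually extracts claims is not described here.

```python
import re


def split_into_claims(text: str) -> list[str]:
    """Naively treat each sentence as one candidate factual claim."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    # Drop empty strings (for example, when the input is empty).
    return [s for s in sentences if s]


# Hypothetical AI response containing three independent statements.
paragraph = (
    "The Eiffel Tower is in Paris. "
    "It was completed in 1889. "
    "It is about 330 metres tall."
)

claims = split_into_claims(paragraph)
for number, claim in enumerate(claims, start=1):
    print(number, claim)
```

A real system would need something far more careful than sentence splitting, since one sentence can carry several claims and some sentences carry none.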

Each claim is then distributed across a network of validator nodes. These nodes operate independently and run their own AI models to analyze the information. Some validators might confirm the claim as accurate, others may detect inconsistencies, and some may mark it as uncertain. Through this process the network gradually builds a consensus about the truthfulness of the statement.
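The voting step can be pictured with a small sketch. It assumes a simple supermajority rule with a two-thirds threshold; the threshold, the verdict labels, and the function name `reach_consensus` are illustrative assumptions, not details of Mira's actual protocol.

```python
from collections import Counter

# Verdict labels assumed for this sketch.
VERDICTS = {"accurate", "inaccurate", "uncertain"}


def reach_consensus(votes: dict[str, str], threshold: float = 2 / 3) -> str:
    """Return the verdict backed by at least `threshold` of the validators.

    `votes` maps a validator name to its verdict. If no verdict reaches
    the threshold, the result is "no_consensus".
    """
    if not votes:
        return "no_consensus"
    unknown = set(votes.values()) - VERDICTS
    if unknown:
        raise ValueError(f"unknown verdicts: {sorted(unknown)}")
    # Find the most common verdict and how many validators chose it.
    verdict, count = Counter(votes.values()).most_common(1)[0]
    return verdict if count / len(votes) >= threshold else "no_consensus"


# Hypothetical validators: three confirm the claim, one is unsure.
clear_votes = {
    "node-a": "accurate",
    "node-b": "accurate",
    "node-c": "accurate",
    "node-d": "uncertain",
}
# Hypothetical validators that are evenly split.
split_votes = {
    "node-a": "accurate",
    "node-b": "accurate",
    "node-c": "inaccurate",
    "node-d": "inaccurate",
}

print(reach_consensus(clear_votes))  # accurate
print(reach_consensus(split_votes))  # no_consensus
```

Requiring a supermajority rather than a bare majority means a claim stays unresolved when validators are divided, which fits the idea that agreement has to be earned.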

When enough validators reach agreement, the result is recorded on a blockchain ledger. This ledger acts as a transparent and tamper-resistant record of the verification process. Anyone can trace how the decision was made and which nodes participated in the evaluation. No single authority controls the outcome, and no organization can secretly alter the results.
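Why would such a record be tamper resistant? One ingredient is hash linking, sketched below under simplifying assumptions. This is a single in-memory list, not a distributed blockchain, and the record fields and function names are hypothetical. It shows only that changing an old entry breaks every check that follows it.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(ledger: list[dict], record: dict) -> None:
    """Append a record, linking it to the previous entry's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    ledger.append(
        {
            "record": record,
            "prev_hash": prev_hash,
            "hash": record_hash(record, prev_hash),
        }
    )


def is_intact(ledger: list[dict]) -> bool:
    """Recompute every hash and confirm the chain has not been altered."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger: list[dict] = []
append_record(ledger, {"claim": "claim-1", "verdict": "accurate",
                       "validators": ["node-a", "node-b", "node-c"]})
append_record(ledger, {"claim": "claim-2", "verdict": "inaccurate",
                       "validators": ["node-a", "node-d"]})
print(is_intact(ledger))  # True

# Quietly rewriting an old verdict is detected on the next check.
ledger[0]["record"]["verdict"] = "inaccurate"
print(is_intact(ledger))  # False
```

In a real blockchain, many independent nodes hold copies of the chain and agree on new entries, which is what prevents someone from simply recomputing all the hashes after a change.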

The idea behind this system is deeply rooted in the philosophy of decentralization. Instead of trusting one source of intelligence, trust emerges from the collective judgment of many independent participants. In many ways it mirrors how scientific knowledge evolves. A researcher proposes a theory; other experts review the evidence, challenge assumptions, and replicate experiments. Over time the truth becomes stronger through collaboration.

Mira Network applies that same principle to artificial intelligence.

Another important aspect of the system is the economic structure that encourages honest participation. Validator nodes must stake tokens in order to operate within the network. If they perform accurate verification work, they receive rewards. If they attempt to manipulate results or provide dishonest evaluations, they risk losing their stake. This mechanism aligns financial incentives with truthful behavior.
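The incentive logic can be sketched in a few lines. Everything specific here is an assumption made for illustration: the reward of 1 token, the 10% slash, and the use of agreement with the final consensus as a stand-in for honest work. The actual reward and penalty rules are not given in this article.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float  # tokens locked up in order to participate


def settle(validator: Validator, vote: str, consensus: str,
           reward: float = 1.0, slash_fraction: float = 0.1) -> None:
    """Reward a vote that matches consensus; otherwise slash part of the stake.

    Assumption: matching the network's consensus is treated as a proxy
    for honest verification work.
    """
    if vote == consensus:
        validator.stake += reward
    else:
        validator.stake -= validator.stake * slash_fraction


# Two hypothetical validators, each starting with 100 staked tokens.
careful = Validator("node-a", stake=100.0)
careless = Validator("node-b", stake=100.0)

settle(careful, vote="accurate", consensus="accurate")
settle(careless, vote="inaccurate", consensus="accurate")

print(careful.stake)   # 101.0
print(careless.stake)  # 90.0
```

Because the penalty scales with the stake while the reward is small and steady, a validator in this sketch gains more from consistent accuracy than from any single attempt at manipulation.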

Truth becomes valuable, not just philosophically but economically.

This combination of decentralized validation and economic incentives creates a powerful environment where reliability can improve over time. Instead of depending on the internal knowledge of a single AI model, the system draws strength from the diversity of many models working together.

Early observations suggest that this approach can dramatically reduce hallucination rates and improve factual accuracy. Cross-checking information across multiple independent systems makes errors easier to detect and correct. Even when one model makes a mistake, others can identify the inconsistency and prevent it from spreading.

The potential impact of such a system reaches far beyond simple chatbots or online assistants. As artificial intelligence continues to evolve, autonomous AI agents may soon perform complex tasks across industries. They may manage logistics networks, assist in scientific research, analyze financial markets, or support critical infrastructure decisions. In such a world, the reliability of AI outputs becomes more important than ever.

A machine making decisions must rely on information that can be trusted.

Without verification, every AI decision would still require human supervision. That limitation slows progress and reduces the potential benefits of automation. But with a reliable verification layer, AI systems could operate with far greater confidence and independence.

Mira Network represents an early attempt to build that foundation.

The project is also exploring ways to expand its infrastructure through distributed computing resources. Participants can contribute GPU power to support the verification process, allowing the network to scale as demand grows. Instead of relying on centralized data centers controlled by a few corporations, the verification layer can evolve through global collaboration.

This open participation model reflects a broader shift occurring across the technology landscape. Just as decentralized finance challenged traditional banking systems and decentralized storage challenged centralized cloud platforms, decentralized AI verification is beginning to challenge the assumption that trust must come from a single authority.

Trust can emerge from networks.

Perhaps the most powerful idea behind Mira Network is that intelligence alone is not enough for the future we are building. Artificial intelligence may become faster, smarter, and more capable every year, but without trust it will always remain limited.

Human society is built on trust. We trust information sources, scientific institutions, financial systems, and legal frameworks because mechanisms exist to verify truth and accountability. As machines take on greater roles in producing knowledge and making decisions, they must operate within similar systems of verification.

Mira Network is an attempt to create that system for the age of artificial intelligence.

It is an effort to ensure that when a machine speaks, its words are not just impressive but dependable, and that the knowledge generated by algorithms can be examined, validated, and confirmed before it influences the real world.

In the end, the future of AI will not only be defined by how intelligent machines become. It will also be defined by how much we trust them.

And that trust will not appear automatically. It must be built carefully, layer by layer, through transparency, collaboration, and accountability.

Mira Network is taking one of the first steps toward building that foundation. If its vision succeeds, artificial intelligence may finally evolve from a powerful generator of answers into something humanity has been searching for all along: a reliable partner in the pursuit of truth.
