A few weeks ago, I helped a friend who was preparing a short presentation for his business team. He wanted to describe a new technology trend, and we decided to use an AI tool to gather some background information. Within seconds, the tool produced a clean, well-written explanation. It included statistics, examples, and even a brief summary ready to present.

At first glance, it looked ideal. The writing was clear, the structure was sensible, and the answer sounded professional. My friend was impressed. "This saves so much time," he said. Honestly, I agreed. Artificial intelligence can be very helpful when you need information quickly.

However, just as we were about to finish the slides, I suggested we go back and confirm some of the claims. I did not expect a problem; I did it more out of habit than suspicion. We took the statistics mentioned in the response and tried to locate the original sources.

That was when we realized something was wrong.

One of the numbers could not be found anywhere on the internet. It sounded reasonable, yet no credible source reported it. Another quote was attributed to a study we could not find. The explanation still sounded plausible, but the facts underpinning it were missing.

The mistake wasn't huge, and it would not have been a disaster. But it reminded us of something: an AI can sound as if it knows a topic very well, but that does not necessarily mean the information has been verified.

This is one of the biggest challenges facing modern AI systems. They are trained to produce answers that appear rational and useful, and in most cases they do this well. But they do not necessarily check whether every detail is true. They generate predictions based on patterns in data. That is usually fine in casual conversation, but it occasionally produces confidently stated mistakes.

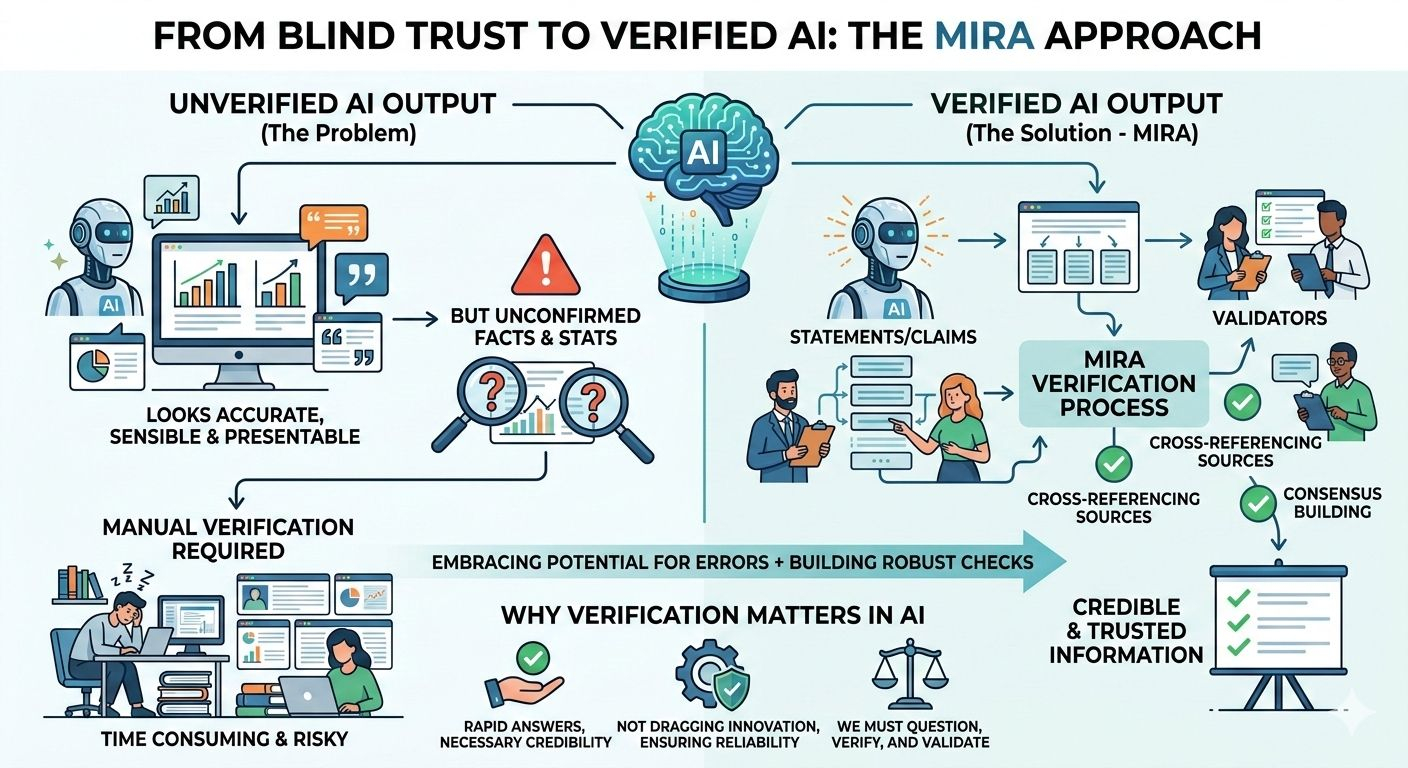

Experiences like this are why new ideas about AI verification are beginning to emerge. Some systems do not treat AI responses as final; instead, they treat them as claims that must be verified. Mira is one interesting example.

The idea behind Mira is simple. Rather than relying on the output of a single model, it breaks a response into smaller statements. Multiple validators then examine these statements, each checking whether a claim is correct or supported by evidence. Only after enough validators agree does the system mark the claim as valid.

In a way, it is a group review process. When several reviewers independently reach the same conclusion, the outcome is more credible. This approach does not eliminate errors entirely, but it reduces the chance that a single false response slips through unnoticed.
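To make the claim-splitting and consensus idea concrete, here is a minimal Python sketch. It is not Mira's actual implementation or API: `split_into_claims`, `verify_claims`, the toy validators, and the two-thirds quorum are all hypothetical, chosen only to illustrate marking a claim as valid once enough independent checkers agree.

```python
from typing import Callable, Dict, List

# Hypothetical validator: receives one claim and returns True if it judges the claim supported.
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naively treat each sentence as one claim (a stand-in for real claim extraction)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claims(
    response: str, validators: List[Validator], quorum: float = 2 / 3
) -> Dict[str, bool]:
    """Mark a claim as verified only if at least `quorum` of the validators accept it."""
    if not validators:
        raise ValueError("at least one validator is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(response):
        votes = sum(1 for check in validators if check(claim))
        results[claim] = votes / len(validators) >= quorum
    return results


if __name__ == "__main__":
    # Toy data: a tiny set of facts the "strict" validators trust.
    trusted_facts = {"Water boils at 100 C at sea level"}

    def strict(claim: str) -> bool:
        return claim in trusted_facts

    def lenient(claim: str) -> bool:
        return True  # accepts everything, like an overconfident single model

    report = verify_claims(
        "Water boils at 100 C at sea level. Sales grew 340% last year.",
        [strict, strict, lenient],
    )
    for claim, ok in report.items():
        print("verified" if ok else "needs checking", "-", claim)
```

Here the lenient validator alone would accept the made-up sales figure, but because only one of three validators approves it, the quorum flags it as needing a check. In a real system the validators would be independent models or nodes rather than local functions, and claim extraction would be far more careful than splitting on periods.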

Looking back at how we prepared our presentation, such a system would have saved us time. Instead of searching through sources manually to find out which claims held up, we could have seen which ones had already been checked and which still needed verification.

What I find interesting is that this approach does not try to make AI perfect. Instead, it accepts that mistakes will happen and builds a mechanism to catch them. That attitude is more realistic. In practice, every important system relies on checks and balances. Banks audit transactions. Scientists peer-review research. Engineers test designs.

AI should be no different.

As artificial intelligence increasingly shapes business decisions, research, and everyday work, verification will become more important. Fast answers are convenient, but reliable answers are essential. The goal is not to slow down innovation. It is to make sure the technology can be trusted when it matters most.

In the end, the small error in our presentation taught us an important lesson. AI can help us think, write, and learn faster. But we are still responsible. We should question, verify, and validate important information.

Technologies such as Mira suggest that the future of AI may not depend only on smarter models. It may also depend on smarter systems for verifying the answers those models give. And sometimes, a small mistake is exactly what makes us understand how important that process is.

@Mira - Trust Layer of AI #Mira $MIRA