A few months ago a friend of mine used ChatGPT to prepare a quick research summary before a meeting. Nothing unusual. The AI gave a clean explanation, cited a couple of sources, even threw in a statistic that sounded perfectly reasonable. He built part of his presentation around it. Later someone in the room checked the number.

It didn’t exist.

Not slightly wrong. Completely made up.

Now imagine that moment. You’re standing there explaining something confidently… and suddenly you realize the AI basically invented a fact. Awkward silence. Laptop screens opening. Someone googling. Meeting energy gone.

Most people using AI today have had some version of that experience. Maybe not in a boardroom, but in daily work. You ask a model something technical. It answers smoothly. It sounds right. But deep down you’re thinking, “Should I actually trust this?”

That small doubt is becoming the real bottleneck of modern AI.

The models are getting smarter every month. Faster. Bigger. More capable. But reliability? That part still feels messy. Sometimes AI is brilliant. Sometimes it hallucinates, like a very confident intern who doesn’t want to admit they don’t know the answer.

And the frustrating part is this: the AI doesn’t signal uncertainty very well. It delivers correct facts and fake ones with the same tone. Same confidence. Same formatting.

The way I see it, the problem isn’t just intelligence anymore. It’s verification.

This is where Mira started to catch my attention. Not because it’s another AI model trying to beat OpenAI or Anthropic or whoever wins the next benchmark. Mira is approaching the issue from a slightly different angle. Instead of asking “How do we build smarter models?” the project asks a simpler question: “What if AI outputs didn’t need to be trusted blindly because they could be verified?”

That shift in thinking matters more than it sounds.

Right now most AI systems operate like very advanced guessing engines. Their answers are generated from probabilities learned from training data. Usually that works well. But when it doesn’t, the system has no built-in mechanism to prove whether a statement is actually correct.

Mira basically tries to insert a verification layer between “AI said something” and “people believe it.”

The way it works is actually easier to understand if you think about it like breaking a report into pieces. When an AI generates a long answer, Mira doesn’t treat that output as one big block. Instead the system slices it into smaller claims. A statistic becomes one claim. A factual statement becomes another. A logical conclusion becomes another piece.

Then those claims are distributed across a network of independent validators and AI models.

Think of it like asking several analysts to check different sections of the same document. One checks the numbers. Another checks the sources. Another checks whether the conclusion actually follows from the data.

If enough participants agree that a claim is valid, it becomes verified information. The verification itself is recorded through blockchain consensus so there’s a transparent record that multiple independent actors checked it.

The real kicker here is that Mira isn’t asking you to trust a single model anymore. It’s asking the network to confirm whether the information holds up.
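To make that flow a bit more concrete, here is a minimal sketch of the idea in Python. To be clear, this is not Mira’s actual API. The sentence-based claim splitter, the `Validator` type, the two-thirds threshold, and every function name below are my own assumptions for illustration, and the blockchain recording step is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type for illustration only: a validator is any function
# that looks at one claim and returns True (approve) or False (reject).
Validator = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    approvals: int
    total: int
    verified: bool


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_answer(
    answer: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed quorum, not a documented Mira value
) -> List[Verdict]:
    """Ask every validator about every claim and apply a simple quorum rule."""
    if not validators:
        raise ValueError("at least one validator is required")
    verdicts = []
    for claim in split_into_claims(answer):
        approvals = sum(1 for validate in validators if validate(claim))
        verified = approvals / len(validators) >= threshold
        verdicts.append(Verdict(claim, approvals, len(validators), verified))
    return verdicts


# Toy validators: two check against a small set of known facts,
# one approves everything without looking.
known_facts = {"Water boils at 100 C at sea level"}


def careful(claim: str) -> bool:
    return claim in known_facts


def sloppy(claim: str) -> bool:
    return True


answer = "Water boils at 100 C at sea level. The moon is made of cheese."
results = verify_answer(answer, [careful, careful, sloppy])
for verdict in results:
    print(verdict.claim, "->", "verified" if verdict.verified else "rejected")
```

In this toy run the first claim gets three approvals out of three and passes, while the second gets only the sloppy validator’s vote and is rejected. A real system would need far smarter claim extraction than splitting on periods, but the shape of the pipeline is the same: split, distribute, count.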

And of course, whenever you hear “network of validators,” the next question is obvious: why would they behave honestly?

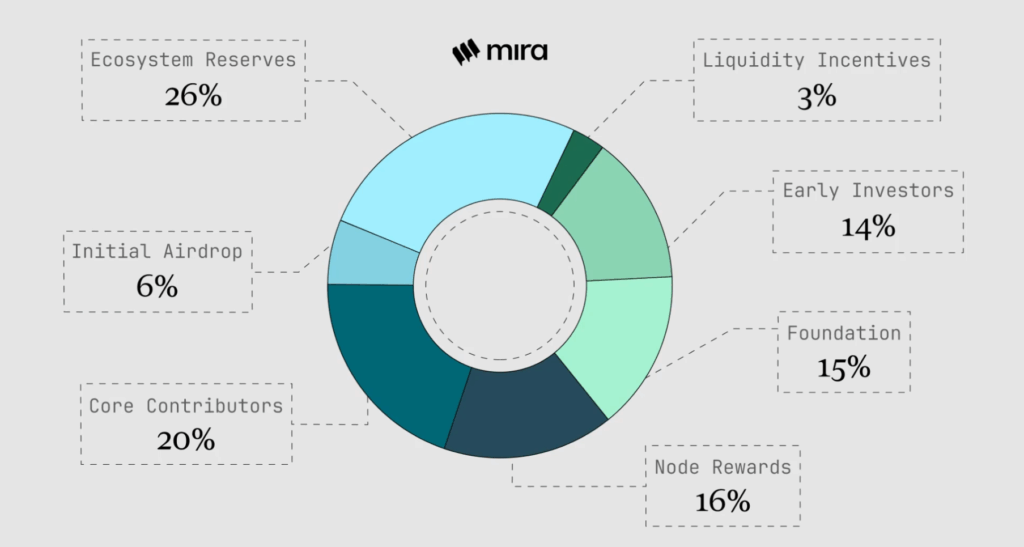

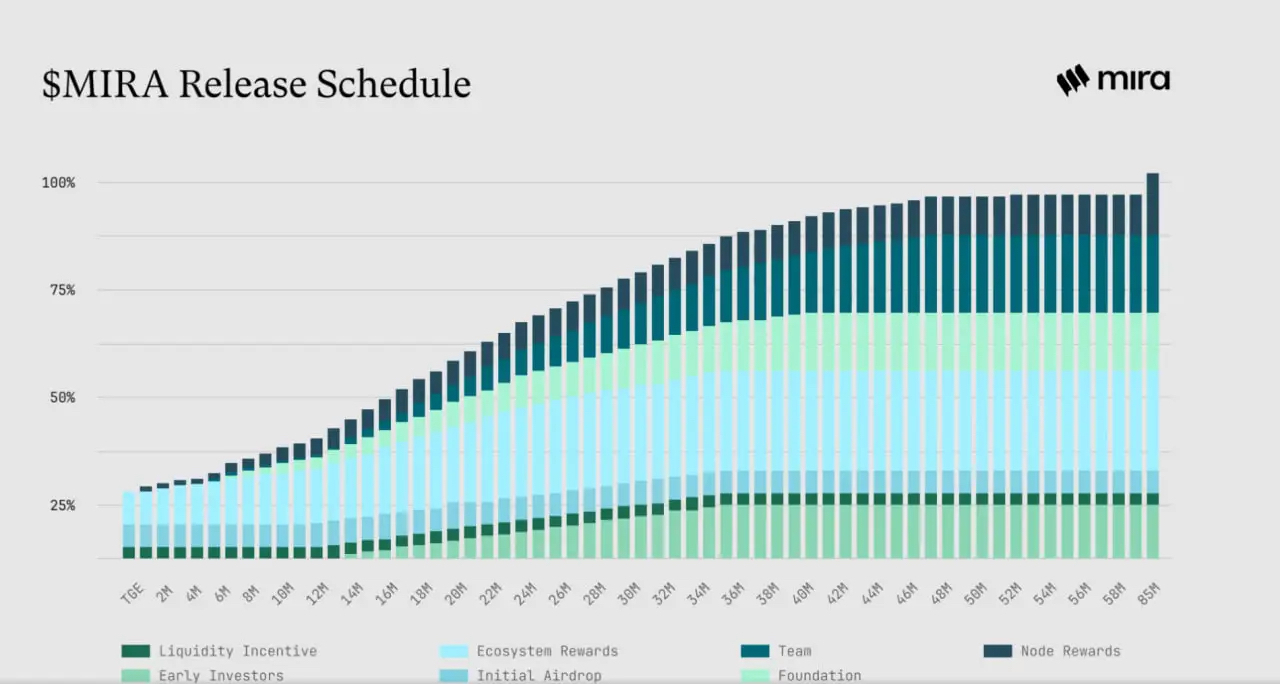

This is where the incentives come in. Participants in the Mira network have to stake value when they validate information. If they verify claims correctly, they earn rewards. If they approve bad information or act irresponsibly, they can lose part of their stake.

In other words, they have skin in the game.

That economic pressure changes behavior. Suddenly verification isn’t just a technical process. It’s a game theory system where accuracy becomes financially rational. Being sloppy costs money.
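Here is a rough sketch of how that kind of stake-and-slash accounting could look. Everything in it is an assumption on my part: the `ValidatorAccount` class, the flat reward, the 10 percent slash, and especially the shortcut of treating “voted with the final outcome” as “verified correctly”. Mira’s real reward rules may look quite different.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # staked value in whole tokens (hypothetical unit)


def settle(
    accounts: List[ValidatorAccount],
    votes: Dict[str, bool],
    outcome: bool,
    reward: int = 1,          # assumed flat reward per correct vote
    slash_percent: int = 10,  # assumed penalty, as a percentage of stake
) -> None:
    """Reward validators who matched the final outcome and slash the rest."""
    for account in accounts:
        if votes[account.name] == outcome:
            account.stake += reward
        else:
            account.stake -= account.stake * slash_percent // 100


accounts = [
    ValidatorAccount("alice", 100),
    ValidatorAccount("bob", 100),
    ValidatorAccount("carol", 100),
]
# Carol approves a claim that the rest of the network rejects.
votes = {"alice": False, "bob": False, "carol": True}
settle(accounts, votes, outcome=False)

for account in accounts:
    print(account.name, account.stake)
```

With these made-up numbers, alice and bob end at 101 tokens each and carol drops to 90. One bad approval wipes out the reward from ten good ones, which is the whole point: sloppiness has to cost more than diligence pays.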

Another interesting angle here is privacy. Verification systems often run into a tricky issue: if everything needs to be checked publicly, what happens to sensitive data?

Mira tries to avoid that trap by focusing on validating claims rather than exposing raw information. The network doesn’t always need the entire dataset to verify whether a statement is correct. Sometimes it just needs proof that the claim aligns with the underlying data.

That distinction matters a lot if AI starts operating in areas like finance, healthcare, or enterprise systems where data can’t simply be broadcast to the world.
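I don’t know which proof technique Mira actually uses here, so treat the following as an illustration of the general idea rather than a description of the protocol. A classic way to show that one record belongs to a dataset without publishing the rest of it is a Merkle inclusion proof. The data owner publishes only a single root hash, and a checker can confirm one record against that root. The sample records and all function names are made up, and the sketch skips hardening that a production tree would need, such as separating leaf hashes from internal node hashes.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(records: List[bytes]) -> List[List[bytes]]:
    """Return every level of the tree, from hashed records up to the root."""
    level = [h(record) for record in records]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # pair an unmatched last node with itself
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def make_proof(levels: List[List[bytes]], index: int) -> List[Tuple[bytes, bool]]:
    """Collect sibling hashes on the path to the root; the flag means 'sibling is on the left'."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index  # unmatched last node was paired with itself
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(record: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one record plus its proof and compare."""
    node = h(record)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


# Made-up private dataset; only `root` would be shared publicly.
records = [b"acct=1;balance=500", b"acct=2;balance=75", b"acct=3;balance=1200"]
levels = build_levels(records)
root = levels[-1][0]

proof = make_proof(levels, 2)
print(verify(b"acct=3;balance=1200", proof, root))  # genuine record
print(verify(b"acct=3;balance=9999", proof, root))  # tampered record
```

The checker here sees one record and a handful of hashes, never the other accounts. That only proves the record is part of the committed dataset, not that any conclusion drawn from it is true, so it covers just one slice of what “verifying a claim” would mean in practice.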

And this connects to something bigger that’s quietly happening in the AI space. Right now humans still sit in the middle of most AI workflows. We read the outputs. We sanity-check things. We decide whether something makes sense.

But that setup probably won’t last forever.

AI agents are already starting to interact with each other. Software negotiating tasks. Bots running trading strategies. Autonomous systems making decisions without waiting for human approval every time.

In that world, machines will need a way to verify other machines.

Otherwise the entire ecosystem becomes fragile. One confident hallucination could ripple through multiple systems before anyone notices. That’s not a great foundation for an automated future.

Now, to be fair, there are still a lot of open questions around projects like Mira. Verification adds extra computational work, which means additional cost. If verifying every AI claim becomes expensive, adoption could slow down.

Speed is another challenge. Consensus networks are rarely lightning fast. Some real-time AI applications might struggle if verification takes too long.

And the architecture itself is not exactly simple. Breaking outputs into claims, distributing them across validators, reaching consensus: it’s a complex pipeline. Developers will only adopt it if the integration becomes smooth enough.

But despite those challenges, I think the broader idea behind Mira is pointing in the right direction.

The AI industry has spent the last few years racing to build more powerful models. That race isn’t slowing down. But eventually people start asking a different question: “How do we know when these systems are actually telling the truth?”

That’s where verification layers start to matter.

If Mira succeeds, most users probably won’t even realize it exists. And honestly that’s how infrastructure usually works. The systems that hold the internet together—DNS, encryption protocols, routing layers—are invisible to most people.

You just open a website and things work.

Something similar could happen with AI verification. A background network quietly checking claims, attaching proof to information, and making sure machines aren’t confidently passing around nonsense.

Not flashy. Not hype.

Just the plumbing that keeps the whole AI ecosystem from getting a little too… clunky.

Personally, I’m tired of double-checking every single thing my AI tells me. If a protocol like Mira can take that load off my shoulders, I’m all in, even if it costs a bit more.