Systems rarely reveal their real behavior during early testing.

Under light demand everything looks stable.

Queues clear quickly. Verification cycles complete on time. Coordination feels predictable.

But once real value begins moving through the network, another dynamic appears.

Retries.

Requests arrive unevenly.

Responses drift beyond expected windows.

Retry attempts begin appearing in system logs.

Nothing dramatic breaks.

Yet the system begins accumulating operational pressure.

Retry debt.

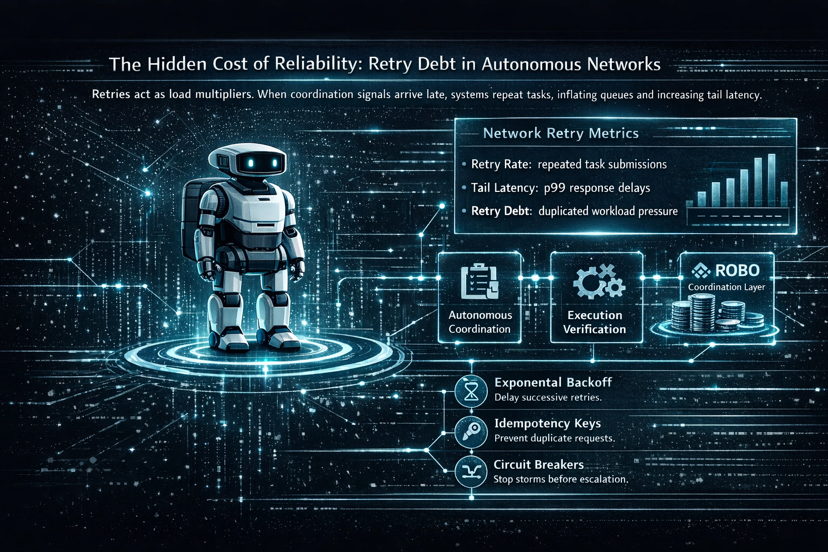

This is retry debt: the silent tax on reliability that grows not from crashes, but from repetition.

In simple terms, retry debt emerges when systems pay for uncertainty with repetition.

The Fabric Foundation’s ROBO infrastructure examines this dynamic within autonomous machine coordination networks. Instead of measuring infrastructure purely through throughput, it looks closely at how coordination behaves when tasks begin repeating across nodes.

In distributed infrastructure, breakdown rarely arrives as a sudden crash.

More often it appears as repetition.

A task stalls, so the client submits it again.

The retry succeeds slightly later than expected.

Another component applies the same recovery logic.

Each retry appears reasonable when viewed in isolation.

Infrastructure, however, never experiences retries individually.

It absorbs them collectively.

Retries are designed as recovery mechanisms.

At scale, however, they often behave like load multipliers.

Verification layers process overlapping requests.

Coordination channels carry duplicated signals.

Many coordination systems rely on timeout windows.

A task is submitted to the network.

Nodes begin processing.

Verification responses are expected within a defined time frame.

If that response does not arrive in time, the client assumes failure.

So the task is submitted again.

The original request may still be progressing somewhere in the system.

Now two copies of the same task exist simultaneously.

This mechanism expands workload without producing any obvious error.
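The timeout mechanism above can be sketched in a few lines. This is a toy model, not ROBO's client: `Network`, `run_client`, the 1.0-second timeout, and the 1.5-second processing time are all hypothetical values chosen so that the original request would have succeeded if the client had simply waited.

```python
from dataclasses import dataclass, field


@dataclass
class Network:
    """Toy network: every submitted copy finishes `processing_time` seconds later."""
    processing_time: float
    in_flight: list = field(default_factory=list)  # (task_id, attempt, finish_time)

    def submit(self, task_id: str, attempt: int, now: float) -> None:
        self.in_flight.append((task_id, attempt, now + self.processing_time))

    def completed(self, task_id: str, now: float) -> bool:
        # True once any copy of the task has finished by time `now`.
        return any(t == task_id and done <= now for t, _, done in self.in_flight)


def run_client(network: Network, task_id: str, timeout: float, max_attempts: int) -> int:
    """Submit a task and resubmit whenever a timeout window passes with no
    response. Returns the number of copies the client submitted."""
    now = 0.0
    for attempt in range(1, max_attempts + 1):
        network.submit(task_id, attempt, now)
        now += timeout  # wait one full timeout window
        if network.completed(task_id, now):
            return attempt
    return max_attempts


net = Network(processing_time=1.5)  # slightly slower than the client expects
copies = run_client(net, "task-42", timeout=1.0, max_attempts=3)
print(copies)              # 2
print(len(net.in_flight))  # 2: the network was asked to do the same work twice
```

No error was raised anywhere, yet the network carried double the work for a single task.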

Distributed systems rarely collapse because of visible faults.

They degrade under repetition.

Airline logistics shows a similar pattern.

A single delayed flight rarely causes chaos.

But if that delay prevents a crew from reaching their next rotation, the following departure slips.

Gate schedules begin overlapping.

Within hours the entire timetable begins unraveling.

Call it schedule debt.

Infrastructure systems experience the same pattern.

Retries rarely shatter a system.

They reshape its internal timing.

Large digital platforms have observed this pattern repeatedly.

In streaming systems or ride-hailing networks, one slow dependency can trigger thousands of simultaneous retries.

Clients interpret delay as failure and attempt again.

What began as a small slowdown multiplies incoming load several times over.

A service that was already under strain may suddenly face four to ten times more traffic.

This is how retry storms emerge.
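One way multiples of that size can arise is when retries stack across layers. The sketch below computes a worst-case bound under a simplifying assumption: every attempt at every layer times out and is retried up to its limit. The function name and the retry limits are illustrative, not taken from any particular platform.

```python
from typing import Sequence


def worst_case_amplification(retries_per_layer: Sequence[int]) -> int:
    """Upper bound on calls reaching the deepest dependency for one original
    request, assuming every attempt at every layer is retried to its limit."""
    factor = 1
    for retries in retries_per_layer:
        factor *= 1 + retries  # one original attempt plus its retries
    return factor


print(worst_case_amplification([3]))     # 4: a single client with 3 retries
print(worst_case_amplification([2, 2]))  # 9: two layers, 2 retries each
```

The bound grows multiplicatively with depth, which is why modest per-layer retry limits can still add up to a storm.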

Latency data often reveals the shift.

Median response time may remain steady around fifty milliseconds.

Tail latency tells a different story.

Under retry pressure, p99 latency can stretch from milliseconds into several seconds.

Users feel those delays even when the average appears healthy.
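A small worked example makes the gap concrete. The latency samples below are invented for illustration (97 fast responses and 3 slowed by retries), and `percentile` is a simple nearest-rank implementation rather than any monitoring tool's exact method.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p percent
    of all samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds.
latencies_ms = [50] * 97 + [2000, 3000, 4000]

p50 = percentile(latencies_ms, 50)
p99 = percentile(latencies_ms, 99)
print(p50)  # 50
print(p99)  # 3000
```

The median does not move at all, while the p99 sits in the seconds range.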

Retries can also introduce financial consequences.

Payment gateways illustrate the risk clearly.

If retry logic runs without idempotency protection, a transaction attempt may execute twice.

A customer clicks once, the system retries automatically, and the card is charged twice.

Technically the infrastructure performed its task.

Coordination did not.
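Idempotency protection can be sketched as follows. `PaymentGateway` is a hypothetical in-memory stand-in, not a real payment API; an actual gateway would need a durable store and an atomic check-and-insert on the key.

```python
import uuid


class PaymentGateway:
    """Toy gateway that remembers results by idempotency key."""

    def __init__(self):
        self._results = {}  # idempotency key -> stored result
        self.charges = []   # every charge that actually executed

    def charge(self, idempotency_key: str, card: str, amount_cents: int) -> dict:
        # A repeated key returns the stored result instead of charging again.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        result = {
            "charge_id": len(self.charges) + 1,
            "card": card,
            "amount_cents": amount_cents,
        }
        self.charges.append(result)
        self._results[idempotency_key] = result
        return result


gateway = PaymentGateway()
key = str(uuid.uuid4())  # generated once per customer click, reused on every retry

first = gateway.charge(key, "card-123", 2500)
retry = gateway.charge(key, "card-123", 2500)  # automatic retry after a timeout

print(len(gateway.charges))  # 1
print(first == retry)        # True
```

The key design choice is that the client, not the server, generates the key, so every retry of the same click carries the same identity.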

ROBO organizes work through three interacting elements.

Task coordination between nodes.

Execution verification across participants.

Retry handling when responses fall outside coordination windows.

When verification responses exceed those windows, retry logic activates automatically.

Clients resubmit tasks.

Nodes attempt execution again.

Verification layers reconcile overlapping outcomes.

In many autonomous networks, retry storms appear when coordination nodes lose alignment with verification pipelines.

What begins as a simple retry becomes a coordination puzzle.

Within Fabric Foundation’s ROBO environment, robots stake $ROBO to obtain coordination priority and task access. If verification arrives too late, duplicated movement instructions could be triggered, draining battery capacity or creating conflicts in shared physical space.

What might look like a harmless retry in software can translate into measurable operational loss in the physical world.

Understanding how this pressure forms requires looking at the system through three observable layers.

Queue formation

In distributed infrastructure, queues are not anomalies.

They act as indicators of pressure inside the system.

Tasks wait for execution.

Messages wait for propagation.

Verification responses wait for confirmation.

Under normal conditions these queues clear quickly.

Retries disturb that balance.

A task presumed failed returns to the queue.

Now the network processes both new work and repeated work.

Average latency may remain stable.

Yet deeper metrics begin shifting.

Queue latency tails stretch beyond expected coordination windows.

An early signal often appears as divergence between median latency and tail latency across task queues.

The system continues processing tasks.

But its timing becomes uneven.
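The effect on a queue can be illustrated with a small deterministic simulation. Everything here is an assumption made for the sketch: a single FIFO queue, a fixed capacity of 5 tasks per tick, a short burst of arrivals, and clients that resubmit once if a task is still queued after `timeout` ticks while the original stays in place.

```python
from collections import deque


def simulate(ticks, arrivals, capacity, timeout=None):
    """Return the queue length after each tick. If `timeout` is set, any task
    still queued `timeout` ticks after submission is resubmitted once, and the
    original copy remains in the queue."""
    queue = deque()  # entries: [submitted_tick, already_retried]
    lengths = []
    for tick in range(ticks):
        for _ in range(arrivals(tick)):
            queue.append([tick, False])
        for _ in range(min(capacity, len(queue))):
            queue.popleft()  # serve the oldest entries first
        if timeout is not None:
            duplicates = 0
            for entry in queue:
                if not entry[1] and tick - entry[0] >= timeout:
                    entry[1] = True
                    duplicates += 1
            queue.extend([tick, True] for _ in range(duplicates))
        lengths.append(len(queue))
    return lengths


def burst(tick):
    # Hypothetical load: 10 tasks per tick for three ticks, then 4 per tick.
    return 10 if tick < 3 else 4


without_retries = simulate(7, burst, capacity=5)
with_retries = simulate(7, burst, capacity=5, timeout=2)

print(without_retries)  # [5, 10, 15, 14, 13, 12, 11]
print(with_retries)     # [5, 10, 15, 14, 18, 21, 23]
```

Without retries the backlog from the burst drains slowly. With retries the same burst produces a backlog that keeps growing, because duplicated work now arrives faster than spare capacity can absorb it.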

Trust thresholds

Verification answers a narrow question.

Did the operation occur?

Retries introduce a different concern.

Who absorbs the operational cost when coordination breaks down?

A retry may reflect network delay.

It may signal node failure.

Or it may simply reveal mismatched timing between infrastructure layers.

Verification confirms outcomes.

It does not assign responsibility.

Distributed systems do not remove coordination costs.

They redistribute them.

Operational coping

Eventually the network adapts.

Operators modify timeout parameters.

Clients introduce caching layers.

Infrastructure providers refine routing paths.

Each participant attempts to reduce exposure to retries.

Over time these adjustments reshape system behavior.

Certain requests begin clearing faster.

Some nodes become preferred execution paths.

The infrastructure continues operating.

Yet the symmetry of coordination fades.

If ROBO succeeds as an infrastructure experiment, its real contribution may lie in measurement.

Retry pressure rarely appears in simple dashboards.

More subtle signals expose it.

Retry rates per task begin rising beyond normal baselines.

Verification delays extend past expected response windows.

Queue latency tails expand across coordination layers.
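The first of these signals is simple to compute from an attempt log. The sketch below assumes a hypothetical log containing one task id per attempt; the baseline and the 1.5x tolerance are placeholder values, not recommended thresholds.

```python
from collections import Counter


def retry_rate(attempt_log):
    """Average number of retries per distinct task, given one task id per attempt."""
    attempts = Counter(attempt_log)
    if not attempts:
        return 0.0
    retries = sum(count - 1 for count in attempts.values())
    return retries / len(attempts)


def exceeds_baseline(rate, baseline, tolerance=1.5):
    """Flag a rate that is more than `tolerance` times the normal baseline."""
    return rate > baseline * tolerance


log = ["a", "b", "b", "c", "c", "c", "d"]  # task b retried once, task c twice
rate = retry_rate(log)

print(rate)                         # 0.75
print(exceeds_baseline(rate, 0.2))  # True
```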

Infrastructure stress rarely begins with visible failure.

It begins with repetition.

To contain that pressure, systems often rely on exponential backoff with jitter to prevent synchronized retries, idempotency keys to ensure duplicate requests take effect only once, and circuit breakers to halt retry storms before they cascade.
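Two of these techniques, backoff with jitter and a circuit breaker, are sketched below. This is a minimal illustration under stated assumptions, not a production library: `backoff_delays` uses the "full jitter" variant (a uniform delay between zero and the capped exponential bound), and `CircuitBreaker` is a simplified breaker whose names and thresholds are hypothetical.

```python
import random
import time


def backoff_delays(base, cap, attempts, rng):
    """Full-jitter exponential backoff: delay n is drawn uniformly from
    [0, min(cap, base * 2**n)], so clients do not retry in lockstep."""
    return [rng.uniform(0, min(cap, base * 2 ** n)) for n in range(attempts)]


class CircuitBreaker:
    """Opens after `threshold` consecutive failures and rejects calls until
    `cooldown` seconds have passed. After the cooldown, calls are allowed
    again: a success closes the breaker, a failure re-opens it."""

    def __init__(self, threshold, cooldown, clock=time.monotonic):
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def allow(self):
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.cooldown

    def record(self, success):
        if success:
            self.failures = 0
            self.opened_at = None
        else:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()


delays = backoff_delays(base=0.1, cap=2.0, attempts=6, rng=random.Random(0))
# Upper bounds per attempt: 0.1, 0.2, 0.4, 0.8, 1.6, 2.0 seconds.

fake_now = [0.0]  # injectable clock so the example runs without sleeping
breaker = CircuitBreaker(threshold=3, cooldown=10.0, clock=lambda: fake_now[0])
for _ in range(3):
    breaker.record(success=False)
print(breaker.allow())  # False: retries are halted
fake_now[0] = 10.0
print(breaker.allow())  # True: cooldown elapsed, a trial call may proceed
```

Backoff spreads retries out in time, while the breaker stops them entirely once a dependency is clearly unhealthy. The two are usually combined rather than treated as alternatives.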

Economic incentives are often presented as the answer to scaling.

Reward operators and capacity expands.

More compute.

More validators.

More redundancy.

Yet incentives alone cannot align timing assumptions across execution, verification, and governance layers.

Retries appear when those layers begin operating at different speeds.

Autonomous infrastructure does not remove coordination problems.

It redistributes them across queues, retries, and verification cycles.

Retries are signals.

Signals that coordination assumptions have drifted.

Signals that infrastructure is absorbing uncertainty.

Signals that repetition is becoming routine.

And when repetition becomes routine, reliability stops being a guarantee.

It becomes a cost center.

@Fabric Foundation #ROBO $ROBO