For a long time, the dominant mental model around AI has been simple.

You ask.

It answers.

You decide whether to trust it.

That interaction feels natural because it mirrors how we use tools. A calculator returns a number. A search engine returns links. AI, in that sense, behaves like a more articulate extension of the same pattern.

But the more AI touches operational systems (finance workflows, compliance analysis, risk evaluation, automated coordination), the more that tool-based model starts to crack.

Because tools don’t carry responsibility.

Systems do.

What’s interesting about Mira is that it seems designed around this distinction. Instead of treating AI output as something an individual user interprets privately, it treats the output as something that may eventually need to stand inside a larger environment where other actors depend on it.

And when multiple actors depend on the same conclusion, the question changes.

It’s no longer “Does this look reasonable?”

It becomes “How did this become something everyone is comfortable acting on?”

That difference sounds philosophical, but it has practical consequences.

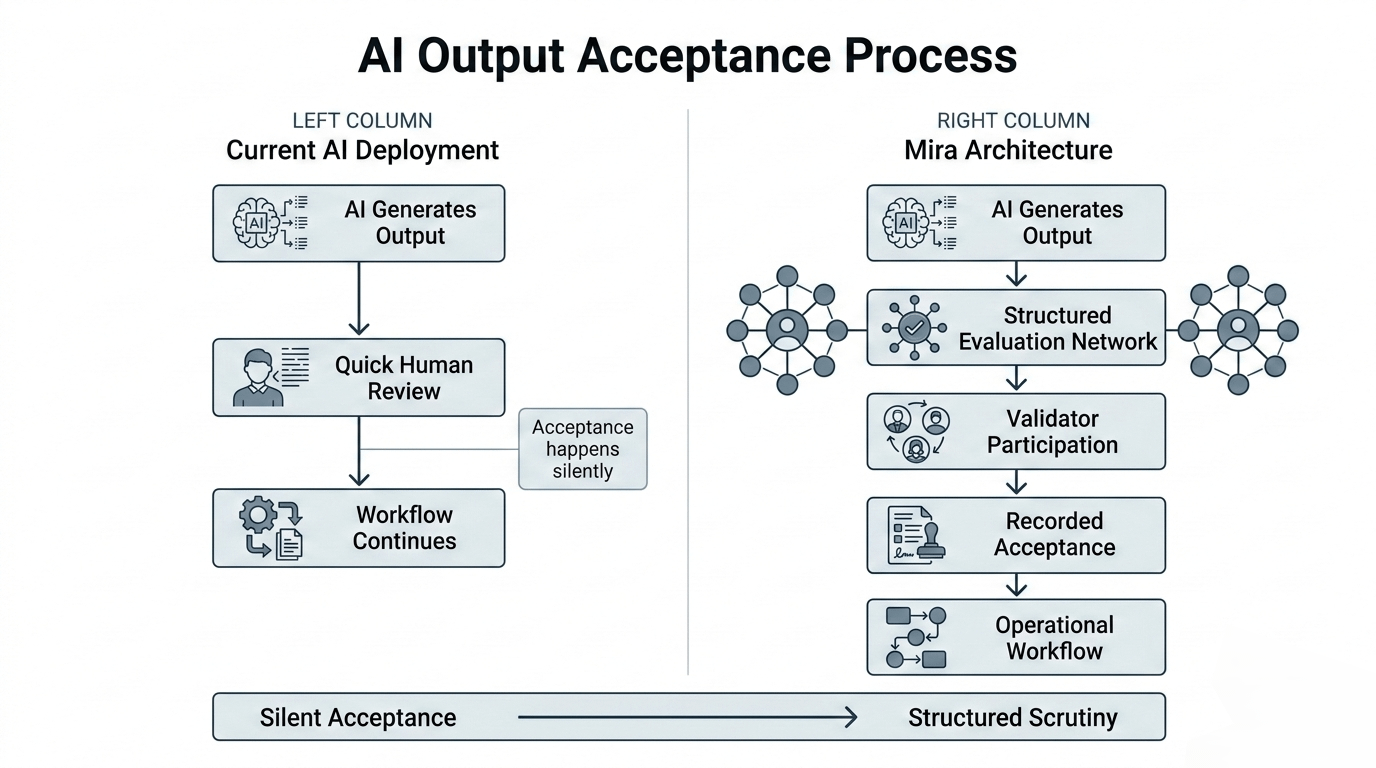

In most AI deployments today, the moment when an output becomes operational is vague. A model generates something, someone glances at it, and the workflow moves forward. If the result turns out to be flawed, it’s often hard to reconstruct how the decision gained legitimacy in the first place.

The output existed.

Someone accepted it.

Everything else happened afterward.

Mira introduces the idea that this acceptance should not be a silent event.

Instead, it should happen inside a structured environment where evaluation is visible, contestable, and economically meaningful. Outputs don’t move forward simply because they were generated. They move forward because they survive scrutiny within a system designed to encourage careful examination.

This design reframes AI in a subtle but important way.

Rather than acting as a source of isolated answers, AI becomes a participant in a process where conclusions emerge through interaction. The model may produce the initial reasoning, but the network determines whether that reasoning becomes something others rely on.
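To make the split between generation and acceptance concrete, here is a minimal sketch in Python. It is not Mira's actual protocol. The `Claim` type, the `quorum` of three evaluators, and the two-thirds `threshold` are all assumptions chosen for illustration. The point is only that a generated output stays in a "generated" state until enough recorded verdicts move it somewhere else.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    GENERATED = "generated"  # produced by a model, not yet relied upon
    ACCEPTED = "accepted"    # survived evaluation
    REJECTED = "rejected"    # failed evaluation


@dataclass
class Claim:
    """Hypothetical container for one model output and its verdicts."""
    text: str
    status: Status = Status.GENERATED
    verdicts: dict[str, bool] = field(default_factory=dict)  # evaluator -> approve?


def record_verdict(claim: Claim, evaluator: str, approve: bool) -> None:
    # Acceptance is never silent: every verdict is stored on the claim.
    claim.verdicts[evaluator] = approve


def finalize(claim: Claim, quorum: int = 3, threshold: float = 2 / 3) -> Status:
    """Change the status only once `quorum` evaluators have weighed in."""
    if len(claim.verdicts) < quorum:
        return claim.status  # still just a generated output
    share = sum(claim.verdicts.values()) / len(claim.verdicts)
    claim.status = Status.ACCEPTED if share >= threshold else Status.REJECTED
    return claim.status


if __name__ == "__main__":
    claim = Claim("The filing deadline applies to this entity.")
    record_verdict(claim, "evaluator-a", True)
    record_verdict(claim, "evaluator-b", True)
    print(finalize(claim))  # quorum not reached, status unchanged
    record_verdict(claim, "evaluator-c", False)
    print(finalize(claim))  # quorum reached, status now decided
```

Notice that the model never appears in `finalize`. Whatever produced the text, the status change depends only on the recorded verdicts.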

That separation between generation and acceptance is where Mira’s architecture becomes interesting.

Model generation is improving quickly across the industry. Every few months, new benchmarks appear and new architectures outperform the previous generation. Competing directly in that race is expensive and unpredictable.

But the process that decides when AI output becomes reliable enough for shared use—that process remains largely unsolved.

Most organizations still manage it informally.

Engineers build internal review layers.

Compliance teams document evaluation procedures.

Managers approve outputs before they move downstream.

All of this works, but it scales poorly. As AI output volume increases, human oversight becomes a bottleneck. Systems that were designed for experimentation struggle when they start influencing real decisions across departments or institutions.

A decentralized validation environment changes that dynamic.

Instead of relying entirely on internal review, evaluation becomes something that participants in the network have incentives to perform carefully. The protocol rewards accurate assessment and discourages careless acceptance.
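One way to picture "economically meaningful" evaluation is a staking round. The sketch below is a toy model, not a description of Mira's reward mechanism. The function name `settle`, the reward and penalty rates, and the use of the stake-weighted majority as a stand-in for "accurate" are all assumptions. A real protocol would need a stronger notion of correctness than agreement with the majority.

```python
def settle(
    stakes: dict[str, float],
    votes: dict[str, bool],
    reward_rate: float = 0.05,
    penalty_rate: float = 0.10,
) -> tuple[bool, dict[str, float]]:
    """Settle one hypothetical evaluation round.

    stakes: evaluator -> amount at risk
    votes:  evaluator -> True (accept the output) or False (reject it)
    Returns the outcome and the updated stakes.
    """
    weight_for = sum(stakes[e] for e, v in votes.items() if v)
    weight_against = sum(stakes[e] for e, v in votes.items() if not v)
    outcome = weight_for > weight_against  # ties count as rejection

    new_stakes = dict(stakes)
    for evaluator, vote in votes.items():
        # Assumption: agreeing with the outcome earns a reward,
        # disagreeing costs a larger penalty.
        rate = reward_rate if vote == outcome else -penalty_rate
        new_stakes[evaluator] = stakes[evaluator] * (1 + rate)
    return outcome, new_stakes


if __name__ == "__main__":
    stakes = {"a": 100.0, "b": 50.0, "c": 30.0}
    votes = {"a": True, "b": True, "c": False}
    outcome, updated = settle(stakes, votes)
    print(outcome, updated)
```

The penalty is set higher than the reward on purpose in this sketch, so that waving an output through without looking is a losing strategy over many rounds.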

Over time, this can produce something that current AI pipelines lack: collective confidence that emerges from structured participation rather than individual judgment.

This matters especially in environments where multiple independent systems interact.

Imagine a financial network where several services rely on AI-generated interpretations of regulatory language. In today’s architecture, each service might run its own model, interpret the results independently, and hope the outputs align. With enough services and enough questions, some of those interpretations will diverge.

With a shared validation layer, the conclusion itself can become something multiple systems reference. Instead of each system trusting its own interpretation, they rely on a conclusion that already passed through a known evaluation process.
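As a rough illustration of what "referencing a conclusion" could look like, the sketch below keeps validated conclusions in a shared registry keyed by a hash of the question. Everything here is hypothetical: `ValidationRegistry`, `ValidatedConclusion`, the normalization rule, and the in-memory dictionary standing in for a network. The record also carries the conditions of acceptance, so a consumer can check how the conclusion was agreed on and not only what it says.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ValidatedConclusion:
    question: str
    conclusion: str
    evaluators: tuple[str, ...]  # who took part in the evaluation
    approvals: int               # how many of them approved
    threshold: float             # approval share that was required


class ValidationRegistry:
    """In-memory stand-in for a shared validation layer (an assumption)."""

    def __init__(self) -> None:
        self._records: dict[str, ValidatedConclusion] = {}

    @staticmethod
    def key(question: str) -> str:
        # Normalize case and whitespace so equivalent questions share a key.
        normalized = " ".join(question.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def publish(self, record: ValidatedConclusion) -> str:
        if not record.evaluators:
            raise ValueError("a conclusion needs at least one evaluator")
        share = record.approvals / len(record.evaluators)
        if share < record.threshold:
            raise ValueError("conclusion did not meet its acceptance threshold")
        k = self.key(record.question)
        self._records[k] = record
        return k

    def lookup(self, question: str) -> Optional[ValidatedConclusion]:
        return self._records.get(self.key(question))


if __name__ == "__main__":
    registry = ValidationRegistry()
    registry.publish(ValidatedConclusion(
        question="Does clause X require quarterly disclosure?",
        conclusion="Yes, for entities above the reporting threshold.",
        evaluators=("e1", "e2", "e3"),
        approvals=3,
        threshold=2 / 3,
    ))
    # Two independent services resolve the same question to the same record.
    for service in ("payments", "reporting"):
        record = registry.lookup("does clause X require  quarterly disclosure?")
        print(service, record.conclusion, record.approvals, len(record.evaluators))
```

Both services end up holding the same record, including who evaluated it and under what threshold, instead of two privately generated interpretations.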

That shifts coordination from duplicated reasoning to shared validation.

It also introduces a form of accountability that current AI deployments often lack.

When an AI-generated conclusion becomes part of a network process, participants can examine not just the output, but the conditions under which it was accepted. Agreement stops being opaque. It becomes something observable.

This doesn’t eliminate disagreement.

In fact, it can surface disagreement earlier.

But surfacing disagreement earlier is often healthier for complex systems. It prevents silent divergence, where different actors unknowingly depend on incompatible interpretations of the same information.

Mira’s architecture appears to favor this kind of early visibility.

By making evaluation part of the protocol, it narrows the space where uncertain outputs can quietly become trusted simply because nobody challenged them.

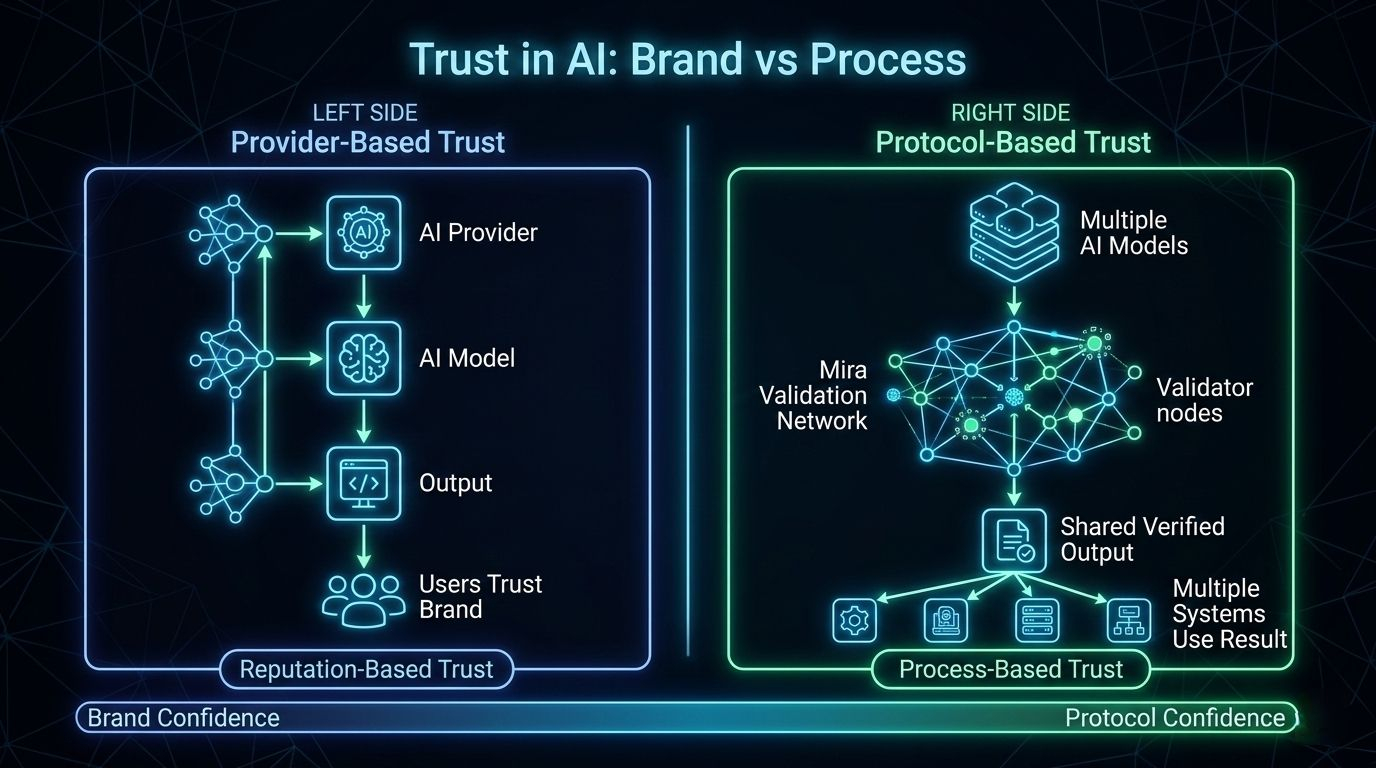

Another implication of this approach is that it decouples trust from model identity.

Right now, many organizations trust AI outputs largely because they trust the provider. If the model comes from a respected company, the output inherits some of that credibility.

But reputation-based trust is fragile. Providers change. Models evolve. Public perception shifts.

A protocol-based validation environment creates a different foundation. Trust emerges from the process rather than from the brand that generated the answer.

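That process-driven trust is easier for independent systems to rely on, because they can inspect how a conclusion was accepted without needing any relationship with the provider that generated it.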

Over time, this could reshape how AI integrates into distributed environments. Instead of every application carrying its own private trust model, networks could share a validation surface where conclusions gain legitimacy before becoming operational.

In that world, using AI wouldn’t mean simply asking a model for answers.

It would mean participating in an ecosystem where answers become reliable through collective scrutiny.

That’s a much heavier responsibility than a chatbot interaction.

But it’s also what makes AI useful beyond individual productivity.

The real transformation of AI will not happen when models become slightly more accurate or slightly more articulate.

It will happen when the systems around them make it possible for their conclusions to be trusted across organizations, applications, and automated processes.

Mira appears to be building precisely that surrounding structure.

Not a smarter model.

A more reliable environment for what models produce.

And in the long run, the environment where intelligence lands may matter more than the intelligence itself.