Trust, truth and token economics

When I first looked at Mira, I was more interested in the problem it addresses than in the token hype: making sure software outputs are reliable. Large AI models sound confident, but they can fabricate facts or reproduce the biases of their training data. According to the whitepaper, no single model is immune to hallucinations and bias. That gap means AI remains risky in high-stakes areas such as disease diagnosis or loan decisions. This is why verification matters.

The social aspect is no less important. Until now we have relied on experts, regulators and peer review to establish the truth. Mira proposes supplementing or replacing that with on-chain consensus. Rather than trusting a single AI or a single institution, it distributes claims across many independent models and treats their agreement as evidence. In theory, this spreads power out instead of concentrating it in one place. In practice, it raises a troubling question: what happens when markets determine truth?

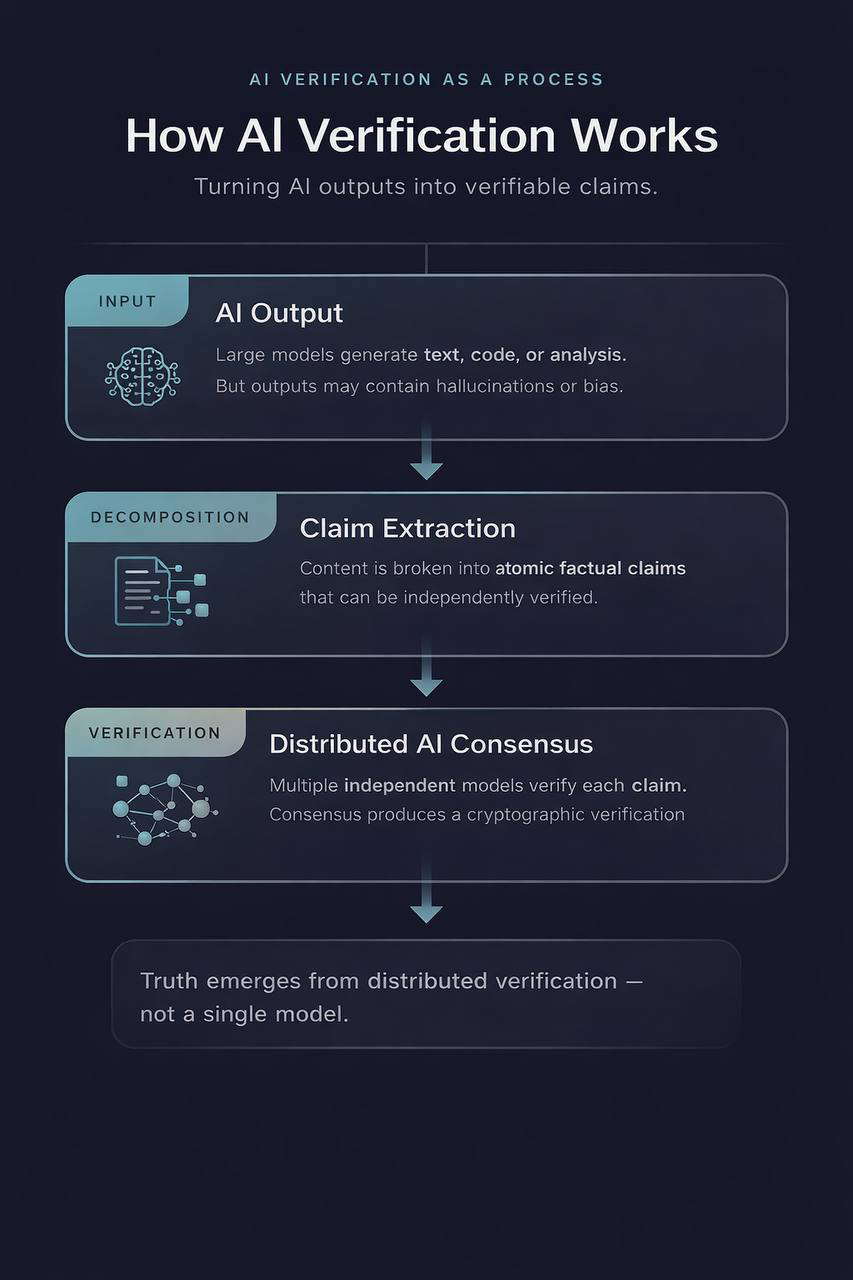

How Mira verifies AI

Mira breaks complex outputs into simple claims and sends them to verifier nodes, which run different models. When enough models accept a claim, the claim is accepted and the result is recorded on a public ledger. Verifier nodes are paid through a combination of proof-of-work and proof-of-stake that rewards good work and slashes the stake of bad actors. The aim is to make lying expensive and to prevent manipulation of the system.
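To make the flow concrete, here is a minimal sketch of claim splitting and threshold voting. It is not Mira's actual implementation: the sentence-based splitter, the two-thirds threshold, and every name in it (`Verifier`, `split_into_claims`, `verify_claim`, the toy verifier functions) are my own assumptions for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List

# Hypothetical: a verifier is any function that accepts or rejects one claim.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    yes_votes: int
    total_votes: int
    accepted: bool


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: List[Verifier],
                 threshold: Fraction = Fraction(2, 3)) -> Verdict:
    """Accept the claim when at least `threshold` of the verifiers agree.

    The two-thirds default is an assumption, not a documented parameter.
    """
    votes = [verify(claim) for verify in verifiers]
    yes = sum(votes)
    return Verdict(claim, yes, len(votes), yes >= threshold * len(votes))


# Toy verifiers standing in for independent models.
def knows_capitals(claim: str) -> bool:
    return claim == "Paris is the capital of France"


def accepts_everything(claim: str) -> bool:
    return True


def rejects_everything(claim: str) -> bool:
    return False


verifiers = [knows_capitals, accepts_everything, rejects_everything]
output = "Paris is the capital of France. Lyon is the capital of France."
verdicts = [verify_claim(c, verifiers) for c in split_into_claims(output)]

for v in verdicts:
    print(f"{v.claim!r}: {v.yes_votes}/{v.total_votes} accepted={v.accepted}")
```

Even in this toy version the weak point is visible: the result is only as good as the independence of the verifiers, since a careless model that accepts everything still counts as a full vote.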

What separates Mira from academic ensemble methods is the token layer. The $MIRA token serves as both collateral and payment. The total supply is one billion tokens, of which roughly a fifth is released at launch. Developers pay for verification services in MIRA. Operators lock the token to run nodes. Token holders vote on protocol changes. The distribution is meant to be community-centric: small airdrops and node rewards, a large pool for grants and partners, and long-term vesting for the team and investors.
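The supply figures are easier to weigh as numbers. The arithmetic below treats "roughly a fifth" as exactly one fifth, which is my simplification; the real launch figure may differ.

```python
from fractions import Fraction

TOTAL_SUPPLY = 1_000_000_000       # one billion MIRA, as stated above
LAUNCH_FRACTION = Fraction(1, 5)   # assumption: "roughly a fifth" taken as exactly 1/5

circulating_at_launch = int(TOTAL_SUPPLY * LAUNCH_FRACTION)
locked_at_launch = TOTAL_SUPPLY - circulating_at_launch

print(f"circulating at launch: {circulating_at_launch:,}")
print(f"still locked:          {locked_at_launch:,}")
```

Under that assumption about 200 million tokens circulate at launch and about 800 million remain to be released over time, which is why the unlock schedule matters so much for price.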

By turning verification into a marketplace, Mira is betting that good incentives will keep people honest. Claims become jobs, auditors become bidders, and the price serves as both the fee and the guarantee. The hope is that rational actors will prefer truth because cheating is costly. It is an elegant concept, but it mixes philosophy with the kind of speculation that has poisoned other crypto projects.
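The bet can be written as a simple expected-value comparison. This is a sketch of the general staking-and-slashing logic, not a formula from the whitepaper; the function `cheating_pays` and all of its parameters are hypothetical, and the numbers are made up.

```python
def cheating_pays(honest_reward: float, cheat_gain: float,
                  stake: float, detection_prob: float) -> bool:
    """Return True if a risk-neutral node expects to earn more by cheating.

    Assumed model: an undetected cheat earns `cheat_gain`; a detected cheat
    earns nothing and loses the whole `stake`.
    """
    expected_cheat = (1 - detection_prob) * cheat_gain - detection_prob * stake
    return expected_cheat > honest_reward


# Large stake: even a 10% chance of being caught makes cheating a bad deal.
print(cheating_pays(honest_reward=1.0, cheat_gain=5.0, stake=100.0, detection_prob=0.1))

# Small stake: the same detection rate no longer deters.
print(cheating_pays(honest_reward=1.0, cheat_gain=5.0, stake=10.0, detection_prob=0.1))
```

The comparison shows what the design depends on: the stake and the chance of being caught must be high enough relative to the gain from lying, and both can erode if the token price falls or verifiers collude.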

Rewards and behavioural tension

The designers assume that participants are not angels. The protocol rewards real work and punishes dishonesty, but harnessing self-interest is difficult. High token prices make staking attractive, but they also attract speculation. A cautionary note from Bitget points out that decentralized AI is still emerging, that token unlocks may trigger selling, and that markets are volatile. Because staking is paired with proof-of-work, nodes must do actual work, which rules out lazy staking but adds complexity. Model diversity is another risk: if most verifiers run similar models, or the models are operated by a small number of people, the system may reproduce the same biases. Token-weighted governance also lets large holders influence how truth is defined.
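The governance concern is easy to illustrate. The sketch below compares a token-weighted vote with a one-holder-one-vote count; the balances, holder names and both functions are invented for the example and say nothing about Mira's real holder distribution or voting rules.

```python
from typing import Dict


def token_weighted_vote(balances: Dict[str, int], votes: Dict[str, bool]) -> bool:
    """A proposal passes if the tokens voting yes outweigh the tokens voting no."""
    yes = sum(balances[holder] for holder, vote in votes.items() if vote)
    no = sum(balances[holder] for holder, vote in votes.items() if not vote)
    return yes > no


def head_count_vote(votes: Dict[str, bool]) -> bool:
    """A proposal passes if more holders vote yes than no."""
    yes = sum(1 for vote in votes.values() if vote)
    return yes > len(votes) - yes


# Hypothetical distribution: one large holder and 100 small ones.
balances = {"whale": 600_000}
balances.update({f"small_{i}": 5_000 for i in range(100)})

# The large holder votes yes; every small holder votes no.
votes = {holder: holder == "whale" for holder in balances}

print("token-weighted:", token_weighted_vote(balances, votes))
print("head count:    ", head_count_vote(votes))
```

Here a single holder with 600,000 tokens outvotes 100 holders with 500,000 tokens between them, so the proposal passes on token weight although 100 of 101 participants oppose it.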

Possible futures

Several paths are possible. At best, Mira becomes an AI aid for high-stakes settings: hospitals, law firms and banks display its certificates to demonstrate that their AI decisions are compliant. A second outcome is that it remains a niche tool: the network answers millions of questions and powers apps such as Klok and Astro, but further growth stalls if competitors offer easier checks, businesses build in-house solutions, or regulators remain suspicious. The worst case is speculation crowding out the mission. Large unlocks may lead to sell-offs, a handful of large holders may dominate decision-making, and price volatility may push out operators.

My reflections

After reading the whitepaper, the tokenomics and third-party sentiment, I come away both respectful and worried. On the positive side, Mira acknowledges that AI needs a trust layer, and it does not assume that everyone is good. The hybrid proof-of-work/proof-of-stake design and the token allocation show that the team cares about security and longevity, and the lock-up of team and investor tokens signals a long-term perspective.

However, the core assumption that markets can determine truth has not been tested. Markets misprice risk, and epistemic markets may fail too. Token-weighted governance favours money over expertise, which may entrench existing power. Success will depend on how much interest researchers and developers take in Mira and on whether regulators accept its certificates. An ecosystem fund and builder grants will not necessarily produce a community.

Finally, Mira sits at the intersection of two experiments: decentralized finance and AI. The urge to replace human controls with algorithms collides with messy human behaviour. Mira's network could become one of the major layers of AI trust, provided that it balances incentives, prevents concentration, and responds to regulation. Or it might turn into another lesson in what happens when technology is built on speculation. I remain sceptically optimistic, but I keep a jaded view of the role economics plays in the search for truth.