When you look at Fabric Protocol, it feels less like a piece of software and more like the beginnings of a society for machines. The idea didn’t start with tokens or operating systems; it started with a simple observation: robots don’t trust each other. A delivery robot from one company can’t easily coordinate with a warehouse robot from another. They live in separate worlds, speaking different languages, locked inside their own servers. That lack of trust is what keeps them from forming real teams.

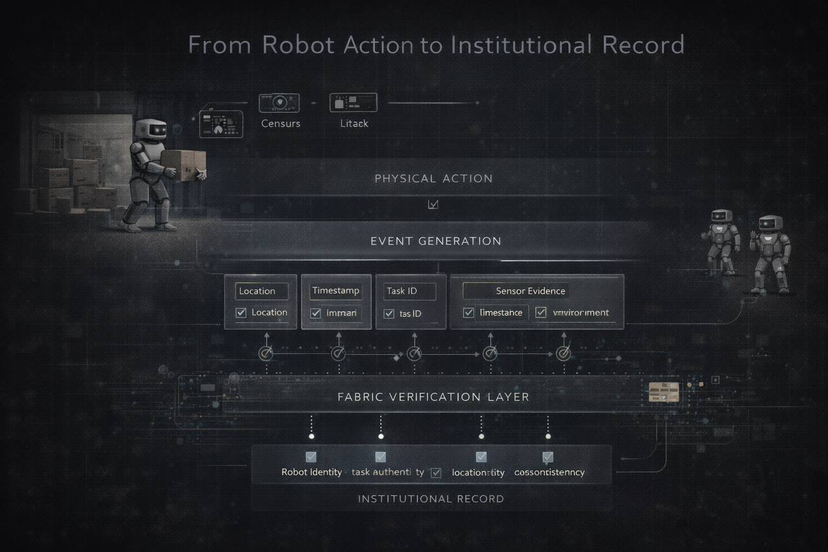

Fabric steps in by giving robots something humans have relied on for centuries: institutions. Just as contracts, accounting, and property rights allow people to cooperate at scale, Fabric builds a governance layer for machines. Every robot gets a cryptographic identity tied to its hardware. Every action (moving goods, scanning a building, inspecting infrastructure) becomes a verifiable record. These records aren’t private logs; they’re shared across the network, open to inspection and correction. A robot can’t just claim it was on the second floor; other sensors and robots check the claim, and only then is the record finalized. That’s how Fabric turns behavior into official proof.
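To make the cross-checking idea concrete, here is a minimal Python sketch of quorum-based finalization. It is an illustration under stated assumptions, not Fabric’s implementation: the `Robot`, `Record`, and `Ledger` names are hypothetical, HMAC-SHA256 stands in for a hardware-backed public-key signature, and the rule that a claim becomes final once a fixed number of independent witnesses confirm it is an assumed policy.

```python
import hashlib
import hmac
from dataclasses import dataclass, field
from typing import Dict, Set

# Hypothetical model of hardware-bound identities and cross-checked records.
# HMAC-SHA256 is a stand-in for a public-key signature so the sketch runs with
# the standard library; a real verifier would hold public keys, not secrets.


@dataclass
class Robot:
    robot_id: str
    secret_key: bytes  # assumed to be sealed in the robot's hardware

    def sign(self, message: bytes) -> str:
        return hmac.new(self.secret_key, message, hashlib.sha256).hexdigest()


@dataclass
class Record:
    robot_id: str
    claim: str
    signature: str
    attestations: Set[str] = field(default_factory=set)
    finalized: bool = False


class Ledger:
    """Toy shared ledger: a claim becomes final only after enough independent witnesses confirm it."""

    def __init__(self, quorum: int) -> None:
        self.quorum = quorum
        self.keys: Dict[str, bytes] = {}  # registered identities

    def register(self, robot: Robot) -> None:
        self.keys[robot.robot_id] = robot.secret_key

    def submit(self, robot_id: str, claim: str, signature: str) -> Record:
        # Reject claims whose signature doesn't match the registered identity.
        key = self.keys.get(robot_id)
        expected = hmac.new(key, claim.encode(), hashlib.sha256).hexdigest() if key else ""
        if not hmac.compare_digest(expected, signature):
            raise ValueError("signature does not match a registered identity")
        return Record(robot_id, claim, signature)

    def attest(self, record: Record, witness_id: str, observed: bool) -> None:
        # Witnesses must be registered and cannot vouch for their own claims.
        # (Attestations are unsigned here to keep the sketch short.)
        if witness_id not in self.keys or witness_id == record.robot_id:
            return
        if observed:
            record.attestations.add(witness_id)
        if len(record.attestations) >= self.quorum:
            record.finalized = True


ledger = Ledger(quorum=2)
robots = [Robot(f"robot-{i}", f"key-{i}".encode()) for i in range(3)]
for robot in robots:
    ledger.register(robot)

claim = "on floor 2"
record = ledger.submit("robot-0", claim, robots[0].sign(claim.encode()))
ledger.attest(record, "robot-0", observed=True)  # self-attestation is ignored
ledger.attest(record, "robot-1", observed=True)
print(record.finalized)  # False: only one independent witness so far
ledger.attest(record, "robot-2", observed=True)
print(record.finalized)  # True: quorum of two reached
```

The quorum size is the main knob in this sketch: a higher value makes a single lying or faulty sensor less able to finalize a false record, at the cost of slower finalization.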

This changes the way robots work. Instead of central servers issuing commands, Fabric creates open task markets. Jobs are posted, robots pick them up, and once a job is completed, the system verifies the work and pays automatically. Deposits and settlements are enforced by code, not trust. It feels less like machines being ordered around and more like them negotiating contracts in a marketplace.
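The post, claim, verify, and pay cycle can be sketched as a small escrow state machine. This is a hypothetical Python model, not Fabric’s contract interface: `TaskMarket`, `Job`, and the rule that a worker locks a deposit which is returned on verified completion and forfeited to the poster on failure are all illustrative assumptions, and `verified` simply stands in for whatever verification the network performs.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional

# Hypothetical task market with escrowed rewards and worker deposits.
# Names, states, and amounts are illustrative, not Fabric's actual API.


class Status(Enum):
    OPEN = "open"
    CLAIMED = "claimed"
    SETTLED = "settled"


@dataclass
class Job:
    job_id: int
    poster: str
    reward: int   # escrowed when the job is posted
    deposit: int  # bond the worker must lock to claim the job
    worker: Optional[str] = None
    status: Status = Status.OPEN


class TaskMarket:
    def __init__(self, balances: Dict[str, int]) -> None:
        self.balances = dict(balances)
        self.jobs: Dict[int, Job] = {}
        self.escrow = 0

    def _lock(self, account: str, amount: int) -> None:
        if self.balances.get(account, 0) < amount:
            raise ValueError(f"{account} has insufficient funds")
        self.balances[account] -= amount
        self.escrow += amount

    def _release(self, account: str, amount: int) -> None:
        self.escrow -= amount
        self.balances[account] = self.balances.get(account, 0) + amount

    def post(self, poster: str, reward: int, deposit: int) -> int:
        self._lock(poster, reward)
        job_id = len(self.jobs) + 1
        self.jobs[job_id] = Job(job_id, poster, reward, deposit)
        return job_id

    def claim(self, job_id: int, worker: str) -> None:
        job = self.jobs[job_id]
        if job.status is not Status.OPEN:
            raise ValueError("job is not open")
        self._lock(worker, job.deposit)
        job.worker, job.status = worker, Status.CLAIMED

    def settle(self, job_id: int, verified: bool) -> None:
        """Pay out from escrow as soon as verification returns a verdict."""
        job = self.jobs[job_id]
        if job.status is not Status.CLAIMED or job.worker is None:
            raise ValueError("job has no active claim")
        if verified:
            # Worker receives the reward plus its deposit back.
            self._release(job.worker, job.reward + job.deposit)
        else:
            # Reward returns to the poster; the forfeited deposit compensates them.
            self._release(job.poster, job.reward + job.deposit)
        job.status = Status.SETTLED


market = TaskMarket({"warehouse": 100, "courier-bot": 10})
job = market.post("warehouse", reward=20, deposit=5)
market.claim(job, "courier-bot")
market.settle(job, verified=True)
print(market.balances)  # {'warehouse': 80, 'courier-bot': 30}
```

Because both the reward and the deposit sit in escrow before any work starts, neither side has to trust the other: the outcome depends only on the verification result.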

The reason this matters is scale. A factory can manage a handful of robots with central control, but what happens when thousands of robots operate across cities, firms, and countries? They need answers to basic questions: Who are you? Did you finish the job? Can I trust your data? Fabric provides those answers through identity checks, shared context, and automatic settlements. It’s the same invisible scaffolding that lets humans trade globally, now applied to machines.

The boldest design choice was embedding governance rules directly into code. Human institutions evolve slowly through laws and procedures, but Fabric’s rules can be updated programmatically. Smart contracts can split profits among multiple robots, enforce insurance deposits, or restrict certain devices to specific tasks. The risk is adoption. If too few robots join, Fabric remains an experiment. Metrics like throughput, verification speed, and active identities will decide whether it scales. The team is betting on interoperability, making sure robots from different manufacturers can join without rewriting their systems.
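Profit splitting is the simplest of these programmable rules to illustrate. The function below is a hypothetical sketch, not Fabric’s contract code: it assumes payments are integer token units and divides them by weight using the largest-remainder method, so no unit is lost to rounding and ties are broken deterministically by name.

```python
from typing import Dict

# Hypothetical profit-splitting rule: integer units split by weight,
# leftover units assigned to the largest fractional remainders.


def split_payment(amount: int, shares: Dict[str, int]) -> Dict[str, int]:
    """Split `amount` among robots in proportion to their integer weights."""
    total = sum(shares.values())
    if total <= 0 or any(w < 0 for w in shares.values()):
        raise ValueError("weights must be non-negative and sum to more than zero")

    # Floor of each robot's exact share.
    payout = {robot: amount * w // total for robot, w in shares.items()}

    # Hand out the units lost to flooring, largest remainder first,
    # breaking ties by robot name so every node computes the same result.
    leftover = amount - sum(payout.values())
    order = sorted(shares, key=lambda r: (-(amount * shares[r] % total), r))
    for robot in order[:leftover]:
        payout[robot] += 1
    return payout


print(split_payment(100, {"drone": 1, "arm": 1, "rover": 1}))
# {'drone': 33, 'arm': 34, 'rover': 33}
```

Determinism matters more than the exact rounding rule here: if every participant recomputes the split independently, they must all arrive at the same numbers for the settlement to be accepted.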

If Fabric succeeds, it could become the bookkeeping system of a global machine economy. Robots would no longer be isolated tools but autonomous agents embedded in institutional frameworks. They could form partnerships, resolve disputes, and trade services across borders. If it fails, it will still stand as a bold experiment showing how machines might one day learn to cooperate.

What’s most striking is that Fabric isn’t really about coins or tokens; it’s about giving robots the same invisible agreements that make human societies possible. It transforms actions into records, jobs into contracts, and cooperation into rule-based trust. If it takes off, we may see cities where autonomous systems trade, negotiate, and collaborate without central control. If not, it will remain a glimpse into a future where robots are not just tools but participants in an economy of their own.

It leaves us with a thought that feels both strange and inevitable: when robots need institutions, Fabric may be the first draft of their society.