When I started digging into how people actually verify AI outputs on-chain, I noticed something odd. Everyone loves to talk about models and performance, but barely anyone brings up trust. The real challenge isn’t just building a good AI system; it’s whether anyone outside that system can independently check what the AI actually produced. The more I explored, the clearer it got: verification only matters if it can cross ecosystems, not just stay locked up in a single chain.

The problems start popping up when AI-generated results need to interact with decentralized systems that don’t share the same infrastructure. Maybe a model spits out an answer, maybe there’s a proof floating somewhere, and now a contract on another chain depends on that output. But the path connecting those dots is weak. Each blockchain has its own rules, its own execution setup, its own way of keeping state. So if you’ve got your AI verification layer living on one network and your apps on another, you’re either stuck relying on some centralized relay service or you’re settling for weaker guarantees. And that tension just gets worse as AI agents start working across more and more chains at once.

Honestly, it feels like trying to validate a signed document in a country that uses a completely different legal system.

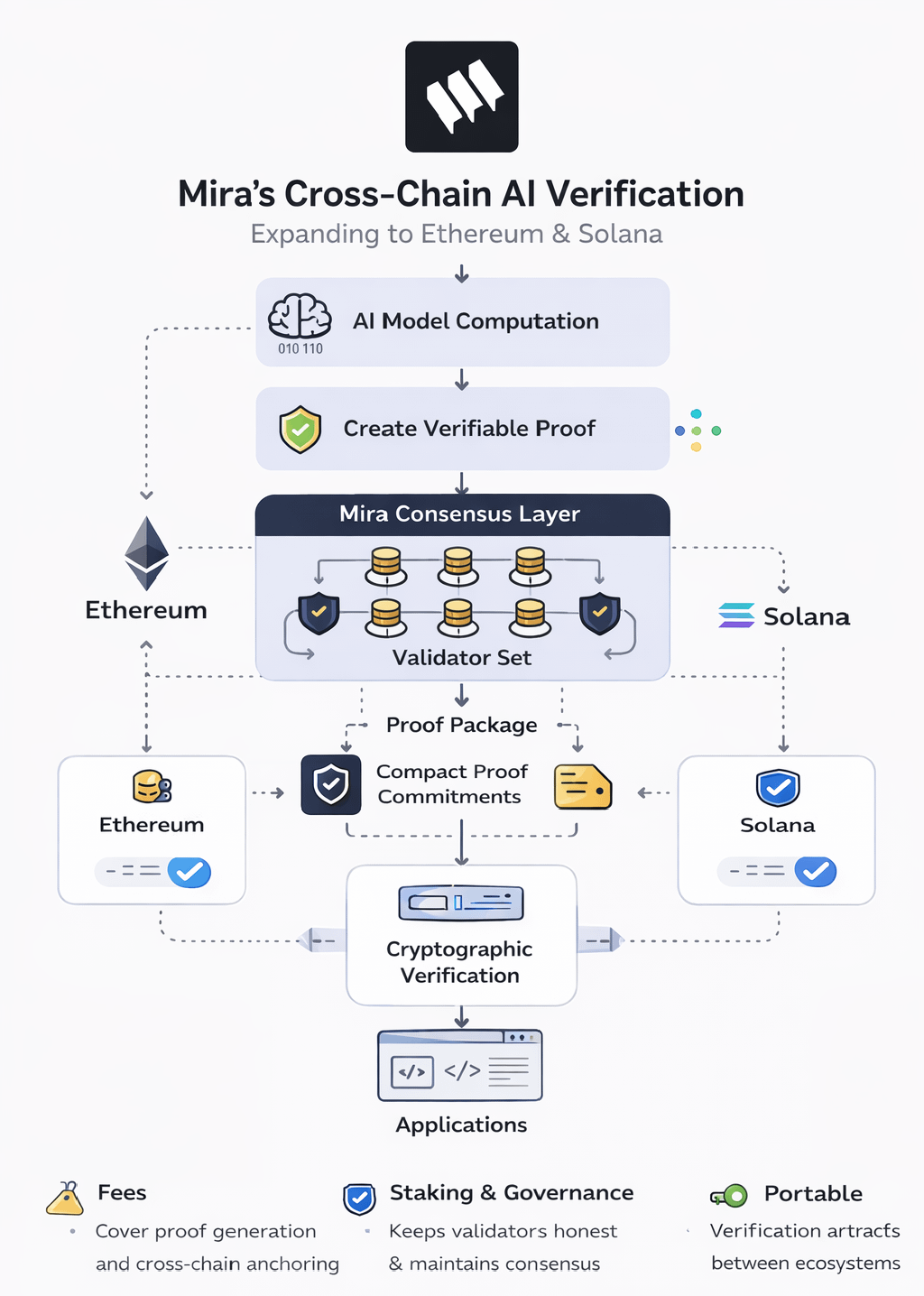

That’s where Mira’s cross-chain verification push comes in. The big idea is to separate where you generate the proof from where you use it. Instead of tying AI validation to a single ledger, the network treats verification artifacts as portable cryptographic objects. You can anchor or reference these artifacts on other chains without breaking their integrity. Adding support for Ethereum and Solana means dealing with two totally different execution models, so the verification layer has to stay neutral and flexible.

At the consensus layer, the network uses a validator set to confirm AI proofs before they get shipped out. These validators don’t care about the model’s opinions; they just check the deterministic proof structure tied to the computation. Once consensus signs off, the proof package is ready to be referenced elsewhere. This way, downstream chains don’t have to redo all that heavy AI computation.
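To make the "consensus signs off" step concrete, here is a minimal sketch of a stake-weighted quorum check. Everything in it is an assumption for illustration: the `Attestation` type, the `reaches_quorum` helper, and the two-thirds threshold are hypothetical and are not taken from Mira's actual protocol.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Attestation:
    validator: str     # hypothetical validator identifier
    proof_digest: str  # hex digest of the proof this validator checked
    stake: int         # hypothetical stake weight behind the vote


def reaches_quorum(attestations, proof_digest, total_stake,
                   threshold=Fraction(2, 3)):
    """Return True if the stake attesting to proof_digest exceeds the threshold.

    The 2/3 threshold is an assumed value, not a documented parameter.
    Each validator is counted at most once (first attestation wins).
    """
    if total_stake <= 0:
        raise ValueError("total_stake must be positive")
    seen = set()
    agreeing = 0
    for att in attestations:
        if att.validator in seen:
            continue  # ignore duplicate votes from the same validator
        seen.add(att.validator)
        if att.proof_digest == proof_digest:
            agreeing += att.stake
    return Fraction(agreeing, total_stake) > threshold


votes = [
    Attestation("v1", "abc", 40),
    Attestation("v2", "abc", 30),
    Attestation("v3", "def", 30),
]
accepted = reaches_quorum(votes, "abc", total_stake=100)
```

A real network would also verify each validator's signature over the digest; that part is left out here because it depends on the signature scheme the network actually uses.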

On the state side, the system records and indexes those verified outputs as compact proof commitments, plus some metadata about the computation. You don’t store raw AI outputs on every chain. Instead, these commitments act like receipts: they point back to the verified execution but are small enough to move easily between networks. If an app on Ethereum or Solana needs to trust a result, all it has to do is check the commitment and its proof pathway.
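One way to picture a commitment acting like a receipt is a hash over the output plus its metadata. The sketch below assumes SHA-256 and a canonical JSON encoding; the field names and the `make_commitment` / `matches_commitment` helpers are hypothetical, and the real commitment format may look quite different.

```python
import hashlib
import hmac
import json


def make_commitment(output: bytes, metadata: dict) -> str:
    """Build a compact commitment: a hash over the output hash and metadata.

    The record layout here is an assumption for illustration only.
    """
    record = {
        "output_hash": hashlib.sha256(output).hexdigest(),
        "metadata": metadata,
    }
    # Sorted keys and fixed separators give a canonical byte encoding,
    # so the same record always hashes to the same commitment.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def matches_commitment(output: bytes, metadata: dict, commitment: str) -> bool:
    """Recompute the commitment and compare it in constant time."""
    return hmac.compare_digest(make_commitment(output, metadata), commitment)


receipt = make_commitment(b"model answer", {"model": "example-model", "job": 7})
ok = matches_commitment(b"model answer", {"job": 7, "model": "example-model"}, receipt)
```

Only the 64-character hex string needs to travel between networks; anyone holding the raw output and metadata can recompute it and compare.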

The cryptography that ties everything together is where you really see the cross-chain design. After some AI work gets done, the network creates a verifiable proof linked to that execution. Validators review the proof and collectively sign off. Then, the confirmation record gets packaged up in a way that smart contracts on the destination chain can understand. They can validate the proof’s authenticity without running the original AI job again. So you end up with a layered system: AI execution, proof verification, and application use, all separated but cryptographically connected.

The network’s value comes from keeping this whole verification structure alive. There are fees to cover proof generation and cross-chain anchoring, since verifying AI outputs isn’t free. Staking keeps validators honest and consensus solid, while governance steers how validator sets change, how proof standards evolve, and which integrations get priority as AI workloads grow.

Still, let’s be real: cross-chain verification is a messy space. Even with portable proofs, you’re up against different execution environments, message delays, and bridge security headaches. The architecture cuts down on trust requirements, but it can’t erase every risk.

Right now, the whole thing feels more like a blueprint than a finished answer. It’s a framework that tries to bring AI verification in line with the multi-chain reality of decentralized tech. If AI agents and apps keep spreading across more chains, verification layers will have to keep up. Whether Mira’s approach turns into a lasting standard depends on how it handles scale, real-world integration challenges, and the unpredictable ways developers end up using it.

@Mira - Trust Layer of AI $MIRA #Mira