I didn't really question the meaning of proof of work until MIRA pulled it out of Bitcoin’s shadow. Bitcoin made the template feel permanent: expend raw computation on a puzzle that has no ambiguity, no judgment call, and almost no room for interpretation. Then I spent more time with MIRA’s model and realized it uses the phrase in a way that quietly shifts the center of gravity. The work is not hash grinding. The work is inference. And once “work” means answering standardized verification questions rather than discovering a lucky nonce, the security problem stops looking like physics and starts looking like incentives. MIRA’s own research frames this directly: verification tasks are turned into standardized multiple-choice questions, which makes them systematically checkable across nodes but also creates a bounded answer space where random success is no longer vanishingly unlikely.

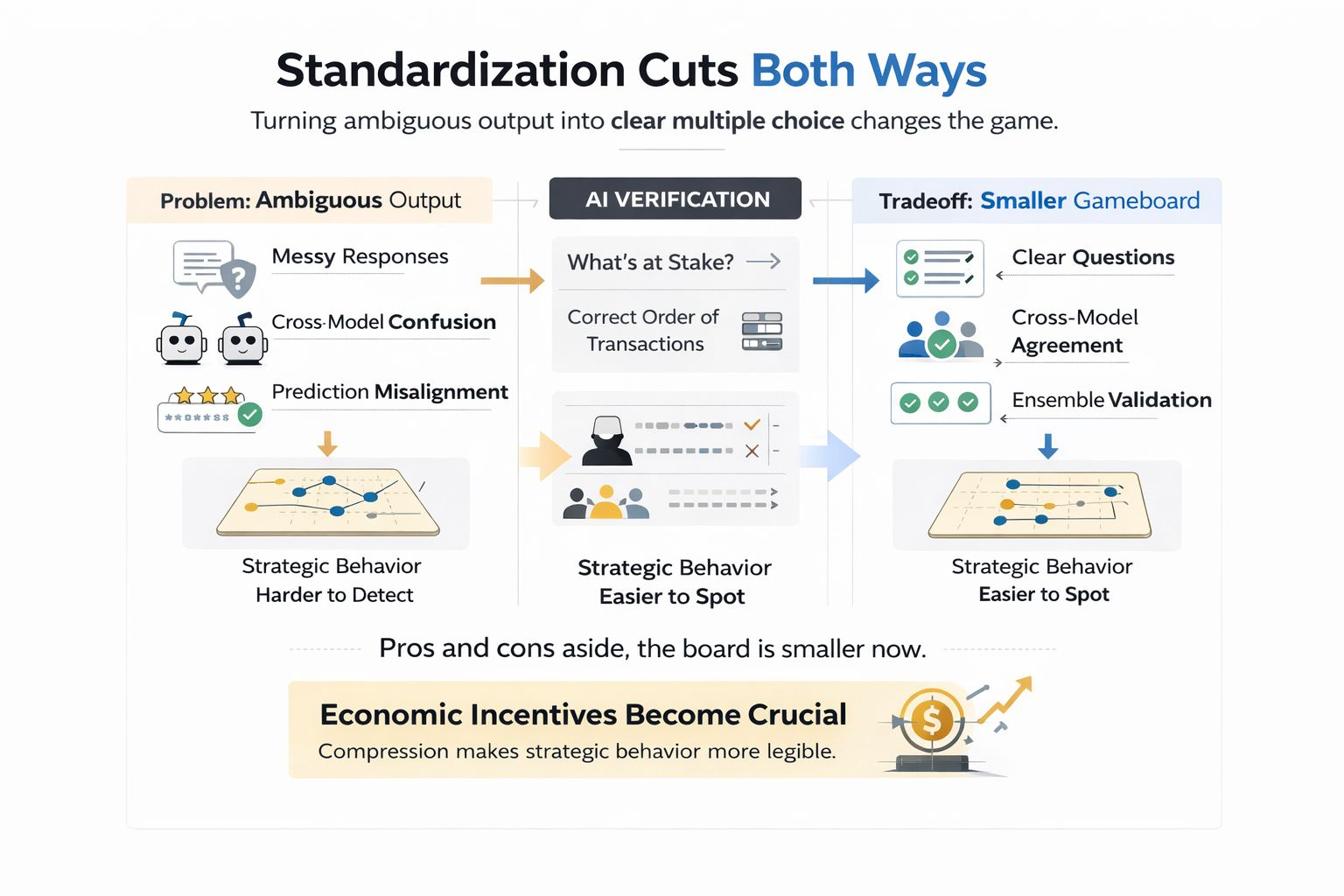

That is the first thing I think many people misunderstand about MIRA. Bitcoin PoW is difficult because the right answer is rare. MIRA-style PoW is difficult for the opposite reason: the answer space is small enough that lazy behavior becomes tempting. A hash puzzle does not care whether the miner is clever, honest, or bored. It only cares whether enough computational effort was irreversibly burned to find a valid result. A multiple-choice verifier lives in a different world. If the task is reduced to A, B, C, or D, a blind guess is right one time in four, so “guessing” becomes economically imaginable in a way it simply is not in Bitcoin mining. MIRA itself has highlighted this tension, noting that constrained answer spaces can make random success probabilities uncomfortably high unless the protocol adds another layer of discipline.
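
To put rough numbers on that tension, here is a minimal sketch. It assumes uniform random guessing and independent questions, which are my simplifications rather than anything MIRA specifies, and the function names are hypothetical.

```python
from math import comb


def p_all_correct(n_options: int, n_questions: int) -> float:
    """Chance that uniform random guessing answers every question correctly."""
    return (1 / n_options) ** n_questions


def p_at_least(n_options: int, n_questions: int, k_min: int) -> float:
    """Chance that guessing gets at least k_min of n_questions right (binomial tail)."""
    p = 1 / n_options
    return sum(
        comb(n_questions, k) * p**k * (1 - p) ** (n_questions - k)
        for k in range(k_min, n_questions + 1)
    )


print(p_all_correct(4, 1))   # a single A/B/C/D question
print(p_all_correct(4, 10))  # ten questions in a row
print(p_at_least(4, 10, 5))  # at least half of ten
```

A single guess succeeds a quarter of the time, while a long streak of correct guesses becomes very unlikely. That gap is the reason repeated checks and penalties matter more than any one answer.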

That is why I do not read MIRA as “AI Bitcoin.” I read it as something stranger and, in some ways, more modern: a system where computation alone is not enough to prove seriousness. The missing layer in MIRA is not more compute. It is credible penalty, because an inference-based network can always be attacked by partial effort. In Bitcoin, a miner either expends the energy or does not. In MIRA, a verifier can shade effort: run weaker checks, skip full reasoning, outsource judgment to a cheap heuristic, or just gamble that consensus will carry them. This is exactly where stake and slashing stop looking like add-ons and start looking like the actual security envelope. Several current explanations of the network describe it as a hybrid of inference work and staking, with slashing designed to make dishonest or low-quality verification economically irrational over time.
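
The incentive argument can be sketched as a one-task expected-value comparison. This is a toy model under my own assumptions: a fixed reward for a correct answer, a fixed slash for a wrong one, a guesser who pays no compute cost, and made-up numbers. None of these parameters come from MIRA.

```python
def expected_payoff(p_correct: float, reward: float, slash: float, cost: float) -> float:
    """Expected profit per task: win the reward, or lose the slashed stake, minus effort cost."""
    return p_correct * reward - (1 - p_correct) * slash - cost


def min_slash_to_deter_guessing(
    p_guess: float, p_honest: float, reward: float, honest_cost: float
) -> float:
    """Smallest slash at which honest work pays at least as much as free guessing.

    Derived from (p_honest - p_guess) * (reward + slash) >= honest_cost.
    """
    return max(0.0, honest_cost / (p_honest - p_guess) - reward)


# Hypothetical numbers: guessing is right 25% of the time, honest inference 95%.
p_guess, p_honest, reward, honest_cost = 0.25, 0.95, 1.0, 1.4

# With no slashing, the guesser comes out ahead of the honest verifier.
print(expected_payoff(p_guess, reward, 0.0, 0.0))
print(expected_payoff(p_honest, reward, 0.0, honest_cost))

# The break-even slash for these made-up parameters.
print(min_slash_to_deter_guessing(p_guess, p_honest, reward, honest_cost))
```

In this toy setup the reward alone cannot make honest work rational once inference costs enough. Only the penalty term closes the gap, which is the sense in which slashing is the security envelope rather than an add-on.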

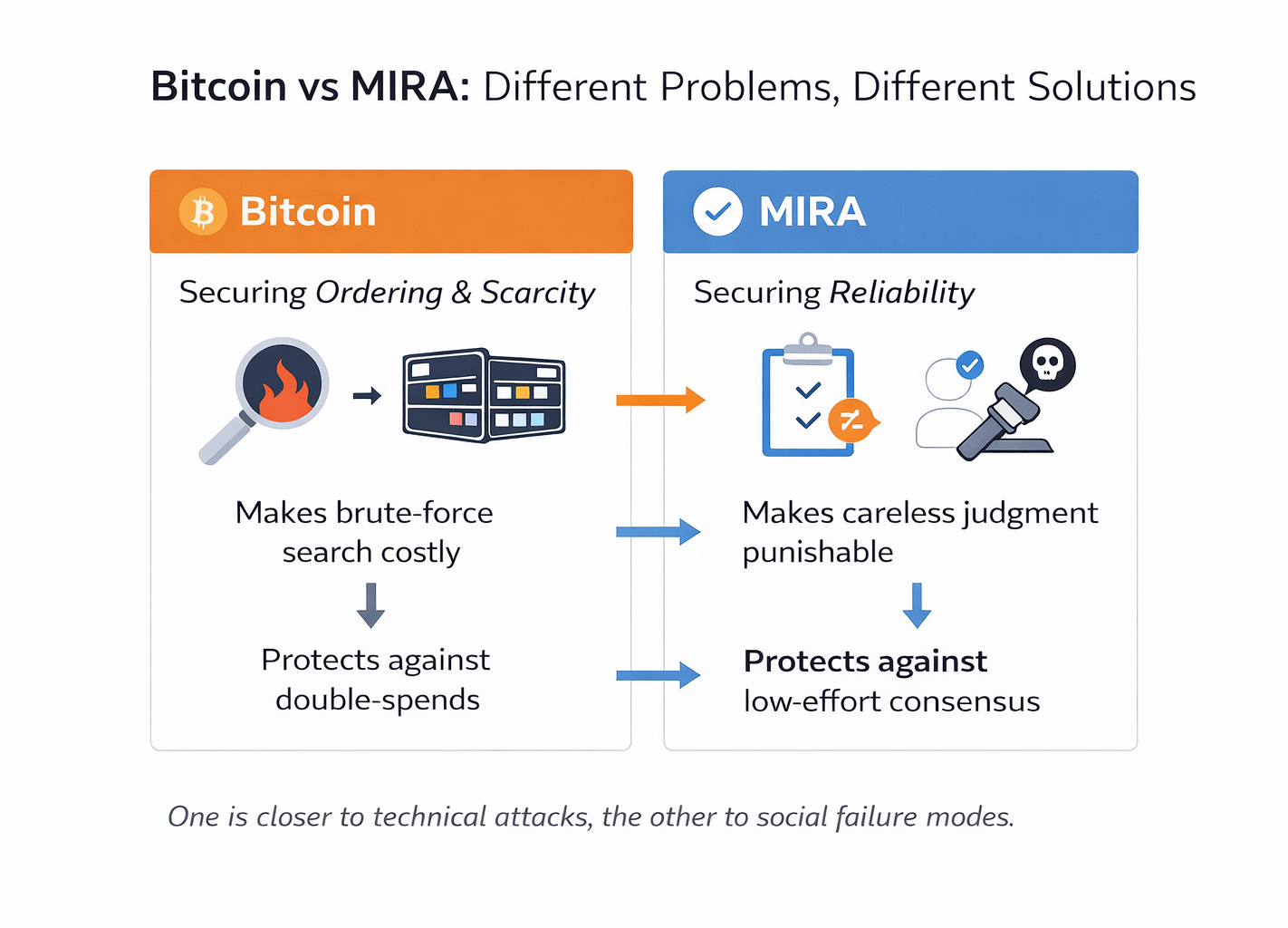

The more I look at this design, the more obvious the philosophical shift becomes: MIRA is not trying to make truth free; it is trying to make laziness expensive. That sounds like a small distinction, but it changes how you evaluate the protocol. Bitcoin secures ordering and scarcity by making brute-force search costly. MIRA is trying to secure reliability by making careless judgment punishable. Those are not the same problem. One protects a ledger from double-spends. The other protects an AI verification market from low-effort consensus theater. And that second problem is harder in a very human way, because it lives much closer to social failure modes: corner cutting, herd behavior, passive collusion, and reputation laundering through majority alignment.

Before I looked closely at MIRA, I thought consensus attacks had to look like spectacle. Then I ran into the quieter form, where the danger is not chaos but tolerated laziness. If the network’s job is to verify claims by routing them into standardized, defensible questions, the real adversary may not be an obvious saboteur. It may be the operator who realizes that “good enough most of the time” remains profitable unless mistakes carry real financial consequences. In that sense, slashing is not just a punishment mechanism. It is the protocol’s way of pricing discipline. Without it, consensus can slowly degrade into correlated guessing that still looks reliable from a distance.

What makes this even more interesting is that standardization cuts both ways. MIRA’s multiple-choice framing is powerful because it solves a real verification problem: long-form outputs are messy, and different models often “verify” different interpretations of the same text if you leave the task too open-ended. Standardizing claims into discrete, answerable questions narrows that ambiguity and makes cross-model comparison possible. MIRA’s research on ensemble validation argues that this is not cosmetic formatting but a necessary condition for reliable consensus at all. But the same move that makes verification tractable also compresses uncertainty onto a smaller game board. Once the board is smaller, strategic behavior becomes more legible. That is why the protocol has to lean harder on economic design than many people initially expect.
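
Once every verifier answers the same discrete question, comparing them reduces to counting. The sketch below is my illustration of that idea, not MIRA’s actual aggregation rule: the `consensus` function and its two-thirds threshold are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def consensus(votes: Sequence[str], threshold: float = 2 / 3) -> Optional[str]:
    """Return the leading answer if its vote share meets the threshold, else None."""
    if not votes:
        return None
    answer, count = Counter(votes).most_common(1)[0]
    return answer if count / len(votes) >= threshold else None


print(consensus(["A", "A", "A", "B"]))  # three of four agree
print(consensus(["A", "B", "C", "A"]))  # no answer reaches two thirds
```

The same simplicity is what shrinks the game board: with only a handful of possible answers, agreeing with the likely majority is a cheap strategy unless being wrong costs something.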

This is where I think the real emerging trend sits, and it is bigger than $MIRA. We are entering an era where “proof” in crypto will increasingly mean economically constrained model behavior, not just cryptographic finality. MIRA’s core move is turning complex AI outputs into independently verifiable claims, and once networks start doing that at scale, the boundary between consensus engineering and model governance starts to blur. A verifier is no longer just a machine executing deterministic code. It is an operator running probabilistic judgment under incentives. That means future protocol design will likely focus less on pure throughput metrics and more on calibration metrics: error clustering, verifier diversity, disagreement patterns, abstention rates, and how often consensus was reached too easily. The lazy-verifier attack does not disappear, but it gets easier to measure, easier to simulate, and easier to design against.
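
A few of those calibration metrics are simple to compute from raw vote logs. This is a rough sketch under my own assumptions: each round is a list of answers, `None` marks an abstention, and the metric definitions are mine rather than anything published by MIRA.

```python
from collections import Counter
from typing import Dict, List, Optional


def calibration_metrics(rounds: List[List[Optional[str]]]) -> Dict[str, float]:
    """Summarize abstention, unanimity, and disagreement across verification rounds."""
    total_votes = sum(len(r) for r in rounds)
    abstentions = sum(v is None for r in rounds for v in r)
    disagreement = []  # per round: share of cast votes that differ from the leading answer
    unanimous = 0
    for r in rounds:
        cast = [v for v in r if v is not None]
        if not cast:
            continue  # a round where everyone abstained has nothing to score
        top = Counter(cast).most_common(1)[0][1]
        disagreement.append(1 - top / len(cast))
        unanimous += top == len(cast)
    scored = len(disagreement)
    return {
        "abstention_rate": abstentions / total_votes if total_votes else 0.0,
        "unanimity_rate": unanimous / scored if scored else 0.0,
        "mean_disagreement": sum(disagreement) / scored if scored else 0.0,
    }


rounds = [["A", "A", "A", "A"], ["A", "B", None, "A"]]
print(calibration_metrics(rounds))
```

A unanimity rate that stays near 1.0 on hard questions would be one possible signal that consensus is being reached too easily.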

My own prediction is that the most valuable AI verification networks will not be the ones that shout “decentralized” the loudest. They will be the ones that build the best economics for uncertainty. That means three things. First, stake will matter less as a symbolic commitment and more as loss-bearing collateral tied to actual verification quality. Second, slashing will get more nuanced, moving beyond blunt punishment toward performance-aware penalties that distinguish error, negligence, and adversarial coordination. Third, the strongest networks will produce something more important than consensus itself: receipts. Once verified outputs come with auditable traces of how agreement was reached, which models participated, and where disagreement emerged, verification stops being just a safety feature and starts becoming an analytics layer for AI. That is where enterprise demand probably compounds fastest, because institutions do not merely want “better answers.” They want accountability they can inspect after something goes wrong.
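
To make “receipts” concrete, here is one way such a record could look. Everything in it is hypothetical: the `VerificationReceipt` class, its fields, and the SHA-256 digest are my illustration of an auditable trace, not a format MIRA has published.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class VerificationReceipt:
    """Hypothetical audit record for one verified claim."""
    claim: str
    votes: Dict[str, str]   # verifier id -> chosen answer
    outcome: Optional[str]  # agreed answer, or None if no consensus was reached

    def dissenters(self) -> List[str]:
        """Verifiers whose answer differs from the recorded outcome."""
        return sorted(v for v, a in self.votes.items() if a != self.outcome)

    def digest(self) -> str:
        """Deterministic SHA-256 fingerprint of the receipt contents."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


receipt = VerificationReceipt(
    claim="The Eiffel Tower is in Paris.",
    votes={"model_a": "A", "model_b": "A", "model_c": "B"},
    outcome="A",
)
print(receipt.dissenters())
print(receipt.digest())
```

The point of the digest is that anyone holding the same receipt can recompute it, so the record of who agreed and who dissented can be checked after the fact.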

When I first thought about MIRA’s PoW, I expected to compare it to Bitcoin on energy, speed, or architecture. But the sharper comparison turned out to be moral rather than mechanical. Bitcoin’s miners prove expenditure. MIRA’s verifiers are being asked to prove diligence. One system secures itself by making computation scarce and expensive. The other secures itself by making bad judgment costly and visible. That is why the phrase “proof of work” feels familiar here while the actual game feels entirely different. MIRA does not inherit Bitcoin’s security story. It writes a new one for a world where the thing being defended is not just transaction history, but the reliability of machine-made claims. And in that world, hashing is the easy part. The hard part is making sure nobody gets paid for pretending to think.
