@Mira - Trust Layer of AI #mira $MIRA

Mira Network is a project that aims to solve one of the most fundamental issues in artificial intelligence today: trust. I’m genuinely amazed at how much AI has progressed over the past few years, yet a persistent problem remains: many powerful AI models still produce outputs that are incorrect, misleading, biased, or unverified. The problem shows up everywhere, from healthcare decision systems to legal research tools, and at its core is the fact that traditional AI gives answers without any guarantee of truth. If Mira succeeds, it could become a foundational layer that makes AI outputs safe, dependable, and auditable.

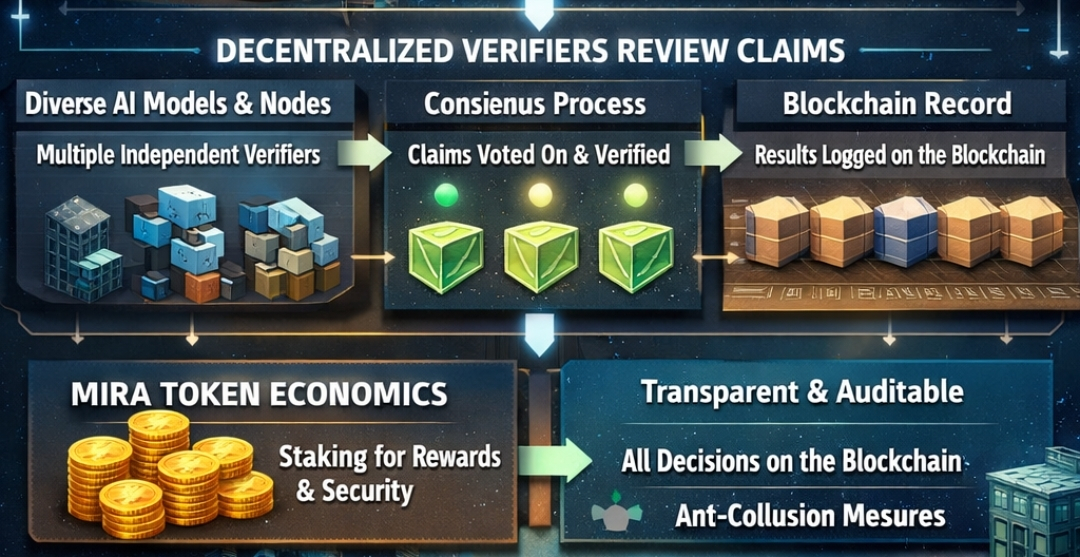

Mira Network describes itself as a decentralized trust layer for AI. Instead of relying on a single AI model to decide what’s true, Mira breaks AI responses down into smaller pieces called claims. Each claim is independently verified by a network of nodes running multiple different models. When most of these verifiers agree a claim is true, that claim is recorded on the network as verified knowledge. This process creates a publicly auditable trail of decisions, backed by a blockchain ledger that is designed to resist tampering after the fact. A verified claim then becomes more than an assertion; it becomes a stored record that any application can reference.

Inside Mira’s system, the first major step is transforming a raw AI answer into discrete factual units. For example, if an AI says “The population of City X is Y and it became the capital in year Z,” Mira isolates each assertion as its own claim. Each claim is then sent to different verifier nodes, each running different AI models or verification logic. This is intentional, because traditional neural networks operate probabilistically: they produce the most likely answer given the patterns they learned, not a guaranteed correct one. By using multiple verifiers, Mira reduces the chance that a single model’s hallucination is accepted as truth. The decision about whether a claim is correct becomes a consensus process among independent verifiers, not the sole decision of one monolithic system.
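To make the idea concrete, here is a minimal sketch of claim-level majority voting. It is not Mira’s actual implementation: the function names, the lookup-table “verifiers”, and the 50% threshold are all assumptions chosen for illustration, and the claims are supplied already split rather than extracted from free text.

```python
from typing import Callable, Dict, List, Set

# Hypothetical verifier type: takes a claim, returns True if it accepts it.
Verifier = Callable[[str], bool]


def make_lookup_verifier(known_true: Set[str]) -> Verifier:
    """Toy stand-in for a model: accepts only claims in its own knowledge set."""
    return lambda claim: claim in known_true


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 0.5) -> bool:
    """Accept a claim only if more than `threshold` of the verifiers accept it."""
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) > threshold


def verify_answer(claims: List[str], verifiers: List[Verifier]) -> Dict[str, bool]:
    """Check every claim independently and return a per-claim verdict."""
    return {claim: verify_claim(claim, verifiers) for claim in claims}


# The example sentence from the text, already split into two claims.
claims = [
    "The population of City X is Y",
    "City X became the capital in year Z",
]

# Three toy verifiers with different knowledge (assumed data).
verifiers = [
    make_lookup_verifier({claims[0], claims[1]}),
    make_lookup_verifier({claims[0]}),
    make_lookup_verifier({claims[0]}),
]

results = verify_answer(claims, verifiers)
```

In this toy run the first claim is accepted by all three verifiers, while the second is accepted by only one of three and is therefore rejected. The point is that one verifier’s opinion alone never decides the outcome.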

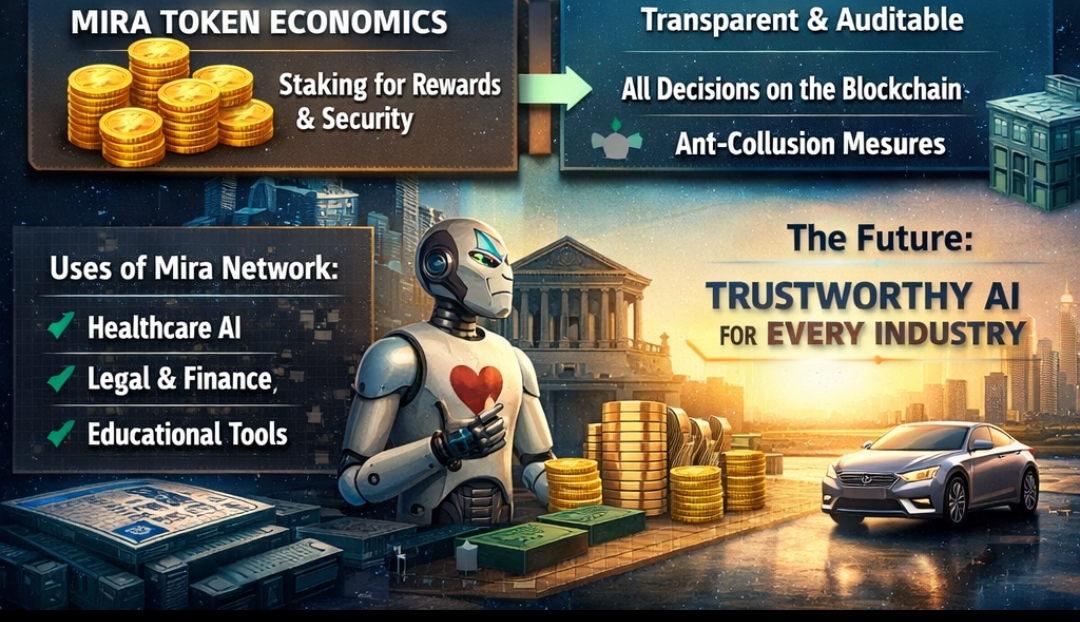

The reasoning behind this design is straightforward: if AI continues to expand into areas where mistakes can have serious consequences, then blind trust won’t suffice. Even state‑of‑the‑art AI models can confidently present false statements, and that’s unacceptable for anything beyond casual use. Mira’s solution is to decentralize verification itself so that no single authority controls truth. Each verifier stakes MIRA tokens to participate, aligning economic incentives so that honest verification is the most rewarding strategy. If a verifier lies or behaves dishonestly, they risk losing their stake. This economic mechanism helps ensure that incentives and integrity move in the same direction.
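The incentive logic can be sketched in a few lines. This is an illustrative model only: the reward pool, the slashing rate in basis points, and the rule that verifiers who vote against the majority are the ones slashed are all assumptions, not documented Mira parameters.

```python
from typing import Dict, Optional


def settle_round(
    stakes: Dict[str, int],   # verifier name -> staked tokens (integer units)
    votes: Dict[str, bool],   # verifier name -> vote on one claim
    reward_pool: int,         # tokens shared among majority voters (assumed)
    slash_bps: int,           # penalty in basis points of stake (assumed)
) -> Optional[bool]:
    """Reward verifiers who voted with the majority and slash the rest.

    Returns the majority outcome, or None on a tie (nothing is settled).
    Mutates `stakes` in place.
    """
    yes = sum(1 for vote in votes.values() if vote)
    no = len(votes) - yes
    if yes == no:
        return None

    outcome = yes > no
    winners = [name for name, vote in votes.items() if vote == outcome]
    share = reward_pool // len(winners)

    for name, vote in votes.items():
        if vote == outcome:
            stakes[name] += share
        else:
            stakes[name] -= stakes[name] * slash_bps // 10_000
    return outcome


stakes = {"alice": 100, "bob": 100, "carol": 100}
outcome = settle_round(
    stakes,
    votes={"alice": True, "bob": True, "carol": False},
    reward_pool=30,
    slash_bps=1_000,  # 10%
)
```

Here the two majority voters each gain 15 tokens and the dissenter loses 10% of their stake. A real system would need a more careful definition of “dishonest” than “disagreed with the majority”, since an honest verifier can also be outvoted.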

Once a claim has gone through this decentralized verification process, and a majority of independent verifiers agree it is accurate, Mira records the verified result on its blockchain. By putting this data on chain, Mira ensures transparency and permanence: anyone can later inspect how the decision was reached, which verifiers participated, and what models were used in the process. This auditability turns opaque AI outputs into documented truth that can be referenced by any downstream system. Mira’s blockchain acts as a trust ledger where decisions are chronologically preserved and resistant to tampering, which is vital for sectors where accountability is non‑negotiable.
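A toy hash-chained log shows why after-the-fact tampering is detectable. This is a sketch of the general technique, not Mira’s on-chain format: the record fields and function names are hypothetical, and a real blockchain adds distributed consensus on top of the hash links.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(
    ledger: List[Dict[str, Any]], claim: str, verdict: bool, verifiers: List[str]
) -> None:
    """Append a verification result that commits to the previous record's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    body = {
        "claim": claim,
        "verdict": verdict,
        "verifiers": sorted(verifiers),
        "prev_hash": prev_hash,
    }
    ledger.append({**body, "hash": record_hash(body)})


def is_intact(ledger: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or record_hash(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


ledger: List[Dict[str, Any]] = []
append_record(ledger, "The population of City X is Y", True, ["node-a", "node-b", "node-c"])
append_record(ledger, "City X became the capital in year Z", False, ["node-a", "node-d"])
```

Calling `is_intact(ledger)` on the untouched log returns `True`. Flipping the stored verdict of the first record makes it return `False`, because the stored hash no longer matches the record’s contents.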

Mira’s internal consensus mechanism is a hybrid mix of economic alignment and logical verification. Traditional blockchains use approaches like proof‑of‑stake or proof‑of‑work to secure the network, but Mira goes further by embedding purposeful reasoning into its consensus. Verifiers don’t just produce cryptographic work; they produce reasoned judgments about factual claims. That’s a fundamental difference. Stakers are motivated both by economic rewards and by the shared goal of building a trustworthy AI ecosystem. The MIRA token is central to this structure: it is used for staking to participate in verification, for governance rights, and as a fee mechanism when applications request verified outputs.

The metrics that define the health of the Mira Network span both technical performance and community engagement. One key indicator is verification accuracy: the gap between the error rate of unverified AI outputs and the error rate of outputs that have passed through the Mira system. Requiring consensus across multiple verifiers tends to cut the error rates seen in standalone AI models. Another important metric is network participation, including the number and distribution of verifier nodes, which shows how decentralized and resilient the system has become. Token activity is also informative; higher staking rates, active governance voting, and fees from real verification requests all show that the network is actively used by the community.
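A back-of-the-envelope calculation shows why consensus can lower error rates. The sketch below assumes that each verifier errs independently with the same probability and that the number of verifiers is odd. Both are strong simplifying assumptions, and real models that share training data will not be fully independent.

```python
from math import comb


def majority_error_rate(num_verifiers: int, error_rate: float) -> float:
    """Probability that a strict majority of verifiers is wrong at once.

    Assumes independent verifiers with identical per-claim error rates
    (a simplification) and an odd number of verifiers to avoid ties.
    """
    needed = num_verifiers // 2 + 1  # smallest strict majority
    return sum(
        comb(num_verifiers, k)
        * error_rate**k
        * (1 - error_rate) ** (num_verifiers - k)
        for k in range(needed, num_verifiers + 1)
    )


single = majority_error_rate(1, 0.10)  # one model on its own
trio = majority_error_rate(3, 0.10)    # majority of three models
```

With a hypothetical 10% per-verifier error rate, a majority of three is wrong with probability 3 × 0.1² × 0.9 + 0.1³ = 0.028, compared with 0.10 for a single model. The improvement shrinks as verifier errors become more correlated.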

As with any ambitious system, Mira faces multiple risks. One of the main challenges is the potential for bias even among multiple models. If a group of verifier models share similar blind spots, their consensus might still be skewed. Mira mitigates this by encouraging diversity in verification models, so the system doesn’t depend on any single kind of reasoning. Another risk is collusion among verifiers; if enough of them coordinated to verify false claims, the integrity of the trust layer could be endangered. To counter this, Mira uses randomization in claim assignment, which makes it costly and difficult for colluding actors to control enough of the verifiers assigned to any given claim to dominate consensus.
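The effect of random assignment can be estimated with a short calculation. The sketch below assumes verifiers for each claim are drawn uniformly at random without replacement from the whole pool. That sampling rule and the pool sizes used are illustrative assumptions, not published Mira parameters.

```python
from math import comb


def collusion_majority_probability(total: int, colluding: int, committee: int) -> float:
    """Chance that colluders form a strict majority of a random committee.

    Hypergeometric tail: `committee` verifiers are drawn without replacement
    from `total`, of which `colluding` are coordinating (assumed model).
    """
    needed = committee // 2 + 1
    favourable = sum(
        comb(colluding, k) * comb(total - colluding, committee - k)
        for k in range(needed, min(colluding, committee) + 1)
    )
    return favourable / comb(total, committee)


# Hypothetical pool: 100 verifiers, 30 of them colluding.
small_committee = collusion_majority_probability(100, 30, 5)
large_committee = collusion_majority_probability(100, 30, 21)
```

As long as colluders are a minority of the pool, a larger randomly chosen committee makes it less likely that they control any particular claim, so `large_committee` comes out smaller than `small_committee`. The trade-off is that each claim then costs more verification work.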

Scalability is another concern. Verifying every claim across many nodes requires significant computing resources. Mira addresses this with distributed verification techniques and load balancing built into its developer tools, which are intended to let the system process large volumes of verification requests without bottlenecks. Additionally, the project’s economic design aims to keep participation attractive even if the value of the token fluctuates, because the token is not just a speculative asset but a functional tool for accessing and maintaining trust services.

Mira also gives the community a direct voice in the project’s evolution. Token holders can influence upgrades, network parameters, and ecosystem development through on‑chain governance. This shared responsibility means that as the network grows, decisions about how it should evolve reflect the voices of those who actively use and contribute to the system rather than a centralized authority.

We’re seeing early real‑world use cases emerge where Mira’s verification layer adds tangible value. AI chat applications, research assistants, and content generation tools are using Mira to provide outputs that can be confidently backed by verified claims. The number of daily verification transactions and the volume of tokens processed have steadily increased, indicating that developers and users alike are embracing the concept of trustable AI at scale.

Looking to the future, Mira could become a foundational layer across industries that cannot afford uncertainty — sectors like finance, legal, medicine, and public policy. Imagine a world where autonomous systems are not only powerful but also transparently accountable, where every decision an AI makes can be traced and verified by a decentralized community of verifiers. That world feels closer because projects like Mira are actively building the infrastructure to make honesty and reliability a natural part of AI’s design.

As I reflect on Mira’s journey, it feels like more than just a piece of software. It’s a philosophical stand — a reminder that as technology becomes ever more capable, it must also become ever more trustworthy. We’re at a moment where AI’s potential can either divide us or empower us, and the difference lies in whether its outputs are grounded in truth. Mira Network represents a hopeful vision where trust isn’t optional, where technology serves humanity with accountability, and where we build systems that reflect our highest ideals. If we can make AI that genuinely earns trust, then we’re not just solving a technical problem — we’re building a future where machines help us navigate the world with confidence, clarity, and shared responsibility.