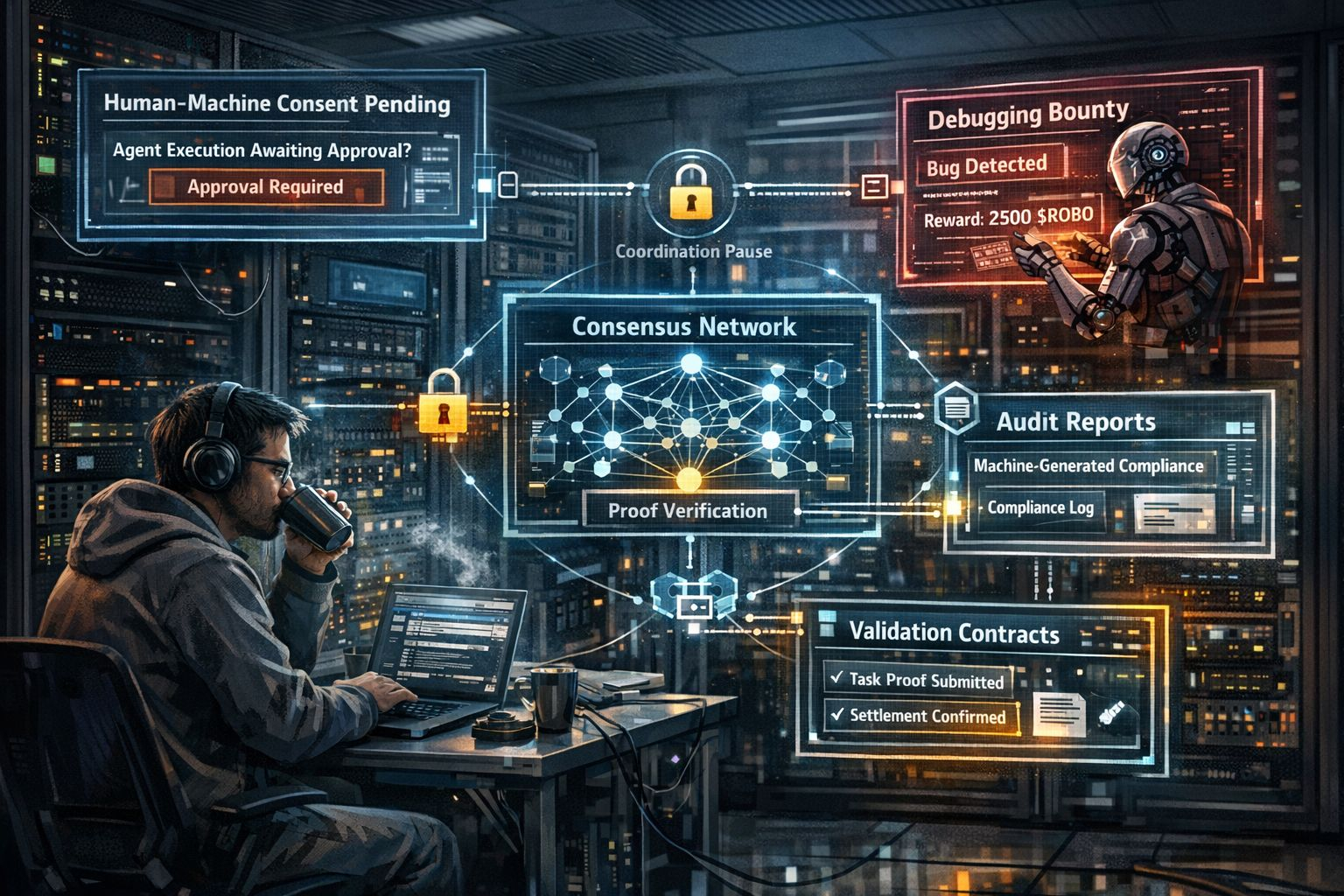

The server room lights were still glowing when I checked the dashboard early this morning. My coffee had already gone cold, another casualty of staring too long at task queues and execution logs. Behind the rack, the ventilation fan hummed with its usual tired rhythm, a mechanical sigh that made the room feel alive in a strangely contemplative way.

On the screen, one entry caught my attention again: a Human-Machine Consent Framework execution waiting for approval. It wasn’t failing, and it wasn’t progressing either. It was simply paused inside ledger memory, like a message someone forgot to read. I shrugged and murmured, “Maybe someone’s busy.” Of course, the terminal didn’t respond, but sometimes the network feels different when it’s waiting for a human signal.

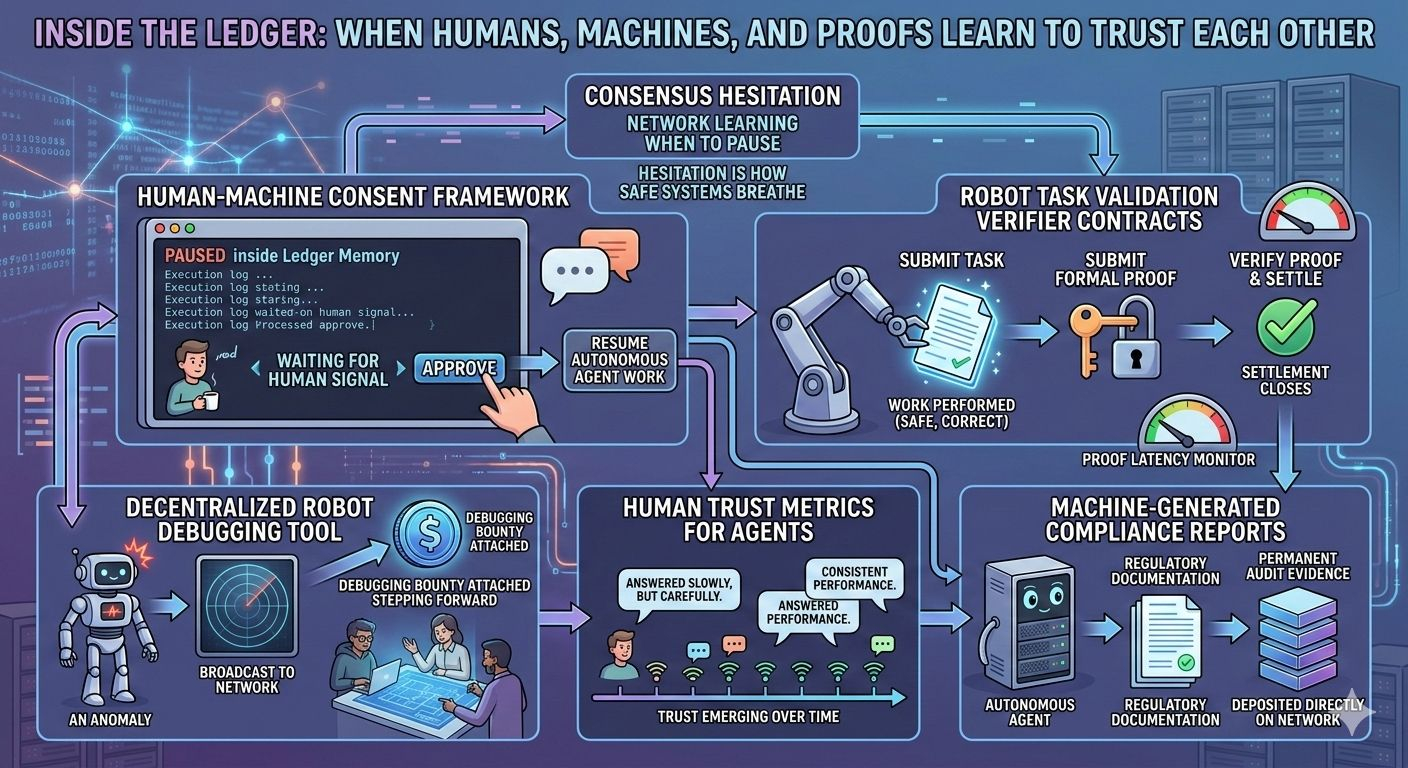

That pause is one of the unusual characteristics of the @Fabric Foundation Protocol ecosystem. Most infrastructure systems are designed to push forward relentlessly, automating every step to minimize human friction. Fabric takes a different approach. It behaves less like a machine pipeline and more like a coordination organism—one that occasionally pauses, requiring small moments of human acknowledgment before autonomous agents continue their work.

As I watched the activity feed scroll, a debugging bounty transaction appeared. It reminded me how decentralized robot debugging tools are quietly changing how we deal with failure. Instead of hiding bugs in internal logs or private issue trackers, anomalies are surfaced publicly on the network. A bounty is attached, inviting anyone who can diagnose the problem to step forward. It’s a transparent approach to reliability: when something breaks, the network doesn’t conceal the issue; it broadcasts it.

Another subtle layer of the system is how human trust metrics for agents are collected. Users can leave feedback after interacting with machine actors, but the protocol doesn’t demand emotional ratings or forced reviews. Some people leave thoughtful comments—“It answered slowly, but carefully.” Others leave nothing at all. The ledger simply records behavioral signals and lets patterns form over time. Trust emerges not from a single interaction but from consistent performance across many.
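To make that idea concrete for myself, I sometimes sketch it in a few lines of Python. This is only a toy model of trust accumulating from many interactions. It is not the protocol's actual data structure or scoring rule: the `TrustRecord` class, its fields, and the smoothed success rate are all my own assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TrustRecord:
    """Hypothetical per-agent record (illustrative only, not a Fabric type)."""
    successes: int = 0
    interactions: int = 0
    comments: List[str] = field(default_factory=list)

    def record(self, succeeded: bool, comment: Optional[str] = None) -> None:
        # Every interaction leaves a behavioral signal; a comment is optional.
        self.interactions += 1
        if succeeded:
            self.successes += 1
        if comment:
            self.comments.append(comment)

    def score(self) -> float:
        # Assumed scoring rule: a smoothed success rate that starts at 0.5
        # and only moves far from it after many consistent interactions.
        return (self.successes + 1) / (self.interactions + 2)


agent = TrustRecord()
agent.record(True, "It answered slowly, but carefully.")
agent.record(True)   # no comment left, which is fine
agent.record(False)
print(round(agent.score(), 2))
```

The smoothing is the part I care about: one good or bad interaction barely shifts the score, which matches the intuition that trust comes from a pattern rather than a moment.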

Robot task validation verifier contracts are often misunderstood by newcomers. The purpose isn’t to make robots more intelligent. Instead, it’s to make their work provable. Before a task is considered complete and settlement closes on the network, a formal proof must be submitted verifying that the work was performed safely and correctly. I monitor proof latency frequently, because delays often reveal hidden coordination friction between computation nodes and consensus routing.
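When I explain this to newcomers, I use a rough sketch of the gating logic: a task stays pending until a proof is checked, and only then does it settle. Everything here is an assumption made for illustration. The `Task`, `TaskState`, and `submit_proof` names are hypothetical, and the SHA-256 digest comparison is a stand-in for whatever formal proof the real verifier contracts check.

```python
import hashlib
from dataclasses import dataclass
from enum import Enum


class TaskState(Enum):
    PENDING_PROOF = "pending_proof"
    SETTLED = "settled"
    REJECTED = "rejected"


def digest(payload: bytes) -> str:
    """Hex SHA-256 digest, used here as a placeholder for a real proof."""
    return hashlib.sha256(payload).hexdigest()


@dataclass
class Task:
    """Hypothetical task record (not an actual contract interface)."""
    task_id: str
    expected_digest: str  # commitment the submitted proof must match
    state: TaskState = TaskState.PENDING_PROOF


def submit_proof(task: Task, proof_payload: bytes) -> TaskState:
    # A finalized task cannot be reopened by a later submission.
    if task.state is not TaskState.PENDING_PROOF:
        raise ValueError(f"task {task.task_id} is already finalized")
    # Settlement closes only if the proof checks out; otherwise it is rejected.
    if digest(proof_payload) == task.expected_digest:
        task.state = TaskState.SETTLED
    else:
        task.state = TaskState.REJECTED
    return task.state


task = Task("task-1", expected_digest=digest(b"work result"))
print(submit_proof(task, b"work result").value)
```

Nothing in this sketch makes the robot smarter. It only refuses to call the work finished until there is something checkable behind it, and the time a task spends in `PENDING_PROOF` is the proof latency I keep watching.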

Institutional deployments introduce another fascinating dimension: machine-generated compliance reports. Organizations increasingly want autonomous systems that can also produce their own regulatory documentation. These reports are stored directly on the network, forming permanent audit evidence. Sometimes I wonder if future administrators will trust machines more than humans—not because machines are wiser, but because they never forget.

Still, the risks are subtle. If human feedback slows down, autonomous execution layers can accumulate long chains of waiting approvals. Incentive structures must remain balanced as well. Debugging bounties only work if the rewards remain meaningful; otherwise, anomalies might go unnoticed.

Standing in that quiet server room, I found myself reflecting on what the protocol is really doing. Maybe it isn’t just teaching machines how to be trustworthy. Maybe it’s teaching humans how to trust systems they can’t fully understand.

After all, consensus might simply be the network learning when to pause.

And perhaps hesitation, in the right place, is how safe systems breathe.

$ROBO #robo