I keep noticing that systems built for reliability rarely move as fast as we expect. Every time verification enters the picture, something else appears too: extra steps, extra coordination, and usually a little more time.

AI systems normally sidestep that tradeoff by skipping the verification step altogether.

A model generates an answer and the response appears almost instantly. It feels finished. But the strange part is that the same model that produces the answer is often the one implicitly confirming it.

That structure always felt a bit fragile.

What @Mira - Trust Layer of AI seems to explore is a different path. Instead of assuming the answer is final, the system treats the output as something that still needs checking.

In practice that means the response can be broken into smaller claims. Those claims can then be reviewed by other models running independently across the network.

Sometimes they agree.

Sometimes they don’t.

That disagreement is actually useful, because it makes uncertainty visible.
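To make that concrete for myself, here is a minimal sketch of the idea in Python. It is not Mira's actual API or protocol. The sentence-based claim splitter, the `Verifier` type, and the three toy verifiers are all hypothetical stand-ins for independent models, and the majority vote is just one simple way to combine their verdicts.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim and returns "true" or "false".
# In a real network each verifier would be an independent model.
Verifier = Callable[[str], str]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter (an assumption for illustration): one claim per sentence."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier]) -> Dict[str, object]:
    """Collect one verdict per verifier and report how strongly they agree."""
    votes = Counter(verifier(claim) for verifier in verifiers)
    verdict, count = votes.most_common(1)[0]
    return {
        "claim": claim,
        "verdict": verdict,
        "agreement": count / len(verifiers),  # 1.0 means unanimous
        "votes": dict(votes),
    }


# Toy verifiers standing in for independent models (purely illustrative rules).
def strict(claim: str) -> str:
    return "false" if "always" in claim else "true"


def lenient(claim: str) -> str:
    return "true"


def keyword(claim: str) -> str:
    return "false" if ("always" in claim or "never" in claim) else "true"


response = "Water boils at 100 C at sea level. Models are always right"
results = [
    verify_claim(claim, [strict, lenient, keyword])
    for claim in split_into_claims(response)
]

for result in results:
    print(result["claim"], "|", result["verdict"], "|", round(result["agreement"], 2))
```

The `agreement` value is the part I care about. A claim where every verifier agrees looks very different from one that splits two to one, even if both end up with a verdict.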

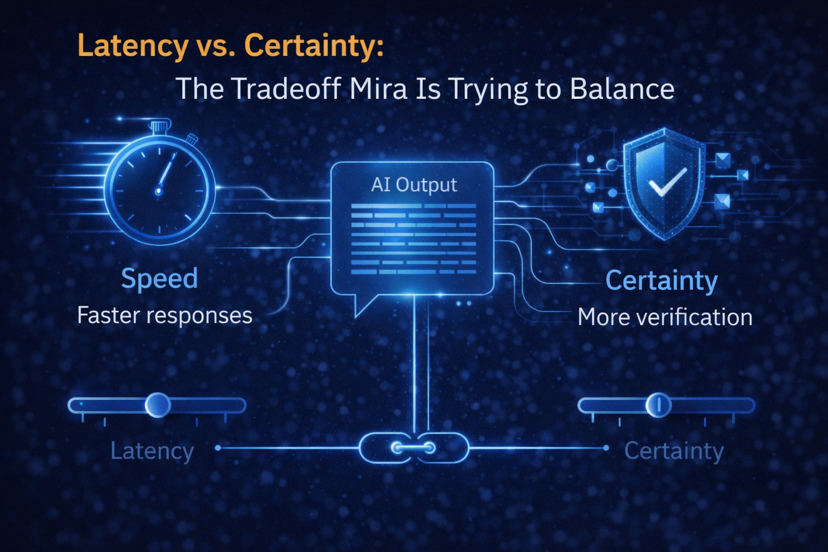

But there’s an obvious tension hiding inside this design. Every additional verifier introduces a small delay. More models reviewing a claim means more computation and more coordination before the system settles on a result.

Verification increases confidence.

Speed keeps systems usable.

Trying to have both at the same time is where the challenge sits.

If verification becomes too light, the system starts to look like traditional AI again: fast, but easier to mislead. If verification becomes too heavy, responses may slow down enough to affect real-time interaction.

Somewhere between those two extremes is the balance point.
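A rough way to picture that balance is a back-of-the-envelope model. The sketch below assumes every verifier is independently correct with the same probability `p` and that each one adds a fixed delay. Both are simplifications I am making for illustration, and the latency numbers (`base_ms`, `per_verifier_ms`) are invented, not measurements from any real network.

```python
from math import comb


def majority_accuracy(n: int, p: float) -> float:
    """Probability that a strict majority of n independent verifiers is correct.

    Assumes each verifier is correct with probability p; use odd n to avoid ties.
    """
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def estimated_latency_ms(
    n: int, base_ms: float = 200.0, per_verifier_ms: float = 40.0
) -> float:
    """Toy latency model: a fixed base cost plus a hypothetical cost per verifier."""
    return base_ms + per_verifier_ms * n


# Compare confidence and delay as the verifier count grows.
for n in (1, 3, 5, 7):
    accuracy = majority_accuracy(n, p=0.8)
    latency = estimated_latency_ms(n)
    print(f"verifiers={n}  majority_accuracy={accuracy:.3f}  latency_ms={latency:.0f}")
```

Under these assumptions each extra pair of verifiers buys a smaller gain in accuracy while the delay keeps growing at the same rate, which is roughly why the balance point sits in the middle rather than at either extreme.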

That seems to be the space where $MIRA and #Mira are experimenting: building a system where answers can still arrive quickly, but where reliability doesn’t depend on trusting a single model’s confidence score.

Because sometimes the real question isn’t how fast an answer appears.

It’s how certain we can be once it does.