Something I have noticed over time.

People talk about robots as if the machines themselves are the main story.

Better sensors.

Smarter models.

Faster processors.

But when I look at how complex systems actually grow, a different pattern keeps showing up.

Technology evolves first.

Then the coordination layer quietly becomes the real foundation.

The internet did this for computers.

Mobile operating systems did it for apps.

And when machines begin operating together at scale, something similar will likely be required.

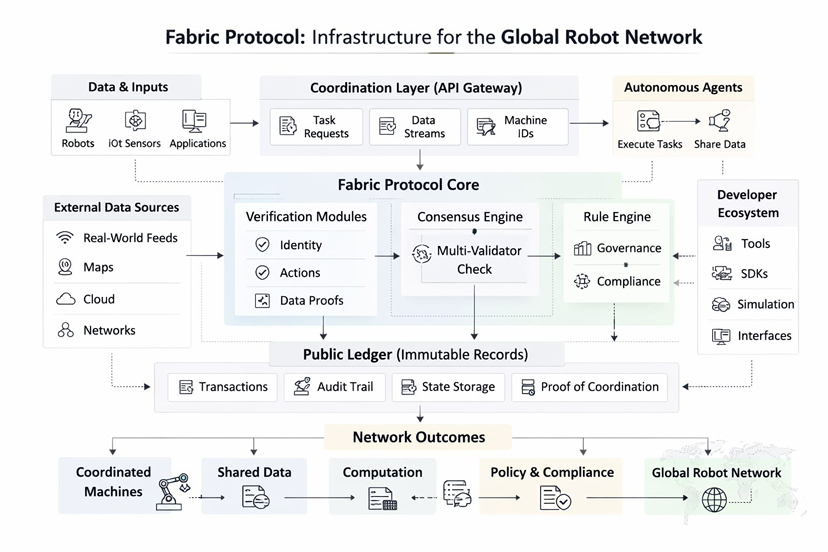

That is the idea that keeps coming back to me when I think about Fabric Protocol, which presents itself as the infrastructure layer for the global robot network. The protocol is not focused on building individual robots. It is attempting to create the structure that allows machines, data, and computation to coordinate through a shared system.

While reading recent ecosystem notes from @Fabric Foundation, one small technical detail stood out to me. A protocol update earlier this year expanded the modular execution framework used for machine coordination. Instead of relying on a single rigid system, the infrastructure now allows separate modules to manage verification, data handling, and computation.

It sounds subtle.

But the implications are larger than they appear.

A robotic action is no longer just a command executed by one device.

It becomes an event recorded and validated across a network.
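To make the modular idea concrete for myself, here is a minimal sketch in Python. It is not Fabric's actual code or API. The names `ActionEvent`, `verify`, `handle_data`, `compute`, and `run_pipeline` are all hypothetical, chosen only to show how verification, data handling, and computation could live in separate, swappable modules that each leave a trace on the same event.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ActionEvent:
    """A robotic action treated as a network event (hypothetical shape)."""
    robot_id: str
    command: str
    payload: Dict[str, float]
    log: List[str] = field(default_factory=list)  # modules that handled it


# Each module is an independent function, so one can be replaced
# without touching the others.
Module = Callable[[ActionEvent], ActionEvent]


def verify(event: ActionEvent) -> ActionEvent:
    # Verification module: reject events that carry no sender identity.
    if not event.robot_id:
        raise ValueError("unverified sender")
    event.log.append("verified")
    return event


def handle_data(event: ActionEvent) -> ActionEvent:
    # Data-handling module: round readings to two decimals.
    event.payload = {k: round(v, 2) for k, v in event.payload.items()}
    event.log.append("data_handled")
    return event


def compute(event: ActionEvent) -> ActionEvent:
    # Computation module: add the mean of the readings (assumes a non-empty payload).
    event.payload["mean"] = sum(event.payload.values()) / len(event.payload)
    event.log.append("computed")
    return event


def run_pipeline(event: ActionEvent, modules: List[Module]) -> ActionEvent:
    # Pass the event through each module in order.
    for module in modules:
        event = module(event)
    return event


result = run_pipeline(
    ActionEvent("robot-1", "sample_air", {"temp": 21.456, "humidity": 40.0}),
    [verify, handle_data, compute],
)
print(result.log)
```

The point of the sketch is only the shape: the action ends up carrying a record of which modules touched it, rather than being a bare command that one device ran.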

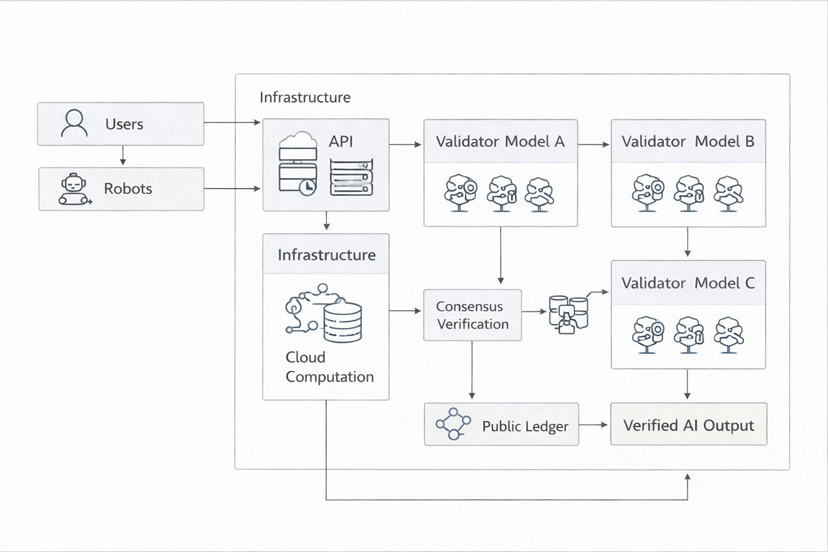

That distinction matters because autonomous machines introduce a new form of trust problem. Humans can debate instructions or correct misunderstandings. Machines cannot. They rely entirely on the structure of the system coordinating them.

Which raises an interesting question.

If machine networks grow larger, will coordination infrastructure become more important than the machines themselves?

Another signal appears when observing how the surrounding ecosystem is evolving. Some developers are building control frameworks. Others are experimenting with agent interfaces. A few are designing simulation environments that test robotic behavior before it reaches real-world deployment.

Different tools.

Different contributors.

But everything connects back to the same coordination layer.

This kind of structure usually appears when a technology moves from a single project to something closer to a network.

There is also a simple practical lesson that keeps appearing in early autonomous systems.

Machines are good at executing tasks.

They struggle with shared context.

One robot collecting environmental data.

Another analyzing that information.

A third performing an action based on the result.

All of them need agreement about what actually happened.

Infrastructure quietly provides that agreement.
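One way to picture that agreement is an append-only log in which every entry commits to the one before it. The sketch below is my own illustration in Python, not how Fabric implements its ledger. `SharedLedger`, `entry_hash`, and the record fields are hypothetical, and a real network would also need consensus between nodes, which this single in-memory list leaves out.

```python
import hashlib
import json
from typing import Dict, List


def entry_hash(prev_hash: str, record: Dict[str, str]) -> str:
    # Hash the record together with the previous hash, chaining the entries.
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


class SharedLedger:
    """Hypothetical append-only log that three robots write to and read from."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    def append(self, robot_id: str, kind: str, detail: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else "genesis"
        record = {"robot": robot_id, "kind": kind, "detail": detail}
        digest = entry_hash(prev, record)
        self.entries.append({**record, "prev": prev, "hash": digest})
        return digest

    def is_consistent(self) -> bool:
        # Recompute every hash; any edited entry breaks the chain.
        prev = "genesis"
        for entry in self.entries:
            record = {k: entry[k] for k in ("robot", "kind", "detail")}
            if entry["prev"] != prev or entry_hash(prev, record) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = SharedLedger()
ledger.append("collector", "observation", "air sample taken")
ledger.append("analyzer", "analysis", "reading above threshold")
ledger.append("actuator", "action", "vent opened")
print(ledger.is_consistent())
```

If any robot later rewrites an earlier entry, `is_consistent()` returns `False`, so all three can check that they are acting on the same history.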

What makes Fabric interesting is that it treats robots less like isolated tools and more like participants inside a coordinated network where data, computation, and rule enforcement interact through a public ledger.

Right now it still feels early.

But history tends to follow familiar patterns.

First comes the breakthrough technology. Then comes the network layer that allows everything to coordinate. And that second layer often ends up shaping the entire ecosystem.

I keep noticing that many conversations still revolve around machines becoming smarter.

That will matter, of course.

But coordination may end up mattering more. Because when machines begin working together, intelligence alone is not enough.

They need infrastructure that allows them to agree on reality.