For a long time, I was skeptical of anything that sounded like “AI verification.”

Not because reliability isn’t important. Anyone who has worked with real systems knows reliability is everything.

But the phrase usually attracts solutions that try to package a deeply complicated problem into a clean label and sell it as a product.

AI already has plenty of labels.

Yet sometimes you can tell when an idea comes not from a pitch deck but from a real operational pain point. The moment AI systems start touching real-world decisions, that pain point becomes obvious.

Money moves.

Access gets granted.

Claims get approved or denied.

Compliance reports are filed.

Medical notes are added to patient records.

Even something as simple as an automated refund decision in customer support can escalate into a dispute if nobody can explain how the decision was made.

This is exactly the problem that Mira Network is trying to solve.

Because the real question about AI isn’t:

“Is the model smart?”

It’s:

“What happens when the AI is wrong—and who can prove what happened?”

The Real Problem With AI Isn’t Errors

AI making mistakes isn’t new.

Humans make mistakes.

Spreadsheets make mistakes.

Databases contain errors.

The world has always been imperfect.

The problem with modern AI is different.

AI often produces answers that sound perfectly correct even when they are wrong.

There’s no hesitation.

No visible uncertainty.

No built-in evidence trail.

The output looks finished.

That changes how people interact with it.

And that’s where the reliability problem begins.

Reliability isn’t just about how good a model is.

Reliability is about the entire workflow around the model.

If a system rewards speed, people accept plausible answers.

If a system punishes mistakes, people demand evidence.

The way AI gets used adapts to the environment you drop it into.

Right now, most environments reward speed.

And that’s exactly why Mira Network approaches the problem differently.

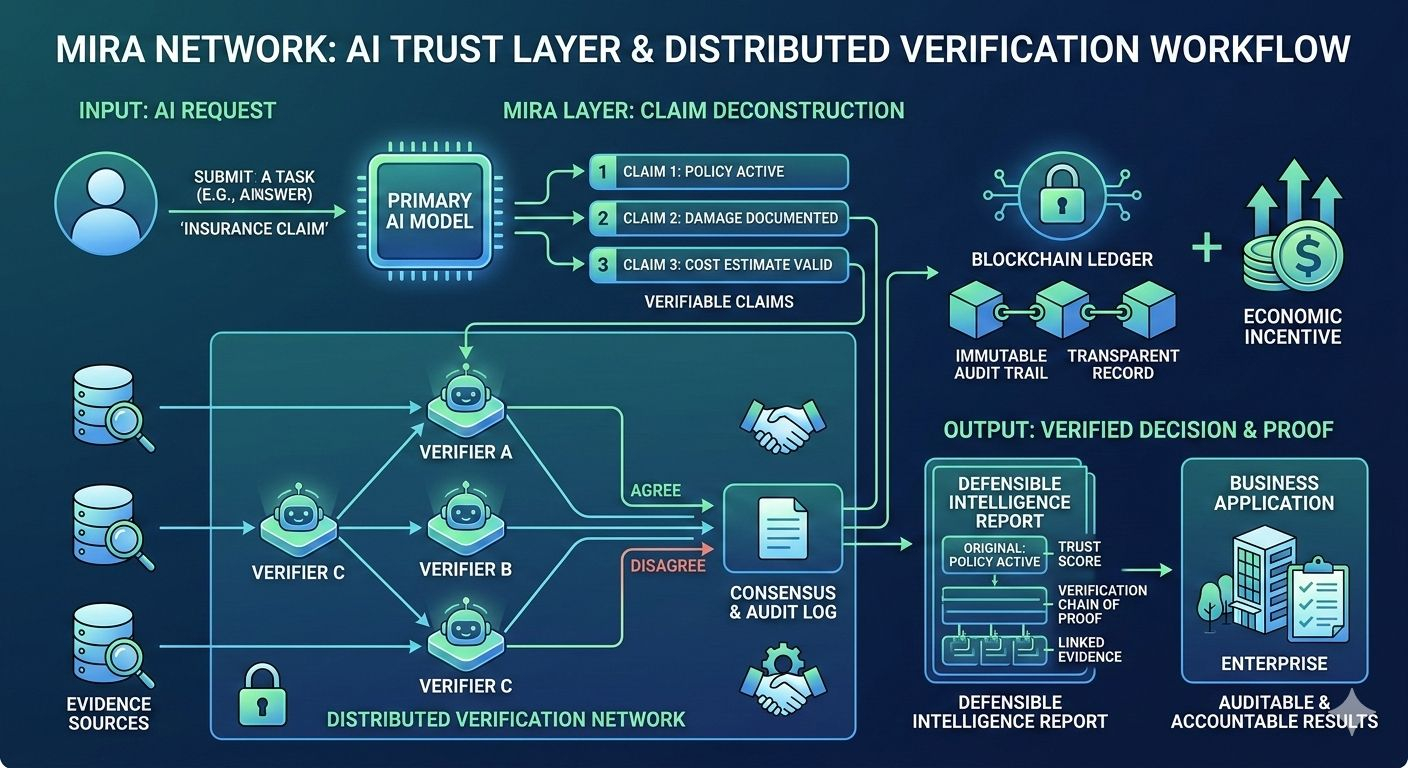

Instead of treating AI outputs as final answers, Mira treats them as claims that must be verified.

Why Traditional AI Safety Methods Fall Short

When organizations realize AI errors can create risk, they usually reach for familiar solutions.

They add:

Human reviewers

More prompts

More rules

Logging systems

Internal evaluation dashboards

None of these are useless.

But they rarely solve the core problem.

Take human review.

In theory, a human checking AI output sounds responsible.

In practice, something predictable happens:

The AI output becomes the default.

The human becomes a rubber stamp.

Not because humans are careless, but because the queue is long, the workload is high, and organizations prioritize throughput.

The question shifts from:

“Is this correct?”

to

“Did someone review it?”

And those are very different questions.

Fine-tuning creates another treadmill.

Policies change.

Data drifts.

Edge cases appear.

Even after retraining, the same fundamental issue remains:

When something goes wrong, can you prove how the decision was made?

This is the gap that Mira Network is designed to fill.

Mira Network Changes the Shape of AI Output

Mira Network doesn’t try to make AI perfect.

Instead, it changes how AI outputs are structured and validated.

Rather than producing a single confident answer, Mira breaks outputs into verifiable claims.

Each claim can then be independently checked by other AI systems within the network.

This transforms AI output from:

A single block of text

into

A set of traceable assertions with verification results.

That difference is enormous in high-stakes environments.

It aligns much more closely with how real institutions operate.

Compliance teams don’t approve documents because they “feel right.”

They approve them because specific claims meet specific standards.

Mira brings that same structure to AI.
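To make the idea of "claims instead of a block of text" concrete, here is a minimal sketch in Python. It is not Mira's actual API or data model: the `Claim` and `Status` types and the `split_into_claims` helper are hypothetical names invented for illustration, and splitting on sentence boundaries is a deliberately naive stand-in for real claim extraction.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    UNVERIFIED = "unverified"
    SUPPORTED = "supported"
    REJECTED = "rejected"


@dataclass
class Claim:
    """One traceable assertion extracted from a model's output (hypothetical shape)."""
    claim_id: int
    text: str
    status: Status = Status.UNVERIFIED
    evidence: list[str] = field(default_factory=list)


def split_into_claims(output: str) -> list[Claim]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    sentences = [s.strip() for s in output.split(".") if s.strip()]
    return [Claim(claim_id=i, text=s) for i, s in enumerate(sentences, start=1)]


claims = split_into_claims(
    "The refund was requested within 30 days. The item was returned unopened."
)
for claim in claims:
    print(claim.claim_id, claim.status.value, claim.text)
```

The point of the structure is that each assertion carries its own status and evidence, so a reviewer or auditor can approve or reject specific claims rather than the output as a whole.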

Distributed Verification Instead of Single-Point Trust

Another core idea behind Mira Network is distributed verification.

Instead of trusting one model—or one organization—to determine whether an AI output is correct, Mira allows multiple independent AI verifiers to evaluate claims.

These verifiers reach consensus about whether a claim is supported by evidence.

The process creates a transparent record that shows:

• What the original AI claimed

• Which verifiers checked it

• What evidence was used

• Where verifiers agreed or disagreed

This record becomes part of the Mira verification layer.

And that record matters far more than people think.

Because when disputes happen, nobody cares that your AI was “state-of-the-art.”

They care about what you can prove.
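The kind of record described above can be sketched as follows. This is an illustration under stated assumptions, not Mira's consensus mechanism: the `Verdict` type, the `build_record` function, the verifier names, and the two-thirds threshold are all hypothetical, and a simple vote count stands in for whatever consensus rule the network actually uses.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    verifier: str    # hypothetical verifier identifier
    supported: bool  # whether this verifier found the claim supported
    evidence: str    # what the verifier relied on


def build_record(claim: str, verdicts: list[Verdict], threshold: float = 2 / 3) -> dict:
    """Aggregate verdicts into one record; the threshold is an assumed supermajority."""
    if not verdicts:
        raise ValueError("at least one verdict is required")
    supporting = [v.verifier for v in verdicts if v.supported]
    dissenting = [v.verifier for v in verdicts if not v.supported]
    support_ratio = len(supporting) / len(verdicts)
    return {
        "claim": claim,                                  # what the original AI claimed
        "verifiers": [v.verifier for v in verdicts],     # which verifiers checked it
        "evidence": [v.evidence for v in verdicts],      # what evidence was used
        "supporting": supporting,                        # where verifiers agreed
        "dissenting": dissenting,                        # where verifiers disagreed
        "consensus": "supported" if support_ratio >= threshold else "not supported",
    }


record = build_record(
    "The item was returned unopened.",
    [
        Verdict("verifier-a", True, "warehouse intake scan"),
        Verdict("verifier-b", True, "warehouse intake scan"),
        Verdict("verifier-c", False, "customer email mentions testing the item"),
    ],
)
print(record["consensus"], record["dissenting"])
```

Note that the dissenting verifier and its evidence stay in the record even though the claim passes. In a dispute, that disagreement is often the most useful part.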

Why Cryptographic Infrastructure Matters

At first glance, it may seem strange that blockchain infrastructure appears in this discussion.

But the reason is simple.

Blockchains are fundamentally tamper-resistant record systems.

They are designed to create shared logs that multiple parties can rely on without trusting a single operator.

In the case of Mira Network, blockchain infrastructure ensures that verification results are:

Immutable

Transparent

Auditable

This doesn’t magically guarantee correctness.

But it guarantees something equally important:

History cannot quietly be rewritten after the fact.

That matters enormously in regulated environments where auditability is essential.
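A small hash chain shows why this kind of log resists quiet edits. The sketch below is a single-process toy, not a blockchain and not Mira's implementation: the function names and record fields are hypothetical, and a real network adds replication and consensus across independent parties on top of this basic idea.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list, payload: dict) -> None:
    """Append an entry that commits to everything recorded before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev_hash,
                "hash": entry_hash(prev_hash, payload)})


def is_intact(log: list) -> bool:
    """Recompute every hash; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"claim": "The item was returned unopened.", "consensus": "supported"})
append(log, {"claim": "The refund was requested within 30 days.", "consensus": "supported"})
print(is_intact(log))  # True

log[0]["payload"]["consensus"] = "not supported"  # quietly edit history
print(is_intact(log))  # False
```

Because every entry's hash depends on the one before it, changing an old result means recomputing every later hash, which is exactly the kind of rewrite that other holders of the log would notice.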

Trust Needs Economic Incentives

Another important aspect of Mira Network is the incentive structure.

Verification doesn’t happen automatically.

It requires resources.

Mira introduces economic incentives that reward participants in the network for verifying AI claims accurately.

In other words:

Verification becomes a service that is priced and rewarded.

This matters because organizations behave according to cost structures.

If verification is expensive, it won’t happen.

If verification is cheap and automated, it becomes standard practice.

Mira’s long-term vision is to make trust cheaper than failure.
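"Cheaper than failure" can be read as a simple break-even condition. The sketch below is my own framing rather than anything Mira publishes: the function name, the parameters, and every number in the example are made up for illustration.

```python
def verification_pays_off(cost_per_check: float, error_rate: float,
                          cost_per_error: float, catch_rate: float) -> bool:
    """True when the expected loss avoided per decision exceeds the cost of one check.

    error_rate:     fraction of AI decisions that are wrong
    cost_per_error: average loss when a wrong decision goes through
    catch_rate:     fraction of wrong decisions that verification catches
    """
    expected_loss_avoided = error_rate * catch_rate * cost_per_error
    return expected_loss_avoided > cost_per_check


# Illustrative numbers only: 2% error rate, 500 lost per bad decision,
# verification catches 80% of errors.
print(verification_pays_off(0.05, 0.02, 500.0, 0.8))  # True: a cheap check is worth it
print(verification_pays_off(10.0, 0.02, 500.0, 0.8))  # False: the check costs more than it saves
```

With these assumed numbers the expected loss avoided is 8 per decision, so verification makes sense at 0.05 per check and stops making sense at 10. The whole argument turns on pushing the cost per check well below that line.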

Where Mira Network Can Be Used

The most realistic use cases for Mira are not glamorous.

They are operational systems where mistakes create real financial or legal risk.

Examples include:

Insurance claims processing

Credit and lending decisions

Healthcare billing and coding

Compliance and sanctions screening

Enterprise procurement workflows

Financial reporting automation

In these systems, the biggest problem isn’t occasional AI errors.

The real problem is the absence of defensible decision records.

Mira Network creates those records.

The Risks Mira Still Needs to Solve

No infrastructure system is perfect.

Mira Network faces real challenges.

Verification must remain fast enough for operational workflows.

Costs must remain lower than those of the human processes it replaces.

The system must guard against verifier collusion and groupthink, since verifiers built on similar models can share the same blind spots.

Verification standards must be meaningful, not just a symbolic checkbox.

And institutions will inevitably ask difficult questions about governance and accountability.

These challenges are unavoidable.

But they are also the exact problems that any AI trust infrastructure must eventually address.

The Quiet Role Mira Is Trying to Play

When you step back, Mira Network is not trying to “fix AI.”

That’s an impossible goal.

Instead, Mira is attempting something more practical.

It is trying to give AI outputs a structure that fits into existing human systems of trust.

Systems built on:

Audit trails

Evidence

Verification

Accountability

That kind of infrastructure is rarely exciting.

But it is what makes complex systems reliable.

You usually only notice it when it’s missing.

As AI moves from answering questions to making real decisions, systems like Mira may become essential.

Because at that stage, the goal isn’t impressive intelligence.

The goal is defensible intelligence.

And that is the real problem Mira Network is trying to solve.