Most coordination systems look fine when activity is light.

Agents act.

Logs record.

Decisions propagate.

Nothing unusual.

Pressure reveals the structure.

A task executes.

State updates.

Another agent reacts to that state.

Minutes later a governance parameter tightens or a verification window resolves differently. Nothing “fails.” But the meaning of the earlier action quietly shifts.

That is the pattern I keep watching with $ROBO inside Fabric Protocol.

Not whether agents can act.

Whether meaning holds once activity stacks.

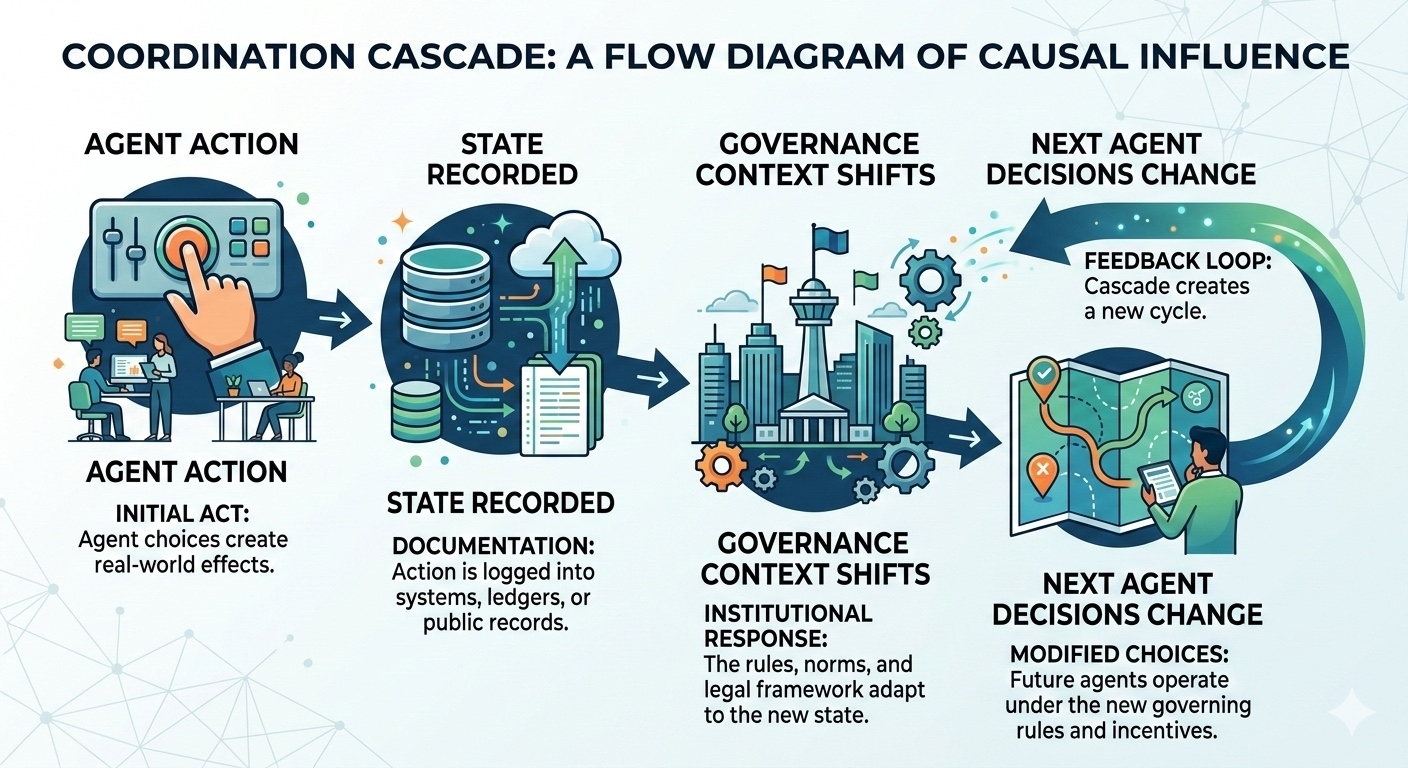

Because in agent-native infrastructure, actions don’t sit alone. They cascade. Execution influences state. State influences governance context. Governance context shapes what future agents are allowed to do.

If interpretation changes after those layers propagate, the network does not collapse. It reallocates work. Humans step in to reconcile what automation already advanced.

The cost appears slowly.

The first signal to watch is reinterpretation frequency.

How often does an accepted outcome keep its form but change its consequence later?

Rare reinterpretations are manageable. Systems expect occasional adjustments.

But when reinterpretations cluster around busy periods or governance updates, behavior adapts quickly. Teams begin inserting waiting periods. Extra checks appear. Downstream actions pause.
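One way to make that signal concrete is a minimal sketch like the one below. It is not Fabric Protocol's API: the `Outcome` record, its fields, and the one-hour bucket are all assumptions for illustration. It groups accepted outcomes by the window in which they were accepted and reports what share of each window was later reinterpreted, so clustering around busy periods shows up as a spike.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass(frozen=True)
class Outcome:
    """Hypothetical record of an accepted outcome (not a real Fabric type)."""
    outcome_id: str
    accepted_at: float                      # seconds since some epoch
    reinterpreted_at: Optional[float] = None  # None if the meaning never changed


def reinterpretation_rate_by_window(
    outcomes: Iterable[Outcome], window_seconds: float = 3600.0
) -> Dict[int, float]:
    """Share of outcomes per acceptance window that were later reinterpreted."""
    totals: Dict[int, int] = defaultdict(int)
    changed: Dict[int, int] = defaultdict(int)
    for o in outcomes:
        bucket = int(o.accepted_at // window_seconds)
        totals[bucket] += 1
        if o.reinterpreted_at is not None:
            changed[bucket] += 1
    return {b: changed[b] / totals[b] for b in sorted(totals)}
```

A flat, low rate across windows is the manageable case. A rate that jumps in the busiest windows is the one that pushes teams toward waiting periods.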

Autonomy quietly becomes supervised automation.

That shift rarely shows in headline metrics. It shows in how participants design around uncertainty.

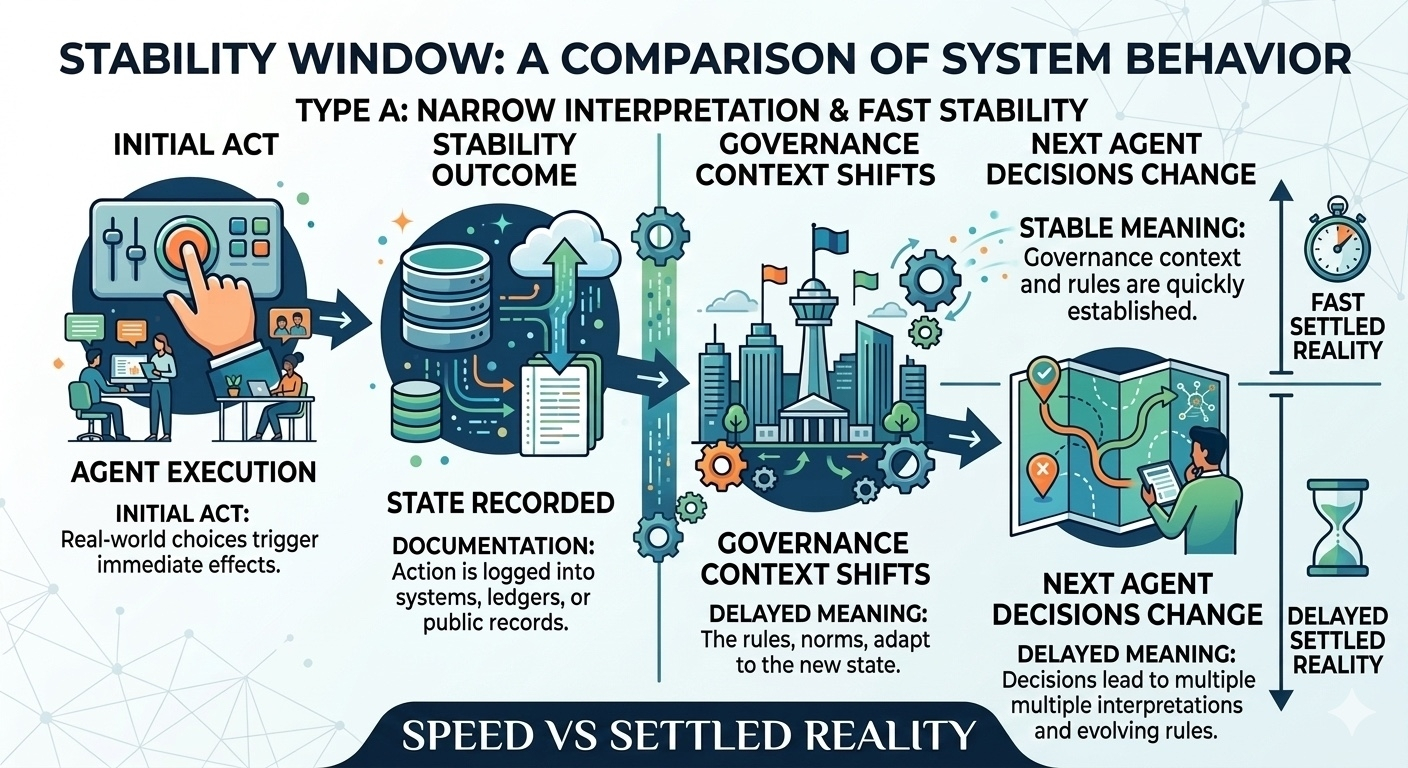

The second signal is time to stable meaning.

Execution speed is easy to celebrate. But speed without stability simply moves uncertainty forward.

An action that executes instantly but takes minutes for its interpretation to settle is not efficient. It is deferred ambiguity.

Healthy systems compress that window after stress.

Unhealthy ones normalize it.
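Time to stable meaning can be sketched as the gap between execution and the last change in interpretation. The function names and the timestamp inputs below are assumptions of mine, not anything the protocol exposes.

```python
from statistics import median
from typing import List, Sequence, Tuple


def time_to_stable_meaning(
    executed_at: float, interpretation_changes: Sequence[float]
) -> float:
    """Seconds from execution to the last interpretation change (0.0 if none)."""
    later = [t for t in interpretation_changes if t >= executed_at]
    return max(later) - executed_at if later else 0.0


def median_settle_time(actions: List[Tuple[float, Sequence[float]]]) -> float:
    """Median settle time over (executed_at, interpretation_changes) pairs."""
    return median([time_to_stable_meaning(e, c) for e, c in actions])
```

Tracking the median per period, rather than a single action, is what shows whether the window is compressing or being normalized.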

The third signal is explanatory clarity.

When reinterpretation happens, explanation determines whether the system learns.

If reason codes remain stable, builders can automate reconciliation. Agents can replay logic. Systems adapt.

If explanations drift, reconciliation becomes manual. Operators intervene. Automation slows.
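A rough sketch of why stable reason codes matter: if the set of codes is fixed, reconciliation can be routed automatically, and only unrecognized explanations fall back to an operator. The `ReasonCode` values here are hypothetical, loosely modeled on the governance and verification examples earlier in this post, and are not taken from Fabric Protocol.

```python
from enum import Enum
from typing import Iterable, List, Tuple


class ReasonCode(Enum):
    """Hypothetical stable reason codes (illustrative only)."""
    GOVERNANCE_PARAM_TIGHTENED = "governance_param_tightened"
    VERIFICATION_WINDOW_RESOLVED = "verification_window_resolved"


def route_reinterpretations(
    events: Iterable[Tuple[str, str]]
) -> Tuple[List[str], List[str]]:
    """Split (event_id, reason) pairs into automated and manual queues."""
    known = {code.value for code in ReasonCode}
    automated: List[str] = []
    manual: List[str] = []
    for event_id, reason in events:
        # A recognized code can be replayed by an agent; anything else needs a human.
        (automated if reason in known else manual).append(event_id)
    return automated, manual
```

The share of events landing in the manual queue is a simple proxy for how far explanations have drifted.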

That is where the infrastructure underneath $ROBO matters.

The protocol is backed by the Fabric Foundation, and its goal is not just activity. It is verifiable computing and transparent coordination between agents, data, and governance.

That means adjustments must remain legible.

Because legibility determines whether complexity compounds or stabilizes.

Markets tend to measure excitement.

Systems reveal discipline differently.

Compare a calm period with a high-activity one. Watch whether interpretation windows tighten again. Watch whether explanations stay consistent.
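That comparison can be sketched as a before, during, and after check on interpretation windows. The inputs are lists of settle times in seconds, and the 1.25 tolerance is an arbitrary assumption of mine, not a threshold from the protocol.

```python
from statistics import median
from typing import Dict, Sequence, Union


def window_recovery(
    calm: Sequence[float],
    stressed: Sequence[float],
    after: Sequence[float],
    tolerance: float = 1.25,  # assumed: "healed" means within 25% of baseline
) -> Dict[str, Union[float, bool]]:
    """Compare median interpretation windows across calm, stressed, and later periods."""
    baseline = median(calm)
    return {
        "stress_ratio": median(stressed) / baseline,
        "recovered": median(after) <= baseline * tolerance,
    }
```

A high stress ratio followed by `recovered` being true is a scar that healed. A window that stays wide after the pressure is gone is a buffer.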

Healthy networks show scars that heal.

Unhealthy ones accumulate small buffers everywhere.

And buffers always mean the same thing.

Someone is waiting before acting.