A few months ago I watched a short video of a warehouse where almost everything moved on its own. Small robots carried shelves from one side of the building to the other while humans stood nearby checking screens. Nothing about the scene looked dramatic. It felt strangely ordinary. That is probably the most interesting part. Machines making decisions used to sound futuristic. Now they simply show up in daily work environments and people accept them quietly.

But acceptance is not the same as trust.

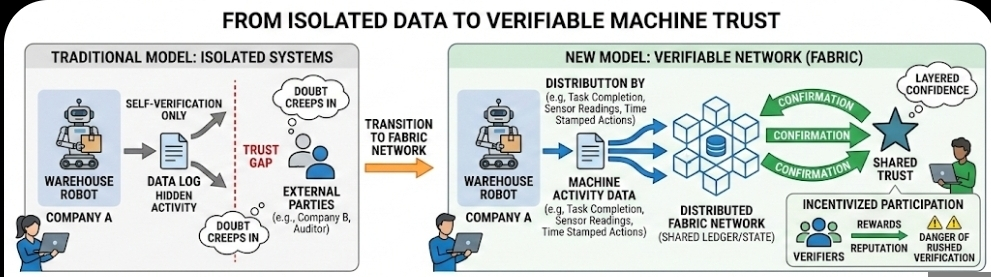

Most autonomous systems today operate inside controlled environments where the organization running them already assumes responsibility. A company owns the robots, the software, and the logs that track what happened. If something goes wrong, the company checks its internal records and decides what went wrong. This works when everything stays inside one system. The moment machines begin interacting across different organizations, things become less comfortable.

Think about a delivery drone moving goods between two companies or a robot verifying inventory inside a shared warehouse. The machine might claim it completed a task, but who confirms that claim? Usually the answer is simple: the system that produced the data verifies itself. And that is where doubt quietly creeps in. When the same machine that performs an action also produces the only record of that action, the idea of verification becomes a little thin.

Fabric is trying to address that small but important gap.

Instead of letting machines operate inside isolated data environments, Fabric attempts to create a structure where machine activity can be recorded in a way others can inspect. Not just the operator. Potentially anyone participating in the network. In simple terms, the system treats machine behavior as something that should leave a verifiable trail. A robot moving an item, a sensor recording a measurement, a machine completing a task—these events become pieces of data that other participants can check.
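To make the idea of a "verifiable trail" a little more concrete, here is a minimal sketch of a tamper-evident event log. It is not Fabric's actual mechanism, which this post does not describe in detail. The names `Entry`, `append`, `verify`, and the machine IDs are all hypothetical, chosen only for illustration. Each record carries a hash of the one before it, so anyone holding a copy can check whether an entry was changed after the fact.

```python
import hashlib
import json
from dataclasses import dataclass, replace

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


@dataclass(frozen=True)
class Entry:
    machine_id: str   # hypothetical identifier, e.g. "robot-7"
    event: str        # free-text description of what the machine reports
    prev_hash: str    # hash of the previous entry (or GENESIS)
    entry_hash: str   # hash over this entry's own fields


def _digest(machine_id: str, event: str, prev_hash: str) -> str:
    # Serialize deterministically so every participant computes the same hash.
    payload = json.dumps(
        {"machine_id": machine_id, "event": event, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list[Entry], machine_id: str, event: str) -> list[Entry]:
    # Link the new entry to the hash of the last one.
    prev = log[-1].entry_hash if log else GENESIS
    return log + [Entry(machine_id, event, prev, _digest(machine_id, event, prev))]


def verify(log: list[Entry]) -> bool:
    # Recompute every hash and check that each entry points at its predecessor.
    prev = GENESIS
    for entry in log:
        expected = _digest(entry.machine_id, entry.event, entry.prev_hash)
        if entry.prev_hash != prev or entry.entry_hash != expected:
            return False
        prev = entry.entry_hash
    return True


log = append([], "robot-7", "moved shelf A12 to dock 3")
log = append(log, "sensor-2", "temperature 4.1C at dock 3")
print(verify(log))  # the untouched log checks out

# Quietly rewriting an old event breaks the chain for anyone who re-checks it.
tampered = [replace(log[0], event="moved shelf B99 to dock 3")] + log[1:]
print(verify(tampered))
```

A log like this only shows that a record was not altered later. It says nothing about whether the robot actually did what it reported.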

This might sound technical, but the logic is actually very familiar. Financial systems already rely on shared records. When money moves between accounts, the record exists outside the control of a single participant. Multiple systems confirm the same transaction. Fabric tries to extend that thinking into the physical world where machines are doing work.

The challenge is that physical activity is messy.

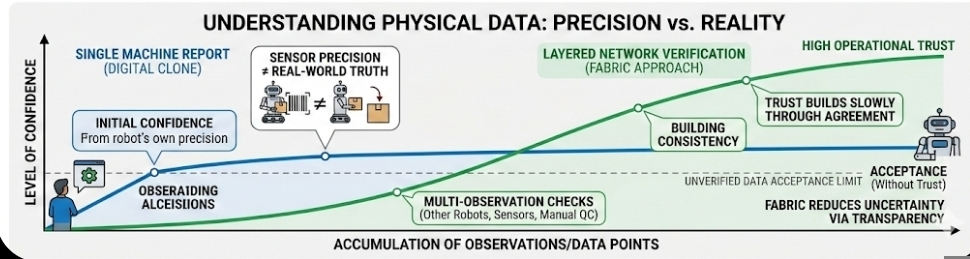

Machines generate enormous amounts of data, but that data does not automatically prove anything. A robot can report that it lifted a package. A drone can report that it delivered a parcel. Sensors can record movement, location, or temperature. Yet each of those signals depends on hardware that might fail or misreport. A digital record can be precise and still be wrong about reality.

Fabric’s approach seems to acknowledge this indirectly. Instead of relying on a single data point, the network encourages multiple forms of observation and verification. If several participants check the same machine-generated claim, confidence gradually increases. It is less about proving something absolutely and more about building a layered picture that becomes difficult to falsify.
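A small worked example shows why confidence can rise "gradually" rather than jump to certainty. This is a toy model of my own, not something taken from Fabric: it assumes every verifier is right with the same probability and that their checks are fully independent, which real sensors and real people rarely are. The function name and the numbers are illustrative.

```python
def posterior_after_confirmations(prior: float, accuracy: float, confirmations: int) -> float:
    """Probability a machine's claim is true after n independent confirmations.

    prior:         belief in the claim before anyone checks it (0 < prior < 1)
    accuracy:      chance a single verifier judges correctly (0.5 < accuracy < 1)
    confirmations: number of verifiers who independently confirmed the claim
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    if not 0.5 < accuracy < 1.0:
        raise ValueError("accuracy must be strictly between 0.5 and 1")
    if confirmations < 0:
        raise ValueError("confirmations must be non-negative")

    # Bayes' rule: weigh "claim is true and everyone confirmed correctly"
    # against "claim is false and everyone confirmed by mistake".
    true_path = prior * accuracy ** confirmations
    false_path = (1.0 - prior) * (1.0 - accuracy) ** confirmations
    return true_path / (true_path + false_path)


# Starting from a coin-flip prior with verifiers who are right 80% of the time.
for n in range(5):
    print(n, round(posterior_after_confirmations(0.5, 0.8, n), 3))
```

Under these assumptions, one confirmation moves belief from 0.5 to 0.8 and a second moves it to roughly 0.94. The value keeps climbing but never reaches 1, which matches the idea of a layered picture rather than absolute proof. If verifiers share the same faulty sensor feed, the independence assumption fails and the real gain is smaller.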

This idea becomes especially interesting when incentives appear.

Networks rarely run on goodwill alone. In some designs connected to Fabric-like systems, participants who verify machine activity may receive rewards. Verification becomes a kind of job. Someone checks whether the data produced by a machine is consistent with other signals, and the system compensates them for doing that work carefully.

The moment incentives enter the picture, behavior changes. Anyone who has spent time on Binance Square understands this instinctively. Visibility metrics—likes, comments, rankings—shape how people write and what they choose to discuss. Content that fits the platform’s feedback loops spreads faster. Over time, creators begin adapting their style to the environment.

Verification networks may develop similar patterns. If reputation or rewards depend on confirming machine data accurately, participants will pay close attention to the signals that affect credibility. Who verified correctly. Who rushed. Who disagreed with the majority and turned out to be right.

Of course incentives can also distort behavior. If verification becomes profitable, some participants may try to validate data too quickly just to collect rewards. That risk exists in every incentive system. The real question is whether the network structure encourages careful verification or shallow agreement.
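The difference between careful verification and shallow agreement can be sketched with two toy payout rules. Neither is Fabric's reward design, which I have not seen specified. The function names, the fixed stake, and the verifier names are hypothetical. The first rule pays whoever sided with the majority. The second pays whoever matched the outcome that was established later.

```python
from collections import Counter


def majority_payout(votes: dict[str, bool], stake: float = 1.0) -> dict[str, float]:
    # Reward agreement with the crowd. Assumes an odd number of votes, so no tie.
    majority = Counter(votes.values()).most_common(1)[0][0]
    return {name: (stake if vote == majority else -stake) for name, vote in votes.items()}


def outcome_payout(votes: dict[str, bool], outcome: bool, stake: float = 1.0) -> dict[str, float]:
    # Reward agreement with what later turned out to be true.
    return {name: (stake if vote == outcome else -stake) for name, vote in votes.items()}


# Two verifiers confirm a delivery quickly; one checks more closely and disputes it.
votes = {"alice": True, "bob": True, "carol": False}

print(majority_payout(votes))
print(outcome_payout(votes, outcome=False))  # suppose the delivery later proves false
```

Under the majority rule the dissenter loses her stake even when she is right, so copying the crowd is the safe strategy. Under the outcome rule she is the only one paid. The catch is that someone still has to establish the outcome, and in the physical world that is often the hard part.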

Another issue sits quietly in the background. Even if machine activity is becoming visible and verifiable, humans still need to interpret what the data really means. A robot might record that it moved an object from one location to another. But was that the correct object? Was the destination correct? Did the action match the intended task? Data can confirm motion, timestamps, and location coordinates, but intention is harder to capture.

This is why trust between humans and machines rarely comes from the technology alone. It grows through layers of observation, correction, and sometimes disagreement. Systems like Fabric do not eliminate uncertainty. They try to make uncertainty easier to examine.

What I find interesting about the project is not the technical mechanism itself but the direction it hints at. Machines are no longer just tools executing instructions. They are becoming independent participants in complex environments—warehouses, transportation networks, supply chains. As their role expands, the question of how their actions are recorded and verified becomes unavoidable.

Fabric seems to be experimenting with one possible answer: treat machine behavior the same way financial systems treat transactions. Record it openly, allow others to inspect it, and let trust grow slowly through repeated verification.

Whether that approach works at large scale is still unclear. Physical systems are unpredictable in ways digital ledgers are not. Sensors fail. Environments change. Machines encounter situations no dataset prepared them for.

But the attempt itself says something important. The future relationship between humans and autonomous systems may depend less on how intelligent machines become and more on how visible their actions are once they start working among us.


Fabric’s Role in Creating Trust Between Humans and Autonomous Systems
