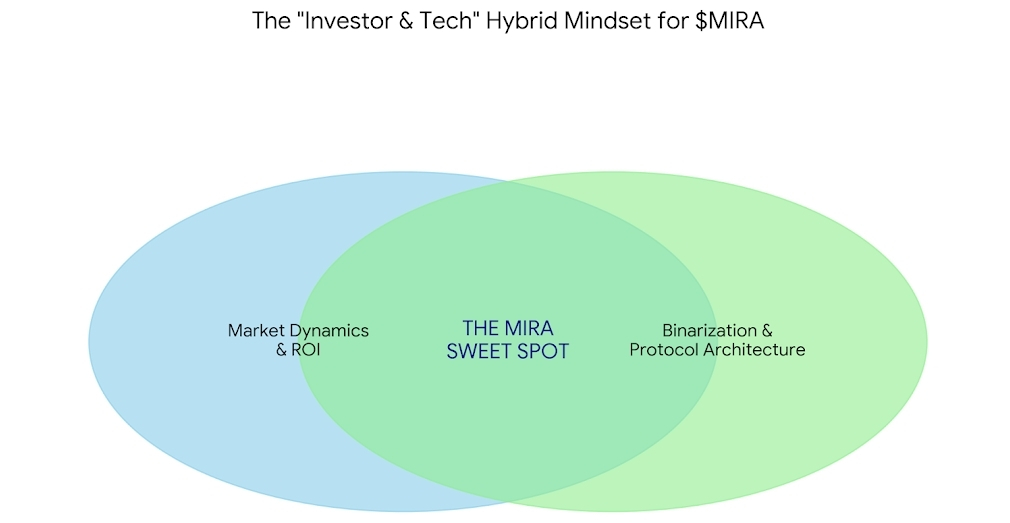

Over the past few years, I’ve noticed something interesting about crypto communities. The conversations that create the most value aren’t purely technical, and they’re not purely speculative either. The most engaging discussions happen somewhere in the middle — where investor curiosity meets real technological understanding. I like to call this the Investor & Tech hybrid mindset, and in my view it’s the perfect lens for understanding what projects like Mira and the $MIRA token are actually trying to build.

Crypto started as a financial revolution, but it has gradually evolved into something much bigger: a space where people collectively explore emerging technologies before the rest of the world fully understands them. Artificial intelligence is now entering that same conversation. But with AI comes a major challenge that many people are only beginning to notice — the problem of trust.

AI systems are incredibly powerful, but they have a strange flaw. They can generate answers that look completely convincing while still being wrong. These mistakes are often called hallucinations, and they highlight a deeper issue: most AI today operates like a black box. You receive an answer, but you have no reliable way to verify whether it’s actually correct.

This is the exact problem that Mira is trying to solve.

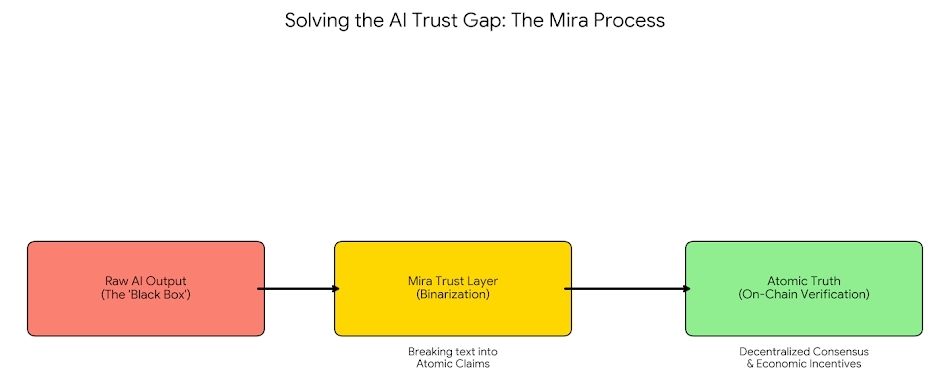

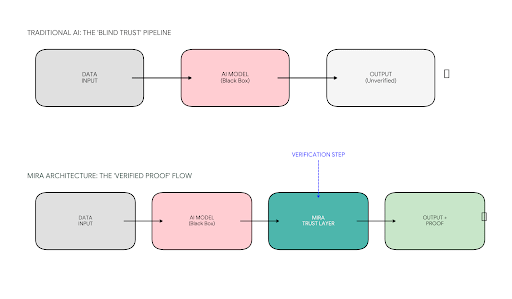

When I first explored Mira’s architecture, what stood out to me was its simplicity at the conceptual level. Traditional AI works like a pipeline: data goes into a model, the model produces an output, and users are expected to trust the result. There is no built-in mechanism to prove the answer is accurate.

Mira introduces something new in this flow — a verification layer.

Instead of blindly accepting an AI-generated answer, the system adds an additional step where the output can be validated. That means applications don’t just receive a response from a model. They receive something much more powerful: an output accompanied by proof that the result has been verified.

This simple change fundamentally alters how trust works in AI systems.

The more I think about it, the more it reminds me of what blockchain did for digital finance. Before Bitcoin, trusting online transactions required centralized intermediaries like banks. Blockchain introduced a system where transactions could be verified by a decentralized network rather than accepted on the word of a central authority.

Mira applies a similar philosophy to artificial intelligence.

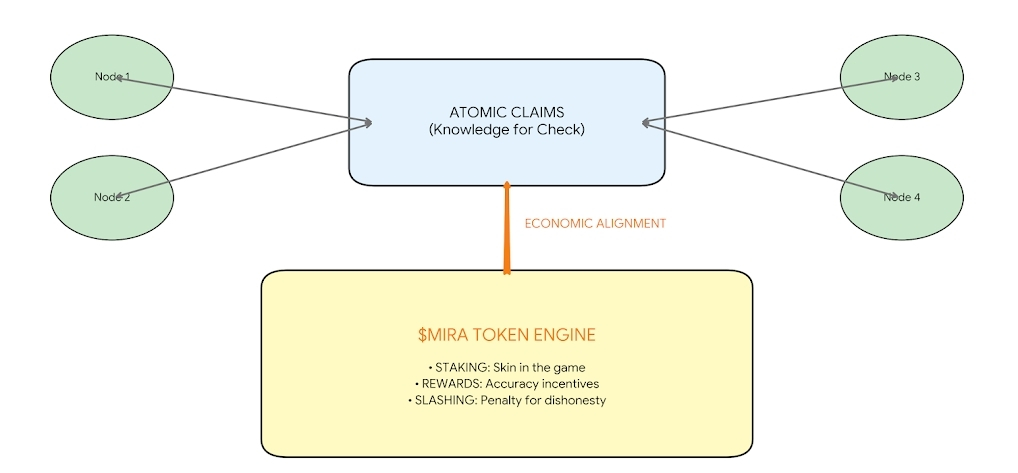

When an AI system generates an answer, Mira breaks that output into smaller factual components called claims. These claims are then evaluated by a decentralized network of verification models. Instead of relying on a single AI system to judge its own answer, multiple independent models analyze the claims and check whether they reach agreement.

If consensus is achieved, the output can be considered verified.
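To make the flow concrete, here is a minimal Python sketch of the idea as I understand it: split an output into claims, ask several independent verifiers about each claim, and mark the output as verified only if every claim reaches a quorum. It is a toy illustration, not Mira's actual implementation. The names `split_into_claims`, `verify_output`, and `quorum` are my own, the sentence-based splitting is a deliberately naive stand-in, and the "verifiers" are simple functions rather than real models.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier takes a claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    verified: bool


def split_into_claims(output: str) -> List[str]:
    # Naive stand-in for claim extraction: treat each sentence as one claim.
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, verifiers: List[Verifier], quorum: int) -> List[ClaimResult]:
    # Each claim is checked by every verifier independently; a claim counts
    # as verified when at least `quorum` verifiers accept it.
    results = []
    for claim in split_into_claims(output):
        approvals = sum(1 for verifier in verifiers if verifier(claim))
        results.append(
            ClaimResult(claim, approvals, len(verifiers), approvals >= quorum)
        )
    return results


def output_is_verified(results: List[ClaimResult]) -> bool:
    # Assumption: the whole output is verified only if every claim is.
    return bool(results) and all(r.verified for r in results)


# Toy verifiers standing in for independent models (assumed behavior).
verifiers = [
    lambda claim: "flat" not in claim,
    lambda claim: "flat" not in claim,
    lambda claim: True,  # an overly agreeable verifier
]

results = verify_output(
    "Paris is the capital of France. The Earth is flat.", verifiers, quorum=2
)
for r in results:
    print(f"{r.claim!r}: {r.approvals}/{r.total} approvals, verified={r.verified}")
print("output verified:", output_is_verified(results))
```

Notice that the one overly agreeable verifier cannot push the false claim through on its own, which is the whole point of requiring agreement among independent checkers.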

What’s fascinating to me is that this approach transforms AI responses from simple text into something much more structured: information that can be validated. In other words, the system doesn’t just produce knowledge — it produces knowledge that can be checked.

For applications that rely heavily on AI, this has enormous implications. Imagine autonomous agents making financial decisions, research assistants generating scientific insights, or educational tools answering complex questions. In all of these cases, the reliability of the output becomes incredibly important.

That’s where Mira’s verification layer becomes valuable.

But in crypto, infrastructure alone is never the full story. There also needs to be an economic system that aligns incentives, and that’s where the $MIRA token comes in.

From what I’ve studied, $MIRA acts as the economic engine of the entire network. Developers who want to verify AI outputs pay fees within the system. Validators participate in the verification process by staking tokens and contributing computational work. At the same time, governance mechanisms allow the community to influence how the protocol evolves over time.
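To picture how fees and staking could connect, here is a small hypothetical Python sketch. I have not seen a published formula for how Mira distributes fees, so the pro rata split by stake below is purely my assumption, and `split_fee` and the validator names are invented for illustration. Amounts are integers (think of the smallest token unit) to avoid rounding drift.

```python
from typing import Dict


def split_fee(fee: int, stakes: Dict[str, int]) -> Dict[str, int]:
    # Hypothetical rule: share a verification fee among validators in
    # proportion to their stake. This is an assumption, not a documented
    # Mira mechanism.
    total_stake = sum(stakes.values())
    if total_stake <= 0:
        raise ValueError("total stake must be positive")

    payouts = {name: fee * stake // total_stake for name, stake in stakes.items()}

    # Integer division can leave a few units undistributed; give them to
    # the largest staker so the payouts always sum to the fee.
    remainder = fee - sum(payouts.values())
    largest = max(stakes, key=stakes.get)
    payouts[largest] += remainder
    return payouts


# Made-up stakes for three validators.
stakes = {"validator_a": 500, "validator_b": 300, "validator_c": 200}
payouts = split_fee(100, stakes)
print(payouts)
```

Whatever the real rule turns out to be, the shape is the same: fees flow in from developers, and validators with tokens at stake are the ones who earn them.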

This creates a feedback loop where technology and economics reinforce each other. The more applications rely on verifiable AI outputs, the more activity flows through the network. And as the network grows, the incentive structure strengthens participation from validators and developers.

For crypto communities, this is where the Investor & Tech hybrid perspective becomes so powerful.

If someone only looks at the token price, they miss the technological significance of what’s being built. If someone only studies the technology, they may overlook the economic mechanisms that allow decentralized systems to function at scale.

But when both perspectives come together, the picture becomes much clearer.

Mira isn’t simply another AI project, and it’s not just another crypto token. What it’s attempting to build is something more foundational: a trust infrastructure for artificial intelligence.

As AI continues to integrate into everyday systems, the question of whether we can trust machine-generated information will only become more important. The internet already struggles with misinformation and manipulated content. Now imagine a world where billions of AI-generated responses are produced every day.

Without verification, distinguishing truth from confident error becomes incredibly difficult.

This is why the idea behind Mira resonates with me. Instead of asking users to trust AI blindly, the protocol introduces a framework where AI outputs can be verified, validated, and proven.

In many ways, it feels like the natural next step in the evolution of both AI and crypto.

Blockchain taught us how to verify transactions. Mira is exploring how we might verify intelligence itself.

And if that vision succeeds, the conversation around projects like Mira and $MIRA won’t just be about speculation or market cycles. It will be about building the trust layer that the future of AI might ultimately depend on.