When I first started using AI tools regularly, I mostly focused on what they could do. The speed was impressive. You ask something and a clear explanation appears in seconds. Sometimes it even explains complicated topics better than many articles do.

But after using these systems for a while, a small pattern begins to appear. Most answers look confident and structured, yet when you check the details, occasionally something is slightly wrong. Not a big mistake, just a number that does not match the source or a reference that cannot be found.

That is when you realize something important about modern AI systems. They are extremely good at producing language, but they do not always know whether the information they generate is actually true.

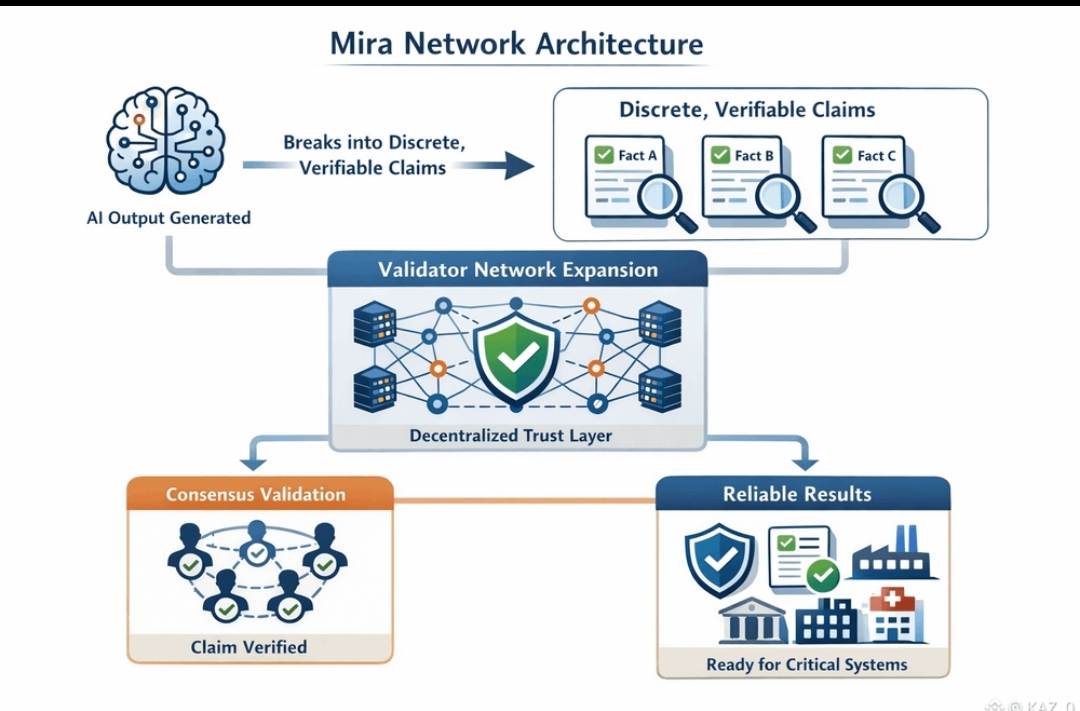

That thought is what made the idea behind Mira Network stand out to me. Mira is not trying to build another AI model. Instead, it focuses on a different layer of the problem: verification.

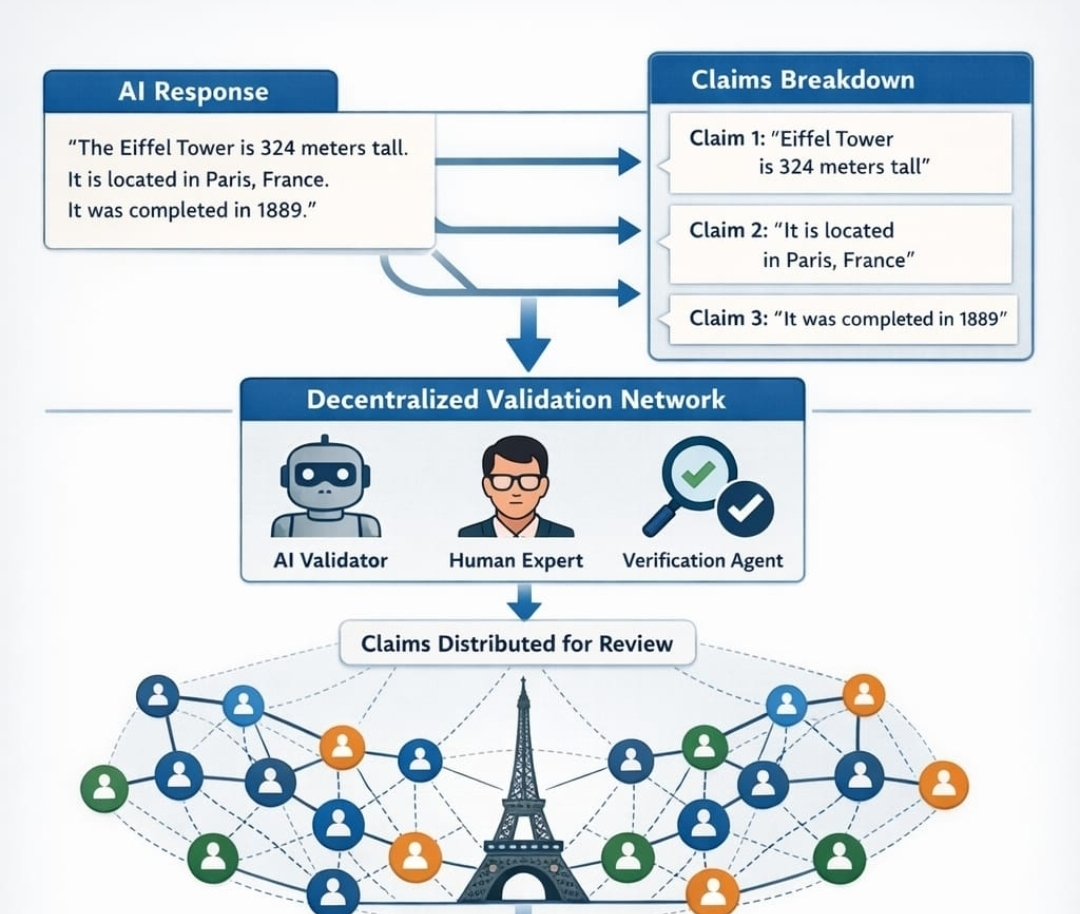

The approach is interesting because it does not treat an AI response as a single block of information. Instead, the response can be separated into smaller claims, and each claim can then be checked individually.

Those claims are sent to a network of validators. Some validators may be other AI systems. Others may be verification tools that examine the claim from different angles. The network then looks for agreement among these validators rather than trusting a single model.

When several independent validators reach the same conclusion, the claim can be considered verified and the result can be recorded onchain.
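
To make that flow a bit more concrete, here is a minimal sketch of how splitting a response into claims and looking for validator agreement might work. Everything in it is my own assumption for illustration: the function names, the sentence-based splitting, and the two-thirds threshold are hypothetical and are not taken from Mira's actual protocol or API. Recording the result onchain is left out entirely.

```python
from typing import Callable, Dict, List

# Hypothetical validator type: takes a claim and returns True if it
# judges the claim to be supported, False otherwise.
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive claim extraction (assumption): treat each sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, validators: List[Validator],
                 threshold: float = 2 / 3) -> bool:
    """Return True when at least `threshold` of the validators approve the claim.

    The two-thirds default is an illustrative choice, not a documented value.
    """
    if not validators:
        raise ValueError("at least one validator is required")
    approvals = sum(1 for validate in validators if validate(claim))
    return approvals / len(validators) >= threshold


def verify_response(response: str, validators: List[Validator],
                    threshold: float = 2 / 3) -> Dict[str, bool]:
    """Check every claim in a response independently."""
    return {
        claim: verify_claim(claim, validators, threshold)
        for claim in split_into_claims(response)
    }


if __name__ == "__main__":
    # Toy validators standing in for independent models or tools.
    toy_validators = [
        lambda claim: "Paris" in claim,
        lambda claim: "France" in claim,
        lambda claim: True,  # a careless validator that approves everything
    ]
    text = "Paris is the capital of France. Berlin is the capital of Spain"
    for claim, ok in verify_response(text, toy_validators).items():
        print(f"{'verified' if ok else 'rejected'}: {claim}")
```

The point of the sketch is the shape of the idea: a single careless validator that approves everything cannot push a bad claim through on its own, because the decision depends on agreement across several of them.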

This idea changes the trust model around AI outputs. Right now, when you read an AI-generated response, you usually have to verify it yourself. You search for sources, compare information, or double-check the details.

Mira tries to move part of that process into the network. Validators are rewarded for verifying information correctly and penalized if they approve something that turns out to be wrong. This creates an incentive for careful evaluation rather than blind confirmation.
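
A rough way to picture that incentive is a small stake-based settlement rule. This is only a sketch under my own assumptions: the `ValidatorAccount` class, the `settle` function, and the reward and penalty amounts are hypothetical, and the real network's economics may look quite different. I also assume that wrongly rejecting a true claim simply earns nothing, since the description above only mentions a penalty for approving something false.

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    """Hypothetical record of a validator and the stake it has at risk."""
    name: str
    stake: float


def settle(account: ValidatorAccount, approved: bool, claim_was_true: bool,
           reward: float = 1.0, penalty: float = 5.0) -> float:
    """Update a validator's stake after the truth of a claim is known.

    Assumed rules (illustrative only):
    - a correct verdict (approve a true claim, reject a false one) earns `reward`
    - approving a claim that turns out to be false costs `penalty`
    - rejecting a true claim leaves the stake unchanged
    The stake never drops below zero.
    """
    if approved == claim_was_true:
        account.stake += reward
    elif approved and not claim_was_true:
        account.stake = max(0.0, account.stake - penalty)
    return account.stake


if __name__ == "__main__":
    careful = ValidatorAccount("careful", stake=10.0)
    careless = ValidatorAccount("careless", stake=10.0)

    # Both validators see a claim that later turns out to be false.
    settle(careful, approved=False, claim_was_true=False)
    settle(careless, approved=True, claim_was_true=False)
    print(careful, careless)
```

Making the penalty larger than the reward is a deliberate choice in this sketch. If approving everything cost nothing, blind confirmation would be the easiest strategy, so the rule has to make a wrong approval more expensive than a correct one is profitable.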

What makes this concept more interesting is the direction AI technology is moving. Today, most systems still act as assistants whose answers are reviewed by humans. But new AI agents are already starting to perform tasks automatically across different digital systems.

In that environment, the reliability of information becomes much more important. A small error might not matter in a conversation, but it could matter a lot if an automated system is acting on that information.

That is why verification begins to look like infrastructure rather than a simple feature.

Mira is built around the idea that intelligence alone is not enough. AI models can generate answers, but another layer is needed to verify those answers collectively.

The more AI becomes integrated into research, finance, governance, and automation, the more valuable that verification layer may become. At some point, the most important question will not only be how intelligent the system is, but whether the information it produces can actually be trusted.