The more time I spend exploring modern AI systems, the more one uncomfortable truth becomes clear: fluency is not the same thing as accuracy.

Today’s AI models are incredibly good at producing natural language. They can write essays, explain complex ideas, and even simulate expert-level analysis. But beneath that smooth surface lies a persistent problem: AI can sound completely confident while quietly inserting incorrect facts, wrong numbers, or even fabricated references.

Most of the time we do not notice these errors because the response is convincing enough.

For casual use this may not matter much. But as AI begins assisting with research, financial analysis, automated decision systems, and critical infrastructure, that small margin of error becomes a serious issue.

This is the challenge that @Mira - Trust Layer of AI and $MIRA are trying to solve.

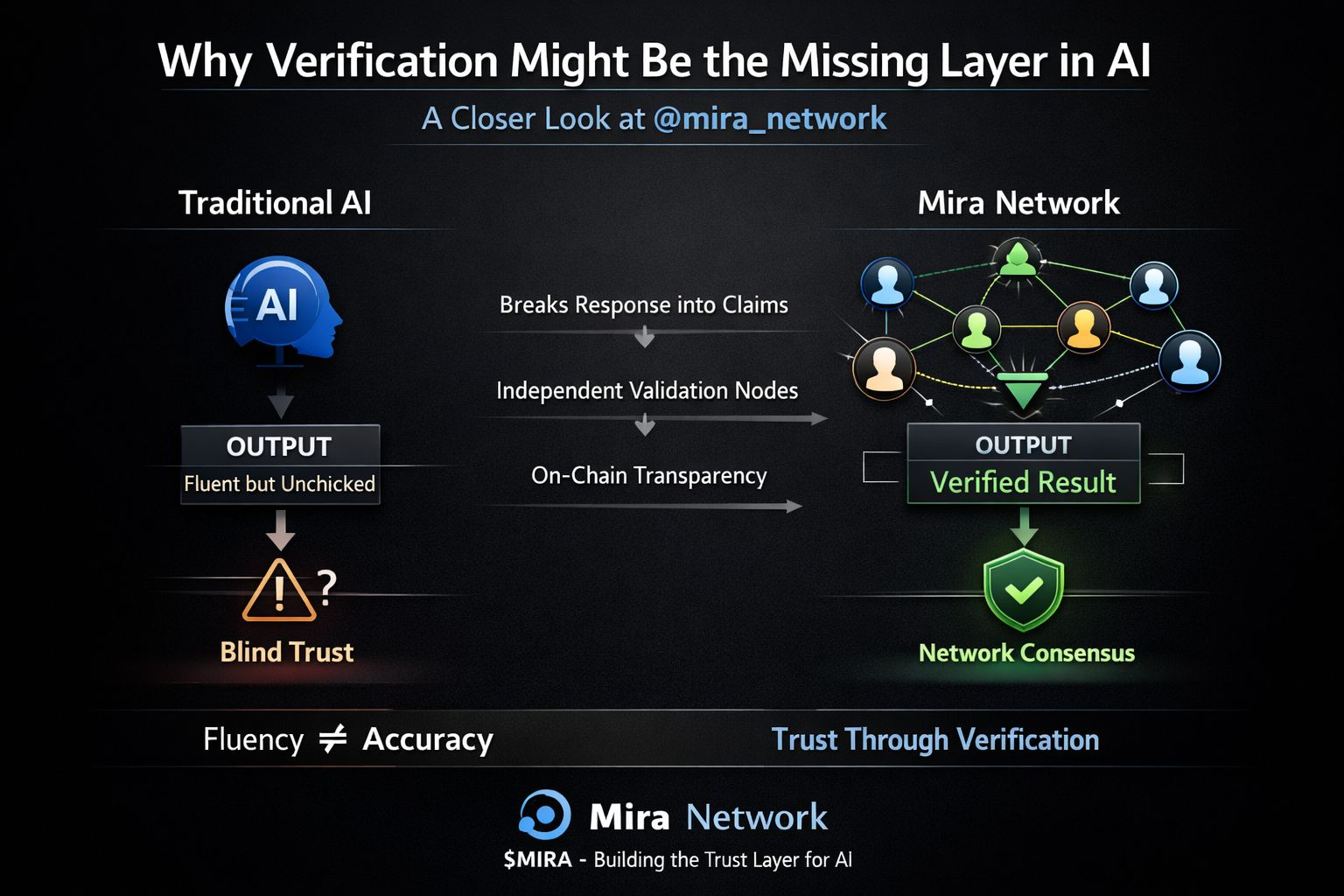

Instead of attempting the nearly impossible task of building a single AI model that never makes mistakes, Mira approaches the problem from a different direction: verification.

When an AI system produces an answer, Mira breaks the response into individual claims. Each claim can then be independently checked rather than blindly trusting the entire output.

Those claims are validated by a decentralized network of AI models and participants. Multiple perspectives evaluate whether each statement holds up under scrutiny. If the claims pass this distributed verification process, they are included in the final validated output.
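To make the idea concrete, here is a minimal sketch of claim-level verification by voting. It is not Mira's actual implementation: the sentence-based claim splitter, the `Verifier` type, the `quorum` value, and the toy verifiers are all assumptions made up for illustration. In a real network each verifier would be an independent model or participant, not a one-line function.

```python
import re
from typing import Callable, Dict, List

# Hypothetical type: a verifier takes one claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naively treat each sentence as one claim (an assumption for this sketch)."""
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    return [p for p in parts if p]


def verify_response(
    response: str, verifiers: List[Verifier], quorum: float = 0.66
) -> Dict[str, bool]:
    """Return, per claim, whether the share of approving verifiers reaches the quorum."""
    results: Dict[str, bool] = {}
    for claim in split_into_claims(response):
        votes = [verifier(claim) for verifier in verifiers]
        results[claim] = sum(votes) / len(votes) >= quorum
    return results


# Toy verifiers standing in for independent models.
def skeptical(claim: str) -> bool:
    return "cheese" not in claim


def also_skeptical(claim: str) -> bool:
    return "cheese" not in claim


def over_trusting(claim: str) -> bool:
    return True  # accepts everything, so it can be outvoted


response = "Paris is the capital of France. The Moon is made of cheese."
verdicts = verify_response(response, [skeptical, also_skeptical, over_trusting])
validated_output = [claim for claim, ok in verdicts.items() if ok]
print(validated_output)
```

The point of the sketch is the shape of the process: one over-trusting verifier cannot push a bad claim through on its own, because each claim needs agreement from a quorum of verifiers before it reaches the validated output.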

In other words, accuracy emerges from collective validation rather than individual confidence.

This approach introduces something that traditional AI systems lack: transparent reliability.

Once the verification process is complete, results can be anchored on-chain. This creates a verifiable record showing how consensus around the information was reached. Instead of trusting a single company, model, or API, users can rely on a transparent network process.
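As a rough illustration of what "anchoring" can mean, the sketch below hashes a verification record into a fixed-size digest. The record layout and the `record_digest` helper are hypothetical, and I am not describing Mira's actual on-chain format. The general idea is that only a digest like this needs to be published, and anyone holding the full record can recompute it to check that nothing was altered.

```python
import hashlib
import json


def record_digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding, so key order does not change the digest."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical record: one claim and the votes it received.
record = {
    "claim": "Paris is the capital of France.",
    "votes": {"verifier_a": True, "verifier_b": True, "verifier_c": True},
}

# Same votes, but one of them flipped after the fact.
tampered = {
    "claim": "Paris is the capital of France.",
    "votes": {"verifier_a": True, "verifier_b": False, "verifier_c": True},
}

digest = record_digest(record)
print(digest)
print(record_digest(tampered) == digest)
```

Changing a single vote produces a different digest, which is what makes the published record tamper-evident.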

That difference becomes extremely important in areas where trust actually matters.

Financial models, research systems, autonomous agents, and machine-to-machine communication all require a higher level of reliability than simple conversational AI. A system that verifies information before it becomes actionable could become a critical layer in the next generation of AI infrastructure.

What makes Mira particularly interesting is that its design acknowledges a simple reality: hallucinations and bias are incredibly difficult problems to eliminate completely.

Rather than pretending AI will suddenly become perfect, Mira builds a system that checks AI outputs continuously.

The more I think about it, the more it feels like #Mira is trying to build the missing trust layer for artificial intelligence.

Models will keep improving. Performance will keep increasing. But the long-term success of AI may depend less on raw intelligence and more on whether we can verify and trust the information those systems produce.

And that is exactly where $MIRA could play an important role.