I was watching a request move through Mira’s verification network recently, and something subtle caught my attention.

The answer itself looked completely ordinary.

It was a short response. One statistic. A single sentence that wrapped around a small conditional clause at the end. Nothing controversial. The kind of statement that normally passes through most systems without anyone giving it a second thought.

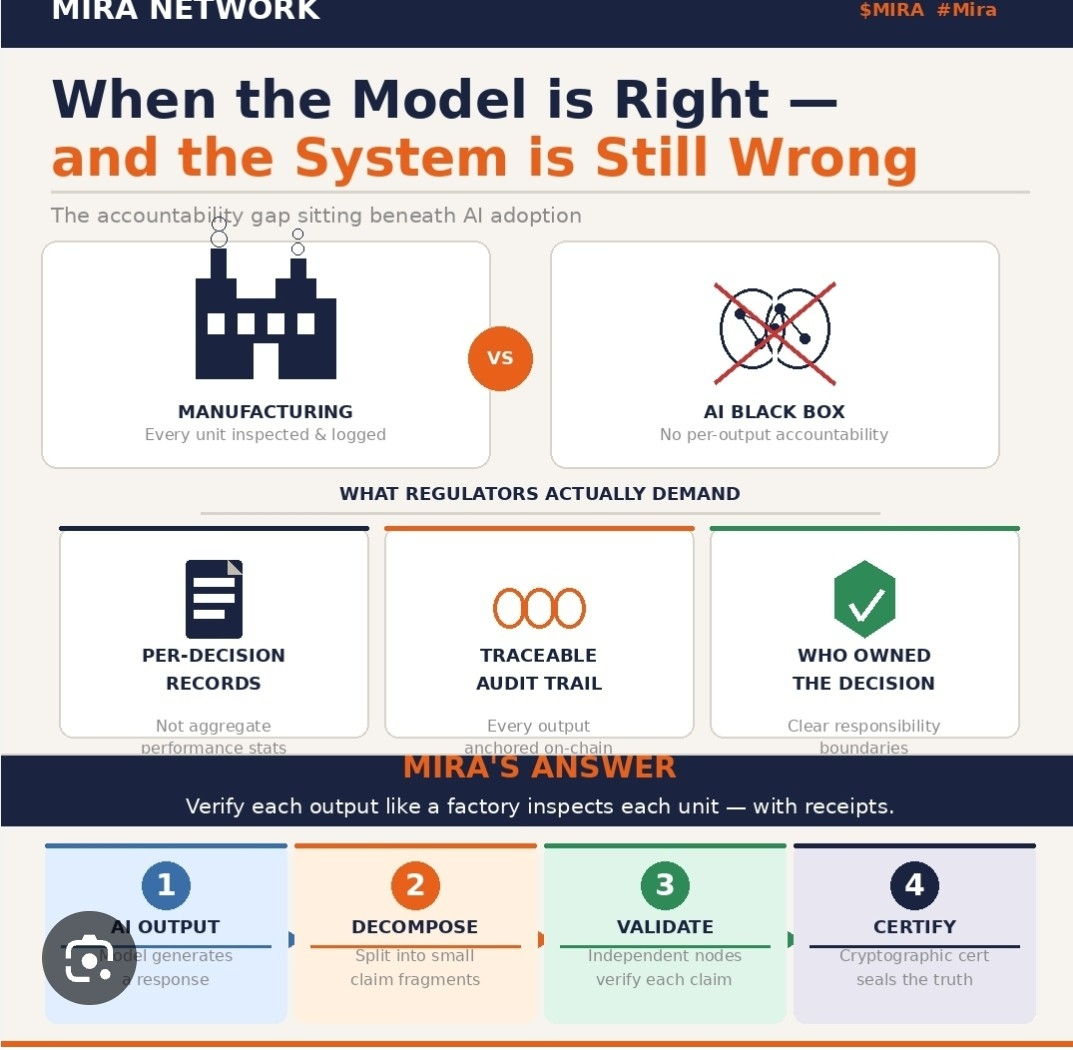

But Mira doesn’t evaluate answers the way typical AI systems do. It breaks responses down into verifiable fragments and routes those fragments through a decentralized network of validator models that search for evidence and attach verification weight.

So the sentence went through the usual first step: claim decomposition.

Three fragments were created.

This part was routine.

Each fragment received its own ID, evidence trace, and validator routing path. Independent validators began checking citations, cross-referencing sources, and building consensus around whether each fragment could be verified.
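To make that concrete, here is a minimal sketch of what a fragment record might look like. Everything in it is an assumption for illustration: the `Fragment` class, its field names, and the `decompose` helper are hypothetical and not Mira’s actual data model, and the real splitting step is not shown.

```python
from dataclasses import dataclass, field
from uuid import uuid4


@dataclass
class Fragment:
    """One independently verifiable piece of a response (hypothetical shape)."""
    text: str
    # Unique ID assigned when the fragment is created.
    fragment_id: str = field(default_factory=lambda: uuid4().hex)
    # Sources that validators attach while checking the fragment.
    evidence_trace: list[str] = field(default_factory=list)
    # Validators the fragment has been routed through so far.
    routing_path: list[str] = field(default_factory=list)


def decompose(claims: list[str]) -> list[Fragment]:
    """Wrap already-split claims as fragments (the splitting itself is assumed)."""
    return [Fragment(text=claim) for claim in claims]


# Placeholder texts standing in for the three fragments in the story.
fragments = decompose(["statistic", "main assertion", "conditional clause"])
for fragment in fragments:
    print(fragment.fragment_id, fragment.text)
```

Each fragment carries its own state, which is why one can close while another is still open.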

Fragment 1 closed quickly.

It referenced a clear and widely accepted statistic. Two validators attached evidence almost immediately. The verification certificate formed at minimal cost, and the reward distribution panel barely moved.

Fragment 2 took slightly longer.

The citation path wasn’t quite as direct, so validators ran an additional pass before quorum was reached. Still normal. The request’s token pool ticked down slightly as validator rewards were paid out.

Then Fragment 3 started behaving differently.

The fragment itself looked simple. It was just a conditional clause attached to the end of the sentence, a small piece of language that didn’t seem particularly important.

But the verification network treated it very differently.

Instead of pointing to a single evidence path, the clause opened two possible interpretations in the reference graph. Both were plausible. Both needed exploration.

And that’s where things got interesting.

Mira’s validator network doesn’t guess when evidence branches. It walks the branch.

Additional validators joined the verification process. The queue length increased. Each model pass explored another path through the citation graph.

The usage meter started climbing.

Generation of the answer had taken milliseconds. Verification was now taking seconds.

Fragment 1 already had its cryptographic certificate.

Fragment 2 followed shortly after.

Both fragments were finalized, signed, and exportable for downstream integrations that rely on Mira’s verified claims.

But Fragment 3 was still open.

Technically, the response was already “verified enough” for many systems that simply require partial verification before consuming outputs. At that point, stopping the search would have saved tokens.

But that’s not how the system works.

The network kept verifying.

Validators continued exploring both evidence branches until consensus weight crossed the quorum threshold. Another verification cycle completed. Validator rewards were again paid out of the request’s token pool.
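A rough way to picture that loop is a running total that keeps accepting validator passes until it crosses a threshold. This is only a sketch under stated assumptions: the `verify_fragment` function, the 0.67 quorum, the per-pass reward, and the weights are all made up for illustration and are not taken from Mira.

```python
def verify_fragment(votes, quorum=0.67, reward_per_pass=1.0):
    """Accumulate validator weight until quorum is crossed.

    votes: iterable of (validator_id, weight) in arrival order.
    The quorum value and the flat reward per pass are hypothetical.
    """
    consensus = 0.0
    cost = 0.0
    passes = 0
    for _validator_id, weight in votes:
        consensus += weight
        cost += reward_per_pass  # every pass is paid, even before quorum
        passes += 1
        if consensus >= quorum:
            return {"closed": True, "passes": passes, "cost": cost}
    # Ran out of votes before quorum: the fragment stays open.
    return {"closed": False, "passes": passes, "cost": cost}


# A clear fragment: two validators carry enough weight between them.
easy = verify_fragment([("v1", 0.5), ("v2", 0.5)])

# A branching fragment: each pass contributes less, so more passes are paid.
hard = verify_fragment([(f"v{i}", 0.125) for i in range(1, 9)])

print(easy)
print(hard)
```

The point of the sketch is that the loop has no notion of “good enough”. It only ends when the threshold is crossed or the votes run out.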

Finally, the fragment closed.

Consensus was reached. The certificate tuple for the full sentence was recomputed and finalized across all fragments.

Round complete.

When the verification ledger printed the final usage number, I paused for a moment.

The cost of verification was higher than the cost of generating the original answer.

Not slightly higher.

Several multiples higher.

From the network’s perspective, nothing unusual happened. Every component behaved exactly as designed.

Claims were decomposed.

Evidence paths were explored.

Validators reached consensus.

Certificates were issued.

The system did its job perfectly.

But the economic trace left an interesting signal.

The smallest fragment in the sentence, the part that looked the least important in plain language, ended up being the most expensive part of the verification process.

That’s the quiet reality of building a trust layer for AI.

Language compresses complexity. Proof expands it.

When statements are broken into verifiable pieces, the cost isn’t determined by how long a sentence is or how confident it sounds. It’s determined by how many plausible interpretations the validators have to explore before agreement forms.
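A toy cost model shows the shape of that relationship. The formula and every number below are assumptions chosen to mirror the story (one evidence path for the first two fragments, two for the third), not figures from the ledger.

```python
def verification_cost(branches, passes_per_branch, cost_per_pass=1.0):
    """Hypothetical model: cost grows with branches explored, not text length."""
    return branches * passes_per_branch * cost_per_pass


# Illustrative inputs only; the real pass counts were not recorded here.
fragments = {
    "fragment_1": {"branches": 1, "passes_per_branch": 2},
    "fragment_2": {"branches": 1, "passes_per_branch": 3},
    "fragment_3": {"branches": 2, "passes_per_branch": 4},
}

costs = {name: verification_cost(**params) for name, params in fragments.items()}
most_expensive = max(costs, key=costs.get)

print(costs)
print(most_expensive)
```

Nothing in the model looks at how many words a fragment contains. Only the branching shows up in the bill.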

Sometimes the biggest cost in a response isn’t the answer itself.

It’s the effort required to prove that one tiny clause inside it is actually true.

And in systems like Mira, that effort is exactly what the network is designed to measure.

Because verification doesn’t stop when a sentence looks simple.

It stops when the evidence finally agrees.