Most engineers can point to a small line in a runbook they once promised themselves they would delete. It usually appears during a stressful week, added quickly between deployments or incident calls. Something simple: cap attempts at three, wait two seconds before the next submit, maybe add a small backoff ladder. At the time it feels harmless, almost temporary—a practical patch to help the system behave during busy hours. But those small lines have a habit of staying. And the longer they stay, the more they reveal something subtle about the system beneath them.

Retries are supposed to be a kindness. Distributed systems are messy environments, and things occasionally slip through the cracks. A worker times out. A network call arrives late. A task misses its execution window. The retry mechanism exists so that small accidents do not derail the entire workflow. In a calm system, “try again” feels like resilience. It smooths out the randomness of networks and machines. Nobody questions it.

But systems rarely stay calm forever. Demand grows, queues deepen, and the meaning of a retry begins to change. It stops being a polite second chance and starts looking more like persistence. Each retry is another knock on the same door. Under pressure, the actors who knock more often, or faster, start getting through more reliably. The system never explicitly said they should, yet that is what happens.

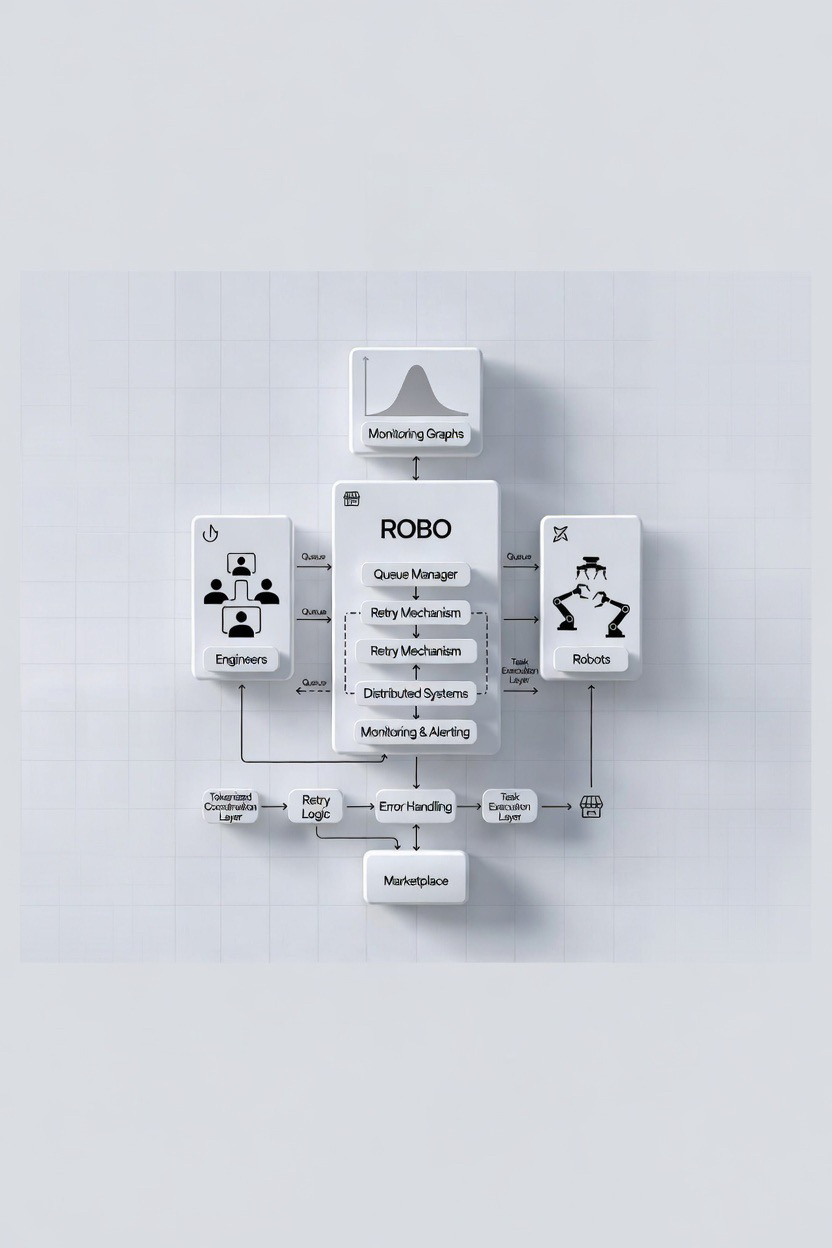

This shift is especially visible in environments that function as work surfaces rather than simple applications. On a work surface, every request represents actual work waiting to be admitted. It might be a job for a compute node, a task for an automated service, or, in newer systems like the ROBO ecosystem, a real-world robotic action scheduled through decentralized infrastructure. In those environments retries are not harmless repetitions. Each one consumes attention, capacity, and time. A retry is effectively another attempt to claim a slot inside a limited system.

At first this behavior feels invisible. Engineers see retries climbing slightly and assume the system is simply being resilient. But over time the pattern becomes harder to ignore. The queue begins filling with repeated attempts rather than unique tasks. Monitoring graphs start showing spikes that do not match actual demand. The system believes it is under enormous load, when in reality many of those requests are the same actors trying again and again.

Eventually the engineering response begins. Someone adds a small delay between attempts. Then someone introduces exponential backoff so retries slow down over time. Later a retry budget appears to cap how many attempts a request can make. None of these changes look dramatic. They resemble normal reliability practices, the sort of incremental improvements engineers apply as systems mature. Yet behind them sits a deeper problem: the system never learned how to say no clearly.
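For concreteness, the ladder described above often looks something like the following minimal sketch. The function names, the two-second base delay, and the budget of three retries are illustrative assumptions (borrowed from the runbook line in the opening), not any particular system's implementation.

```python
import time

def backoff_schedule(base_delay=2.0, factor=2.0, max_retries=3, max_delay=30.0):
    """Return the wait in seconds before each retry.

    The length of the list is the retry budget: once it is used up,
    the caller stops trying.
    """
    return [min(max_delay, base_delay * factor ** i) for i in range(max_retries)]

def submit_with_budget(submit, schedule, sleep=time.sleep):
    """Call submit() once, then retry according to the schedule.

    submit is any zero-argument callable returning True on success.
    Returns (succeeded, total_attempts).
    """
    attempts = 1
    if submit():
        return True, attempts
    for delay in schedule:
        sleep(delay)  # wait out this rung of the ladder
        attempts += 1
        if submit():
            return True, attempts
    return False, attempts
```

Notice that nothing in this sketch tells the caller why the submit failed. The ladder only shapes the timing of the knocking; it does not make the answer any clearer.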

When refusal is unclear, people—and machines—interpret it as negotiation. A rejection begins to feel temporary rather than final. If a request fails today, maybe it will succeed five seconds later. If it fails again, perhaps another attempt will land during a quieter moment. Gradually the culture around the system adapts. Participants learn that persistence might improve their chances.

In human systems that behavior already creates friction. In automated systems it becomes far more powerful. Machines do not hesitate to retry hundreds or thousands of times if doing so slightly increases success rates. In ecosystems where autonomous agents coordinate tasks and receive payments—something that networks like ROBO are beginning to explore through decentralized robotic infrastructure—that persistence can turn into a real competitive edge. The actor with the best automation can simply keep knocking on the door until it opens.

The result is something nobody intended: a quiet marketplace forming inside the system. Priority is no longer determined by clear rules but by persistence. The system appears open and neutral, yet the stable experience slowly concentrates among those who can retry most effectively. Engineers might not call it a market, but it behaves like one.

Once that dynamic appears, other symptoms follow. Monitoring jobs start watching tasks long after they complete because “success” does not always stay successful. Retry ladders grow longer as teams attempt to manage the noise. Capacity planning becomes confusing because the system cannot distinguish real demand from amplified demand created by repeated attempts. The infrastructure starts spending energy managing the side effects of retries rather than performing the work itself.

None of this feels catastrophic. In fact, it often looks like responsible engineering. Yet it also reveals a quiet truth: the protocol has stopped making firm decisions. Instead of clearly admitting or rejecting work, it has begun leaving the door slightly open for negotiation.

A healthier alternative begins with something surprisingly simple—making refusal stable. When the system rejects a task, that rejection should mean something definite. Not “maybe later” or “try again immediately,” but a clear state that participants understand. Stable refusal brings calm back to the system because it stops teaching people that persistence will eventually win.
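One way to picture stable refusal is an admission gate that remembers its own answer. The sketch below is hypothetical: `AdmissionGate`, `Decision`, the `at_capacity` reason, and the sixty-second window are all assumptions made for illustration. The point is only that a refused task gets the same refusal back until a stated time, no matter how often it knocks.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Decision:
    admitted: bool
    reason: str
    retry_not_before: Optional[float]  # set only on refusals

class AdmissionGate:
    def __init__(self, capacity, window_seconds=60.0):
        self.capacity = capacity
        self.window_seconds = window_seconds
        self.in_flight = set()
        self.refusals = {}  # task_id -> Decision still in force

    def submit(self, task_id, now):
        prior = self.refusals.get(task_id)
        if prior is not None and now < prior.retry_not_before:
            # Stable refusal: repeat the earlier answer without re-evaluating,
            # so retrying inside the window cannot improve the outcome.
            return prior
        if task_id in self.in_flight:
            return Decision(True, "admitted", None)
        if len(self.in_flight) >= self.capacity:
            decision = Decision(False, "at_capacity", now + self.window_seconds)
            self.refusals[task_id] = decision
            return decision
        self.refusals.pop(task_id, None)
        self.in_flight.add(task_id)
        return Decision(True, "admitted", None)

    def complete(self, task_id):
        self.in_flight.discard(task_id)
```

In this sketch a task refused at time 1.0 is still refused at time 5.0, even if a slot has opened in the meantime. That is a deliberate trade: some capacity may sit idle briefly, in exchange for a rejection that participants can plan around.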

Of course retries cannot disappear entirely. Networks will always be imperfect, and recovery mechanisms remain necessary. The question is not whether retries exist but how the system treats them. If retries remain completely free, they will eventually become signals of priority. If they carry some visible cost or limitation, their role shifts back toward recovery rather than competition.

This is where tokenized coordination layers, such as the ROBO ecosystem, offer an interesting design space. Because the network already relies on a token for governance, staking, and machine-to-machine payments, it also has a way to account for persistence. Retries could draw from limited credits or become progressively more expensive during periods of congestion. The goal would not be punishment but clarity: persistence becomes an explicit choice rather than an invisible strategy.
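As a rough illustration of what "progressively more expensive" could mean, here is a hypothetical pricing rule. It is not ROBO's actual mechanism: the doubling per retry, the congestion multiplier, and the `RetryCredits` class are all assumptions chosen to keep the arithmetic easy to follow.

```python
import math

def retry_cost(attempt, queue_depth, capacity, base_cost=1):
    """Credits charged for the Nth retry (attempt=1 is the first retry).

    The cost doubles with each retry and scales up when the queue is
    deeper than capacity. Below capacity the multiplier stays at 1.
    """
    congestion = max(1.0, queue_depth / capacity)
    return math.ceil(base_cost * 2 ** (attempt - 1) * congestion)

class RetryCredits:
    """A limited balance that retries draw from."""

    def __init__(self, balance):
        self.balance = balance

    def try_spend(self, cost):
        """Deduct cost and return True, or return False if it is unaffordable."""
        if cost > self.balance:
            return False
        self.balance -= cost
        return True
```

Under these assumptions, a first retry in a half-empty queue costs one credit, while a second retry in a queue three times over capacity costs six. Persistence is still possible, but it shows up on a ledger.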

Builders sometimes resist these ideas because stable refusal feels restrictive. Systems that once allowed endless retries suddenly appear less forgiving. Debugging requires more careful state management. Integrations must be designed with cleaner boundaries because “try again” cannot be the universal escape hatch anymore. Yet the payoff is significant. When refusal becomes stable, queues start reflecting real demand again. Retry storms fade away. Engineers no longer need entire layers of infrastructure dedicated to interpreting ambiguous outcomes.

For anyone watching systems like ROBO evolve, a few small signals reveal whether retries remain healthy or have already turned into a hidden marketplace. One signal appears in the number of retries per hundred tasks. If that number shrinks over time, the system is learning to refuse clearly. If it grows, persistence is quietly becoming priority. Another signal lies in engineering culture itself. When teams begin deleting retry ladders from runbooks, stability has arrived. When they keep adding more rungs, negotiation is still happening.
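The first of those signals is easy to compute if the submission log records a task identifier for each attempt. The sketch below assumes exactly that (a flat sequence of task ids, one entry per attempt), which is a simplification of whatever a real log would contain.

```python
from collections import Counter

def retries_per_hundred(attempts):
    """Retries per 100 unique tasks.

    attempts is an iterable of task ids, one entry per submission attempt.
    The first attempt for each task is not counted as a retry.
    """
    counts = Counter(attempts)
    tasks = len(counts)
    if tasks == 0:
        return 0.0
    retries = sum(counts.values()) - tasks
    return 100.0 * retries / tasks
```

Tracked week over week, the trend in this number matters more than any single reading.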

Eventually every system reaches a moment when this shift becomes obvious. It does not happen through a dramatic announcement. Instead the change appears quietly in daily operations. Watcher jobs disappear because task outcomes are predictable again. Retry graphs flatten. Engineers stop worrying about whether a completed task will be undone by another wave of attempts.

And the phrase “try again” loses its strategic meaning. It becomes what it was always meant to be—a simple recovery tool, not a tactic for gaining advantage.

That is when the system finally stops hosting a hidden market on top of its own work surface. And somewhere in a runbook, that small line engineers once promised themselves they would remove finally disappears.
