The moment that first made me doubt AI output was fairly mundane. I had asked a model to analyze a complex topic that required several steps of reasoning, and the answer looked polished, well organized, and confident. But when I checked a couple of the facts, parts of the argument simply fell apart. The wording was assertive; the reasoning underneath was weak. The experience stuck with me because it exposed a deeper tension that grows as AI systems become more capable: the more capable they are, the harder it is to verify their logic. That realization is what first got me interested in Mira and in how it approaches verifying complex output at scale.

In my view, the problem is not that AI makes mistakes; humans make them too. The problem is that AI can produce highly complex reasoning very quickly. A model can write a long analysis, a simulation of an idea, or a multi-layered interpretation of data in seconds. Yet confirming that such output is correct may require another expert or another system to redo the same work. In other words, complexity grows faster than our ability to check it. Seen against that imbalance, Mira's design starts to make sense.

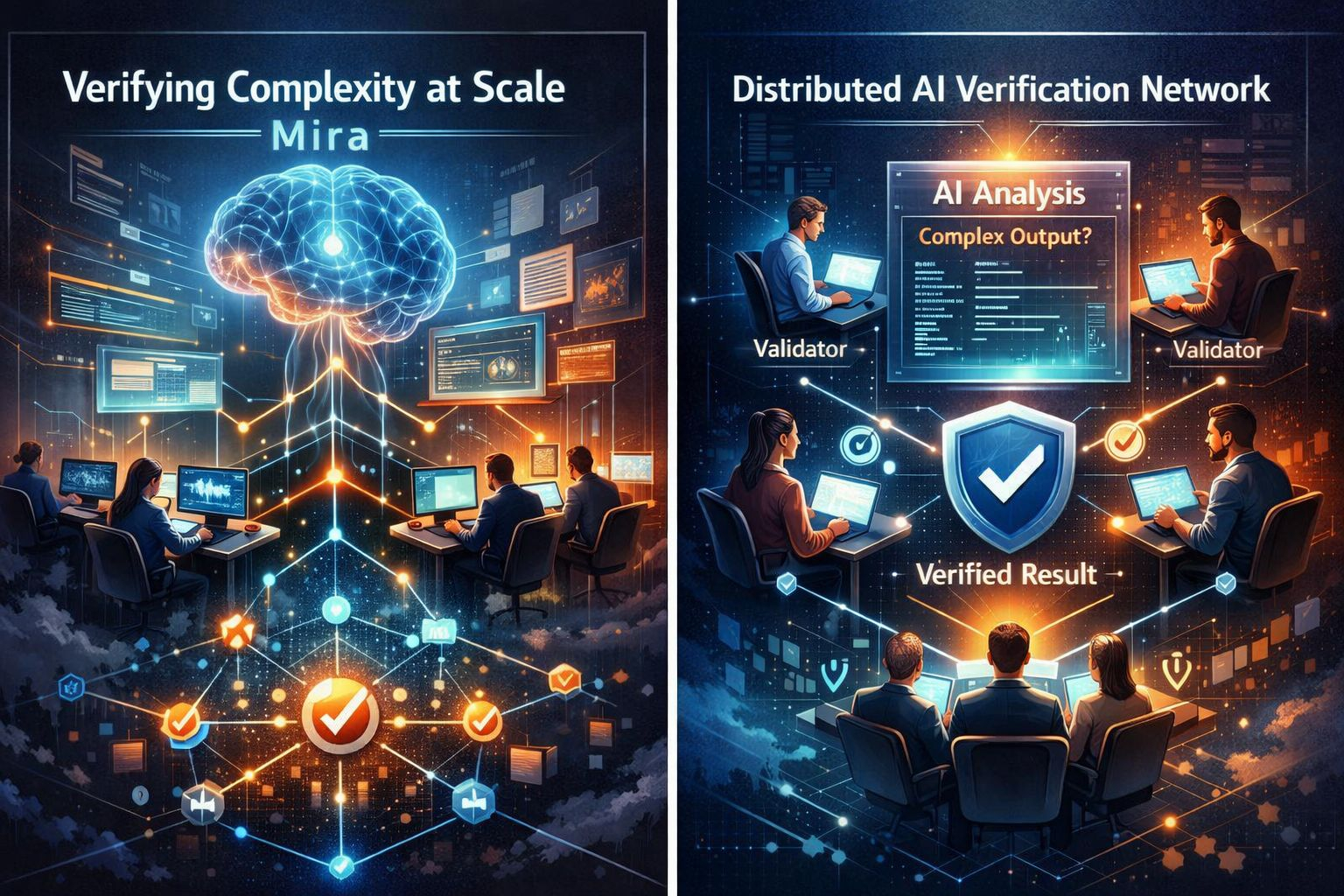

When I first came across Mira, it did not feel like a typical crypto project. It felt more like an infrastructure layer quietly developing underneath AI systems. Rather than assuming that the answer from any single model can be trusted, Mira tries to build a verification network in which the results of complex AI computations are checked by many independent validators at the same time. The concept is simple, but its implications are strong: when several independent validators examine the same output and reach agreement, the result becomes much more reliable.

I tend to picture this as a team of auditors reviewing a complex report. One person may miss a mistake, but a decentralized group, with each member scrutinizing different parts of the argument, can collectively expose the flaws or confirm what holds up. In effect, Mira turns verification into a shared responsibility rather than a single point of control. For me, that shift in thinking is one of the most interesting parts of the project.

Looking more closely at how the system works, its structure resembles a layered map. At the top, a user or application submits tasks, such as AI-generated analysis, reasoning, or data interpretation. Beneath that layer, AI models generate outputs. Mira then adds another layer below the model itself: a decentralized system of checks and balances in which validators assess whether the reasoning actually holds up.

This approach changes how trust is formed. Normally, people trust the expertise of the model that produced the answer. With Mira, trust comes from the consensus of multiple validators who check the computation. When enough participants independently confirm that the logic and results hold, the output earns credibility. This is not blind trust; it is earned through distributed consensus.
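To make the consensus idea concrete for myself, I sketched a toy version in Python. This is not Mira's actual protocol: the `Verdict` type, the `reach_consensus` function, and the two-thirds supermajority threshold are my own illustrative assumptions. It only shows the basic shape of "enough independent validators agree, so the output is accepted; otherwise, no verdict."

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Verdict:
    """One validator's judgment on a single AI output (hypothetical type)."""
    validator_id: str
    valid: bool


def reach_consensus(verdicts: List[Verdict], threshold: float = 2 / 3) -> Optional[bool]:
    """Return the agreed verdict if a supermajority reaches it, else None.

    The threshold is an illustrative assumption, not a documented Mira parameter.
    """
    if not 0.5 < threshold <= 1:
        # A threshold at or below one half could let both outcomes "win".
        raise ValueError("threshold must be in (0.5, 1]")
    if not verdicts:
        return None  # no votes, no consensus
    counts = Counter(v.valid for v in verdicts)
    total = len(verdicts)
    for outcome in (True, False):
        if counts[outcome] / total >= threshold:
            return outcome
    return None  # validators disagree too much to certify the output


votes = [Verdict("v1", True), Verdict("v2", True), Verdict("v3", True),
         Verdict("v4", True), Verdict("v5", False)]
print(reach_consensus(votes))  # True: 4 of 5 validators agree
```

Returning `None` instead of forcing a yes or no is a deliberate choice in this sketch: a split vote is itself a useful signal that the output should not be trusted yet.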

In my own work with AI tools, this idea becomes even more relevant. I have noticed that the more sophisticated a model is, the harder it is to challenge. The explanations are long, the reasoning is intricate, and the conclusions often sound convincing even when they are wrong. In that environment, a verification layer that is independent of the model itself seems like a necessary safeguard rather than a luxury.

Of course, the approach has its challenges. One question that comes to mind is incentives: why would participants spend time validating AI outputs? Mira addresses this with network incentives that reward validators for accurate verification. The design resembles other decentralized systems in which participants are rewarded for keeping the network secure. However, verifying reasoning is more delicate than verifying transactions, so getting these incentives right will be critical.

Another tradeoff is speed. Distributed verification inherently adds an extra step, and that step can slow down processes that users expect to happen instantly. Mira tries to address this by splitting verification work among many participants so that checks can run concurrently. In theory, parallel verification could keep the system efficient even as complexity grows. Still, whether that balance holds up under real-world demand remains an open question.
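To picture what splitting verification work across participants might look like, here is another toy Python sketch, again my own assumption rather than Mira's implementation. It assumes an AI output has already been broken into separate claims, and it checks each claim with several validators concurrently using the standard library's `ThreadPoolExecutor`. The `verify_claims` function, the `Validator` type, the toy validators, and the two-thirds acceptance threshold are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# A validator is modeled as a function that accepts or rejects one claim.
# This signature is a hypothetical stand-in, not Mira's real interface.
Validator = Callable[[str], bool]


def verify_claims(claims: List[str], validators: List[Validator],
                  threshold: float = 2 / 3, max_workers: int = 8) -> List[bool]:
    """Check every (claim, validator) pair concurrently.

    A claim passes if at least `threshold` of the validators accept it.
    Returns one bool per claim, in the same order as `claims`.
    """
    if not validators:
        raise ValueError("need at least one validator")
    # Fan out: one independent check per (claim index, validator) pair.
    jobs = [(i, check) for i in range(len(claims)) for check in validators]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in submission order, so they line up with `jobs`.
        results = list(pool.map(lambda job: job[1](claims[job[0]]), jobs))
    # Fan in: count acceptances per claim.
    accepted = [0] * len(claims)
    for (i, _), ok in zip(jobs, results):
        if ok:
            accepted[i] += 1
    return [count / len(validators) >= threshold for count in accepted]


KNOWN_FACTS = {"2 + 2 = 4", "water boils at 100 C at sea level"}


def careful(claim: str) -> bool:
    """Toy validator: accepts only claims found in a small fact list."""
    return claim in KNOWN_FACTS


def careless(claim: str) -> bool:
    """Toy validator: approves everything, standing in for a lazy participant."""
    return True


result = verify_claims(["2 + 2 = 4", "the moon is made of cheese"],
                       [careful, careful, careless])
print(result)  # [True, False]
```

Threads fit this shape because, in a real network, each check would mostly be waiting on a remote validator. The toy validators here return instantly, so the sketch only demonstrates the fan-out and fan-in structure, not an actual speedup. It also hints at why redundancy matters: the careless validator alone cannot push the false claim past the threshold.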

What I find most interesting is how Mira fits into the broader direction of AI and crypto infrastructure. Blockchain networks tackled the problem of validating financial transactions without central authorities. As AI becomes more deeply embedded in decision-making, the next problem may be verifying information and reasoning. Mira appears to be positioning itself as part of that new foundation.

In my view, the value of such a system may show up less dramatically than one might expect. Most people using AI simply want answers they can rely on. If Mira's verification network works as advertised, its impact may be largely invisible: AI output would be more dependable and less prone to hallucination, because the reasoning would have been checked from several perspectives before reaching the user.

Still, one question stays with me: can verification networks scale as fast as AI complexity is growing? Models are changing rapidly, and their chains of reasoning are getting longer and more complex. If Mira's architecture can grow at the same pace, it could become a core layer of reliable AI systems. If not, new solutions may be needed.

For now, my impression after looking into Mira is skeptical but intrigued. The project is not trying to make AI smarter or more creative. Instead, it focuses on something quieter but no less important: making sure that the outputs of complex AI can be trusted at all. In a world where machines produce increasingly sophisticated answers, building systems that test those answers may become one of the biggest challenges ahead. Whether Mira ultimately becomes that foundation remains to be seen, but the problem it addresses is very real and is not going away soon.

$MIRA @Mira - Trust Layer of AI #Mira