Noticing Something Odd While Reading CreatorPad Threads

Last night I was scrolling through a few CreatorPad campaign posts on Binance Square, mostly checking what people were writing about Fabric Protocol. At first the discussions looked similar to most campaign chatter — a mix of speculation, task completion tips, and a few “hidden gem” takes.

But one comment caught my attention.

Someone mentioned that Fabric’s ROBO systems weren’t just bots executing tasks — they were managing entire pipelines of autonomous actions. That phrasing stuck with me. Pipelines.

Most crypto automation tools I’ve used are basically scripts. They do one thing repeatedly: swap tokens, rebalance liquidity, claim rewards. But pipelines imply something more structured — sequences of tasks that react to conditions.

So I opened the documentation and tried to understand how Fabric actually structures these systems.

The Idea Behind ROBO Task Pipelines

From what I could piece together, Fabric’s architecture revolves around ROBO agents — autonomous modules that execute predefined operations across a network.

But unlike simple bots, these agents operate inside task pipelines.

Think of it like a workflow system rather than a single command.

A typical pipeline might look something like:

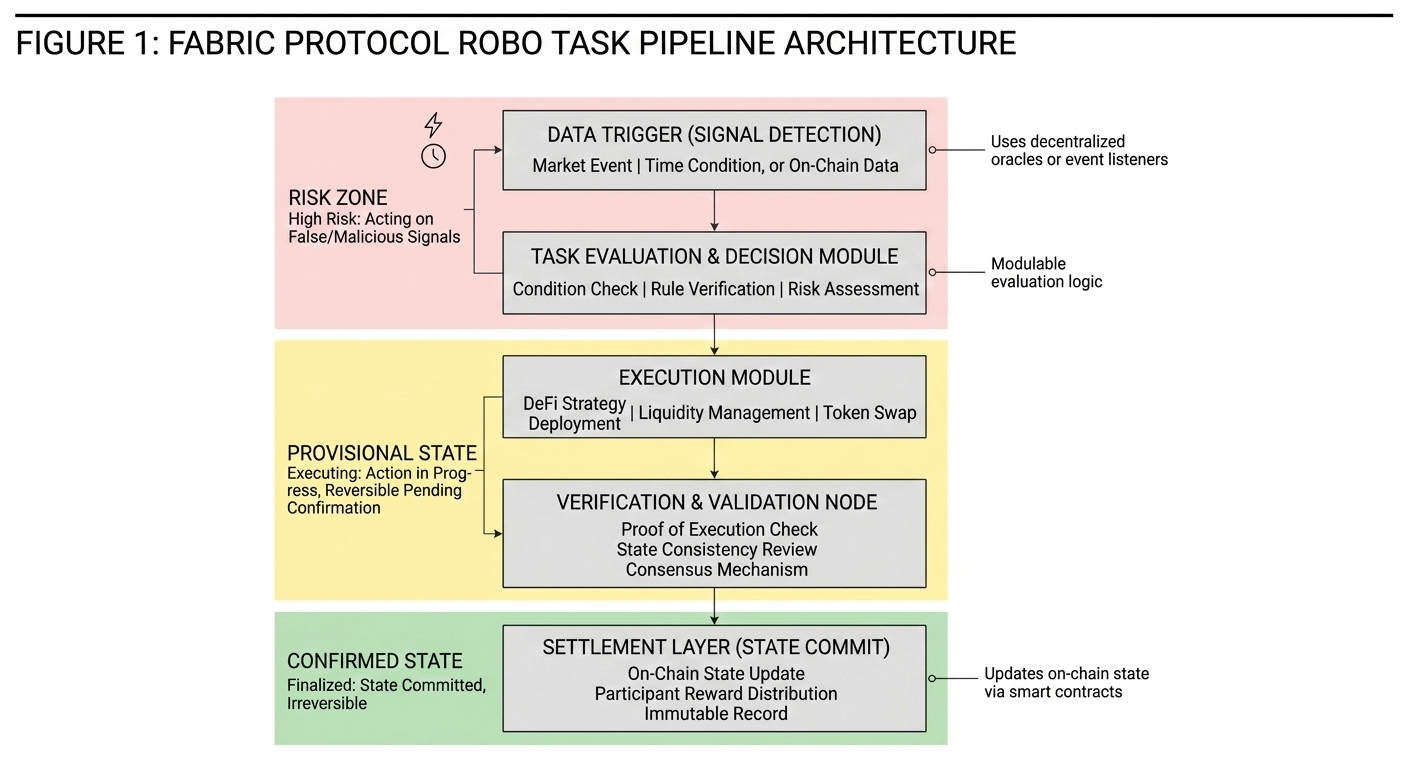

Data Trigger → Task Evaluation → Execution Module → Verification → Settlement

Each stage is handled by a different component in the Fabric network.

Instead of one script doing everything, the process becomes modular. One agent detects signals. Another decides whether the task should proceed. Another performs the action. And finally the system verifies results before committing state changes.
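
To make the stages concrete for myself, I wrote a minimal Python sketch of the flow above. None of these names come from Fabric's documentation: `TaskContext`, the five stage functions, and the 0.5 cutoff are all placeholders I made up, and a real network would run each stage on a separate component rather than in one process.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence


@dataclass
class TaskContext:
    """State handed from one stage to the next (hypothetical structure)."""
    signal_strength: float
    approved: bool = False
    executed: bool = False
    verified: bool = False
    settled: bool = False
    trace: List[str] = field(default_factory=list)


Stage = Callable[[TaskContext], TaskContext]


def data_trigger(ctx: TaskContext) -> TaskContext:
    # Stage 1: a signal has arrived; just record it.
    ctx.trace.append("data_trigger")
    return ctx


def task_evaluation(ctx: TaskContext) -> TaskContext:
    # Stage 2: decide whether the task should proceed (0.5 is an assumed cutoff).
    ctx.approved = ctx.signal_strength >= 0.5
    ctx.trace.append("task_evaluation")
    return ctx


def execution_module(ctx: TaskContext) -> TaskContext:
    # Stage 3: act only if the evaluation stage approved.
    ctx.executed = ctx.approved
    ctx.trace.append("execution_module")
    return ctx


def verification(ctx: TaskContext) -> TaskContext:
    # Stage 4: confirm that an action actually happened.
    ctx.verified = ctx.executed
    ctx.trace.append("verification")
    return ctx


def settlement(ctx: TaskContext) -> TaskContext:
    # Stage 5: commit state only for verified work.
    ctx.settled = ctx.verified
    ctx.trace.append("settlement")
    return ctx


PIPELINE: Sequence[Stage] = (
    data_trigger, task_evaluation, execution_module, verification, settlement,
)


def run_pipeline(ctx: TaskContext, stages: Sequence[Stage]) -> TaskContext:
    # Each stage receives the output of the previous one, in order.
    for stage in stages:
        ctx = stage(ctx)
    return ctx


strong = run_pipeline(TaskContext(signal_strength=0.8), PIPELINE)
weak = run_pipeline(TaskContext(signal_strength=0.2), PIPELINE)
```

In this toy version a weak signal still walks through every stage, but nothing is executed or settled because each stage checks the flag set by the one before it.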

When I drew a small workflow diagram to make sense of it, the design looked surprisingly similar to distributed computing frameworks used outside crypto.

Why This Architecture Is Actually Interesting

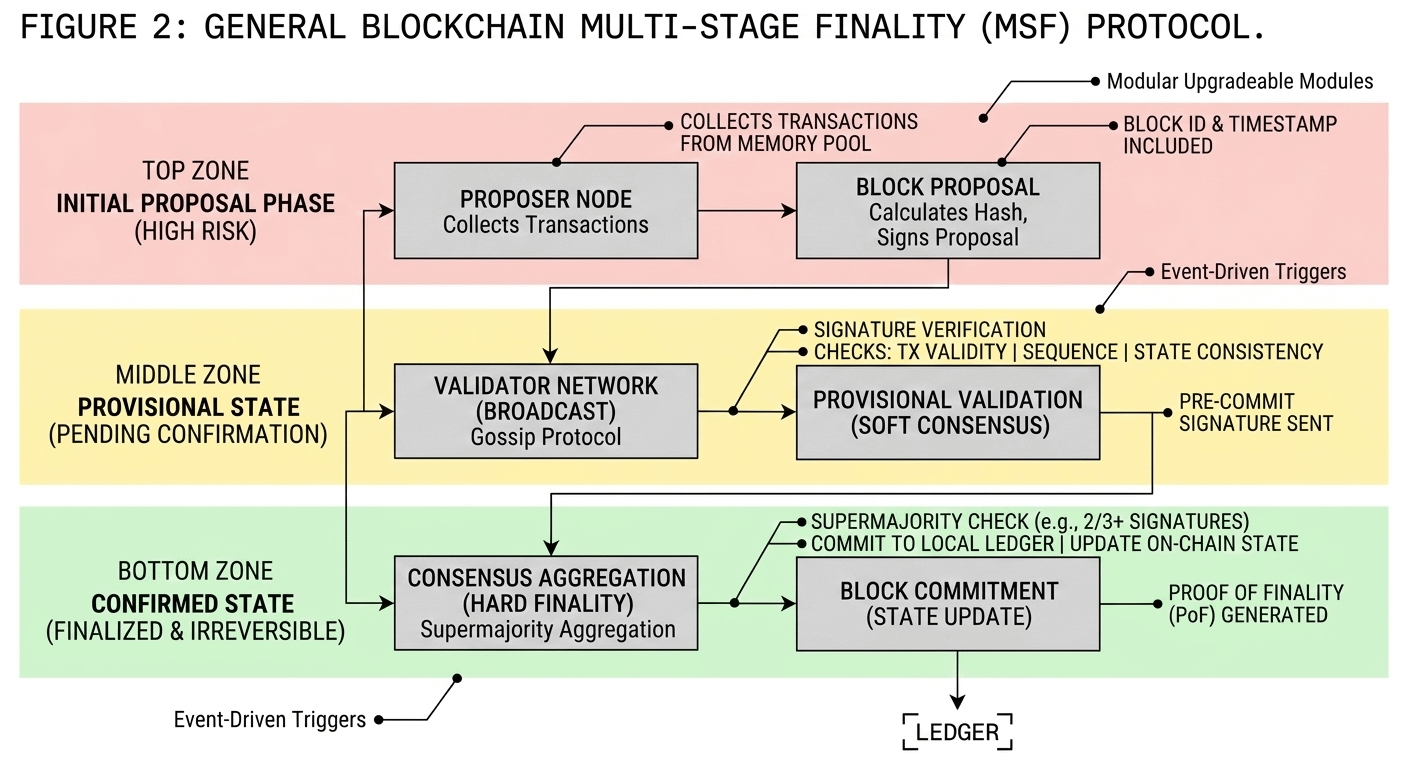

The pipeline design solves a problem that shows up everywhere in decentralized automation: coordination reliability.

Many automated DeFi strategies fail when a single part of the system breaks: a price oracle glitches, gas spikes, or liquidity shifts.

Fabric’s approach separates responsibilities across modules.

Three design details stood out to me:

1. Event-driven triggers

ROBO agents don’t constantly execute tasks blindly. They react to triggers — data signals, time-based conditions, or system events.

That’s much closer to how production systems operate.

2. Verifiable execution stages

Each step in the pipeline can be validated before the next one starts. The goal is to stop cascading failures, where one bad action propagates through the rest of the system.

3. Modular upgrades

Because the pipeline stages are separated, individual modules can evolve without redesigning the entire automation framework.

This feels more like infrastructure than a simple automation tool.
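
The three details above can be sketched together in a few lines of Python. This is my own illustration, not Fabric's API: `EventBus`, `run_gated`, `StageFailed`, and the `execute_v1` / `execute_v2` functions are hypothetical names, and the 0.05 price-move cutoff is an arbitrary assumption.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List, Tuple


class EventBus:
    """Tiny in-memory publish/subscribe hub (hypothetical, not a Fabric API)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[dict], Any]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], Any]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> List[Any]:
        # Handlers run only when a matching event arrives: no polling loop.
        return [handler(payload) for handler in self._handlers[event_type]]


class StageFailed(Exception):
    """Raised when a stage's output does not pass its validator."""


# Each stage is an (action, validator) pair.
StageSpec = Tuple[Callable[[dict], dict], Callable[[dict], bool]]


def run_gated(payload: dict, stages: Dict[str, StageSpec]) -> dict:
    # The next stage starts only if the previous output validates.
    for name, (action, validator) in stages.items():
        payload = action(payload)
        if not validator(payload):
            raise StageFailed(name)
    return payload


def evaluate(p: dict) -> dict:
    return {**p, "approved": p["price_move"] > 0.05}  # assumed cutoff


def execute_v1(p: dict) -> dict:
    return {**p, "filled": 1.0 if p["approved"] else 0.0}


def execute_v2(p: dict) -> dict:
    # A replacement module with different behavior and a version tag.
    return {**p, "filled": 0.5 if p["approved"] else 0.0, "version": 2}


stages: Dict[str, StageSpec] = {
    "evaluate": (evaluate, lambda p: "approved" in p),
    "execute": (execute_v1, lambda p: p["filled"] >= 0.0),
}

bus = EventBus()
bus.subscribe("price_update", lambda payload: run_gated(payload, stages))

results_v1 = bus.publish("price_update", {"price_move": 0.08})

# Modular upgrade: swap one stage, leave the rest of the pipeline untouched.
stages["execute"] = (execute_v2, lambda p: p["filled"] >= 0.0)
results_v2 = bus.publish("price_update", {"price_move": 0.08})
```

The point of the last three lines is that the subscriber and the `evaluate` stage never change; only the `execute` entry is replaced, which is roughly what I understand "modular upgrades" to mean.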

A Practical Scenario I Kept Thinking About

While reading through this architecture, I kept imagining how it could work in real market conditions.

Say an AI model predicts a volatility spike in a certain asset.

Instead of one bot reacting instantly, Fabric’s pipeline might process it like this:

1. Signal agent detects market anomaly

2. Evaluation module confirms threshold conditions

3. Execution agent deploys hedging strategy

4. Verification node confirms execution integrity

5. Settlement layer updates state and rewards participants

The pipeline approach ensures that each decision is processed before the next action begins.

In volatile markets, that sequencing could prevent a lot of automated chaos.
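
Here is how I would sketch that scenario in Python, with heavy assumptions. The "AI model" is replaced by a plain realized-volatility calculation, the 2% threshold and 50% hedge ratio are numbers I picked for illustration, and every function name is hypothetical rather than taken from Fabric.

```python
from statistics import pstdev
from typing import List, Optional

VOL_THRESHOLD = 0.02  # assumed: act when return volatility exceeds 2%
HEDGE_RATIO = 0.5     # assumed: hedge half of the exposure


def detect_signal(prices: List[float]) -> float:
    """Signal agent: population std dev of simple returns (needs >= 2 prices)."""
    returns = [(b - a) / a for a, b in zip(prices, prices[1:])]
    return pstdev(returns)


def evaluate(volatility: float) -> bool:
    """Evaluation module: confirm the threshold condition."""
    return volatility > VOL_THRESHOLD


def execute_hedge(exposure: float) -> float:
    """Execution agent: open an offsetting position (negative = short)."""
    return -exposure * HEDGE_RATIO


def verify(exposure: float, hedge: float) -> bool:
    """Verification node: net exposure must match the intended reduction."""
    expected_net = exposure * (1 - HEDGE_RATIO)
    return abs((exposure + hedge) - expected_net) < 1e-9


def run_scenario(prices: List[float], exposure: float) -> Optional[dict]:
    volatility = detect_signal(prices)
    if not evaluate(volatility):
        return None  # stop before execution: nothing to hedge
    hedge = execute_hedge(exposure)
    if not verify(exposure, hedge):
        raise RuntimeError("hedge failed verification; nothing is settled")
    # Settlement: only verified results become the recorded state.
    return {"volatility": volatility, "hedge": hedge, "net_exposure": exposure + hedge}


calm = run_scenario([100.0, 100.1, 100.0, 100.1], exposure=100.0)
stressed = run_scenario([100.0, 105.0, 98.0, 104.0], exposure=100.0)
```

With the calm price series the pipeline stops at the evaluation step and returns `None`; with the stressed series it runs through to settlement.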

What the CreatorPad Campaign Helped Reveal

One thing I’ve noticed while browsing CreatorPad content is that different participants focus on different parts of the system.

Some are analyzing token incentives.

Others are experimenting with automation scripts.

But a few posts have started sharing system architecture diagrams explaining ROBO workflows, which helped me understand the pipeline structure much faster than reading documentation alone.

That’s actually one underrated aspect of these campaigns — the community ends up reverse-engineering protocol design in public.

Without those diagrams, the mechanism would probably look abstract to most people.

A Question That Still Bugs Me

Even though the design looks elegant, one question keeps coming up.

Autonomous pipelines are powerful — but they also increase system complexity.

More modules mean more coordination overhead.

The real challenge will be keeping pipeline latency low enough for time-sensitive operations, especially in DeFi environments where execution timing can decide whether a strategy is profitable.

If verification stages slow execution too much, certain strategies might become impractical.
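
A rough way to reason about this is a latency budget: add up the time each stage takes plus one network hop between adjacent stages, then compare the total with how long the strategy can afford to wait. The numbers below are invented for illustration and are not measurements of Fabric; `pipeline_latency_ms` and `fits_budget` are hypothetical helpers.

```python
from typing import Dict


def pipeline_latency_ms(stage_latency_ms: Dict[str, float], hop_ms: float) -> float:
    """Total latency = sum of stage times + one hop between each adjacent pair."""
    hops = max(len(stage_latency_ms) - 1, 0)
    return sum(stage_latency_ms.values()) + hops * hop_ms


def fits_budget(stage_latency_ms: Dict[str, float], hop_ms: float, budget_ms: float) -> bool:
    """True if the whole pipeline finishes within the strategy's time budget."""
    return pipeline_latency_ms(stage_latency_ms, hop_ms) <= budget_ms


# Made-up per-stage timings in milliseconds.
example_stages = {
    "trigger": 5.0,
    "evaluation": 20.0,
    "execution": 150.0,
    "verification": 300.0,
    "settlement": 400.0,
}

# 875 ms of stage time + 4 hops * 25 ms of network time.
total_ms = pipeline_latency_ms(example_stages, hop_ms=25.0)

ok_for_slow_strategy = fits_budget(example_stages, hop_ms=25.0, budget_ms=1000.0)
ok_for_fast_strategy = fits_budget(example_stages, hop_ms=25.0, budget_ms=500.0)
```

Under these invented numbers, a strategy that can wait a second is fine, while one that needs a result in half a second is not, and verification plus settlement account for most of the gap.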

So Fabric’s long-term success probably depends on how efficiently these modules communicate across the network.

Why This Design Might Matter Long Term

After digging through the architecture, I stopped thinking of Fabric as just another automation protocol.

It looks more like a framework for coordinating autonomous agents.

And that distinction matters.

As AI systems start interacting directly with blockchains — executing trades, managing liquidity, coordinating machine resources — simple bots won’t be enough.

You need structured pipelines where decisions, execution, and verification happen in stages.

Fabric’s ROBO architecture is basically experimenting with that idea.

Whether it becomes widely adopted or not, the concept itself feels like a glimpse of where decentralized automation is heading.

And honestly, that’s not the narrative I expected when I first opened those CreatorPad posts.

$ROBO #ROBO @Fabric Foundation
