The AI narrative of 2025 is dominated by a dangerous obsession: the pursuit of scale at the expense of certainty. Developers and investors alike are fixated on more parameters, larger context windows, and faster inference. But for those of us operating in the high-stakes world of decentralized finance and automated governance, speed is a liability when the output is merely a "prediction". This is the origin of the "Hallucination Tax": the financial and operational risk incurred when unverified AI makes decisions without human oversight. A prediction that only sounds certain is most dangerous exactly where it is used to analyze contracts or execute trades.

Mira Network is not just another project building on top of the AI hype; it is building the infrastructure that makes that hype safe for capital. The fundamental problem is that current AI models are "monolithic". When a model generates a trade strategy or a contract analysis, the user is presented with a take-it-or-leave-it output. If the model "hallucinates" a fact or miscalculates a risk, there is no internal signal to warn the user. The system looks clean, confident, and perfectly structured, yet it can be fundamentally wrong. This gap between confidence and correctness is where institutional trust goes to die.

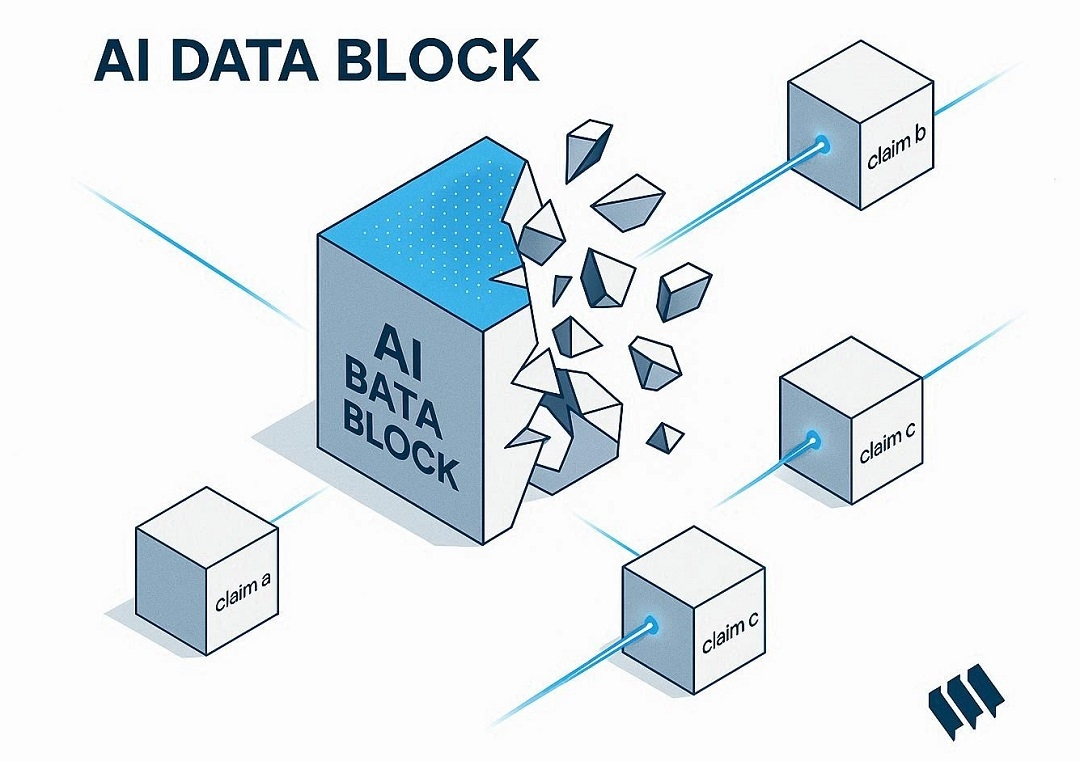

Mira’s solution is as elegant as it is rigorous: don't just accept; verify. Instead of treating AI output as a single block, the protocol decomposes it into discrete, independently verifiable claims. These fragments are then fanned out across a decentralized mesh of independent validator nodes. These aren't just redundant models; they are diverse entities—ranging from pure AI models to hybrid human-AI participants—each operating without knowing what others are evaluating.
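To make the decompose-and-verify idea concrete, here is a minimal sketch in Python. It is not Mira's actual pipeline: the sentence-based splitter, the `Validator` type, and the two-thirds threshold are all assumptions chosen for illustration. Each validator sees one claim at a time and never sees the other validators' votes.

```python
from typing import Callable, Dict, List

# Hypothetical validator: any function that judges a single claim in isolation.
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive decomposition (an assumption): treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_claim(claim: str, validators: List[Validator],
                 threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of validators approve it."""
    if not validators:
        raise ValueError("at least one validator is required")
    # Each validator is called independently; none receives another's vote.
    votes = [validator(claim) for validator in validators]
    return sum(votes) / len(votes) >= threshold


def verify_output(output: str, validators: List[Validator],
                  threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map every extracted claim to its consensus verdict."""
    return {
        claim: verify_claim(claim, validators, threshold)
        for claim in split_into_claims(output)
    }


# Toy validators standing in for diverse models or human-AI participants.
def strict(claim: str) -> bool:
    return "guaranteed" not in claim.lower()


def lenient(claim: str) -> bool:
    return True


report = verify_output(
    "ETH is a digital asset. Returns are guaranteed.",
    [strict, strict, lenient],
)
```

The point of the per-claim verdict is that one bad sentence no longer hides inside an otherwise confident answer: the caller can see which claim failed consensus and act on that alone.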

The engine driving this verification isn't just code; it's economic incentives. Mira utilizes a hybrid mechanism of Proof-of-Work and Proof-of-Stake to ensure that every claim is defended by capital. The $MIRA token, with its fixed supply of one billion tokens primarily on the Base and BNB chains, serves as the collateral for this truth. Validators must stake $MIRA to participate. If they contribute to a truthful consensus, they are rewarded; if they attempt to validate a hallucination or behave maliciously, they face economic slashing. This transforms trust from a vague assumption into a quantifiable consequence.
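The stake-and-slash logic can be sketched in a few lines. This is an illustration under stated assumptions, not the protocol's real settlement rules: the `ValidatorAccount` type, the flat reward, the 10% slash rate, and the use of a stake-weighted majority as a stand-in for "truth" are all hypothetical, and the Proof-of-Work side of the hybrid is left out entirely.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # staked $MIRA in whole illustrative units


def settle_round(accounts: List[ValidatorAccount], votes: Dict[str, bool],
                 reward: int = 1, slash_bps: int = 1000) -> bool:
    """Settle one verification round and return the consensus verdict.

    Assumptions: consensus is the stake-weighted majority (ties count as
    approval), agreeing validators earn a flat reward, and dissenting
    validators lose `slash_bps` basis points of their stake.
    """
    stake_for = sum(a.stake for a in accounts if votes[a.name])
    stake_against = sum(a.stake for a in accounts if not votes[a.name])
    consensus = stake_for >= stake_against

    for account in accounts:
        if votes[account.name] == consensus:
            account.stake += reward
        else:
            # Integer basis-point math avoids floating-point rounding drift.
            account.stake -= account.stake * slash_bps // 10_000
    return consensus


accounts = [
    ValidatorAccount("alice", 100),
    ValidatorAccount("bob", 100),
    ValidatorAccount("carol", 50),
]
verdict = settle_round(accounts, {"alice": True, "bob": True, "carol": False})
```

In this toy round, the two validators who sided with consensus each gain one unit while the dissenter loses a tenth of her stake. That asymmetry is the whole argument: being wrong has a price.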

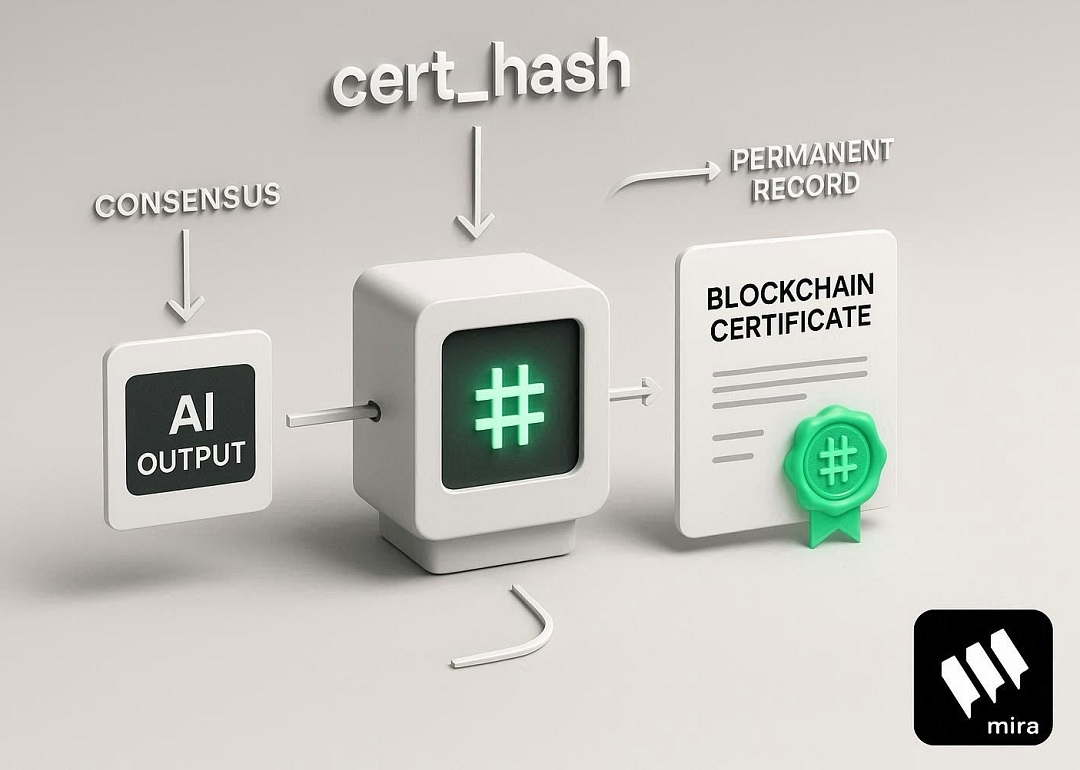

For a developer, the ultimate product of Mira is the cert_hash. This cryptographic certificate anchors a specific output to a specific consensus round on the blockchain. It is the auditable record that regulators, auditors, and DAOs can trace back to see exactly how a decision was defended. However, a critical integration challenge exists: the tension between latency and integrity. Many developers mistakenly prioritize the "200 OK" API response over the final verification certificate. But in a financial system, a "verified" badge that triggers before the cert_hash is returned is nothing more than a latency badge.
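In integration terms, the rule is simple: gate the badge on the certificate, not on the HTTP status. The sketch below assumes a hypothetical response shape (a dict with `status` and `cert_hash` keys) and a caller-supplied `poll` function; neither is taken from Mira's published API.

```python
import time
from typing import Callable, Optional


def is_verified(response: dict) -> bool:
    """True only when a non-empty cert_hash is present.

    A 200 status alone says the request was accepted, not that
    consensus has finished. The field names here are assumptions.
    """
    cert = response.get("cert_hash")
    return isinstance(cert, str) and len(cert) > 0


def wait_for_certificate(poll: Callable[[], dict], attempts: int = 5,
                         delay_s: float = 0.0) -> Optional[str]:
    """Poll until a certificate appears; return None if it never does."""
    for _ in range(attempts):
        response = poll()
        if is_verified(response):
            return response["cert_hash"]
        time.sleep(delay_s)
    return None


# Simulated responses: the API answers 200 twice before consensus completes.
_responses = iter([
    {"status": 200},
    {"status": 200, "cert_hash": None},
    {"status": 200, "cert_hash": "0xabc"},
])
cert = wait_for_certificate(lambda: next(_responses))
show_verified_badge = cert is not None
```

If `wait_for_certificate` returns `None`, the honest UI state is "pending" or "unverified". Anything else is the latency badge described above.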

The market has already signaled the importance of this layer. With a $9 million seed round from heavyweights like Framework Ventures and Bitkraft Ventures, and its selection for the Binance HODLer Airdrop in September 2025, Mira is rapidly becoming the "Trust Layer" of AI. Furthermore, a $10 million developer grant program shows the team is building an ecosystem, not just a product. Whether it’s powering Klok, Mira's verified AI chatbot, or managing complex on-chain DeFi strategies, the goal remains the same: moving AI agents from tools we supervise to systems we can provably trust. The era of blind faith in AI is over; the era of verified autonomy has begun.
