When we talk about scientific research, most people imagine a slow and careful process. Researchers read papers, compare results, test ideas, and gradually build knowledge that others can trust.

And honestly, that slow process exists for a reason. In research, even a small mistake can influence many future studies.

Now think about what has been happening recently. AI tools are starting to appear in research work. Many researchers already use AI to summarize long papers, scan large amounts of literature, suggest research questions, or help interpret complex data. If AI can help with these tasks, it naturally makes things faster.

And you might say something like this: if AI can already do these things, why not simply rely on it completely?

That sounds reasonable at first. But when you think about it a little more carefully, another question starts to appear: how much of what the AI produces is actually correct?

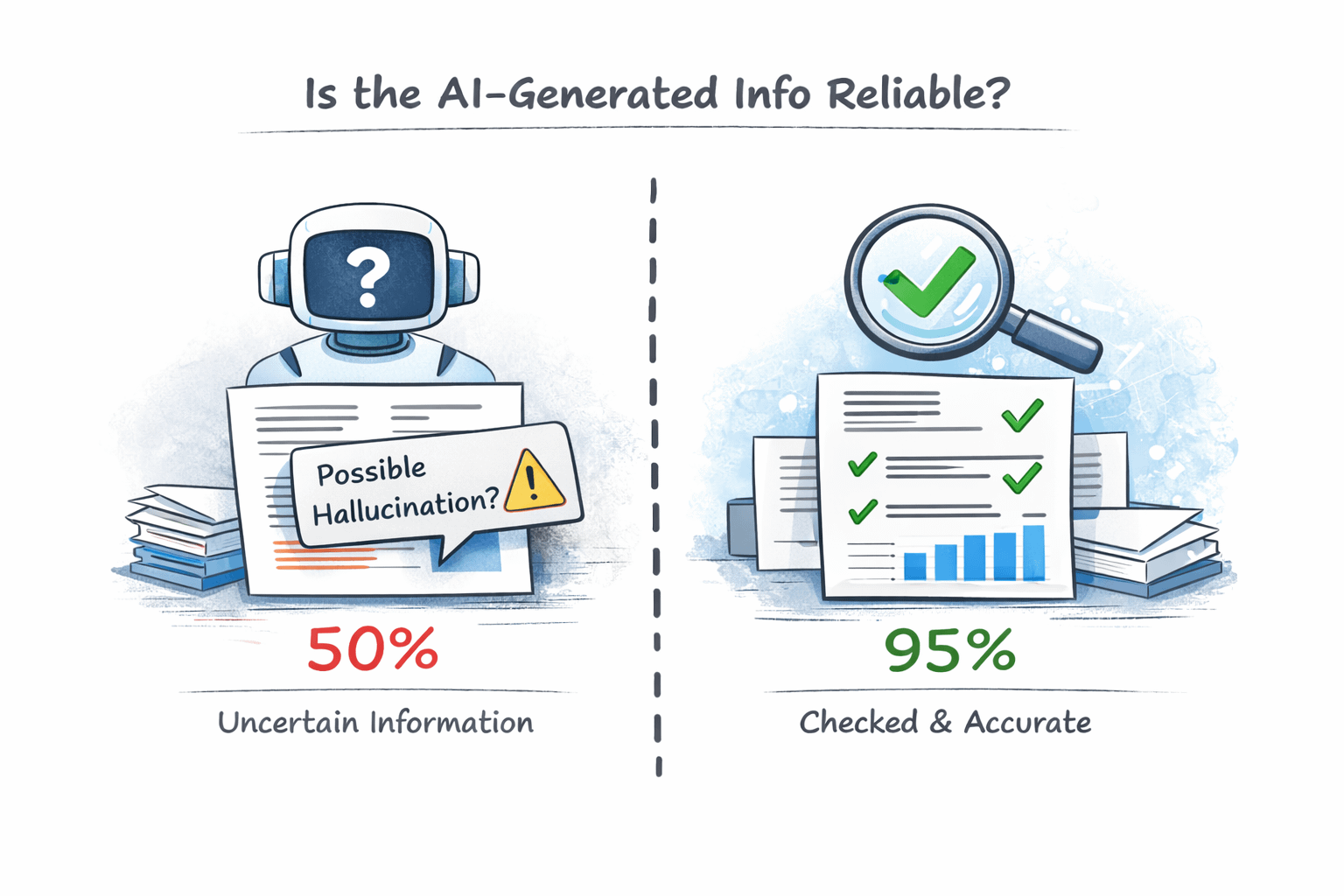

AI systems are very good at generating text. Sometimes they explain complex topics in seconds. But accuracy is not always guaranteed.

An AI model might misunderstand a paper, mix up information, or create a claim that sounds convincing but is not fully supported by evidence.

If that happens during normal conversation, it may not matter much. But if the same thing happens inside scientific research, the situation becomes different. Researchers need to know whether the information they are using is actually correct.

This is where it helps to remember how traditional research works. Scientific knowledge usually passes through several verification steps.

Peer review checks the logic behind an argument. Replication studies test whether results can be repeated. Citations allow other researchers to trace the origin of ideas. These steps exist for one reason: to protect reliability.

Now compare that process with what usually happens when an AI generates a summary. The explanation may look clear and complete, but the claims inside it are rarely checked one by one. The text looks finished, yet the verification layer behind it is often missing.

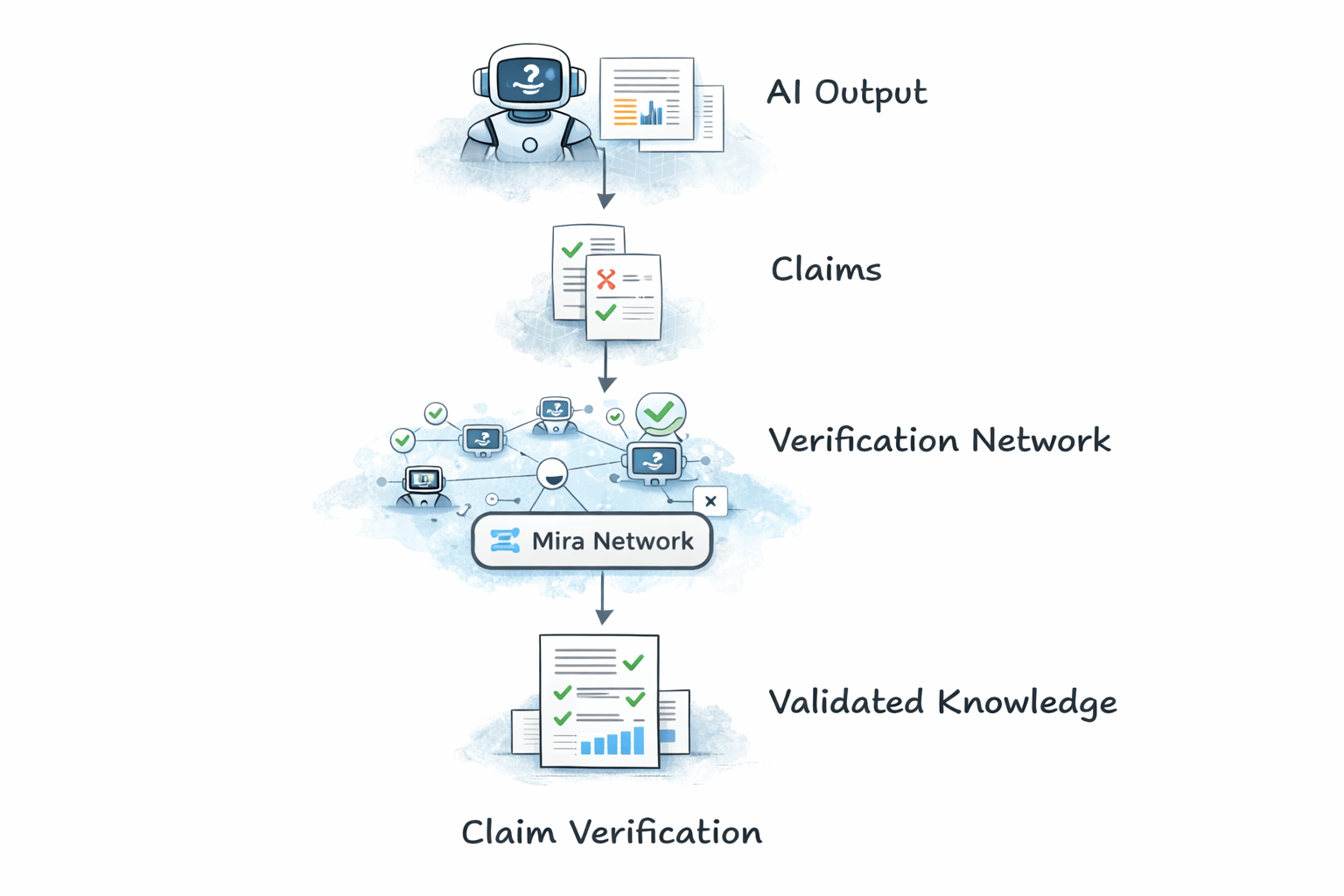

If we look at the situation this way, a simple idea starts to appear. Instead of treating an AI answer as one block of text, what if we break that answer into smaller claims? Each claim could then be checked separately.

Different models or validators could examine those claims and compare their results. Step by step, the system could filter out information that does not hold up under closer inspection.

Thinking about it like this makes the idea behind Mira Network easier to understand. Mira explores a structure where AI outputs can be divided into verifiable claims and evaluated through distributed validation. Rather than trusting a single model’s answer, the system introduces multiple verification steps before the information is accepted.

Imagine a simple research workflow built around that idea.

An AI system generates a research summary. That summary is broken into smaller claims.

A verification network evaluates those claims. The insights that remain are the ones that pass validation.
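To make that workflow a bit more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions, not how Mira actually implements verification: the names `split_into_claims` and `verify_summary` are hypothetical, treating every sentence as one claim is a simplification, and the three toy validators are keyword checks standing in for what would really be independent models.

```python
from typing import Callable, List

# A validator takes one claim and returns True if it judges the claim supported.
Validator = Callable[[str], bool]


def split_into_claims(summary: str) -> List[str]:
    """Naive claim extraction: treat each sentence as one claim (an assumption)."""
    return [s.strip() for s in summary.split(".") if s.strip()]


def verify_summary(
    summary: str, validators: List[Validator], threshold: float = 0.5
) -> List[str]:
    """Keep only the claims that more than `threshold` of the validators approve."""
    if not validators:
        raise ValueError("at least one validator is required")
    accepted = []
    for claim in split_into_claims(summary):
        votes = sum(1 for validate in validators if validate(claim))
        if votes / len(validators) > threshold:
            accepted.append(claim)
    return accepted


# Toy stand-ins for independent models (hypothetical, for illustration only).
def validator_a(claim: str) -> bool:
    return "always" not in claim.lower()


def validator_b(claim: str) -> bool:
    return "proves" not in claim.lower()


def validator_c(claim: str) -> bool:
    return len(claim.split()) >= 3


summary = "The study reports a modest effect. This proves the drug always works."
verified = verify_summary(summary, [validator_a, validator_b, validator_c])
print(verified)  # ['The study reports a modest effect']
```

The overconfident second sentence is rejected by two of the three validators, so only the first claim survives. In a real system the interesting work sits inside the validators and the claim extraction, both of which are deliberately trivial here.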

If something like this worked well, the goal would not be to replace AI research tools. The goal would be to make them more reliable. Verified outputs could reduce hallucination risks and make AI-assisted research easier to trust.

But the idea isn’t perfect either. A few practical questions appear the moment we think about how such a system would actually work. Verification processes may slow things down. Different models may disagree with each other. Building large verification networks is also technically challenging. So the concept may take time to develop.
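One way to picture the disagreement problem is to give each claim three possible outcomes instead of two. The sketch below is an assumption about how such a rule could look, not a description of Mira's mechanism: the function name `classify_claim`, the two-thirds quorum, and the idea of flagging split votes as "disputed" are all hypothetical choices.

```python
from fractions import Fraction
from typing import List


def classify_claim(votes: List[bool], quorum: Fraction = Fraction(2, 3)) -> str:
    """Return 'accepted', 'rejected', or 'disputed' from validator votes.

    A claim is settled only when at least `quorum` of the validators agree.
    Anything in between is flagged as disputed (an assumed policy).
    """
    if not votes:
        raise ValueError("at least one vote is required")
    # Fractions keep the comparison exact, avoiding float rounding at the boundary.
    approve_share = Fraction(sum(votes), len(votes))
    if approve_share >= quorum:
        return "accepted"
    if 1 - approve_share >= quorum:
        return "rejected"
    return "disputed"


print(classify_claim([True, True, True]))          # accepted
print(classify_claim([False, False, True]))        # rejected
print(classify_claim([True, True, False, False]))  # disputed
```

A rule like this also shows the speed trade-off: a higher quorum or more validators makes accepted claims more trustworthy, but more claims end up disputed and need another round of checking.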

And when you look at the situation this way, the direction starts to feel quite interesting. AI tools are becoming part of research workflows whether we like it or not.

The real question might not be whether AI will be used in research, but how we make sure the knowledge it produces can actually be trusted.

Look a little closer, and systems like Mira start to appear less like just another AI tool and more like an attempt to build a reliability layer for AI-assisted research.