Something important is unfolding quietly across crypto infrastructure. Many people still treat it as a future problem, but it is already happening.

AI agents are actively operating on blockchain networks. They are managing wallets, adjusting DeFi strategies, executing trades, and reallocating liquidity between protocols. What was once described as a theoretical “AI economy” is beginning to appear earlier than expected.

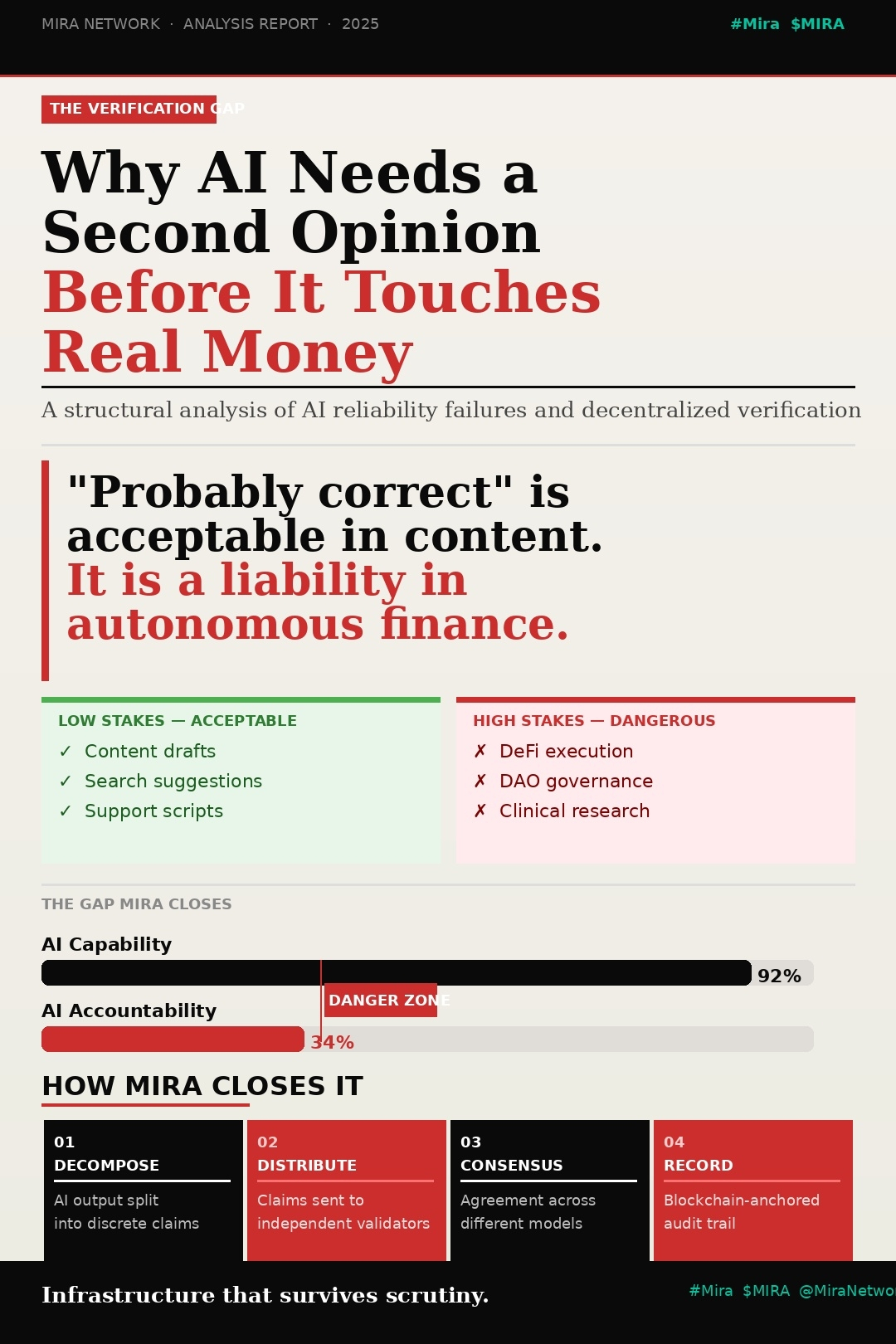

And that shift exposes a structural gap.

When a human makes a trade, responsibility is clear. A wallet signs the transaction, and the decision can be traced back to a person.

When a smart contract executes an action, the rules are visible on-chain. Anyone can inspect the deployed code and understand the logic that triggered the transaction.

But when an AI agent relies on a language model's output to decide when to trade, how much liquidity to move, or which position to close, accountability becomes unclear. The reasoning behind the decision may exist only in model outputs that leave little verifiable evidence.

This is the gap that Mira Network is trying to close.

From Raw AI Output to Verified Information

Traditional systems were not designed for a world where autonomous agents participate in financial activity. Mira introduces an additional layer that sits between AI-generated information and on-chain execution.

When an AI agent requests analysis from a language model, the response can be routed through Mira’s verification framework. Instead of accepting the output as a single block of text, the system restructures the information into smaller claims that can be examined independently.
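The article does not say how Mira splits a response into claims, so the sketch below assumes the simplest possible rule: one sentence equals one claim. It only illustrates the idea of turning a single block of text into separately checkable units. The `Claim` type, the `split_into_claims` helper, and the content-derived ID scheme are all hypothetical.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement extracted from a model response."""
    claim_id: str
    text: str


def split_into_claims(response: str) -> list[Claim]:
    """Naively split a model response into sentence-level claims.

    Assumption (hypothetical): one sentence == one claim. A production system
    would more likely use a model or parser to extract atomic factual statements.
    """
    # Split after ., ! or ? when followed by whitespace, so "4.1%" stays intact.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    claims = []
    for sentence in sentences:
        sentence = sentence.strip()
        if not sentence:
            continue
        # Content-derived ID: identical claim text always maps to the same ID.
        claim_id = hashlib.sha256(sentence.encode("utf-8")).hexdigest()[:12]
        claims.append(Claim(claim_id, sentence))
    return claims


if __name__ == "__main__":
    analysis = (
        "Pool A yields 4.1% APY. Pool B yields 6.3% APY. "
        "Moving liquidity to Pool B increases expected return."
    )
    for claim in split_into_claims(analysis):
        print(claim.claim_id, claim.text)
```

Each resulting claim can then be handed to validators on its own, which is what makes independent review possible.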

These claims are then reviewed by distributed validators. Each validator evaluates the claims independently before the network reaches agreement on whether each claim should be accepted.
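A minimal sketch of "independent evaluation, then agreement" might look like the following. It assumes a supermajority rule with a 2/3 threshold, which is an illustrative choice and not Mira's documented consensus mechanism. Validators are modeled as plain functions, and `Verdict` and `reach_consensus` are hypothetical names.

```python
from collections import Counter
from enum import Enum
from typing import Callable


class Verdict(Enum):
    VALID = "valid"
    INVALID = "invalid"
    UNSURE = "unsure"


# Hypothetical model: a validator is any function that judges a claim on its own.
Validator = Callable[[str], Verdict]


def reach_consensus(claim: str, validators: dict[str, Validator],
                    threshold: float = 2 / 3) -> tuple[Verdict, dict[str, Verdict]]:
    """Collect independent verdicts and accept one only with a supermajority.

    Assumption: the 2/3 threshold is illustrative, not Mira's actual rule.
    Returns the outcome plus every individual vote so both can be recorded.
    """
    if not validators:
        raise ValueError("at least one validator is required")
    # Each validator sees only the claim, never the other validators' votes.
    votes = {name: judge(claim) for name, judge in validators.items()}
    verdict, count = Counter(votes.values()).most_common(1)[0]
    if count / len(votes) >= threshold:
        return verdict, votes
    # No supermajority: the claim stays unresolved rather than being accepted.
    return Verdict.UNSURE, votes


if __name__ == "__main__":
    validators = {
        "v1": lambda c: Verdict.VALID,
        "v2": lambda c: Verdict.VALID,
        "v3": lambda c: Verdict.INVALID,
    }
    outcome, votes = reach_consensus("Pool B yields 6.3% APY.", validators)
    print(outcome.value, {name: v.value for name, v in votes.items()})
```

Returning the individual votes alongside the outcome matters for the accountability argument: the record can show not just what was accepted but who agreed and who dissented.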

Once consensus is reached, the verified result is recorded on-chain along with information about who validated it and how the conclusion was reached.

Accountability for AI-Driven Decisions

The difference between using raw model output and using verified information is not only about improving accuracy. The more important change is accountability.

Every verified claim produces a record. That record shows when the information was generated, how it was evaluated, and which validators participated in confirming it.
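The sketch below shows one way such a record could be structured and made tamper-evident. The field names, the JSON canonicalization, and the idea of anchoring a SHA-256 digest on-chain are assumptions for illustration; the source does not describe Mira's record format. The point is that anyone holding the record can recompute the digest and compare it with the anchored value.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class VerificationRecord:
    """Hypothetical record layout; field names are illustrative, not Mira's schema."""
    claim_id: str
    claim_text: str
    generated_at: str        # when the information was generated (ISO 8601)
    method: str              # how it was evaluated, e.g. "supermajority-2/3"
    votes: dict[str, str]    # validator ID -> verdict
    outcome: str

    def digest(self) -> str:
        """Deterministic SHA-256 over the record (the value assumed to be anchored on-chain)."""
        # sort_keys and fixed separators make the serialization byte-for-byte stable.
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def is_untampered(record: VerificationRecord, anchored_digest: str) -> bool:
    """Recompute the digest and compare it with the previously anchored value."""
    return record.digest() == anchored_digest


if __name__ == "__main__":
    record = VerificationRecord(
        claim_id="a1b2c3d4e5f6",
        claim_text="Pool B yields 6.3% APY.",
        generated_at="2025-01-01T12:00:00Z",   # illustrative timestamp
        method="supermajority-2/3",
        votes={"v1": "valid", "v2": "valid", "v3": "invalid"},
        outcome="valid",
    )
    anchored = record.digest()
    print(is_untampered(record, anchored))   # True
    record.outcome = "invalid"               # simulate an after-the-fact edit
    print(is_untampered(record, anchored))   # False
```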

If something later goes wrong, investigators can trace the decision path rather than dealing with an opaque AI output. The record becomes a reference point for understanding what information influenced the action.

This type of traceability is becoming increasingly important as regulators begin drafting rules for autonomous systems operating in financial environments.

Why Regulators Care About Decision Trails

Regulatory agencies are not just concerned about whether AI systems perform well on average. They want to understand how specific decisions are made.

If an AI-driven system executes a trade that causes losses or market disruption, authorities will want to reconstruct the decision process. They will ask what data was used, what reasoning was applied, and whether verification occurred before the action was taken.

Mira’s architecture is designed to produce a structured trail that helps answer those questions. Instead of relying on internal documentation or fragmented logs, compliance teams can review the verification record as a transparent chain of evidence.

Incentives and Reputation for Validators

The reliability of the system depends on the people or entities verifying information. Mira attempts to strengthen this layer through economic incentives and reputation tracking.

Participants who consistently produce accurate assessments can build a record of reliability within the network. Over time, this creates a validator ecosystem where trust emerges from performance rather than central authority.
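The source does not specify how reputation is scored. As a sketch under stated assumptions, a validator's reliability could be tracked as an exponential moving average (EMA) that rises when its verdict matches the final consensus outcome and falls when it does not. Treating "agreed with consensus" as "accurate", the 0.5 starting score, and the 0.1 learning rate are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class ValidatorReputation:
    """Reliability score kept as an exponential moving average in [0, 1].

    Assumption (hypothetical): accuracy means agreeing with the final consensus
    outcome; the starting score and learning rate below are illustrative.
    """
    score: float = 0.5   # neutral starting reputation
    alpha: float = 0.1   # weight given to the most recent assessment

    def update(self, agreed_with_final_outcome: bool) -> float:
        """Nudge the score toward 1 after an accurate assessment, toward 0 otherwise."""
        target = 1.0 if agreed_with_final_outcome else 0.0
        self.score = (1 - self.alpha) * self.score + self.alpha * target
        return self.score


if __name__ == "__main__":
    rep = ValidatorReputation()
    for correct in [True, True, True, False]:
        rep.update(correct)
    print(f"reputation after 3 accurate and 1 inaccurate assessment: {rep.score:.3f}")
```

An EMA is used here because it rewards consistency: a long run of accurate work builds a score gradually, and a single mistake lowers it without erasing that history.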

The goal is to create a verification environment that remains decentralized while still producing dependable results.

Cross-Chain Compatibility for a Multi-Network Ecosystem

Another practical feature of the design is its ability to interact with multiple blockchain ecosystems.

AI agents already operate across several networks, including Bitcoin, Ethereum, and Solana. Mira’s verification layer is designed to integrate with applications across these environments rather than restricting activity to a single chain.

This flexibility allows developers to add verification infrastructure without restructuring their entire stack.

Working With Private Data Without Exposing It

Enterprises face another challenge when integrating AI systems: sensitive data. Financial institutions and corporations cannot freely expose proprietary datasets or confidential information.

Mira’s architecture attempts to address this by allowing verification of results without revealing the underlying data. In practice, this means AI agents can rely on insights derived from private datasets while still producing proof that the conclusions were verified.

That capability becomes particularly important for organizations operating under strict data protection rules.

The Core Problem Was Never Just Accuracy

Concerns about AI often focus on hallucinations or incorrect outputs. While accuracy matters, the deeper issue is structural accountability.

Autonomous systems are increasingly capable of making meaningful economic decisions. Without a mechanism that records how those decisions were formed, it becomes difficult to assign responsibility or prove that due diligence occurred.

The challenge is not simply building smarter models. It is building systems that document and verify the reasoning behind the decisions those models influence.

A Verification Layer for the AI Economy

The growth of AI agents in blockchain ecosystems suggests that autonomous decision-making will become a normal part of digital infrastructure. As that transition accelerates, the need for verifiable decision trails will only increase.

Projects like Mira Network are attempting to build the infrastructure that records and validates those decisions before they influence financial systems.

If the AI economy continues expanding, the networks that provide accountability may become just as important as the systems generating the intelligence itself.