AI can sound confident even when it is wrong.

Last night I was testing an AI tool while reading about a complicated topic.

I asked the model to explain something technical, and within seconds it produced a long, detailed answer. The explanation looked clear and convincing, and for a moment I almost accepted it without question.

But then I checked a few parts of the response.

Some information was correct.

A few details were slightly wrong.

Nothing dramatic. Just small inaccuracies that could easily go unnoticed if someone trusted the answer too quickly.

That moment made something clear to me.

Modern AI systems are very good at generating information.

But generation alone does not guarantee reliability.

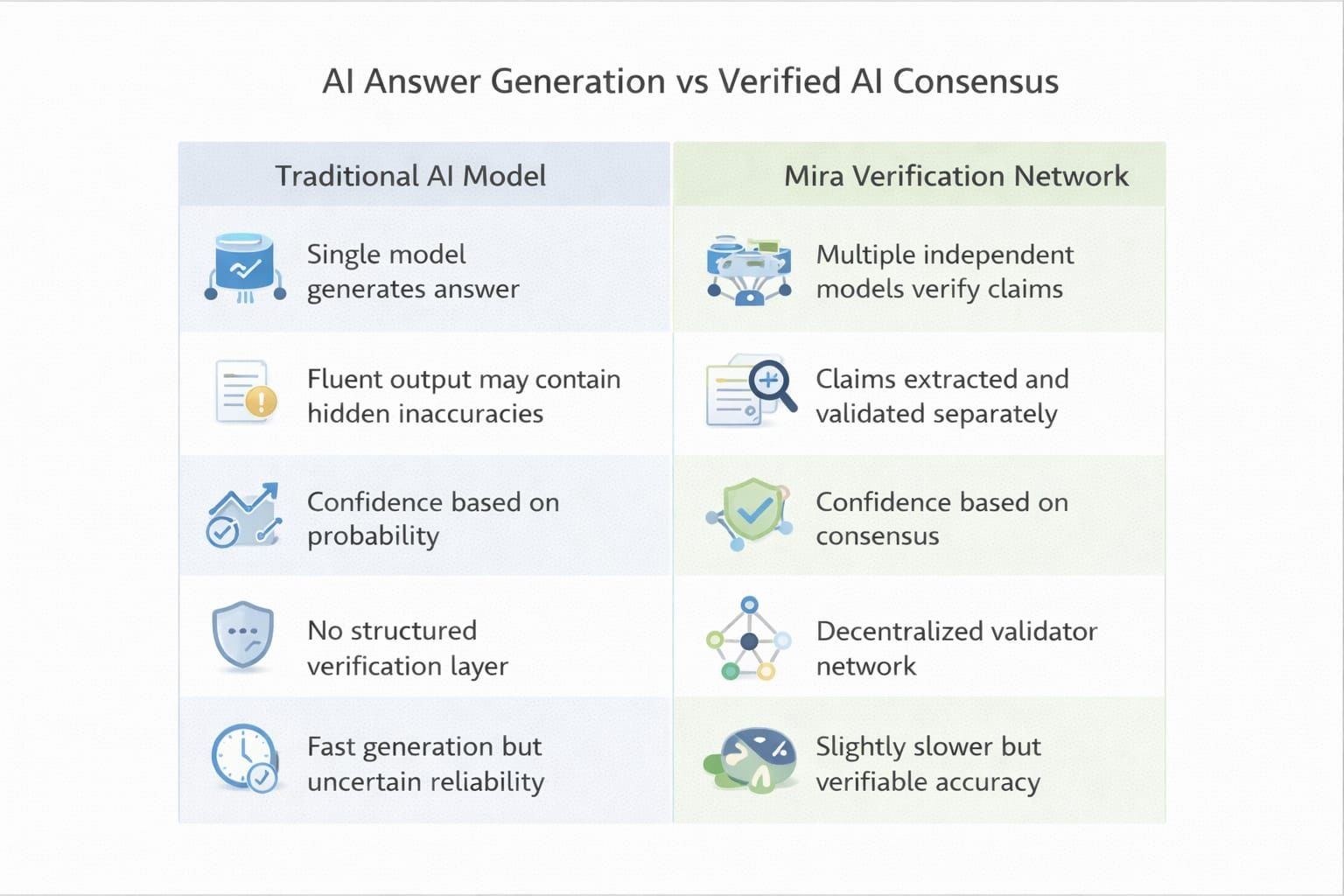

Most language models generate text by predicting the next word based on patterns learned from massive datasets. That lets them produce explanations that sound logical and fluent. Yet nothing in the generation process itself checks whether each statement is actually correct.

This is where @Mira - Trust Layer of AI introduces a different approach.

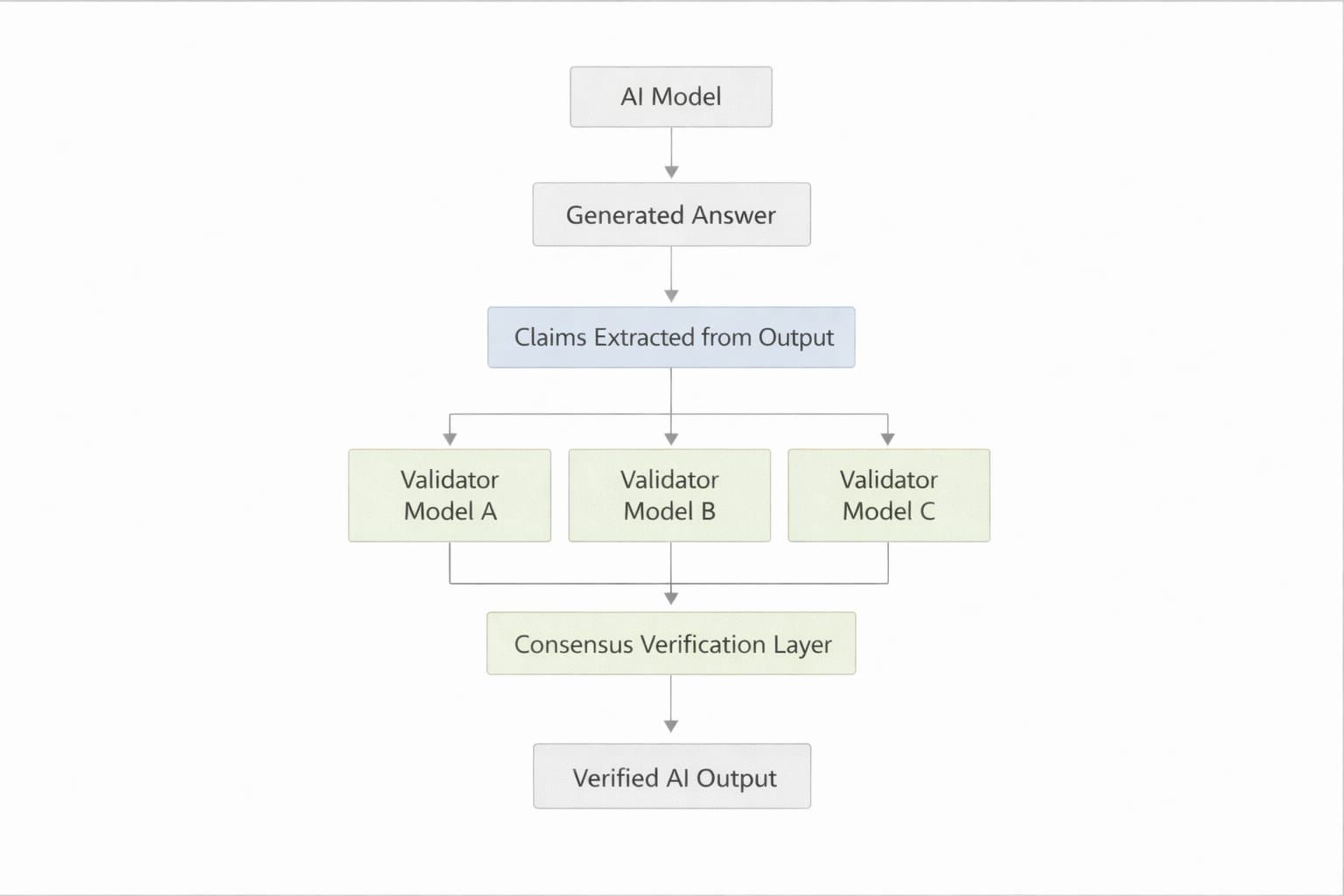

Instead of relying on one model to produce and judge its own answer, Mira separates generation from verification.

An AI output can be broken into smaller claims.

These claims are then reviewed by multiple independent models across a decentralized network.

Each validator model checks the claims on its own.

When enough validators agree, the network reaches consensus about whether the claim is reliable.
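To make the idea concrete, here is a toy sketch of "split into claims, let several validators vote, accept only on consensus." This is not Mira's actual protocol or code. Everything here is an assumption for illustration: the helper names (`split_into_claims`, `verify_claim`, `verify_answer`, `make_validator`), splitting claims by sentence, and a simple majority quorum. The "validators" are just lookups in small fact sets standing in for independent models.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical validator type: takes one claim, returns True if it judges the claim correct.
Validator = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive claim extraction (assumption): treat each sentence as one claim."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator], quorum: int) -> Optional[bool]:
    """Ask every validator independently and apply a simple quorum rule.

    Returns True if at least `quorum` validators accept the claim,
    False if at least `quorum` reject it, and None if neither side reaches quorum.
    """
    # Require a strict majority so "accept" and "reject" can never both reach quorum.
    if quorum * 2 <= len(validators):
        raise ValueError("quorum must be more than half of the validators")
    accepts = sum(1 for validate in validators if validate(claim))
    rejects = len(validators) - accepts
    if accepts >= quorum:
        return True
    if rejects >= quorum:
        return False
    return None


def verify_answer(answer: str, validators: List[Validator], quorum: int) -> Dict[str, Optional[bool]]:
    """Split an answer into claims and record the consensus verdict for each."""
    return {claim: verify_claim(claim, validators, quorum) for claim in split_into_claims(answer)}


def make_validator(known_facts: set) -> Validator:
    """Toy stand-in for an independent model: accepts only claims it 'knows'."""
    return lambda claim: claim in known_facts


validators = [
    make_validator({"Water boils at 100 C at sea level", "The Earth orbits the Sun"}),
    make_validator({"Water boils at 100 C at sea level", "The Earth orbits the Sun"}),
    make_validator({"Water boils at 100 C at sea level", "The Sun orbits the Earth"}),  # one faulty model
]
answer = "Water boils at 100 C at sea level. The Sun orbits the Earth."
print(verify_answer(answer, validators, quorum=2))
# {'Water boils at 100 C at sea level': True, 'The Sun orbits the Earth': False}
```

The point of the example: the one faulty validator agrees with the wrong claim, but it is outvoted, so the claim is rejected instead of slipping through just because it sounds fine. A real network would also need to handle validators that are dishonest or correlated, which this sketch ignores.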

What makes this interesting is that AI answers are no longer accepted just because they sound convincing.

They must be verified.

Because in the long run, the real challenge of artificial intelligence may not be generating answers.

It may be proving that those answers are actually true. #Mira