The longer I spend studying emerging technology projects, the less interested I become in their marketing and the more interested I become in their constraints.

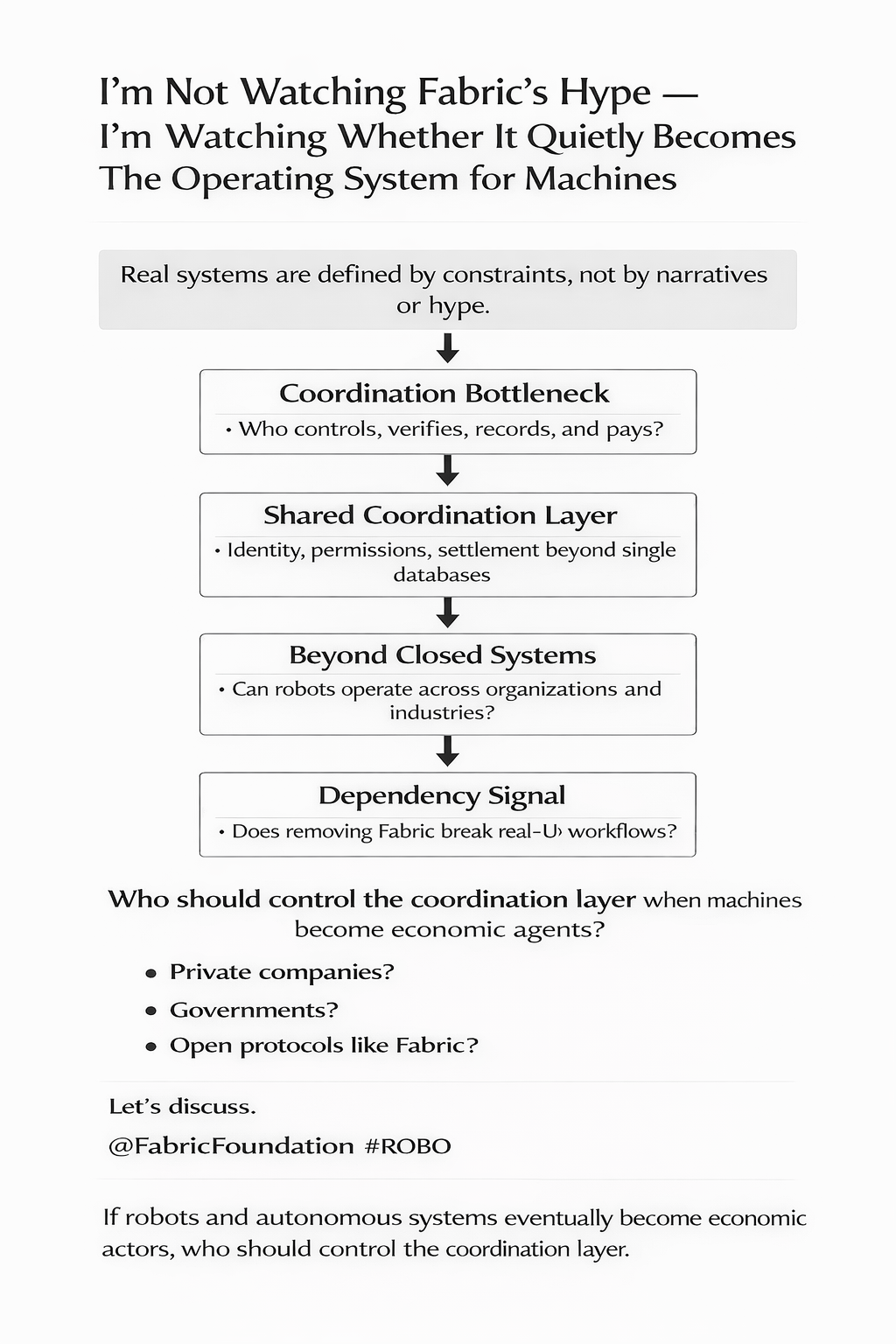

Because real systems are defined by constraints.

Narratives are flexible. Roadmaps are flexible. Timelines are flexible. But constraints are stubborn. They reveal what a system must solve in order to exist outside of a whitepaper.

That’s exactly why Fabric Foundation caught my attention.

Not because it talks about robots or autonomous agents — those narratives have been circulating for years — but because it is focusing on something much less glamorous.

Coordination.

When people talk about the future of machines, they usually jump directly to capability. Faster robots. Smarter AI. Autonomous logistics. Automated factories. The conversation almost always revolves around what machines will be able to do.

But capability is only half of the story.

The other half is coordination.

Machines can perform tasks, but the moment those machines operate across organizations, jurisdictions, and industries, coordination becomes the real bottleneck.

Who controls the machine?

Who verifies what it did?

Who records the outcome?

Who pays when the task is complete?

These questions sound simple until you see them in real operational environments.

Most robotics deployments today exist inside closed systems. A warehouse operator owns the robots, the control software, the dashboards, and the data. Everything works smoothly because the environment is controlled.

But the moment robots interact across boundaries (logistics providers, maintenance vendors, insurance companies, regulators, external marketplaces), the system becomes fragile.

Every boundary introduces friction.

This is where Fabric’s approach starts to look interesting to me.

The idea, at least as I understand it, is to create a shared coordination layer where machines can have identity, permissions, and settlement mechanisms that do not depend on any single organization’s database.

Not because blockchain is fashionable.

But because shared coordination systems break when everyone has to trust the same private authority.

Here is an example.

Imagine a logistics network where multiple companies deploy autonomous delivery robots across a city. One company manages the robots, another company manages charging stations, a third company operates the delivery marketplace, and a fourth company provides insurance coverage for accidents.

Now imagine a delivery task fails.

The robot claims the package was delivered.

The customer says it wasn’t.

The operator’s logs show conflicting timestamps.

The insurance provider demands proof of what actually happened.

Without a shared record of events, resolving that dispute becomes messy. Each company trusts its own logs. Each system has its own version of the timeline.

Now imagine a shared verification layer where task execution, telemetry checkpoints, and payment settlement are recorded in a standardized protocol that all participants recognize.

The argument isn’t that blockchains magically solve reality.

The argument is that they can standardize evidence.

That distinction matters.
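To make "standardized evidence" a little more concrete, here is a minimal sketch of a tamper-evident event log, where each entry commits to the hash of the one before it. This is my own illustration, not Fabric's actual protocol: the function names (`append_event`, `verify_log`), the event fields, and the robot and task identifiers are all hypothetical, and a real shared layer would also need signatures and replication across participants.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry (assumption)


def _digest(prev_hash, payload):
    # Hash the previous entry's hash together with this entry's payload.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_event(log, actor, kind, data):
    # Hypothetical event shape: who reported it, what kind of event, details.
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = {"actor": actor, "kind": kind, "data": data}
    entry = {"prev": prev_hash, "payload": payload,
             "hash": _digest(prev_hash, payload)}
    log.append(entry)
    return entry


def verify_log(log):
    # Recompute every hash; any edited or reordered entry breaks the chain.
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_event(log, "robot-17", "task_accepted", {"task": "delivery-042"})
append_event(log, "robot-17", "checkpoint",
             {"task": "delivery-042", "lat": 52.52, "lon": 13.405})
append_event(log, "marketplace", "delivery_claimed", {"task": "delivery-042"})
```

The point of the sketch is narrow: nobody can quietly rewrite an earlier checkpoint without every later hash failing verification. It says nothing about whether the checkpoint was true in the first place, which is exactly the limit described above.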

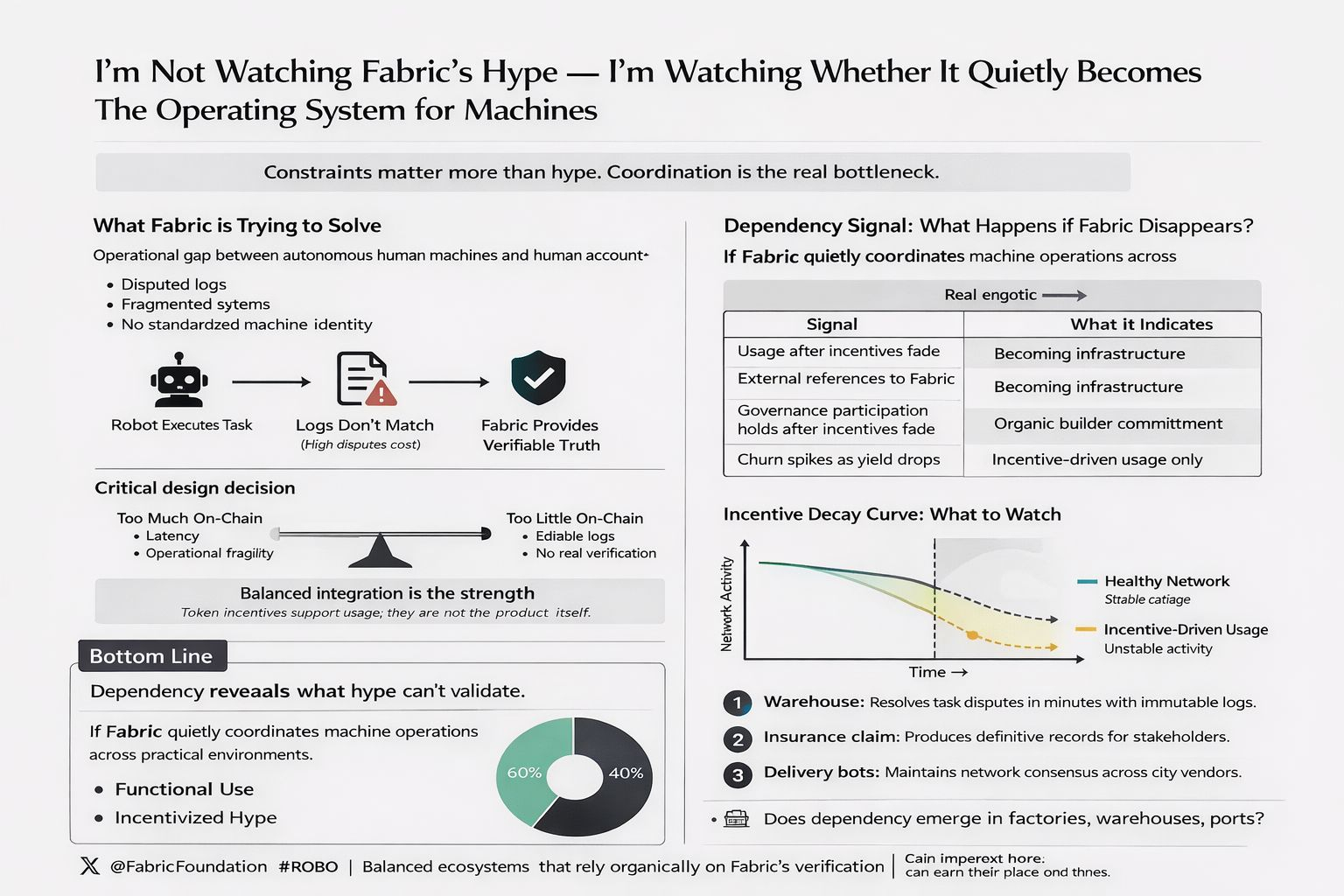

Because robotics doesn’t suffer from a lack of dashboards. It suffers from a lack of trusted reconciliation when systems disagree.

And reconciliation becomes expensive very quickly.

Here is another example.

Consider an autonomous warehouse operating overnight with hundreds of picking robots. A batch of orders is reported as completed. The client claims half the items were missing. The internal monitoring system shows the robots completed their routes.

Now someone has to determine whether the robots failed, the software misreported results, or the warehouse staff made a mistake during packing.

If there is no shared record of task verification, the dispute becomes political instead of technical.

Whoever controls the logs controls the story.

Fabric seems to be positioning itself as a neutral layer where those operational events can be recorded and verified.

That’s not a small ambition.

Because infrastructure is not defined by innovation.

It is defined by dependency.

The real test of any coordination protocol is simple: what happens when it disappears?

If Fabric vanished tomorrow, would operators notice immediately?

Or would nothing change except token rewards?

That distinction is brutal, but it’s honest.

Crypto ecosystems often confuse activity with adoption.

Staking rewards can generate temporary participation. Incentives can attract developers for a season. Trading volume can create the illusion of ecosystem growth.

But none of those signals prove that the underlying system is solving a real operational problem.

Real dependency only emerges when removing the system makes existing workflows worse again.

Here is what that looks like in practice.

If a robotics company stops using Fabric and its dispute resolution time suddenly doubles, verification processes become inconsistent, and insurance claims take longer to settle, then Fabric has earned its place.

If nothing changes except token emissions, then the usage was rented.

That’s the uncomfortable reality of incentive-driven ecosystems.

And it leads directly to the question of the $ROBO token itself.

I don’t think the token is the product.

I think the token is the enforcement mechanism.

If Fabric is building a coordination layer for machines, then tokens can function as economic guarantees. Validators stake tokens to verify machine activity. Operators stake tokens to register identities. Participants risk slashing if they submit dishonest data.

Consider an example.

Imagine an autonomous drone fleet delivering medical supplies between hospitals. Each drone’s activity is verified by independent observers who stake tokens to validate telemetry data.

If a validator confirms incorrect data — perhaps approving a delivery that never occurred — their stake is penalized.

The token becomes collateral for honesty.
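A rough sketch of that collateral logic, as I imagine it, looks like this. Everything here is an assumption for illustration: the `Validator` type, the `settle_attestations` function, and the 50% slash rate are hypothetical, and I have no confirmation that $ROBO works this way. The sketch also assumes some dispute process has already established what really happened, which is the hard part.

```python
from dataclasses import dataclass

SLASH_BPS = 5000  # hypothetical penalty: 50% of stake, in basis points


@dataclass
class Validator:
    name: str
    stake: int  # staked tokens, in whole units for simplicity


def settle_attestations(validators, attestations, actual_outcome,
                        slash_bps=SLASH_BPS):
    """Slash validators whose attestation contradicts the actual outcome.

    `attestations` maps validator name to the outcome they confirmed.
    Validators who did not attest are left untouched in this sketch.
    Returns the total amount slashed.
    """
    total_slashed = 0
    for validator in validators:
        vote = attestations.get(validator.name)
        if vote is None:
            continue  # did not attest; no penalty here
        if vote != actual_outcome:
            penalty = validator.stake * slash_bps // 10_000
            validator.stake -= penalty
            total_slashed += penalty
    return total_slashed


# One validator approves a delivery that never occurred.
validators = [Validator("honest", 1000),
              Validator("dishonest", 1000),
              Validator("silent", 1000)]
attestations = {"honest": False, "dishonest": True}  # True = "delivered"
slashed = settle_attestations(validators, attestations, actual_outcome=False)
```

In this run only the validator who confirmed the phantom delivery loses stake. Whether a penalty like that is large enough to deter collusion depends on parameters the sketch simply makes up.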

That’s when tokens stop being speculative assets and start behaving like infrastructure instruments.

But there’s another dimension that fascinates me even more.

The balance between standard and platform.

Standards succeed by being neutral. They become boring. Invisible. Reliable. The internet runs on protocols most people never think about because they simply work.

Platforms behave differently. Platforms compete for dominance, extract fees, and optimize for growth.

The tricky part is that many crypto foundations talk like standards organizations while their token incentives behave like platform strategies.

Over time, governance dynamics reveal which direction wins.

If governance power concentrates among a small group of token holders, the system begins to resemble a platform.

If participation remains open and rules remain stable regardless of market cycles, the protocol begins to resemble a standard.

This tension will likely define Fabric’s long-term trajectory.

Because neutrality is expensive.

And control is tempting.

What keeps bringing me back to Fabric, though, isn’t the token or the narrative.

It’s the problem space.

Machines interacting with machines is not a speculative concept anymore.

Autonomous logistics systems already exist. Industrial robots already coordinate across supply chains. AI agents are beginning to manage digital workflows.

But those systems still rely heavily on centralized coordination layers.

Every company builds its own control stack.

Every ecosystem builds its own identity system.

Every platform maintains its own data records.

Fabric appears to be asking whether machines need a shared layer, much as humans eventually needed shared internet protocols.

Identity.

Permissions.

Verification.

Settlement.

Those components are not flashy.

But they are foundational.

And foundational systems tend to be invisible once they succeed.

Nobody celebrates DNS every day, but the internet stops functioning without it.

Nobody praises payment rails constantly, but global commerce depends on them.

If Fabric eventually becomes a standard layer where machine identity and verification events are recorded across industries, the project may not need constant hype.

It would simply become part of the stack.

But the bar for that outcome is extremely high.

Protocols don’t become infrastructure through ambition alone.

They become infrastructure through repeated usage in environments where failure is unacceptable.

Factories. Ports. Warehouses. Hospitals. Energy systems.

Places where mistakes have real consequences.

So the signals I’m watching aren’t token price charts or exchange listings.

I’m watching something much quieter.

Developers integrating the identity primitives.

Operators relying on verification logs.

External organizations referencing Fabric records during real disputes.

Those are the signals that matter.

Because if Fabric becomes the place people reach for when machines disagree about what happened, it stops being a crypto experiment.

It becomes operational infrastructure.

And infrastructure tends to outlive narratives.

Which leaves me with one question that I keep coming back to.

If robots and autonomous systems eventually become economic actors, who should control the coordination layer they rely on?

Private companies?

Governments?

Or open protocols like Fabric?

I’m genuinely curious how others think about this.

Because the answer will shape how value moves in a world where machines are no longer just tools — but participants in the economy.

Let’s discuss.