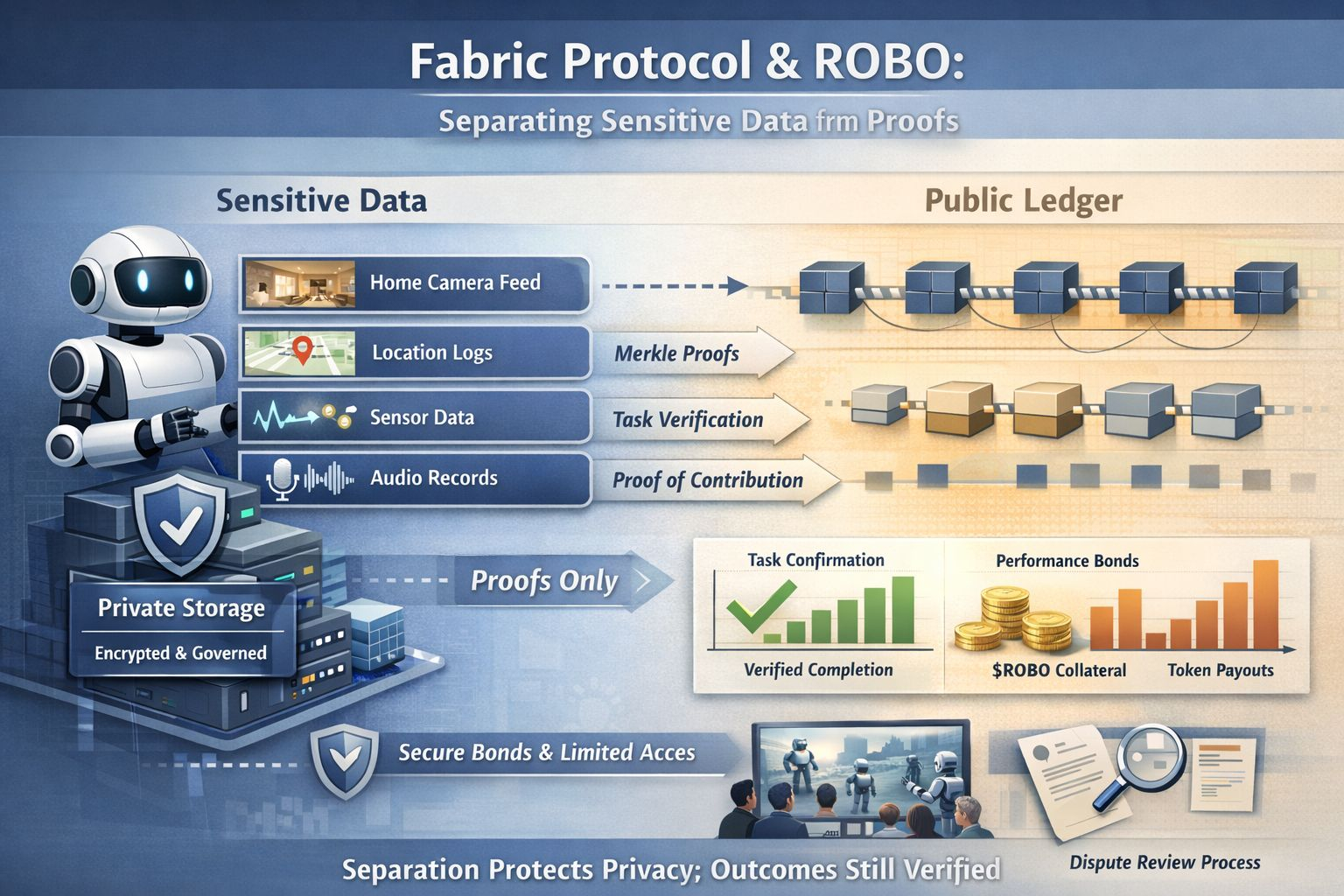

I keep noticing how easy it is to confuse "verifiable" with "exposed." In my head, auditability used to mean that the underlying data had to be available to whoever wanted to check the story. The longer I sit with real systems that touch the physical world, the more that assumption feels like a trap. What I find more workable is separating sensitive data from proofs: the private material stays in places where it can be governed, and only the minimum evidence moves into the shared layer.

That distinction gets unusually concrete when I look at Fabric Protocol’s goal of building and evolving ROBO as a general-purpose robot that stays accountable to people over time. The project frames itself around coordinating computation, ownership, and oversight through immutable public ledgers. Once you say "robot," the privacy problem stops being abstract, because the most useful inputs are often the most personal parts of a life. A robot that operates in homes, clinics, warehouses, or streets can end up touching images, sound, movement, and routines. If auditability is treated as publishing the record, then either privacy breaks or adoption stalls, and neither outcome is acceptable.

This is where ROBO’s role in the protocol starts to matter as plumbing rather than atmosphere. The whitepaper describes registered robot operators posting a refundable performance bond in ROBO to register hardware and provide services, and it frames that bond as a security reservoir that raises the cost of spammy identities. Even before any advanced cryptography shows up, this is already a separation move: the public system does not need the operator’s full dossier or internal risk model to enforce basic discipline. It mainly needs a bond plus clear rules for what happens when someone fails to meet their obligations.

The design gets more interesting when task selection is described as being verified through on-chain Merkle proofs, with earmarked collateral tied to tasks without requiring a brand-new staking action each time. I like this because it points toward a verification story that does not demand full disclosure: a Merkle proof lets you show that something belongs to a committed set while withholding the rest of the set. In practice, that means the shared ledger can verify that an operator was legitimately selected under an agreed weighting without publishing the intermediate details that could reveal business strategy, customer relationships, or operational capacity.
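To make the Merkle idea less hand-wavy for myself, here is a minimal sketch of an inclusion proof in Python. It is not Fabric Protocol's implementation, and the whitepaper's actual tree layout is unknown to me. The function names, the example entries, the `0x00`/`0x01` hash prefixes (borrowed from the convention in RFC 6962), and the choice to duplicate the last node on odd levels are all my own assumptions for illustration.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(item: bytes) -> bytes:
    # Prefix leaves and inner nodes differently so one cannot pose as the other.
    return _h(b"\x00" + item)


def node_hash(left: bytes, right: bytes) -> bytes:
    return _h(b"\x01" + left + right)


def build_levels(items):
    """Return every level of the tree, from leaf hashes up to the root."""
    if not items:
        raise ValueError("cannot commit to an empty set")
    level = [leaf_hash(i) for i in items]
    levels = [level]
    while len(level) > 1:
        # Simplification (assumption): pair an unpaired last node with itself.
        padded = level + [level[-1]] if len(level) % 2 else level
        level = [node_hash(padded[i], padded[i + 1])
                 for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels


def prove(levels, index):
    """Collect the sibling hashes needed to rebuild the root from one leaf."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index  # the node was paired with itself
        proof.append((level[sibling], index % 2 == 0))  # (hash, sibling_is_right)
        index //= 2
    return proof


def verify(root: bytes, item: bytes, proof) -> bool:
    """Check membership using only the item, the proof, and the public root."""
    acc = leaf_hash(item)
    for sibling, sibling_is_right in proof:
        acc = node_hash(acc, sibling) if sibling_is_right else node_hash(sibling, acc)
    return acc == root


# Hypothetical selection entries; only the root would be published.
entries = [b"operator-A|task-17", b"operator-B|task-18", b"operator-C|task-19"]
levels = build_levels(entries)
root = levels[-1][0]
proof_for_b = prove(levels, 1)
```

The verifier only ever sees `root`, one entry, and a handful of sibling hashes. The other entries stay wherever the operator keeps them, which is the whole point. Predictable entries could still be guessed by hashing candidates, so a real design would probably need salting or a hiding commitment on top.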

The same theme shows up again in the way rewards are described. The protocol’s proof-of-contribution framing ties token incentives to verified contributions, and it counts task completion as protocol-measurable activity, which makes the boundary between proof and data feel like a survival requirement. If the system has to publish raw sensor logs to prove a task was completed, then you have built a machine that can do useful work but cannot respect a front door. The more realistic direction is to keep detailed logs private, and ideally deletable, while producing a narrow proof that the task met the contract requirements.

This is also why the Global Robot Observatory idea in the whitepaper caught my attention: it imagines humans observing and collectively evaluating robot actions in order to make machines safer and more trustworthy. Oversight like that is hard to do responsibly without careful boundaries. Sometimes you truly need evidence when something goes wrong, but evidence does not have to mean uploading everything. It can mean structured disclosure, where the system shows that a safety constraint was followed or reveals only what is needed for dispute resolution, under rules that are explicit and auditable.

I find it helpful to admit that we are not inventing this pattern from scratch. In enterprise settings, Hyperledger Fabric’s private data collections already separate raw values, which are shared only with eligible parties, from hashes, which are anchored on the broader ledger. There is also an explicit proposal to support purging private data for right-to-be-forgotten-style compliance. Research is also pushing on metadata leakage, such as hiding which record was queried through private information retrieval approaches designed for Hyperledger Fabric’s model. Those are not perfect analogies to robots, but they still show that "prove it without revealing it" is becoming normal engineering rather than a theoretical flex.
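The shape of that pattern is simple enough to sketch. What follows is a toy illustration in Python, not Hyperledger Fabric's API: `PrivateStore`, `commit`, and `verify_disclosure` are hypothetical names, the "ledger" is just a dictionary, and the random salt is my own assumption, added so that short or guessable values cannot be recovered by brute-forcing the public hash.

```python
import hashlib
import hmac
import os


def commit(value: bytes, salt: bytes) -> bytes:
    """Hash that can be anchored publicly without exposing the value."""
    return hashlib.sha256(salt + value).digest()


class PrivateStore:
    """Hypothetical off-ledger store holding raw values and their salts."""

    def __init__(self):
        self._rows = {}

    def put(self, key: str, value: bytes) -> bytes:
        salt = os.urandom(32)  # fixed-length salt keeps salt + value unambiguous
        self._rows[key] = (value, salt)
        return commit(value, salt)  # only this digest leaves the private side

    def disclose(self, key: str):
        # Reveal the value and salt to an eligible party, e.g. in a dispute.
        return self._rows[key]

    def purge(self, key: str) -> None:
        # Delete the raw data; the anchored digest stays but reveals nothing.
        self._rows.pop(key, None)


def verify_disclosure(anchored: bytes, value: bytes, salt: bytes) -> bool:
    """Check that disclosed data matches what was anchored earlier."""
    return hmac.compare_digest(commit(value, salt), anchored)


# Usage: the shared layer stores only the digest.
store = PrivateStore()
public_ledger = {}
public_ledger["task-17"] = store.put("task-17", b"raw sensor log for task 17")

value, salt = store.disclose("task-17")
matches = verify_disclosure(public_ledger["task-17"], value, salt)

store.purge("task-17")  # the private copy is gone, the anchor remains
```

After `purge`, nobody can reconstruct the log from the ledger entry, yet any copy disclosed before the purge could still be checked against it. That asymmetry is what makes "deletable but auditable" less of a contradiction than it sounds.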

What feels new today is that proofs are getting cheaper and easier to integrate, so the separation pattern can move from a nice idea to a default architecture. When a protocol like Fabric wants durable oversight for ROBO, it cannot rely on trust by handshake or on centralized logs that quietly become permanent dossiers. It needs a shared record that is strong enough to coordinate strangers, alongside a private layer that is disciplined enough to protect real lives. If I am honest, the hardest part is not the math. It is deciding, claim by claim, what the world should be able to verify and what the world should never have to see.

@Fabric Foundation #ROBO #robo $ROBO