When I First Realized AI Had a Trust Problem

There's a moment most people in crypto-AI research eventually hit. You're testing some cutting-edge AI model, asking it questions that matter (finance, healthcare, legal reasoning), and it answers back confidently, fluently, convincingly. And then you fact-check it. And it's wrong. Not slightly wrong. Structurally, confidently wrong.

That's the hallucination problem. And the deeper I looked into it, the more I realized it wasn't just a technical bug. It was a systemic trust failure baked into how AI works. One model. One authority. No verification layer.

That's what pulled me toward Mira Network. Not because it's another AI-meets-blockchain project chasing a narrative, but because it's one of the few that's actually trying to solve the right problem at the right layer, and doing it with a security model I hadn't seen before.

What Mira Actually Does

Mira is a decentralized blockchain protocol designed to act as a trust layer for artificial intelligence by verifying the accuracy of AI-generated outputs. In plain terms: AI says something, and Mira checks whether it's true, not through one model but through a distributed network of independent verifier nodes, each running different AI models.

The protocol works by deconstructing an AI model's complex response into individual, checkable claims. These claims are distributed to the verifier nodes, which evaluate them independently, and the network uses a consensus mechanism to determine whether each claim is accurate.

Think of it like a courtroom with multiple expert witnesses, each one independently reaching a verdict. The verdict only stands if they agree.
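To make that flow concrete, here's a minimal sketch of claim-level consensus. It is not Mira's actual implementation: the `verify_claim` and `verify_response` helpers, the two-thirds threshold, and the toy verifier functions are all my own assumptions for illustration, and the step that splits a response into claims is taken as already done.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ClaimResult:
    claim: str
    verdict: str       # "true", "false", or "no_consensus"
    agreement: float   # share of verifiers backing the leading verdict


def verify_claim(claim, verifiers, threshold=2 / 3):
    """Collect one independent boolean verdict per verifier and accept
    the leading verdict only if enough verifiers agree on it."""
    votes = [bool(verifier(claim)) for verifier in verifiers]
    leading, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    if agreement >= threshold:
        verdict = "true" if leading else "false"
    else:
        verdict = "no_consensus"
    return ClaimResult(claim, verdict, agreement)


def verify_response(claims, verifiers, threshold=2 / 3):
    """Verify each extracted claim separately."""
    return [verify_claim(c, verifiers, threshold) for c in claims]


# Toy stand-ins for nodes running different models (hypothetical).
known_facts = {"Paris is the capital of France"}
strict = lambda claim: claim in known_facts
lenient = lambda claim: True          # a sloppy node that accepts anything
also_strict = lambda claim: claim in known_facts

results = verify_response(
    ["Paris is the capital of France", "The Moon is made of cheese"],
    [strict, lenient, also_strict],
)
for r in results:
    print(r.claim, "->", r.verdict, round(r.agreement, 2))
```

In this toy run the sloppy node gets outvoted on the false claim. With only two verifiers that disagree, the same function returns `no_consensus` instead of picking a side.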

The Real Innovation: $MIRA (A Security Model Built for AI)

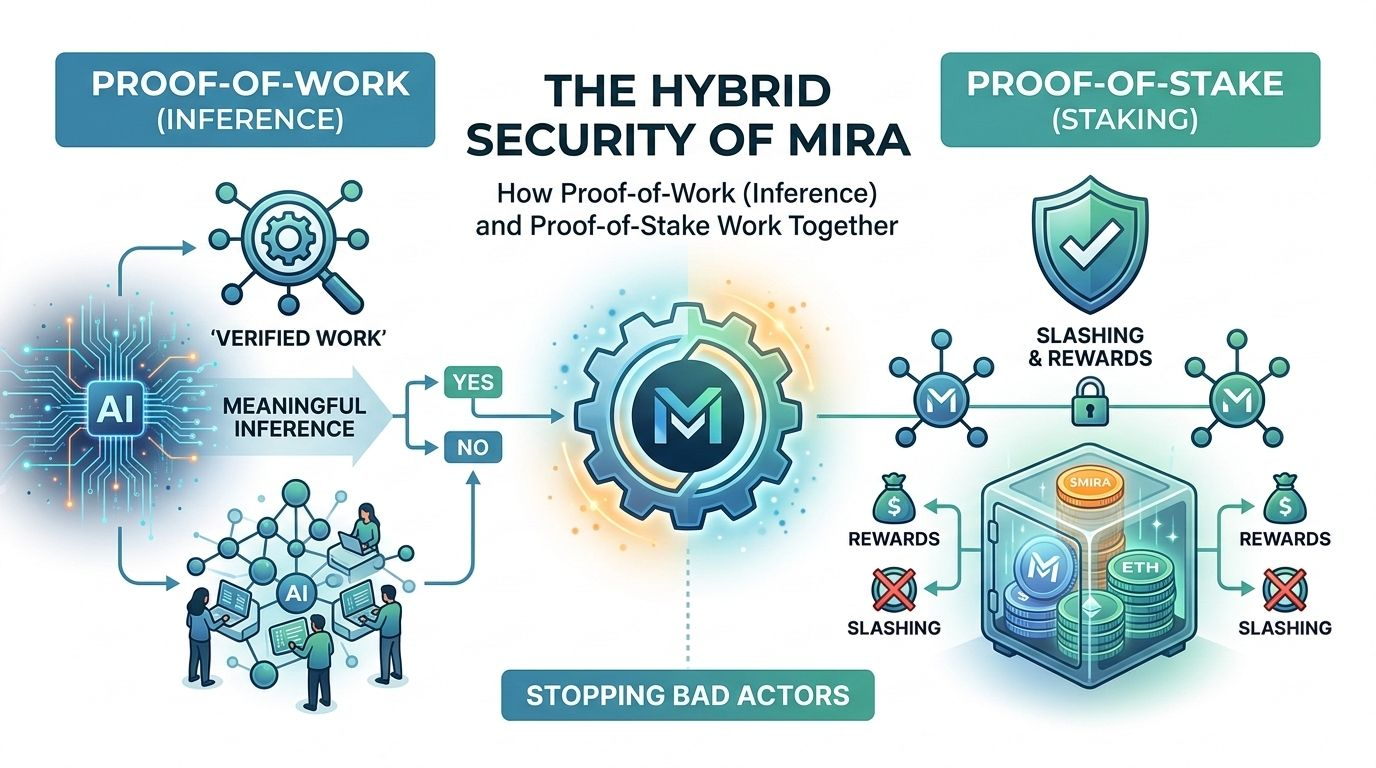

Most people hear Proof of Work and think of Bitcoin mining: burning energy to solve meaningless math puzzles. Mira's version of PoW is something fundamentally different, and this is where it gets genuinely interesting.

Unlike traditional PoW, which solves arbitrary puzzles, the Mira network requires meaningful inference computations backed by staked value. In other words, the work a node performs isn't hashing random numbers. It's actually running AI inference: processing a real claim and returning a binary verdict, true or false, yes or no.

Mira transforms verification into standardized multiple-choice questions that require actual inference rather than computational brute force. The agreement process operates through binarization: breaking complex AI-generated content into discrete, independently verifiable claims.

But here's the problem that immediately jumped out to me when I first read the whitepaper. If the output is just yes or no, what stops a lazy node from guessing?

The Guessing Problem, and Why PoS Is the Answer

This is where Mira's hybrid model becomes genuinely clever.

For a single inference, the probability that the node gets it right by purely guessing is 50%. If the node has to make 2 independent binary guesses, the probability of getting both correct is 25%. 10 verifications correspond to a probability of 0.0977%. The math works against bad actors at scale. But Mira doesn't rely on probability alone.
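The arithmetic is easy to check yourself. Here's a small sketch; the `guess_success_probability` name and the `options` parameter are mine, added so the same formula also covers multiple-choice questions with more than two answers.

```python
def guess_success_probability(n_verifications, options=2):
    """Chance of getting every answer right by uniform random guessing,
    assuming the verifications are independent."""
    return (1 / options) ** n_verifications


for n in (1, 2, 10):
    print(n, f"{guess_success_probability(n):.4%}")
```

For binary questions this prints 50%, 25%, and roughly 0.0977% for 1, 2, and 10 verifications, matching the figures above.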

To participate in verification, nodes have to stake value, which is subject to slashing if they guess outputs at random rather than running inference. With these penalties in place, node operators are compelled to act in good faith and perform verifications honestly.

Node operators earn rewards in $MIRA tokens whenever they carry out a correct inference, while their staked value is slashed if they try to act maliciously.

So you have two enforcement layers working simultaneously. PoW forces real computational work. PoS makes dishonesty economically irrational. One layer alone is breakable. Together, they close the loop.
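Here's a back-of-the-envelope way to see why the combination matters. Every number below is made up for illustration (reward size, penalty size, inference cost, and the accuracy of an honest node), and the model assumes every wrong answer is penalized, which is a simplification of however slashing is actually triggered.

```python
def expected_payoff(accuracy, reward, penalty, cost):
    """Expected payoff per verification: earn `reward` when correct,
    lose `penalty` when wrong, and always pay `cost` for the compute."""
    return accuracy * reward - (1 - accuracy) * penalty - cost


# Hypothetical parameters, in arbitrary token units.
honest = expected_payoff(accuracy=0.95, reward=1.0, penalty=5.0, cost=0.2)
guesser = expected_payoff(accuracy=0.5, reward=1.0, penalty=5.0, cost=0.0)
no_slashing = expected_payoff(accuracy=0.5, reward=1.0, penalty=0.0, cost=0.0)

print(f"honest node:           {honest:.2f}")
print(f"guessing node:         {guesser:.2f}")
print(f"guessing, no slashing: {no_slashing:.2f}")
```

Under these assumptions the guesser saves on compute but loses money on average, while the same guesser with no stake at risk would still turn a profit. That's the gap the staking layer is meant to close.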

Tokenomics: Built Around Participation

The $MIRA token has a fixed total supply of 1 billion tokens, distributed according to a philosophy that the network belongs to those who use it, build on it, and secure it.

The headline allocations reflect long-term thinking: 16% reserved for future node rewards to sustain validator incentives over time, 26% allocated to an ecosystem reserve for developer grants and partnerships, and 20% vesting for core contributors over 36 months with a 12-month cliff.
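In token terms, those percentages work out as follows. The vesting function is only one possible reading of "36 months with a 12-month cliff" (nothing unlocks during the first 12 months, then a linear unlock over the following 36). The exact schedule isn't spelled out here, so treat `vested_tokens` and its parameters as an assumption rather than the official schedule.

```python
TOTAL_SUPPLY = 1_000_000_000

# Only the allocations named above; the remaining supply is not covered here.
allocations_pct = {
    "future node rewards": 16,
    "ecosystem reserve": 26,
    "core contributors": 20,
}
allocations = {name: TOTAL_SUPPLY * pct // 100 for name, pct in allocations_pct.items()}


def vested_tokens(total, month, cliff_months=12, vesting_months=36):
    """Assumed schedule: zero until the cliff ends, then linear unlock
    over `vesting_months`, capped at the full allocation."""
    if month <= cliff_months:
        return 0
    elapsed = min(month - cliff_months, vesting_months)
    return total * elapsed // vesting_months


for name, amount in allocations.items():
    print(f"{name}: {amount:,} MIRA")
print("core contributors vested at month 30:",
      f"{vested_tokens(allocations['core contributors'], 30):,}")
```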

MIRA is used for API access, staking, and governance across the network. [Binance Academy] The token isn't just a speculative asset; it's the operational fuel for every verification that happens on the network. That's a key distinction. Usage drives demand, not hype.

Market Position: The Binance Angle

On 25 September, Binance showed support for the Mira Network (MIRA) project, adding it to its HODLer Airdrops program as the 45th project. That's not a trivial signal. Binance's HODLer Airdrops program has historically spotlighted infrastructure-level projects with real utility.

Mira Flows allows developers to build applications on Mira's verification network through a marketplace of prebuilt AI workflows, including common tasks such as summarization, data extraction, and multi-stage pipelines. The ecosystem is already live with products like Klok, a multi-model AI assistant, and Learnrite, an AI-powered educational content platform built on verified outputs.

Honest Risks Worth Discussing

No analysis is complete without looking at the downside. A few things give me pause.

First, the binary yes/no output simplifies verification but also limits nuance. Many real-world AI outputs exist in grey zones: partially correct, contextually dependent. Whether Mira's binarization model handles genuine complexity at scale remains an open question.

Second, node adoption is critical. A verification network with too few independent nodes isn't truly decentralized. The economic incentives need to attract enough diverse operators to prevent subtle collusion.

And like all AI-adjacent crypto projects, Mira faces execution risk. The vision is compelling. The tech architecture is sound on paper. But moving from testnet infrastructure to enterprise-grade production at scale is a different challenge entirely.

The Non-Obvious Insight Most People Are Missing

Here's what I think most crypto observers are skipping past: Mira isn't competing with AI companies. It's positioning itself as the verification layer beneath all of them.

Beyond verification, the vision is a synthetic foundation model that integrates verification directly into the generation process, eliminating the distinction between generation and verification and delivering error-free outputs.

That's a fundamental paradigm shift. If that vision lands, Mira doesn't become a tool that sits alongside AI models; it becomes infrastructure that AI models run on top of. The comparison isn't to other AI crypto tokens. It's closer to what TCP/IP did for the internet: invisible, essential, everywhere.

The network's cryptoeconomic incentives are designed so that network security strengthens over time, making it economically unfeasible for malicious actors to game the system: a self-reinforcing feedback loop.

Security that gets stronger the more it's used. That's not common in this space.

Final Thought: What Do You Think?

Mira is tackling something most blockchain projects avoid: not the token price, not the NFT layer, not the DeFi mechanism, but the raw, foundational question of whether AI can be trusted at all.

The hybrid PoW + PoS model is elegant precisely because it treats the problem like what it is: a game theory problem, not just a technical one. You don't stop bad actors by making fraud harder. You stop them by making fraud pointless.

Does a decentralized verification layer actually change how you'd trust AI outputs in high-stakes decisions? And do you think the economic model is strong enough to attract honest node operators at the scale needed to make this real?

Drop your thoughts below. I'd genuinely like to know where you stand on this one.

@Mira - Trust Layer of AI #Mira