When I first started looking closely at Mira Network, I expected the usual blockchain conversation: scalability, throughput, maybe another promise of faster infrastructure. What stood out instead was a much simpler question: if AI systems are going to make decisions inside digital economies, how do we know those decisions are reliable?

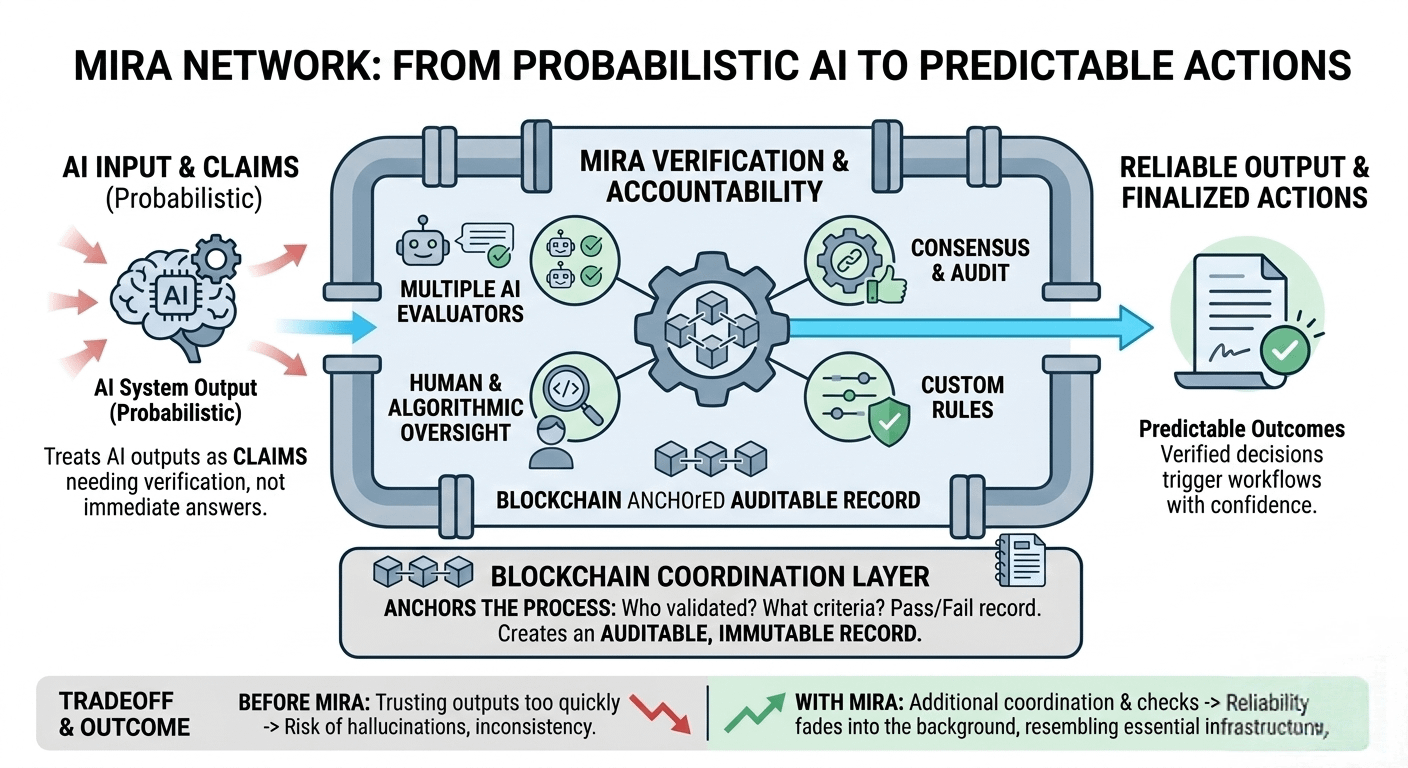

The idea that really clicked for me was that Mira doesn’t treat AI outputs as answers. It treats them as claims that still need verification.

That distinction matters more than I initially realized. Most modern AI models are probabilistic by design. They generate responses based on likelihood, not certainty. When I use AI casually, summarizing an article or brainstorming ideas, that uncertainty doesn’t bother me much. But once AI starts powering autonomous agents, financial tools, research assistants, or in-game systems, “probably correct” starts to feel risky.

Mira’s architecture is built around that tension. Instead of allowing a single AI output to immediately trigger actions, the network introduces a structured verification process. Different evaluators or agents can check whether the result meets certain reliability conditions before it becomes accepted within a workflow. In other words, the system slows down just enough to ask: does this actually hold up?
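To make that gating idea concrete for myself, I sketched it in a few lines of Python. This is only an illustration of the general pattern, not Mira's actual API: `verify_claim`, `Verdict`, the `min_approvals` threshold, and the toy checks are all names and rules I made up for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: an evaluator takes a claim and returns True if it passes.
Evaluator = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    approvals: int
    total: int
    accepted: bool


def verify_claim(claim: str, evaluators: List[Evaluator], min_approvals: int) -> Verdict:
    """Treat an AI output as a claim and accept it only if enough evaluators approve."""
    if not 1 <= min_approvals <= len(evaluators):
        raise ValueError("min_approvals must be between 1 and the number of evaluators")
    approvals = sum(1 for check in evaluators if check(claim))
    return Verdict(claim, approvals, len(evaluators), approvals >= min_approvals)


# Toy evaluators, assumed for illustration; real ones might be separate models.
checks = [
    lambda c: bool(c.strip()),                # claim is not empty
    lambda c: len(c) < 280,                   # claim is short enough to check
    lambda c: "guaranteed" not in c.lower(),  # claim avoids absolute promises
]

verdict = verify_claim("ETH closed higher today", checks, min_approvals=2)
print(verdict.accepted)
```

The point of the sketch is the ordering: nothing downstream acts on the output until the verdict says it was accepted.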

What I find interesting is how the blockchain fits into this design. In Mira’s model, the chain acts less like a traditional transaction ledger and more like a coordination layer for verification itself. The process of checking an AI result (who validated it, what criteria were used, and whether it passed) can be anchored on-chain. That creates an auditable record of how conclusions were reached.
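Here is roughly how I picture such a record, as a small Python sketch. The field names and the `make_audit_record` helper are my own assumptions rather than Mira's on-chain format, and the code only computes a content hash locally; actually anchoring that hash on a chain is out of scope here.

```python
import hashlib
import json
from typing import Dict, List, Tuple


def make_audit_record(
    claim: str, validator_ids: List[str], criteria: List[str], passed: bool
) -> Tuple[str, Dict]:
    """Build a verification record and a deterministic ID derived from its contents."""
    record = {
        # Store a hash of the claim rather than the claim itself (an assumed design choice).
        "claim_hash": hashlib.sha256(claim.encode("utf-8")).hexdigest(),
        "validators": sorted(validator_ids),  # who validated it
        "criteria": criteria,                 # what criteria were used
        "passed": passed,                     # whether it passed
    }
    # Canonical JSON so the same record always produces the same ID.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record_id = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record_id, record


record_id, record = make_audit_record(
    "ETH closed higher today", ["eval-b", "eval-a"], ["source-check"], True
)
print(record_id)
```

Because the ID is derived from the record's contents, anyone holding the record can recompute it and confirm that it matches whatever was anchored.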

Stepping back, that feels like a very human idea. In research, we use peer review. In law, we rely on opposing arguments and evidence. In finance, we depend on multiple parties verifying transactions. Mira seems to apply a similar philosophy to AI systems: intelligence alone isn’t enough; there must also be accountability around it.

Another aspect that caught my attention is flexibility. Different applications require different levels of certainty. A casual chatbot might tolerate occasional mistakes, but an autonomous trading agent or data analysis system cannot. Mira’s architecture allows different verification rules depending on the environment. Some systems might rely on multiple AI evaluators confirming a result. Others might combine algorithmic checks with human oversight.
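One way to picture per-application rules is as plain configuration. The sketch below is hypothetical: the policy names, thresholds, and the `is_accepted` helper are assumptions I chose to illustrate the idea, not settings taken from Mira.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationPolicy:
    min_ai_approvals: int        # how many AI evaluators must confirm a result
    require_human: bool = False  # whether a human must also sign off


# Assumed example policies: stricter rules where mistakes cost more.
POLICIES = {
    "casual_chatbot": VerificationPolicy(min_ai_approvals=1),
    "trading_agent": VerificationPolicy(min_ai_approvals=3, require_human=True),
}


def is_accepted(policy: VerificationPolicy, ai_approvals: int, human_approved: bool = False) -> bool:
    """Apply a policy to the approvals collected for one result."""
    if ai_approvals < policy.min_ai_approvals:
        return False
    return human_approved or not policy.require_human


print(is_accepted(POLICIES["casual_chatbot"], ai_approvals=1))
print(is_accepted(POLICIES["trading_agent"], ai_approvals=3))
```

The same result with the same approvals can pass in one environment and be held back in another, which is exactly the flexibility described above.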

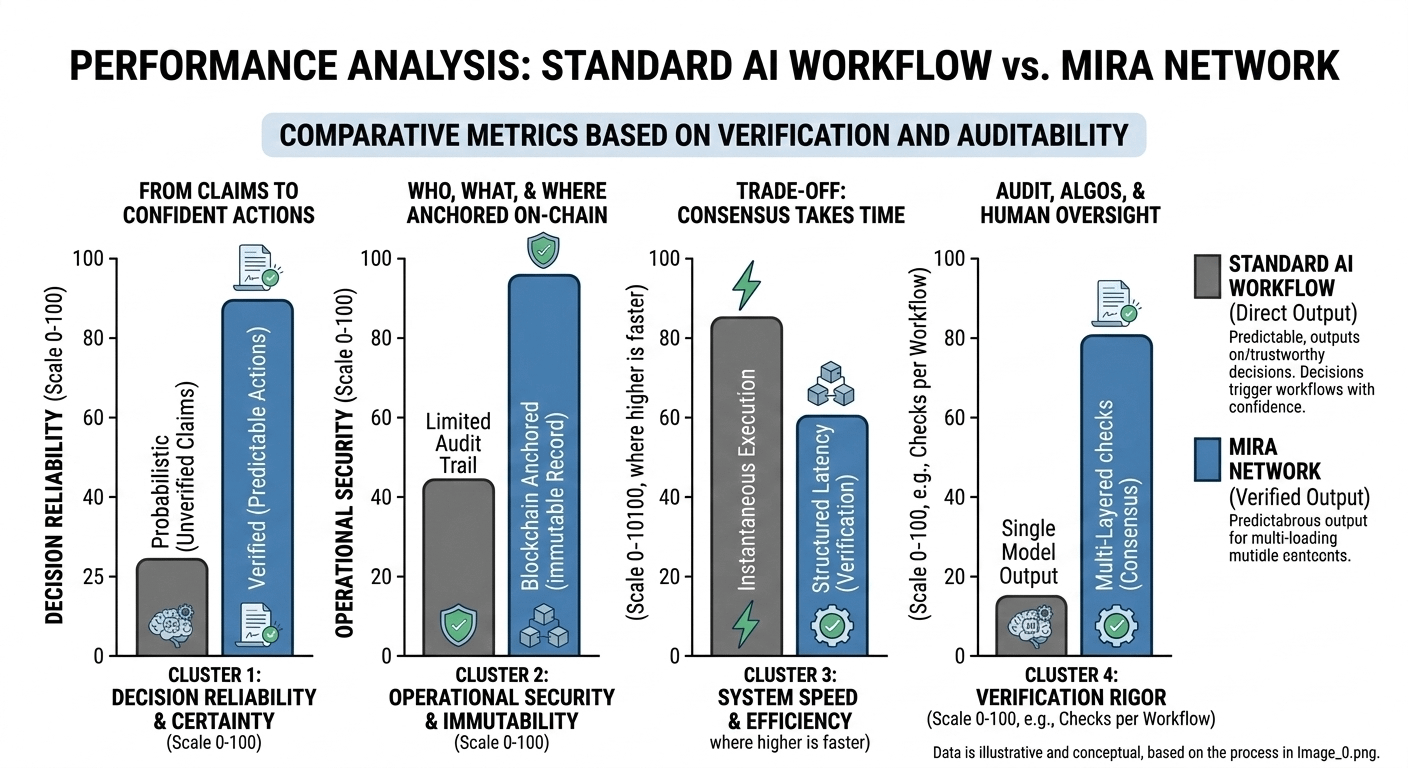

Of course, building verification into AI workflows introduces tradeoffs. Additional checks can slow processes down. They also require extra coordination between systems. Developers will constantly face the decision between speed and certainty.

But the more I thought about it, the more the tradeoff made sense. Many of the problems people experience with AI today come from trusting outputs too quickly. Hallucinated information, inconsistent reasoning, and fragile automation all stem from the same assumption: that the first answer is good enough.

If Mira succeeds, the goal isn’t to make AI louder or more visible. It’s the opposite. Most users won’t think about verification layers, consensus checks, or evaluation mechanisms. They’ll just notice that AI-powered systems behave more predictably when something important is at stake.

The blockchain won’t feel like a feature. It will quietly function as the infrastructure that keeps intelligent systems accountable.

And when reliability becomes invisible like that, when it simply fades into the background of everyday tools, the technology starts to resemble something we rarely question, like electricity or the internet itself.